Sticky Postings

All 242 fabric | rblg updated tags | #fabric|ch #wandering #reading

By fabric | ch

-----

As we continue to lack a decent search engine on this blog and as we don't use a "tag cloud" ... This post could help navigate through the updated content on | rblg (as of 09.2023), via all its tags!

FIND BELOW ALL THE TAGS THAT CAN BE USED TO NAVIGATE IN THE CONTENTS OF | RBLG BLOG:

(to be seen just below if you're navigating on the blog's html pages or here for rss readers)

--

Note that we had to hit the "pause" button on our reblogging activities a while ago (mainly because we ran out of time, but also because we received complaints from a major image stock company about some images that were displayed on | rblg, an activity that we felt was still "fair use" - we've never made any money or advertised on this site).

Nevertheless, we continue to publish from time to time information on the activities of fabric | ch, or content directly related to its work (documentation).

Wednesday, March 12. 2025

Summoning the Ghosts of Modernity at MAMM (Medellin) | #exhibition #digital #algorithmic #matter #nonmatter

Note: fabric | ch is part of the exhibition Summoning the Ghosts of Modernity at the Museo de Arte Moderno de Medellin (MAMM), in Colombia.

The show constitutes a continuation of Beyond Matter that took place at the ZKM in 2022/23, and is curated by Lívia Nolasco-Rózsás and Esteban Gutiérrez Jiménez.

The exhibition will be open between the 13th of March and 15th of June 2025.

-----

By fabric | ch

Atomized (re-)Staging (2022), by fabric | ch. Exhibited during Summoning the Ghosts of Modernity at the Museo de Arte Moderno de Medellin (MAMM). March 13 to June 15 2025.

More pictures of the exhibition on Pixelfed.

Friday, February 09. 2024

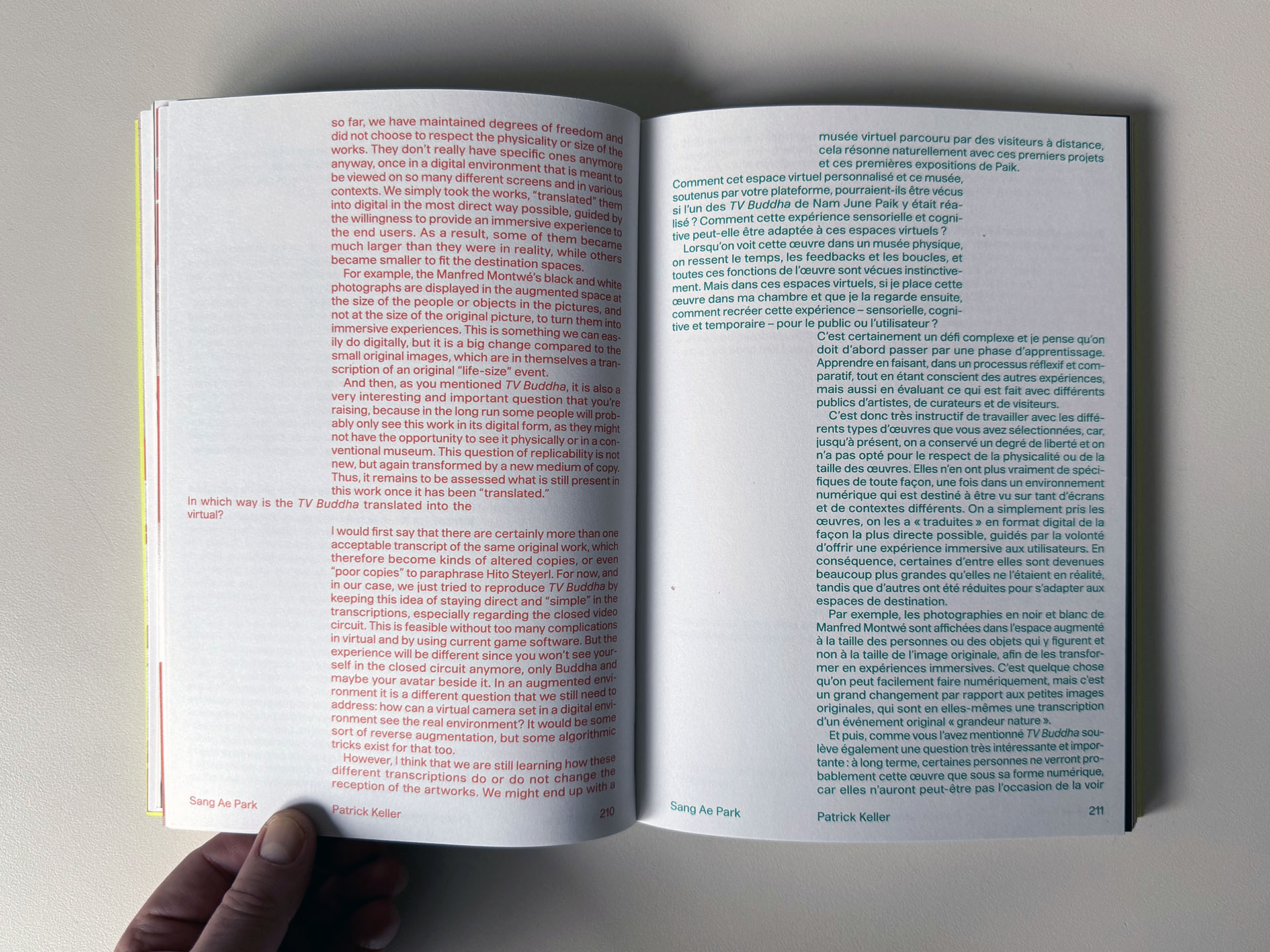

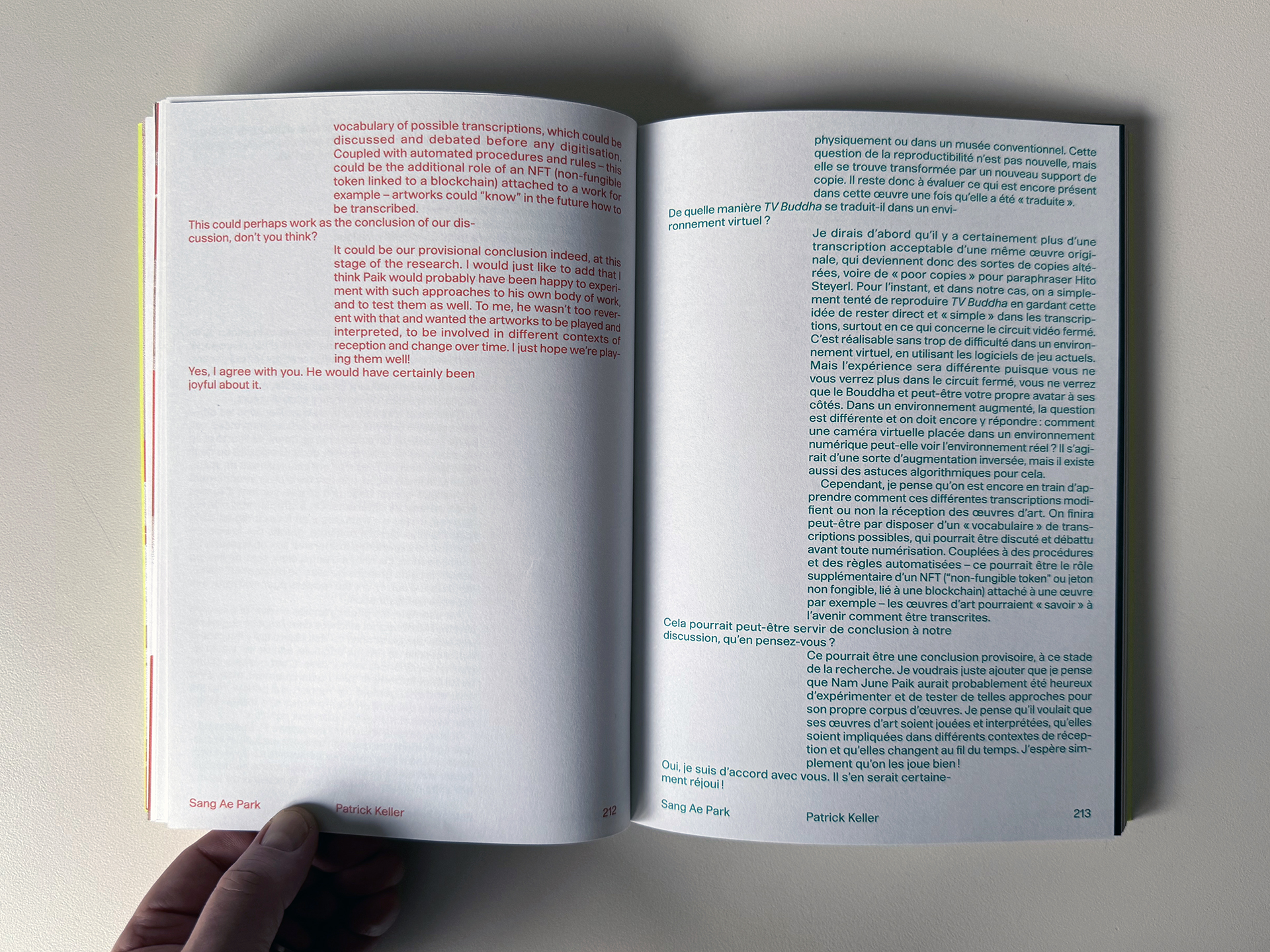

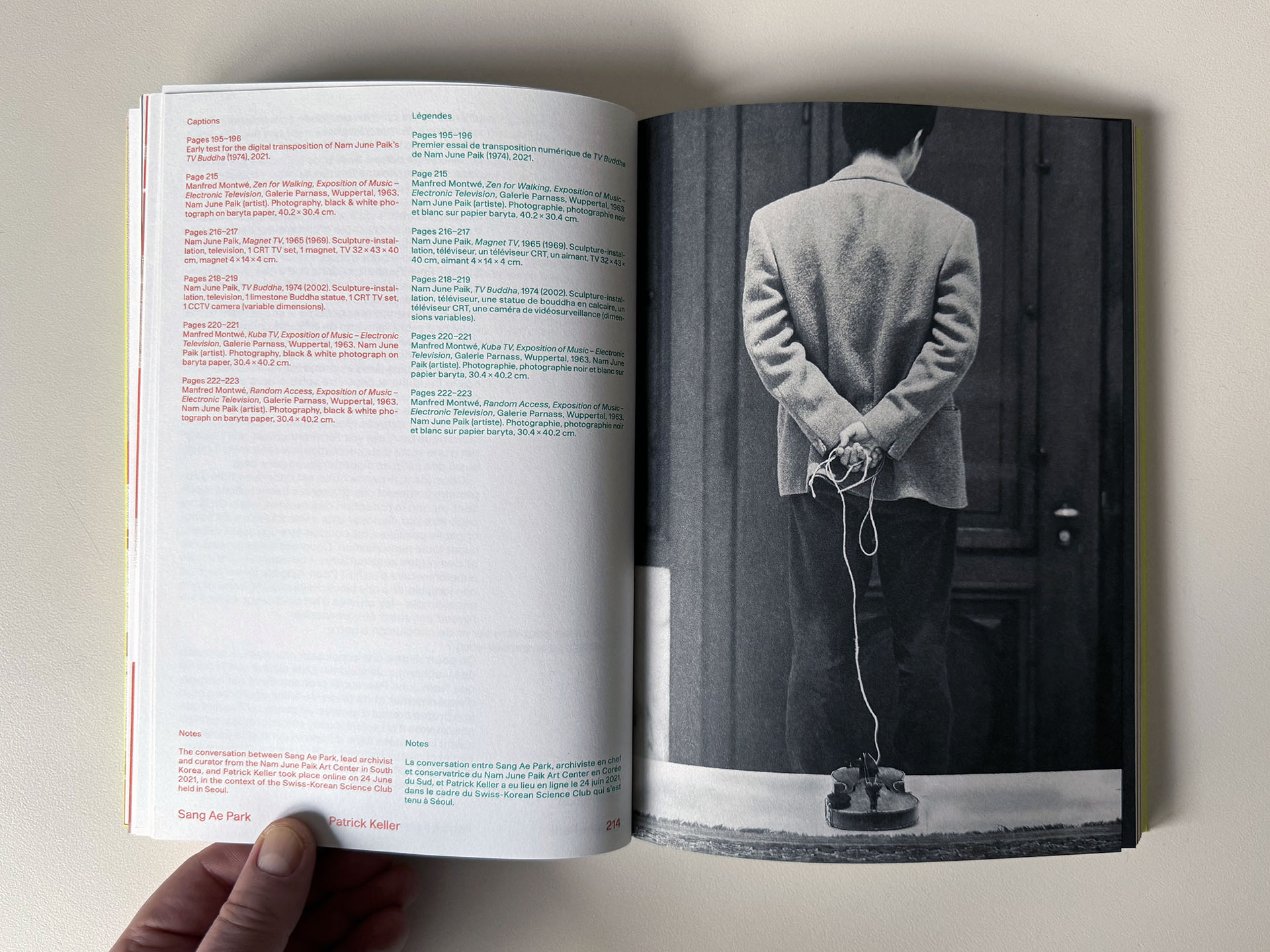

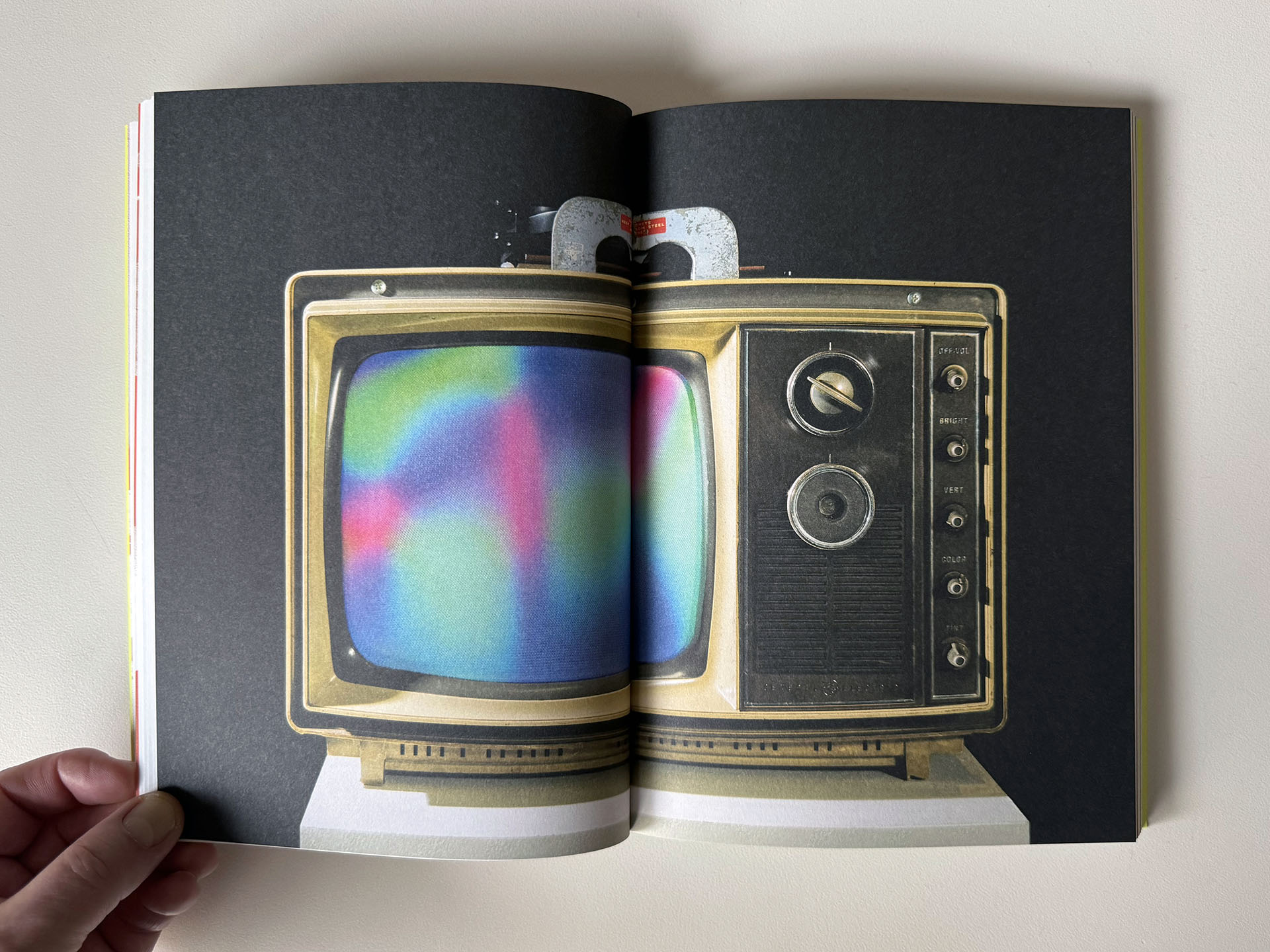

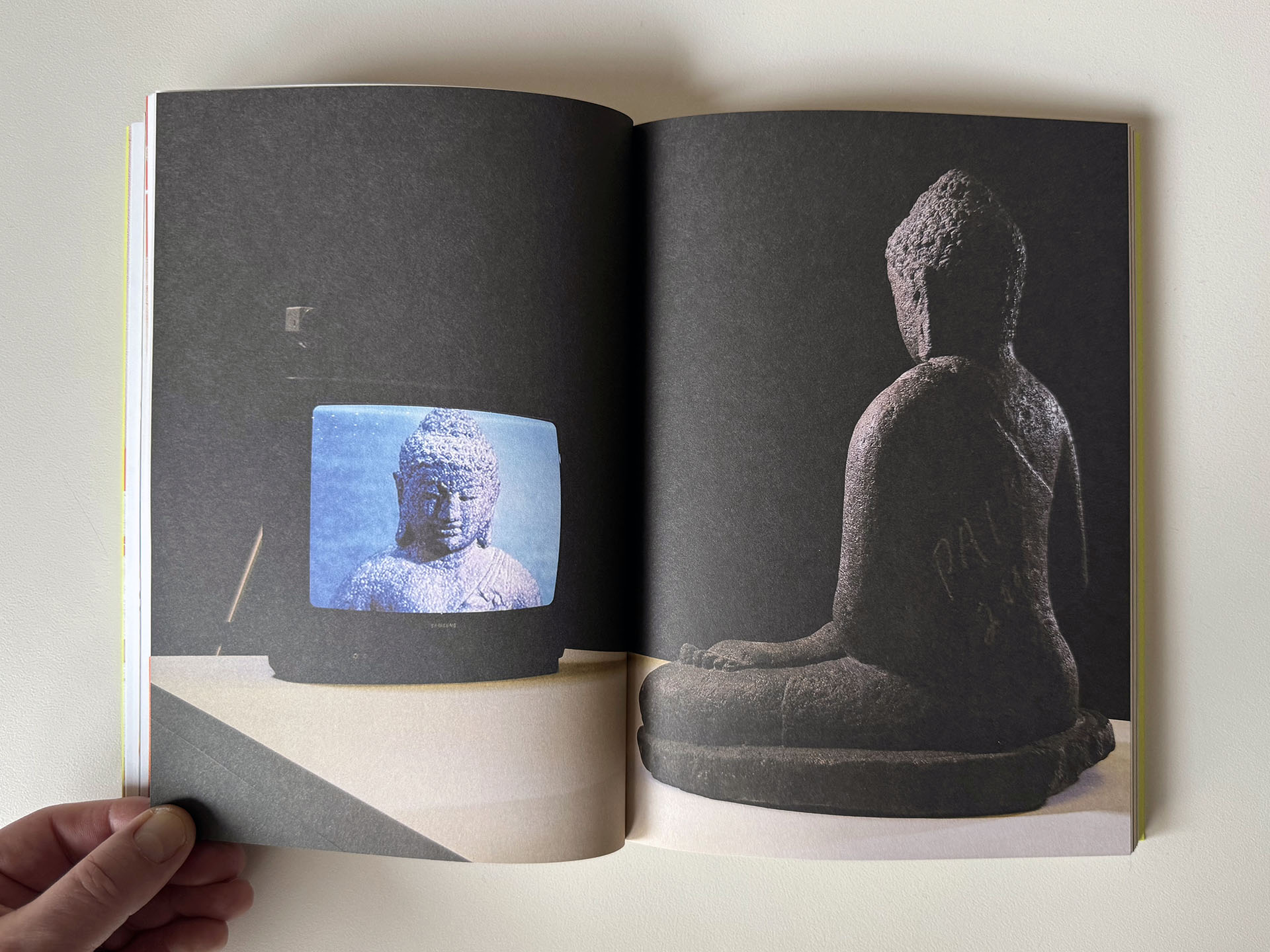

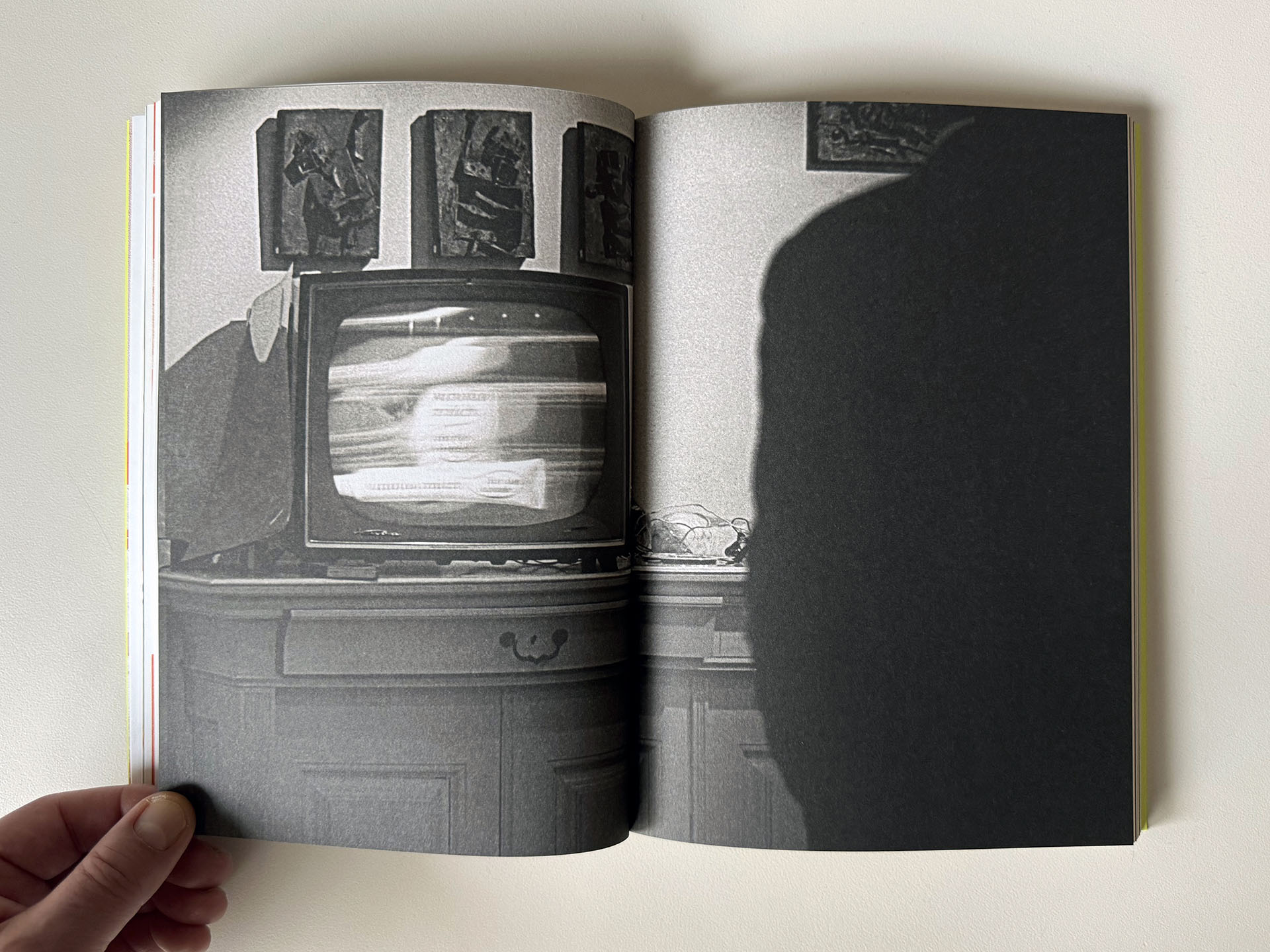

Mnemosyne History and Research in Arts and Design / (Re)Viewing Paik | #paik #digitization #digitalexhibition

Note: as part of a year-long preliminary research into digital exhibitions, we teamed up with the Nam June Paik Art Center (South Korea) - and their incredible collection and archive of Nam June Paik's works -, as well as ECAL/University of Art and Design Lausanne, to deliver initial thoughts and proofs of concept.

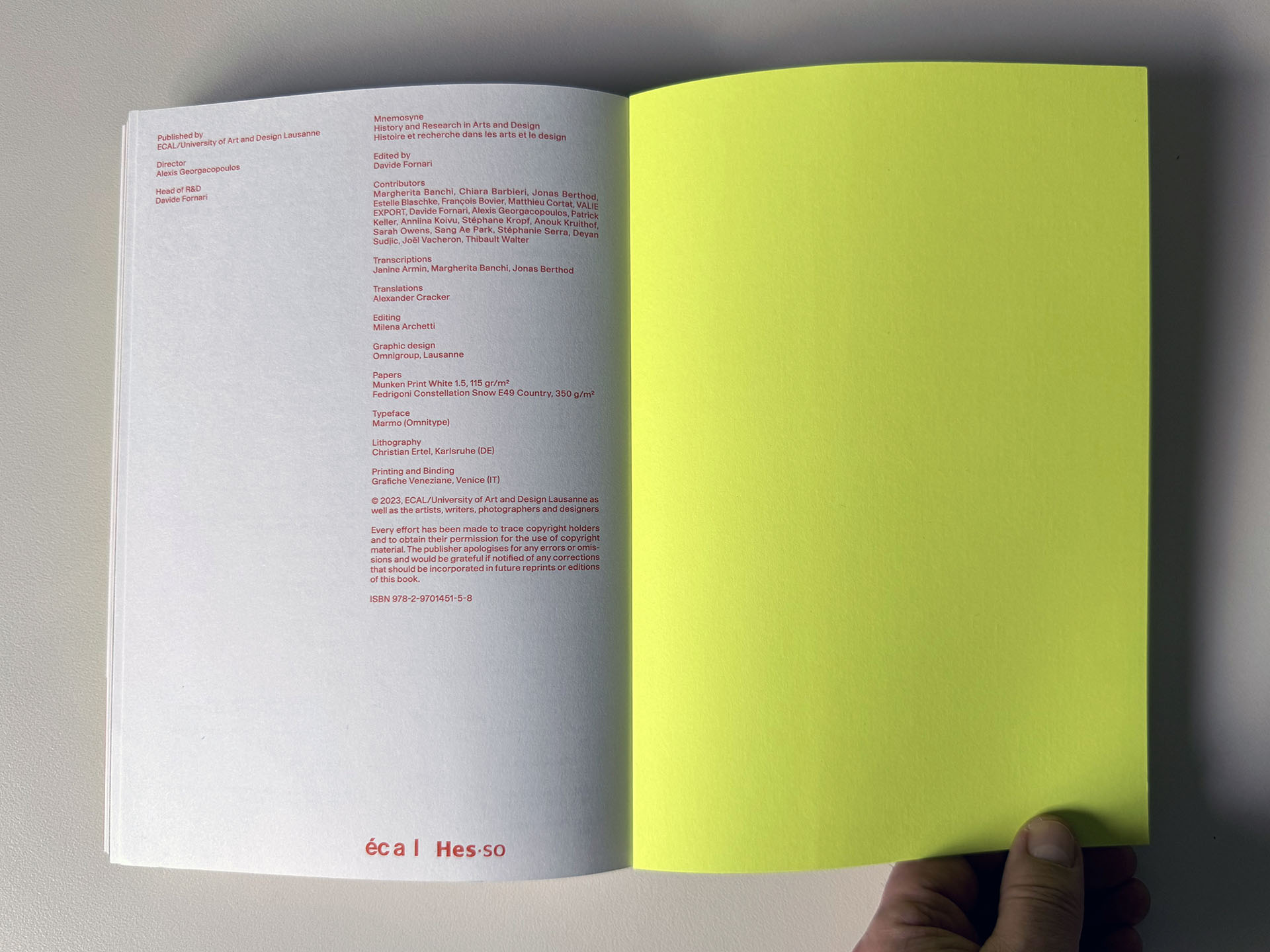

Late last year saw the publication of Mnemosyne, a book on "History and Research in Arts and Design" (ed. Davide Fornari, published by ECAL/University of Art and Design Lausanne (HES-SO)).

In this context, I had the chance to be in conversation with NJPAC curator Sang Ae Park about this joint research. Among other topics, we discussed the unrealized piece – at the time – "Symphony for 20 rooms" (1961) by Nam June Paik as a potential inspiration for "remote" exhibitions, at home.

This discussion gave rise to the paper "A Symphony for Nam June Paik, Digitally" (below), while this preliminary research is likely to continue in the form of a longer-term research project.

-----

By Patrick Keller

Monday, October 30. 2023

fabric | ch receives the award "Architecture & Landscape" from the Fondation vaudoise pour la culture | #architecture #experimental #digital #award

Note: this Saturday (04.11) fabric | ch will receive the "Architecture & Landscape" prize from the Art Council of Canton de Vaud (CH).

It is a rare but much-appreciated recognition of our work by the region where we've been working all those years (and, on the same occasion, also one to show our faces)! We're still waiting for an invitation to exhibit fabric | ch's work somewhere in our hometown though 😉

So rejoice, and let's celebrate together during the following drinks reception!

Via @fvpc

-----

Thursday, August 03. 2023

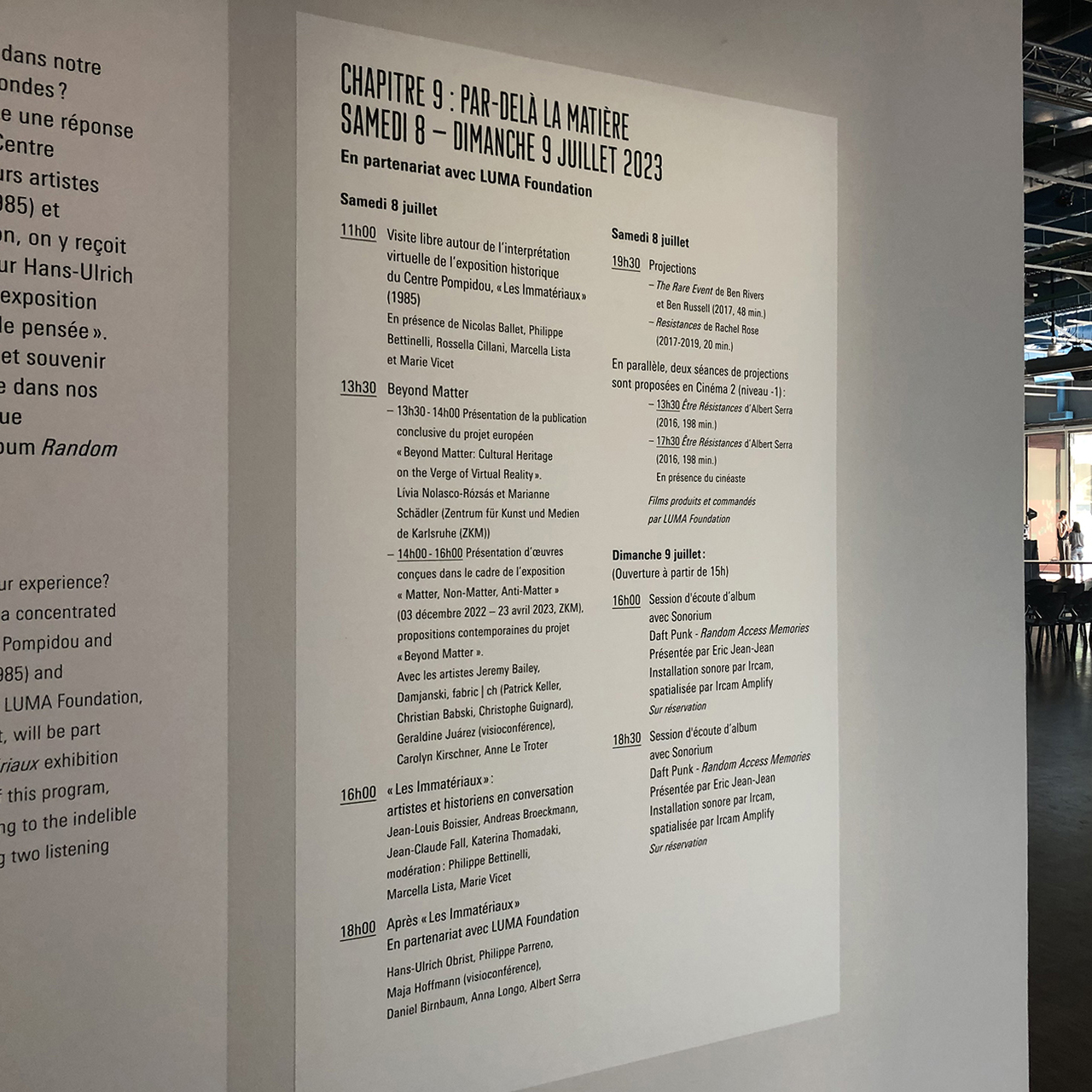

Moviment exhibition at the Centre Pompidou fabric | ch during Chapter "Par-delà la matière" | #matter #non-matter #automated #exhibition #fabricch

Note: Early last July, fabric | ch took part in the Moviment exhibition-festival at Centre Pompidou, in Paris.

Organized in 10 chapters (Red thread; The bedroom, the house, the city; In the spotlight; Aloud; Here and elsewhere; Other-worldly; Of gesture and time; To the max; Beyond matter; The grand finale!), this was the occasion – following the words of the curators – to "reactivate the essence of the Centre Pompidou ideals: to assemble all the different ways of encountering creativity, understanding it, participating in it; to be a monument in motion, a 'moviment.'"

It was truly a success, with outstanding guests and a very interesting hybrid museum format, somewhere in-between exhibition and performance, talks and workshops.

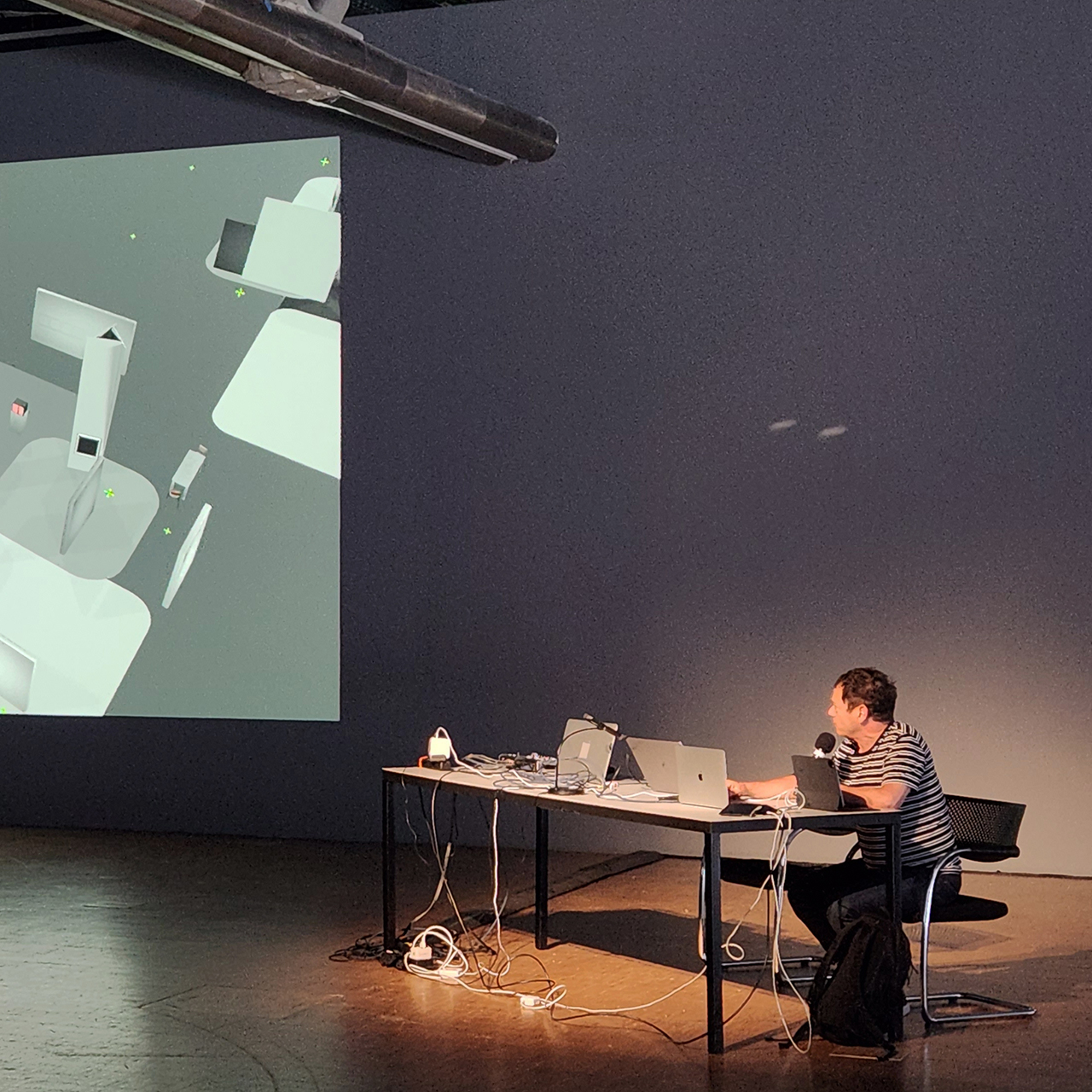

In this context and during "Chapitre 9: Par-delà la matière" curated by Marcella Lista & Philippe Bettinelli, fabric | ch presented recent works about digital exhibitions.

-----

By fabric | ch

A few pictures from Moviment / Chapter 9:

(with fabric | ch, M. Lista & Les Immatériaux, M. Klonaris & K. Thomadaki, H.U. Obrist – "Résistances" project with LUMA Foundation and P. Parreno, J.-L. Boissier - Electra / Pictures by C. Babski & H. Veronese)

Via Centre Pompidou

-----

Beyond Matter / Par-delà la matière

Moviment, chapter 9

Sat 8 – Sun 9 July 2023

Combining technology and memory, the penultimate chapter of Moviment looks back at two major cultural events in the world of art and music, and the ways in which they can be perpetuated, revived or experienced beyond their materiality and topicality.

In partnership with LUMA Foundation

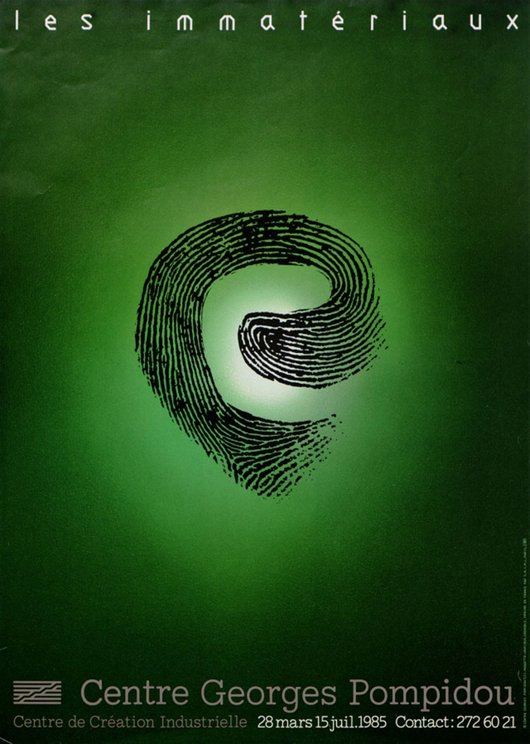

Poster for the exhibition "Les Immatériaux", 1985 – © Centre Pompidou. Graphic design: Grafibus.

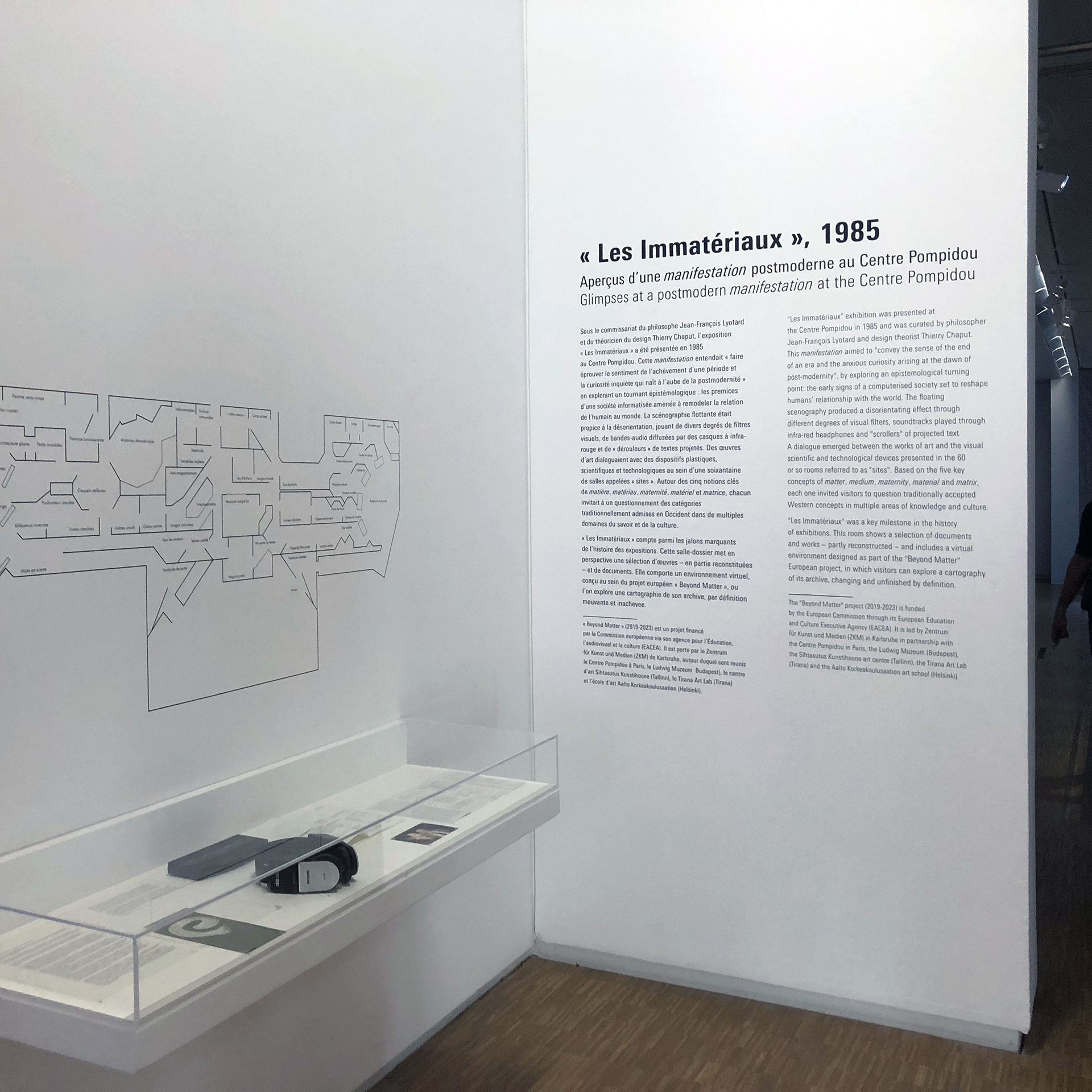

Looking back at "Les Immatériaux"

Visual arts, New media / Exhibition, meetings

Organized by curators Jean-François Lyotard and Thierry Chaput in 1985, "Les Immatériaux" was an essay with philosophical foundations that adopted the exhibition as a medium or interface. By bringing artworks, technologies and scientific documents into dialogue, the curators questioned the human condition in the age of new technologies, across different domains of physical and psychic life. The scenography, particularly innovative, favored disorientation, the stimulation of all the senses and interactivity. Visitors, whose route was not constrained but "induced" by suspended screens of varying opacity, were equipped with headphones playing a soundtrack that varied as they wandered through the exhibition's sixty or so sites and twenty-six audio zones.

This historic exhibition was recently the subject of a virtual reconstruction as part of the "Beyond Matter" research project.

Continuous exhibition, Saturday 8 July 2023

"Beyond Matter"

Funded by the European Commission, the research project "Beyond Matter: Cultural Heritage on the Verge of Virtual Reality" aims to develop technological and theoretical tools for the virtual reconstruction of historic exhibitions and the documentation of current ones. Research carried out within the project has focused in particular on two pioneering exhibitions: "Iconoclash" (4 May–1 September 2002, ZKM) and "Les Immatériaux" (28 March–15 July 1985, Centre Pompidou).

Lívia Nolasco-Rózsás and Marianne Schädler of the Zentrum für Kunst und Medien in Karlsruhe (ZKM) will present the concluding publication of the European project.

The artists Jeremy Bailey, Damjanski, fabric | ch (Patrick Keller, Christian Babski, Christophe Guignard), Geraldine Juárez (by videoconference), Carolyn Kirschner and Anne Le Troter will then present the works they conceived for the exhibition "Matter, Non-Matter, Anti-Matter" (3 December 2022–23 April 2023, ZKM), which presented part of the results of the "Beyond Matter" project.

Saturday 8 July 2023, 1:30pm–4pm

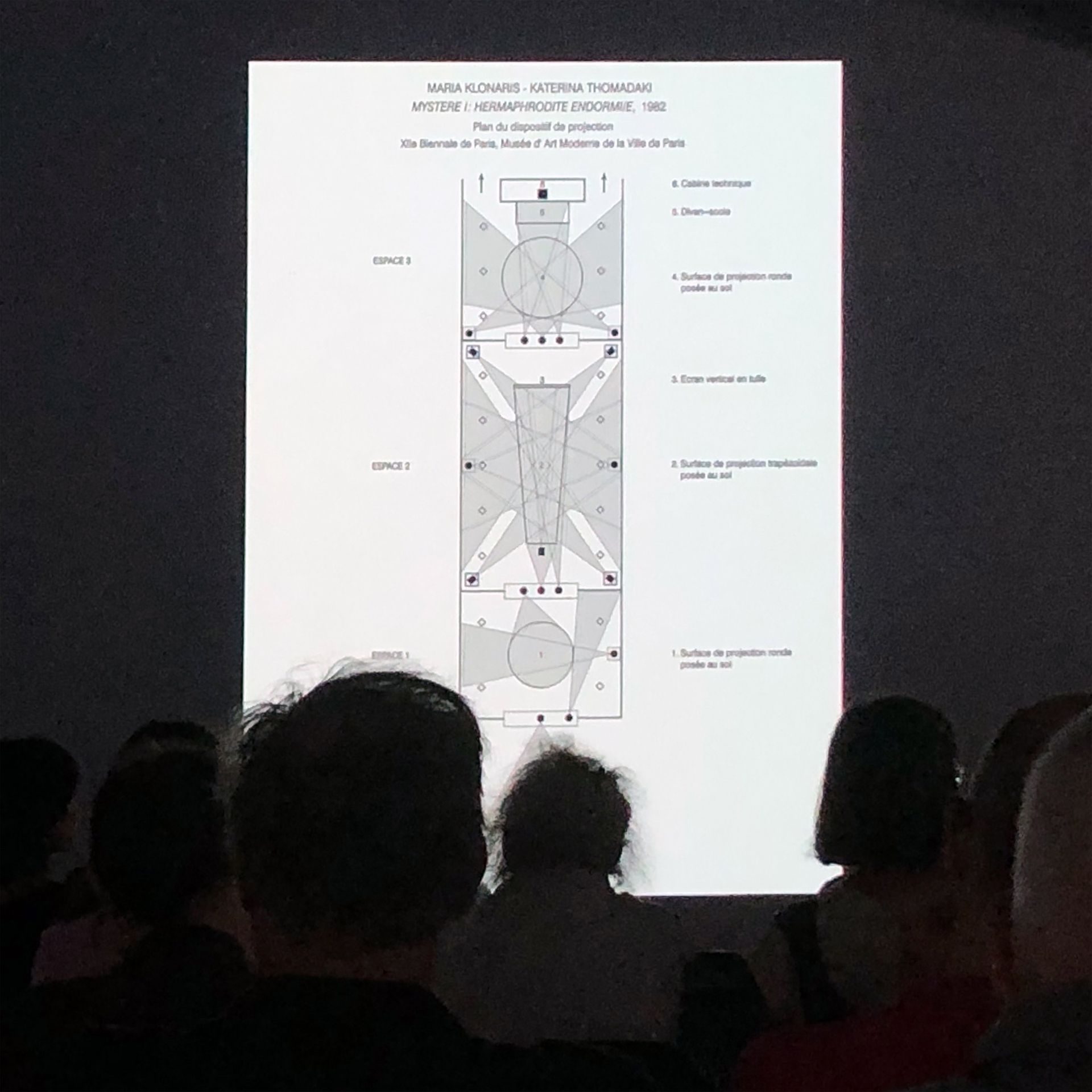

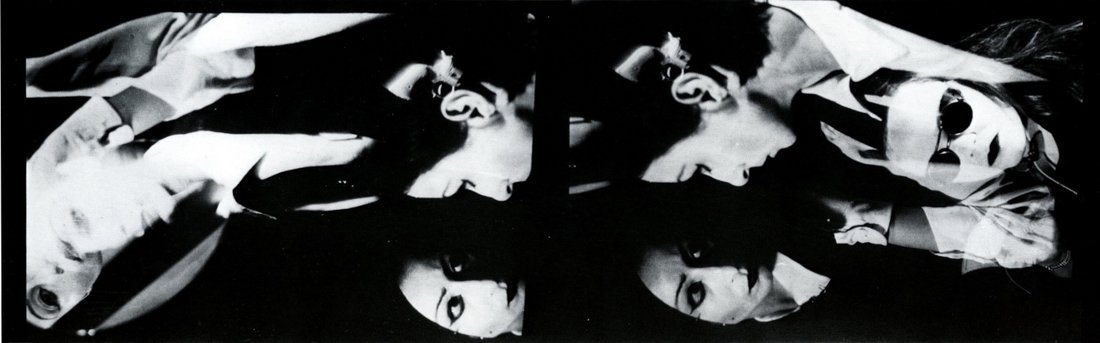

« Les Immatériaux » : Artistes et historiens en conversation

Le chercheur Andreas Broeckmann, ainsi que les artistes Katerina Thomadaki, Jean-Louis Boissier et Jean-Claude Fall reviennent sur « Les Immatériaux », exposition pionnière à laquelle ils ont participé.

Séance modérée par Marcella Lista, Philippe Bettinelli et Marie Vicet.

Samedi 8 juillet 2023, 16h-18h

Maria Klonaris, Katerina Thomadaki, "Orlando-Hermaphrodite II", 1985, photographies noir et blanc sur panneau.

Courtesy Katerina Thomadaki.

Après « Les Immatériaux »

Projet porté par le curateur Hans Ulrich Obrist et l’artiste Philippe Parreno avec le soutien de LUMA Foundation, « Résistances » fait écho à l’exposition « Les Immatériaux » imaginée par Jean-François Lyotard. Resté inachevé, ce projet se trouve tout à la fois continué et réimaginé à travers des rencontres et la production de « films de pensée ».

Samedi 8 juillet 2023, 18h–19h30

La conversation se déroulera en français et en anglais, suivie d’une projection des « films de pensée ».

Thought films:

Produced and commissioned by LUMA Foundation

Courtesy Maja Hoffmann / Luma Foundation Collection

- Être Résistances by Albert Serra (2016, 198 min.)

"Être Résistances is a film that resists being one, that is, it resists consciousness and cinematic language. It is a film about theory, bodies, noises, discourse, images, play and irony. It is a film inspired by its own invisible subject: Lyotard's non-existent exhibition, which was meant to resist communication. The film is ultimately that exhibition become reality, but as a mirage and as an illusion." Albert Serra.

Screenings, Saturday 8 July 2023, at 1:30pm and 5:30pm

Introduction by Albert Serra at 5:30pm

Cinéma 2, level –1

- The Rare Event by Ben Rivers and Ben Russell (2017, 48 min.), French subtitles

Shot in a Parisian recording studio with a creaking wooden floor, during an inaugural three-day "forum of ideas" devoted to the many possibilities of Resistance (the title of the exhibition by Jean-François Lyotard that was to follow his 1985 exhibition "Les Immatériaux"), occasional collaborators Ben Rivers and Ben Russell (with a contribution from American artist Peter Burr) produced what first appears to be a structuralist document of a performed philosophical discussion, which slowly transforms into The Rare Event, a portal that unites all dimensions into one.

- Resistances by Rachel Rose (2017-2019, 20 min.), original version in English

This short film documents the second meeting on "Résistances", held in February 2017 in New York, offering a glimpse of the conversations and reflections that unfolded during this "forum of ideas" organized by Maja Hoffmann. Speakers include Tyrone Hayes, Isabelle Thomas Fogiel, Reza Negarestani, Ariana Reines, Fred Moten and many other guests.

Screening, Saturday 8 July 2023, at 7:30pm

Still from the film "THE RARE EVENT" by Ben Rivers and Ben Russell.

Courtesy Maja Hoffmann / Luma Foundation Collection.

In connection with the temporary presentation at the Museum, level 4, consultation space for the video, film, sound and digital works collections:

"Les Immatériaux" (1985). Glimpses of a postmodern event at the Centre Pompidou. From 5 July to 30 October 2023

Cover of the Daft Punk album "Random Access Memories" © Zaina

Daft Punk, Random Access Memories

Listening session with Sonorium

Music / Listening session, meeting

With 5 Grammy Awards and instant triumph, a worldwide hit ("Get Lucky"), incredibly precise production and prestigious collaborations (Pharrell Williams, Julian Casablancas, Panda Bear, Giorgio Moroder…), Random Access Memories, Daft Punk's last album, left its mark and established the duo as a major figure of contemporary pop.

To mark the 10th-anniversary edition of the cult album, released on 12 May, the Centre Pompidou and Sonorium invite you to a listening session of the album in its entirety, in exceptional conditions thanks to an immersive sound installation created by Ircam and a new immersive technology developed by its subsidiary Ircam Amplify.

Sunday 9 July 2023 – free, booking required

Sessions preceded by an introduction by Éric Jean-Jean and followed by a discussion with the audience

Day-by-day program

Saturday, 8 July 2023

- Continuous, 11am-1pm: Performance. Christian Falsnaes, First (2016)

- Exhibition. Virtual reconstruction of the exhibition "Les Immatériaux"

- 1:30pm-2pm: Meeting. "Beyond Matter": presentation of the concluding publication of the European project "Beyond Matter: Cultural Heritage on the Verge of Virtual Reality"

- 1:30pm-5pm: Screening. After "Les Immatériaux": thought films. Être Résistances by Albert Serra (2016, 198 min.)

- 2pm-4pm: Meeting. "Beyond Matter": presentation of works conceived for the exhibition "Matter, Non-Matter, Anti-Matter". With the artists Jeremy Bailey, Damjanski, fabric | ch (Patrick Keller, Christian Babski, Christophe Guignard), Geraldine Juárez (by videoconference), Carolyn Kirschner and Anne Le Troter

- 4pm-6pm: Meeting. "Les Immatériaux": artists and historians in conversation. With Andreas Broeckmann, Katerina Thomadaki, Jean-Louis Boissier and Jean-Claude Fall. Moderated by Marcella Lista, Philippe Bettinelli and Marie Vicet (Centre Pompidou)

- 5:30pm-9pm: Screening. After "Les Immatériaux": thought films. Être Résistances by Albert Serra (2016, 198 min.). In the presence of the filmmaker

- 6pm-7:30pm: Meeting. After "Les Immatériaux": meeting in partnership with LUMA Foundation. With Hans Ulrich Obrist, Philippe Parreno, Daniel Birnbaum, Anna Longo, Maja Hoffmann (by videoconference) and Albert Serra

- 7:30pm-9pm: Screening. After "Les Immatériaux": thought films

Sunday, 9 July 2023

- Virtual reconstruction of the exhibition "Les Immatériaux": closing of gallery 3 at the beginning of the day

- Continuous, 3pm-9pm: Performance. Christian Falsnaes, First (2016)

- 4pm-6pm and 6:30pm-8:30pm: Listening session, meeting. Daft Punk, Random Access Memories. Introduction by Éric Jean-Jean, listening session, discussion with the audience

Guests

- Hans Ulrich Obrist, Visual arts

- Philippe Parreno, Visual arts

- Jean-Louis Boissier, New media

- Katerina Thomadaki, New media

Also in the presence of:

Jeremy Bailey, Daniel Birnbaum, Andreas Broeckmann, Damjanski, fabric | ch (Patrick Keller, Christian Babski, Christophe Guignard), Jean-Claude Fall, Maja Hoffmann, Éric Jean-Jean, Geraldine Juárez, Carolyn Kirschner, Anna Longo, Marianne Schädler, Albert Serra, Anne Le Troter.

Tuesday, July 18. 2023

fabric | ch at Centre Pompidou for Moviment (Ch. 9, "Par-delà la matière") | #hybrid #exhibition #centrepompidou #matter #non-matter #LesImmatériaux

Note: fabric | ch presented its recent works at the Centre Pompidou in early July, as part of the Moviment program of exhibitions/performances/conferences/projections. We took part in Chapter 9: Beyond Matter.

The focus of the weekend was a return to the historic exhibition "Les Immatériaux" (1985, cur. T. Chaput & J.F. Lyotard) and the contemporary questioning of the postmodern period.

Participants included artists who took part in Les Immatériaux (J.-L. Boissier, K. Thomadaki, J.-C. Fall), as well as contemporary curators such as H.-U. Obrist and D. Birnbaum, artists and filmmakers P. Parreno and A. Serra, and philosopher A. Longo.

Via @ptrckkllr

-----

Thursday, June 29. 2023

Atomized (re-)Staging by fabric | ch at Centre Pompidou | #exhibitions #digital #revival #iconoclash #immatériaux

Note: At the invitation of Marcella Lista and Lívia Nolasco-Rózsás (curators), fabric | ch presents Atomized (re-)Staging during the Moviment festival-exhibition at the Centre Pompidou in Paris, as part of a weekend devoted to a return to the landmark exhibition Les Immatériaux (which took place at Beaubourg in 1985).

Via @ptrckkllr and @beyondmatereu (research project & exhibition: Beyond Matter)

-----

Tuesday, September 21. 2021

"Essais climatiques" by P. Rahm, Ed. B2 (Paris, 2020) | #2ndaugmentation #essay #AR #VR

Note: it is with great pleasure and interest that I recently read one of Philippe Rahm's latest publications, "Essais climatiques" (published in French, by Editions B2), which is in fact a collection of articles published over the past 10+ years in various magazines, journals and exhibition catalogs. It is certainly less developed than the even more recent book, "L'histoire naturelle de l'architecture" (ed. Pavillon de l'Arsenal, 2020), but nonetheless an excellent and brief introduction to his thinking and work.

Philippe Rahm's call for the "return" of an "objective architecture", climatic and free of narrative issues, is of great interest, especially at a time when we need to reduce our CO2 emissions and will need to meet energy-frugality objectives. The historical reading of the postmodern era (in architecture), in relation to oil, vaccines and antibiotics, is also really valuable in this context, when we are all looking to move on from that period in cultural history.

I also had the pleasant surprise, and joy, of seeing the text "L'âge de la deuxième augmentation" finally published! It was written by Philippe probably back in 2009, about the works of fabric | ch at the time, when we were preparing a publication that in the end never came out... This text will nevertheless also be part of a monographic publication that is expected to be finalized and self-published in 2022.

Via fabric | ch

-----

Friday, February 01. 2019

The Yoda of Silicon Valley | #NewYorkTimes #Algorithms

Note: Yet another dive into the history of computer programming and algorithms, used for visual outputs ...

Via The New York Times (on Medium)

-----

By Siobhan Roberts

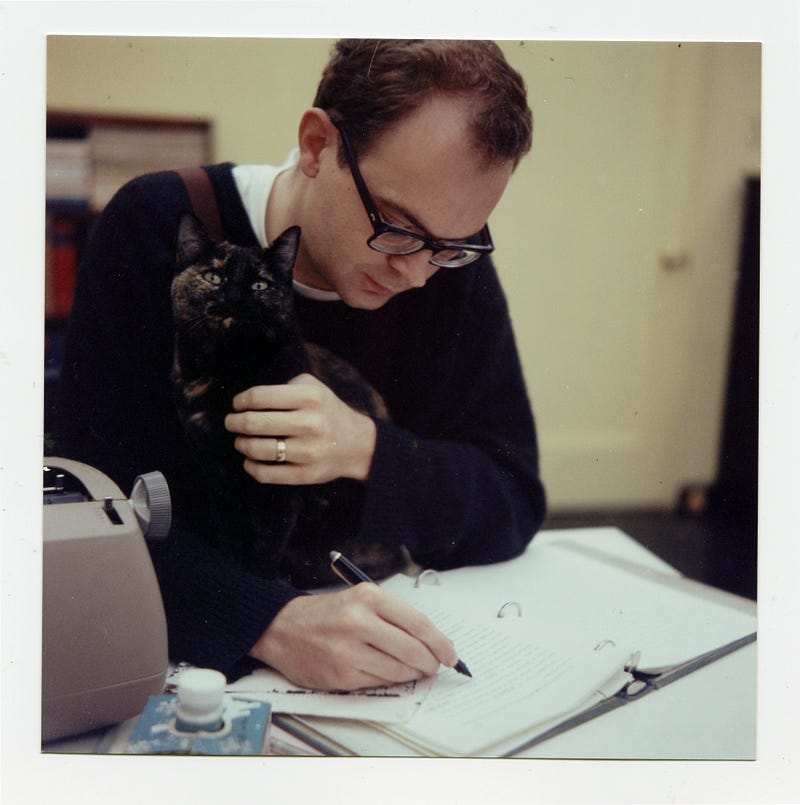

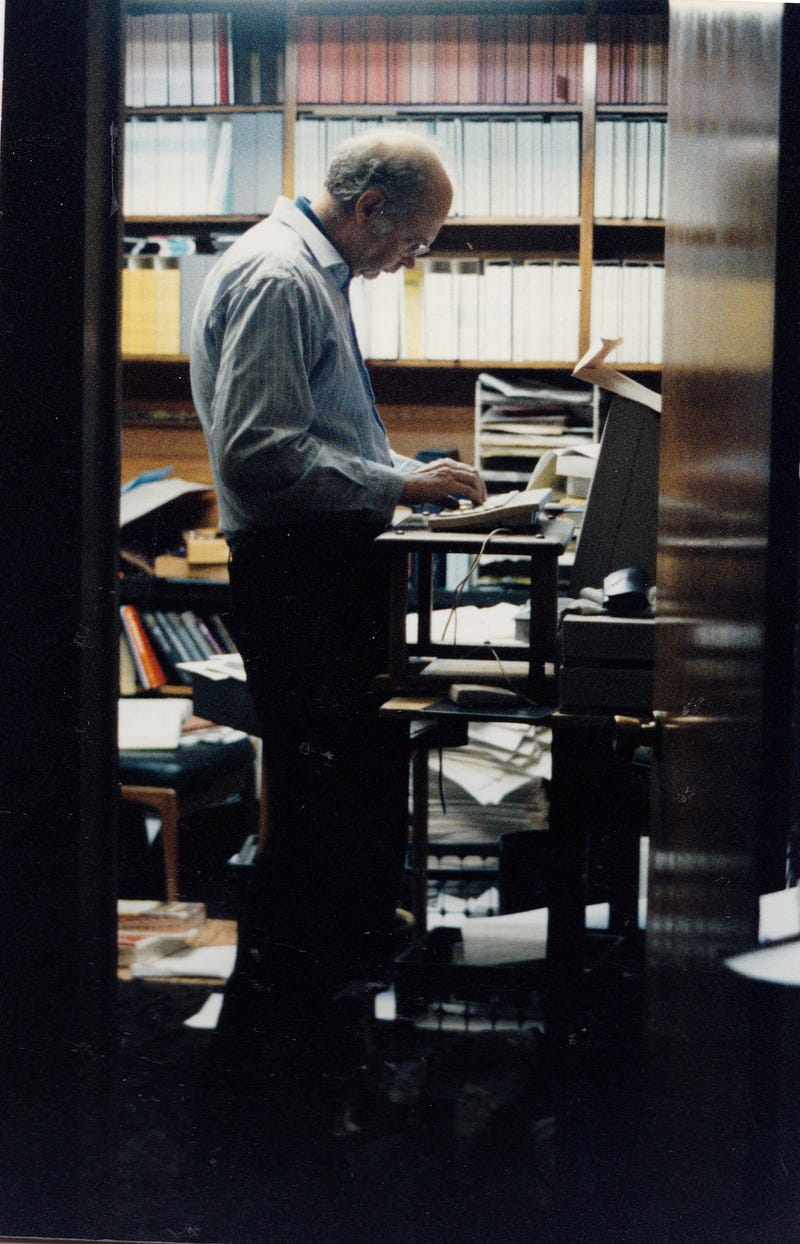

Donald Knuth at his home in Stanford, Calif. He is a notorious perfectionist and has offered to pay a reward to anyone who finds a mistake in any of his books. Photo: Brian Flaherty

For half a century, the Stanford computer scientist Donald Knuth, who bears a slight resemblance to Yoda — albeit standing 6-foot-4 and wearing glasses — has reigned as the spirit-guide of the algorithmic realm.

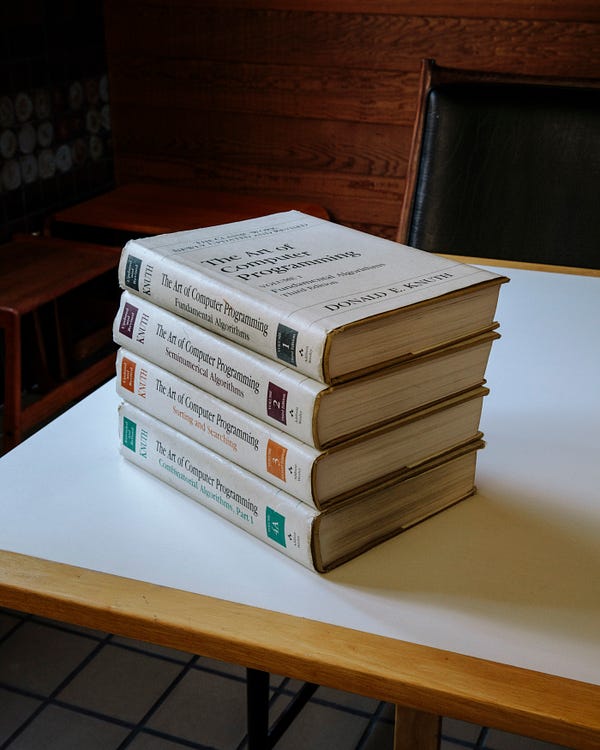

He is the author of “The Art of Computer Programming,” a continuing four-volume opus that is his life’s work. The first volume debuted in 1968, and the collected volumes (sold as a boxed set for about $250) were included by American Scientist in 2013 on its list of books that shaped the last century of science — alongside a special edition of “The Autobiography of Charles Darwin,” Tom Wolfe’s “The Right Stuff,” Rachel Carson’s “Silent Spring” and monographs by Albert Einstein, John von Neumann and Richard Feynman.

With more than one million copies in print, “The Art of Computer Programming” is the Bible of its field. “Like an actual bible, it is long and comprehensive; no other book is as comprehensive,” said Peter Norvig, a director of research at Google. After 652 pages, volume one closes with a blurb on the back cover from Bill Gates: “You should definitely send me a résumé if you can read the whole thing.”

The volume opens with an excerpt from “McCall’s Cookbook”:

Here is your book, the one your thousands of letters have asked us to publish. It has taken us years to do, checking and rechecking countless recipes to bring you only the best, only the interesting, only the perfect.

Inside are algorithms, the recipes that feed the digital age, although, as Dr. Knuth likes to point out, algorithms can also be found on Babylonian tablets from 3,800 years ago. He is an esteemed algorithmist; his name is attached to some of the field’s most important specimens, such as the Knuth-Morris-Pratt string-searching algorithm. Devised in 1970, it finds all occurrences of a given word or pattern of letters in a text, for instance when you hit Command+F to search for a keyword in a document.
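Note: to make the string-searching idea concrete, here is a minimal Python sketch of a Knuth-Morris-Pratt style search. It is only an illustration added to this post, not code from Knuth's books or from the article; the function names `build_failure_table` and `kmp_find_all` are hypothetical. The key idea is that a small table computed from the pattern tells the search how far it can fall back after a mismatch, so no character of the text is ever re-read.

```python
def build_failure_table(pattern: str) -> list[int]:
    """For each prefix of the pattern, store the length of its longest
    proper prefix that is also a suffix (hypothetical helper name)."""
    table = [0] * len(pattern)
    k = 0  # length of the currently matched prefix
    for i in range(1, len(pattern)):
        # On a mismatch, fall back to the next shorter candidate prefix.
        while k > 0 and pattern[i] != pattern[k]:
            k = table[k - 1]
        if pattern[i] == pattern[k]:
            k += 1
        table[i] = k
    return table


def kmp_find_all(text: str, pattern: str) -> list[int]:
    """Return the start index of every (possibly overlapping) occurrence
    of pattern in text. An empty pattern yields no matches here."""
    if not pattern:
        return []
    table = build_failure_table(pattern)
    matches = []
    k = 0  # number of pattern characters matched so far
    for i, ch in enumerate(text):
        while k > 0 and ch != pattern[k]:
            k = table[k - 1]
        if ch == pattern[k]:
            k += 1
        if k == len(pattern):
            matches.append(i - k + 1)
            k = table[k - 1]  # keep going to catch overlapping matches
    return matches


print(kmp_find_all("abababa", "aba"))  # prints [0, 2, 4]
```

The table for a pattern of length m is built in O(m) steps and the scan over a text of length n takes O(n) steps, which is what makes this approach attractive for "find in document" features.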

Now 80, Dr. Knuth usually dresses like the youthful geek he was when he embarked on this odyssey: long-sleeved T-shirt under a short-sleeved T-shirt, with jeans, at least at this time of year. In those early days, he worked close to the machine, writing “in the raw,” tinkering with the zeros and ones.

“Knuth made it clear that the system could actually be understood all the way down to the machine code level,” said Dr. Norvig. Nowadays, of course, with algorithms masterminding (and undermining) our very existence, the average programmer no longer has time to manipulate the binary muck, and works instead with hierarchies of abstraction, layers upon layers of code — and often with chains of code borrowed from code libraries. But an elite class of engineers occasionally still does the deep dive.

“Here at Google, sometimes we just throw stuff together,” Dr. Norvig said, during a meeting of the Google Trips team, in Mountain View, Calif. “But other times, if you’re serving billions of users, it’s important to do that efficiently. A 10-per-cent improvement in efficiency can work out to billions of dollars, and in order to get that last level of efficiency, you have to understand what’s going on all the way down.”

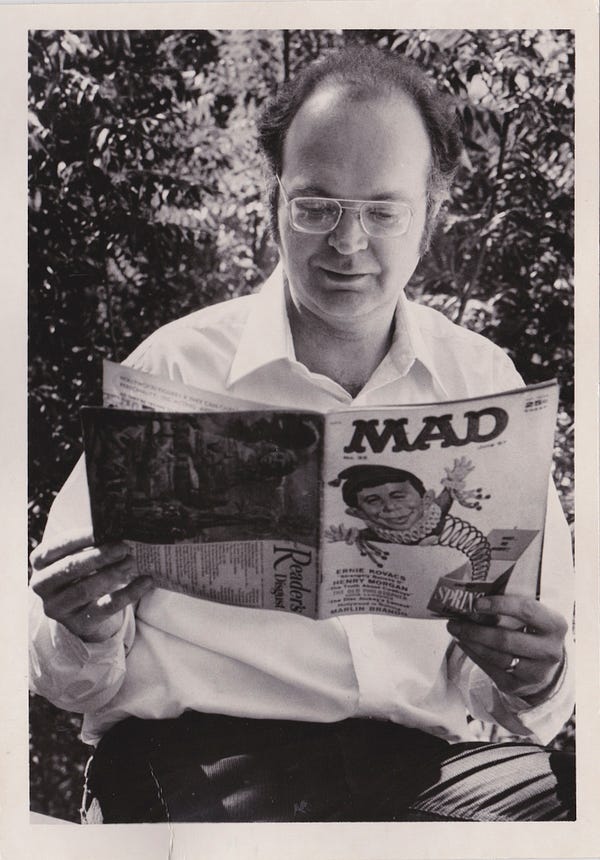

Dr. Knuth at the California Institute of Technology, where he received his Ph.D. in 1963. Photo: Jill Knuth

Or, as Andrei Broder, a distinguished scientist at Google and one of Dr. Knuth’s former graduate students, explained during the meeting: “We want to have some theoretical basis for what we’re doing. We don’t want a frivolous or sloppy or second-rate algorithm. We don’t want some other algorithmist to say, ‘You guys are morons.’”

The Google Trips app, created in 2016, is an “orienteering algorithm” that maps out a day’s worth of recommended touristy activities. The team was working on “maximizing the quality of the worst day” — for instance, avoiding sending the user back to the same neighborhood to see different sites. They drew inspiration from a 300-year-old algorithm by the Swiss mathematician Leonhard Euler, who wanted to map a route through the Prussian city of Königsberg that would cross each of its seven bridges only once. Dr. Knuth addresses Euler’s classic problem in the first volume of his treatise. (He once applied Euler’s method in coding a computer-controlled sewing machine.)
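Note: as a small illustration of Euler's bridge problem (added to this post, not taken from the article or from the Google Trips code), the Python sketch below checks whether a walk crossing every bridge exactly once can exist. It relies on Euler's classic criterion: such a walk exists only if the graph is connected and has zero or two land masses touched by an odd number of bridges. The labels A to D and the function name `has_eulerian_walk` are assumptions made for the example; A stands for the central island.

```python
from collections import defaultdict


def has_eulerian_walk(edges: list[tuple[str, str]]) -> bool:
    """Return True if a walk using every edge exactly once can exist
    (connected graph with 0 or 2 odd-degree vertices)."""
    degree = defaultdict(int)
    adjacency = defaultdict(set)
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
        adjacency[u].add(v)
        adjacency[v].add(u)
    if not degree:
        return True  # no bridges at all: trivially satisfied

    # Depth-first search to check that all land masses are connected.
    start = next(iter(degree))
    seen = {start}
    stack = [start]
    while stack:
        node = stack.pop()
        for neighbour in adjacency[node]:
            if neighbour not in seen:
                seen.add(neighbour)
                stack.append(neighbour)
    if len(seen) != len(degree):
        return False

    odd = sum(1 for d in degree.values() if d % 2 == 1)
    return odd in (0, 2)


# The seven bridges of Königsberg between four land masses
# (labels are illustrative; A is the central island).
KONIGSBERG = [
    ("A", "B"), ("A", "B"),
    ("A", "C"), ("A", "C"),
    ("A", "D"), ("B", "D"), ("C", "D"),
]

print(has_eulerian_walk(KONIGSBERG))  # prints False
```

All four land masses have an odd number of bridges (5, 3, 3 and 3), which is why Euler concluded that no such route through Königsberg exists.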

Following Dr. Knuth’s doctrine helps to ward off moronry. He is known for introducing the notion of “literate programming,” emphasizing the importance of writing code that is readable by humans as well as computers — a notion that nowadays seems almost twee. Dr. Knuth has gone so far as to argue that some computer programs are, like Elizabeth Bishop’s poems and Philip Roth’s “American Pastoral,” works of literature worthy of a Pulitzer.

He is also a notorious perfectionist. Randall Munroe, the xkcd cartoonist and author of “Thing Explainer,” first learned about Dr. Knuth from computer-science people who mentioned the reward money Dr. Knuth pays to anyone who finds a mistake in any of his books. As Mr. Munroe recalled, “People talked about getting one of those checks as if it was computer science’s Nobel Prize.”

Dr. Knuth’s exacting standards, literary and otherwise, may explain why his life’s work is nowhere near done. He has a wager with Sergey Brin, the co-founder of Google and a former student (to use the term loosely), over whether Mr. Brin will finish his Ph.D. before Dr. Knuth concludes his opus.

The dawn of the algorithm

At age 19, Dr. Knuth published his first technical paper, “The Potrzebie System of Weights and Measures,” in Mad magazine. He became a computer scientist before the discipline existed, studying mathematics at what is now Case Western Reserve University in Cleveland. He looked at sample programs for the school’s IBM 650 mainframe, a decimal computer, and, noticing some inadequacies, rewrote the software as well as the textbook used in class. As a side project, he ran stats for the basketball team, writing a computer program that helped them win their league — and earned a segment by Walter Cronkite called “The Electronic Coach.”

During summer vacations, Dr. Knuth made more money than professors earned in a year by writing compilers. A compiler is like a translator, converting a high-level programming language (resembling algebra) to a lower-level one (sometimes arcane binary) and, ideally, improving it in the process. In computer science, “optimization” is truly an art, and this is articulated in another Knuthian proverb: “Premature optimization is the root of all evil.”

Eventually Dr. Knuth became a compiler himself, inadvertently founding a new field that he came to call the “analysis of algorithms.” A publisher hired him to write a book about compilers, but it evolved into a book collecting everything he knew about how to write for computers — a book about algorithms.

Left: Dr. Knuth in 1981, looking at the 1957 Mad magazine issue that contained his first technical article. He was 19 when it was published. Photo: Jill Knuth. Right: “The Art of Computer Programming,” volumes 1–4. “Send me a résumé if you can read the whole thing,” Bill Gates wrote in a blurb. Photo: Brian Flaherty

“By the time of the Renaissance, the origin of this word was in doubt,” it began. “And early linguists attempted to guess at its derivation by making combinations like algiros [painful] + arithmos [number].” In fact, Dr. Knuth continued, the namesake is the 9th-century Persian textbook author Abū ‘Abd Allāh Muhammad ibn Mūsā al-Khwārizmī, Latinized as Algorithmi. Never one for half measures, Dr. Knuth went on a pilgrimage in 1979 to al-Khwārizmī’s ancestral homeland in Uzbekistan.

When Dr. Knuth started out, he intended to write a single work. Soon after, computer science underwent its Big Bang, so he reimagined and recast the project in seven volumes. Now he metes out sub-volumes, called fascicles. The next installment, “Volume 4, Fascicle 5,” covering, among other things, “backtracking” and “dancing links,” was meant to be published in time for Christmas. It is delayed until next April because he keeps finding more and more irresistible problems that he wants to present.

In order to optimize his chances of getting to the end, Dr. Knuth has long guarded his time. He retired at 55, restricted his public engagements and quit email (officially, at least). Andrei Broder recalled that time management was his professor’s defining characteristic even in the early 1980s.

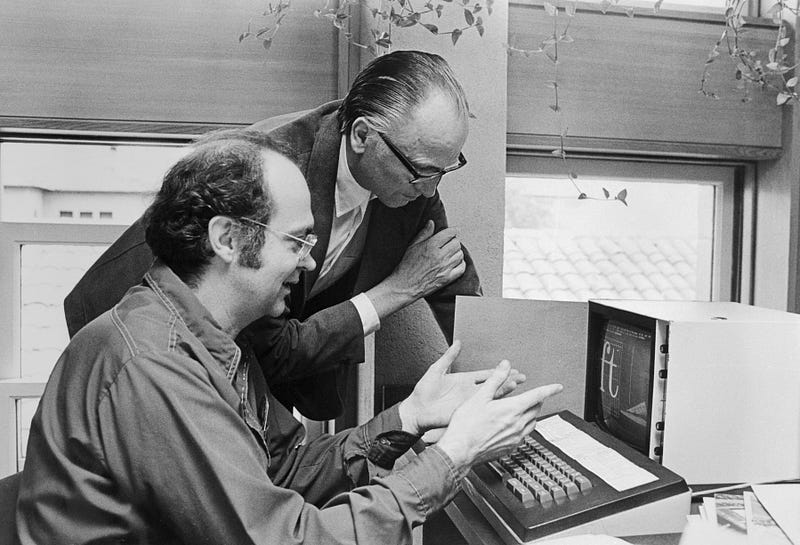

Dr. Knuth typically held student appointments on Friday mornings, until he started spending his nights in the lab of John McCarthy, a founder of artificial intelligence, to get access to the computers when they were free. Horrified by what his beloved book looked like on the page with the advent of digital publishing, Dr. Knuth had gone on a mission to create the TeX computer typesetting system, which remains the gold standard for all forms of scientific communication and publication. Some consider it Dr. Knuth’s greatest contribution to the world, and the greatest contribution to typography since Gutenberg.

This decade-long detour took place back in the age when computers were shared among users and ran faster at night while most humans slept. So Dr. Knuth switched day into night, shifted his schedule by 12 hours and mapped his student appointments to Fridays from 8 p.m. to midnight. Dr. Broder recalled, “When I told my girlfriend that we can’t do anything Friday night because Friday night at 10 I have to meet with my adviser, she thought, ‘This is something that is so stupid it must be true.’”

When Knuth chooses to be physically present, however, he is 100-per-cent there in the moment. “It just makes you happy to be around him,” said Jennifer Chayes, a managing director of Microsoft Research. “He’s a maximum in the community. If you had an optimization function that was in some way a combination of warmth and depth, Don would be it.”

Dr. Knuth discussing typefaces with Hermann Zapf, the type designer. Many consider Dr. Knuth’s work on the TeX computer typesetting system to be the greatest contribution to typography since Gutenberg. Photo: Bettmann/Getty Images

Sunday with the algorithmist

Dr. Knuth lives in Stanford, and allowed for a Sunday visitor. That he spared an entire day was exceptional — usually his availability is “modulo nap time,” a sacred daily ritual from 1 p.m. to 4 p.m. He started early, at Palo Alto’s First Lutheran Church, where he delivered a Sunday school lesson to a standing-room-only crowd. Driving home, he got philosophical about mathematics.

“I’ll never know everything,” he said. “My life would be a lot worse if there was nothing I knew the answers about, and if there was nothing I didn’t know the answers about.” Then he offered a tour of his “California modern” house, which he and his wife, Jill, built in 1970. His office is littered with piles of U.S.B. sticks and adorned with Valentine’s Day heart art from Jill, a graphic designer. Most impressive is the music room, built around his custom-made, 812-pipe pipe organ. The day ended over beer at a puzzle party.

Puzzles and games — and penning a novella about surreal numbers, and composing a 90-minute multimedia musical pipe-dream, “Fantasia Apocalyptica” — are the sorts of things that really tickle him. One section of his book is titled, “Puzzles Versus the Real World.” He emailed an excerpt to the father-son team of Martin Demaine, an artist, and Erik Demaine, a computer scientist, both at the Massachusetts Institute of Technology, because Dr. Knuth had included their “algorithmic puzzle fonts.”

“I was thrilled,” said Erik Demaine. “It’s an honor to be in the book.” He mentioned another Knuth quotation, which serves as the inspirational motto for the biannual “FUN with Algorithms” conference: “Pleasure has probably been the main goal all along.”

But then, Dr. Demaine said, the field went and got practical. Engineers and scientists and artists are teaming up to solve real-world problems — protein folding, robotics, airbags — using the Demaines’s mathematical origami designs for how to fold paper and linkages into different shapes.

Of course, all the algorithmic rigmarole is also causing real-world problems. Algorithms written by humans — tackling harder and harder problems, but producing code embedded with bugs and biases — are troubling enough. More worrisome, perhaps, are the algorithms that are not written by humans, algorithms written by the machine, as it learns.

Programmers still train the machine, and, crucially, feed it data. (Data is the new domain of biases and bugs, and here the bugs and biases are harder to find and fix). However, as Kevin Slavin, a research affiliate at M.I.T.’s Media Lab said, “We are now writing algorithms we cannot read. That makes this a unique moment in history, in that we are subject to ideas and actions and efforts by a set of physics that have human origins without human comprehension.” As Slavin has often noted, “It’s a bright future, if you’re an algorithm.”

Dr. Knuth at his desk at home in 1999. Photo: Jill Knuth

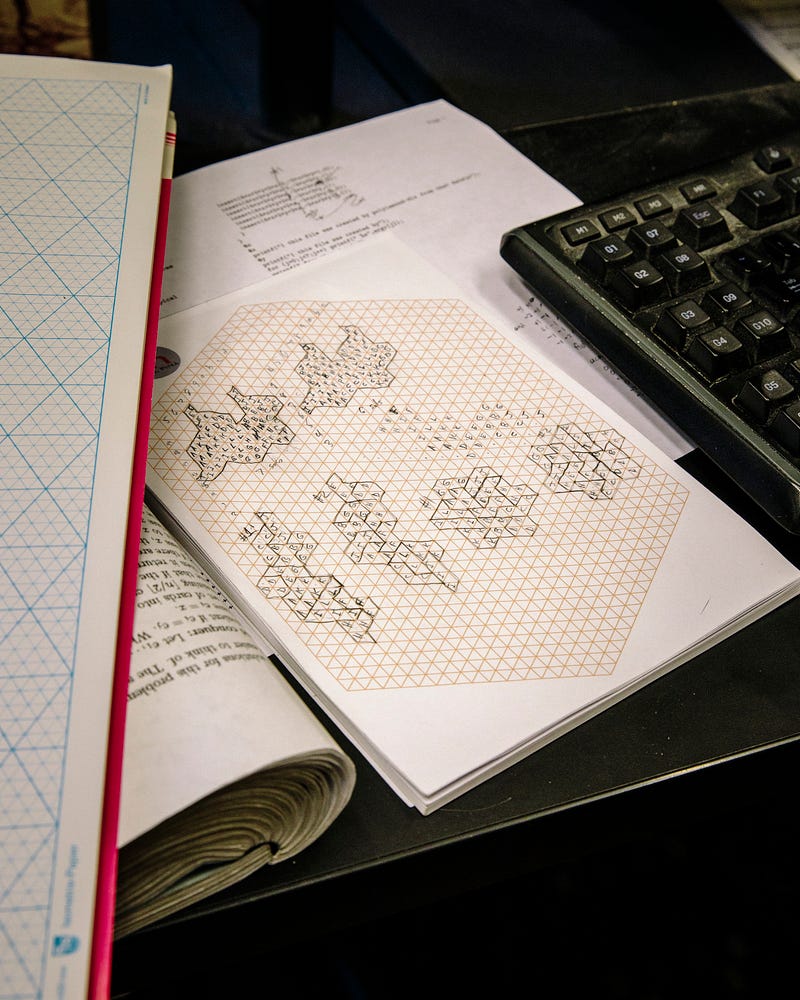

A few notes. Photo: Brian Flaherty

All the more so if you’re an algorithm versed in Knuth. “Today, programmers use stuff that Knuth, and others, have done as components of their algorithms, and then they combine that together with all the other stuff they need,” said Google’s Dr. Norvig.

“With A.I., we have the same thing. It’s just that the combining-together part will be done automatically, based on the data, rather than based on a programmer’s work. You want A.I. to be able to combine components to get a good answer based on the data. But you have to decide what those components are. It could happen that each component is a page or chapter out of Knuth, because that’s the best possible way to do some task.”

Lucky, then, Dr. Knuth keeps at it. He figures it will take another 25 years to finish “The Art of Computer Programming,” although that time frame has been a constant since about 1980. Might the algorithm-writing algorithms get their own chapter, or maybe a page in the epilogue? “Definitely not,” said Dr. Knuth.

“I am worried that algorithms are getting too prominent in the world,” he added. “It started out that computer scientists were worried nobody was listening to us. Now I’m worried that too many people are listening.”

Friday, July 13. 2018

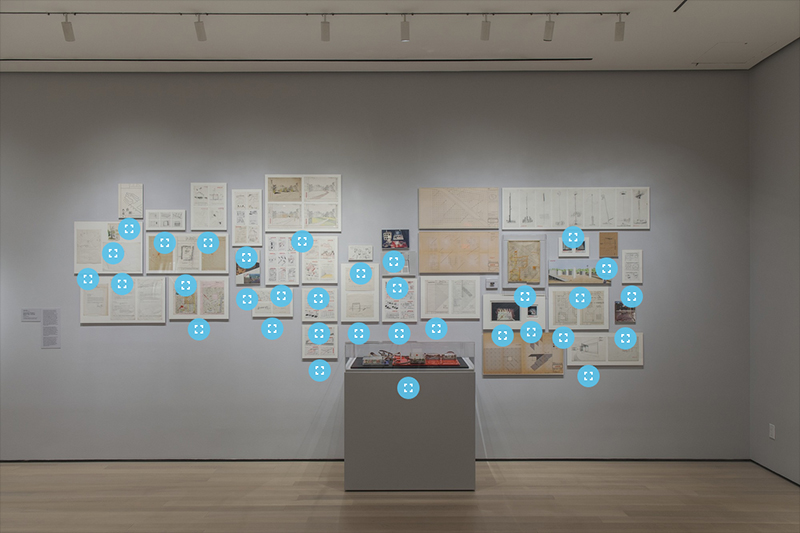

Thinking Machines at MOMA | #computing #art&design&architecture #history #avantgarde

Note: following the exhibition Thinking Machines: Art and Design in the Computer Age, 1959–1989, which ran until last April at MOMA, images of the show appeared on the museum's website, with many references to projects. After Archaeology of the Digital at CCA in Montreal (2013-17), this is another good contribution to the history of the field and to the intricate relations between art, design, architecture and computing.

How cultural fields contributed to shaping this "mass stacked media", now built upon combinations of computing machines, networks, interfaces, services, data, data centers, people, crowds, etc., is certainly largely underestimated.

Literature is starting to emerge, but it will take time to uncover what remained "off the radar" for a very long period. These fields acted in fact as some sort of "avant-garde", one that was not sufficiently recognized or identified, even by specialized institutions, at a time when the name "avant-garde" had almost become an "s-word"... or was considered "dead".

Unfortunately, no publication seems to have accompanied the exhibition, unlike the one at CCA, which is accompanied by two well-documented books.

Via MOMA

-----

Thinking Machines: Art and Design in the Computer Age, 1959–1989

November 13, 2017–

Drawn primarily from MoMA's collection, Thinking Machines: Art and Design in the Computer Age, 1959–1989 brings artworks produced using computers and computational thinking together with notable examples of computer and component design. The exhibition reveals how artists, architects, and designers operating at the vanguard of art and technology deployed computing as a means to reconsider artistic production. The artists featured in Thinking Machines exploited the potential of emerging technologies by inventing systems wholesale or by partnering with institutions and corporations that provided access to cutting-edge machines. They channeled the promise of computing into kinetic sculpture, plotter drawing, computer animation, and video installation. Photographers and architects likewise recognized these technologies' capacity to reconfigure human communities and the built environment.

Thinking Machines includes works by John Cage and Lejaren Hiller, Waldemar Cordeiro, Charles Csuri, Richard Hamilton, Alison Knowles, Beryl Korot, Vera Molnár, Cedric Price, and Stan VanDerBeek, alongside computers designed by Tamiko Thiel and others at Thinking Machines Corporation, IBM, Olivetti, and Apple Computer. The exhibition combines artworks, design objects, and architectural proposals to trace how computers transformed aesthetics and hierarchies, revealing how these thinking machines reshaped art making, working life, and social connections.

Organized by Sean Anderson, Associate Curator, Department of Architecture and Design, and Giampaolo Bianconi, Curatorial Assistant, Department of Media and Performance Art.

-

More images HERE.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
