Sticky Postings

All 242 fabric | rblg updated tags | #fabric|ch #wandering #reading

By fabric | ch

-----

Since this blog still lacks a decent search engine and we don't use a "tag cloud", this post can help you navigate the updated content on | rblg (as of 09.2023) through all its tags!

FIND BELOW ALL THE TAGS THAT CAN BE USED TO NAVIGATE THE CONTENTS OF | RBLG BLOG:

(visible just below if you're browsing the blog's HTML pages, or here for RSS readers)

--

Note that we had to hit the "pause" button on our reblogging activities a while ago (mainly because we ran out of time, but also because we received complaints from a major stock image company about some images displayed on | rblg, an activity we still considered "fair use": we have never made any money from or advertised on this site).

Nevertheless, we still publish, from time to time, information about the activities of fabric | ch, or content directly related to its work (documentation).

Wednesday, November 29. 2023

Architecture & Landscape Award 2023 from Fondation Vaudoise pour la Culture (FVPC) | #award #fabricch #architecture #interaction #research

Note: fabric | ch was honored to receive this year's Award for Architecture and Landscape Culture from the Fondation vaudoise pour la culture (FVPC).

The prize honors an actor who is "involved in the design or promotion of built environment and who, through this commitment, contributes to the quality of our natural, [digital] and built environment".

A brief video portrait of fabric | ch was produced on this occasion by director Pierre-Yves Borgeaud, along with a small publication by art director Emmanuel Crivelli and photographic portraits by Matthieu Croizier (see below).

We'd like to thank the foundation and its jury for awarding our studio in 2023!

-----

By fabric | ch

---

---

---

... and for the record,

our bit of the award ceremony!

Monday, August 07. 2023

Satellite Daylight Pavilion (2017) at AC Cube in Chengdu (Sichuan, CN), during Chengdu Biennale | #pavilion #environmental #device

Note: Satellite Daylight Pavilion (2017) – pdf file documentation HERE – by fabric | ch is presented during Chengdu Biennale at AC Cube in Chengdu (Sichuan, CN).

The piece is an architectural experiment, displayed as four looping videos and articulated around two "environmental devices", namely two Satellite Daylight pieces, which reorganize and intertwine the natural rhythms of day and night within the pavilion.

This creates a form of luminous phasing between two spatio-temporal referents (the localized one of London's Hyde Park and those of two fictitious satellites circling the Earth), hybridizing their time and space... in a quest for a new liveable relationship with the now mediated space.

The work is part of the exhibition Community of the Future: The Same Frequency and Resonance (images below) and is curated by Guo Jinman.

Via @fabricch_asfound (fabric | ch's default Instagram account)

Tuesday, January 17. 2023

Pro Helvetia Visual Arts Beneath the Skin, Between the Machines (2022) | #exhibition #bestof2022 #review

Note: fabric | ch is thrilled to be part of Pro Helvetia's Shanghai best of 2022! Thanks for this new post and for supporting the exhibition at the HOW Art Museum, as well as its program of performances and online lectures during the pandemic!

We were glad to see that the architectural installation fabric | ch realized in this context also remained useful for remote interaction, exchange of ideas and collaboration.

...

And it's also a way – and still the time – to wish everyone a good start in 2023! With, we hope, many successes, exciting projects and creative statements responding to the challenges of our time.

Via Pro Helvetia (Visual Arts)

-----

Our Best Picks of 2022

For art practitioners and audiences alike, it has not been an easy year. The path of global encounter seemed distant for a while, but has never vanished. Somehow we know, maybe in the most surprising manner, that we will meet each other again halfway. It could be one of these reassuring moments that convinced us of hope when the world is turned upside down: the flipping of book pages, the smiles from digital rooms, a concert without performers, a recital without playwrights. We are so eager to present what has excited, motivated, or touched us in the past year. Scroll down and discover a diverse selection of projects highlighted under the three overarching themes: support, connect, and inspire.

We wish you a brilliant start and a Happy New Year 2023!

Pro Helvetia Shanghai

Swiss Arts Council

Room 509, Building 1

No.1107, Yuyuan Road, Changning District

Shanghai 200050, China

shanghai@prohelvetia.cn

-----

Beneath the Skin, Between the Machines

Exhibition overview

---

---

“Man is only man at the surface. Remove the skin, dissect, and immediately you come to machinery.” When Paul Valéry wrote this, he could not have foreseen that human beings, as biological organisms, would indeed be incorporated into machinery at such a profound level in a highly informationized and computerized time and space. In a sense, it is just as Marx predicted: a conscious connection of machines [1]. Today, the machine is no longer confined to any material form; instead, it presents itself in the forms of data, code and algorithms, virtually everything that is “operable”, “calculable” and “thinkable”. Ever since the idea of the cyborg emerged, the man-machine relation has been intertwined with our imagination, vision and fear of the past, present and future.

In a sense, the machine represents a projection of human beings. We transfer ideas of slavery and freedom onto other beings, namely machines that could replace us as technical entities or tools. Opposite to (and, in a sense, similar to) the “embodiment” of the machine, organic beings such as humans are hurrying towards “disembodiment”. Everything pertaining to our bodies and behavior can be captured and calculated as data. Meanwhile, the social system that human beings have created never stops absorbing new technologies. Through trial and error, the difference and fortuity accompanying the “new” are taken in and internalized by the system. “Every accident, every impulse, every error is productive (of the social system),”[2] and hence predictable and calculable. Within such a system, differences tend to be obscured and erased; at the same time, because of the highly specialized complexities embedded in different disciplines and fields, genuine interdisciplinary communication is becoming increasingly difficult, if not impossible.

As a result, technologies today are highly centralized, homogenized, sophisticated and commonized. They penetrate deep beneath our skin, yet remain beyond our knowing, sensing and thinking. On the one hand, the exhibition probes the reconfiguration of man by technologies through what’s “beneath the skin”; on the other, it encourages people to rethink our position and situation in this context through what’s “between the machines”. As an art institution located in the Shanghai Zhangjiang Hi-Tech Industrial Development Zone, one of the most important hi-tech parks in China, HOW Art Museum intends to carve out an open rather than enclosed field through the exhibition, inviting the public to immerse themselves and ponder questions such as “How do people touch machines?”, “What do the machines think of us?” and “Where should art and its practice be positioned in the face of the overwhelming presence of technology and the intricate technological reality?” Departing from these issues, the exhibition presents a selection of recent works by Revital Cohen & Tuur Van Balen, Simon Denny, Harun Farocki, Nicolás Lamas, Lynn Hershman Leeson, Lu Yang, Lam Pok Yin, David OReilly, Pakui Hardware, Jon Rafman, Hito Steyerl, Shi Zheng and Geumhyung Jeong. It also sets up a “panel installation”, created by fabric | ch especially for this exhibition, which offers a space and occasion for decentralized observation of and participation in the above discussions. Conversations and actions are to be activated, and at the same time captured, observed and archived.

[1] Karl Marx, “Fragment on Machines”, Foundations of a Critique of Political Economy

[2] Niklas Luhmann, Social Systems

---

fabric | ch, Platform of Future-Past, 2022, Installation view at HOW Art Museum.

Work by fabric | ch

HOW Art Museum has invited Lausanne-based artist group fabric | ch to set up a “panel installation” based on their former project “Public Platform of Future Past” and adapted to the museum space, fostering insightful communication among practitioners from different fields and the audiences.

“Platform of Future-Past” is a temporary environmental device that consists of a twenty-meter-long walkway, or rather an almost archaeological observation deck: a platform that overlooks an exhibition space and that, paradoxically, directly links its entrance to its exit. It thus offers the possibility of crossing this space without really entering it, and of becoming its observer, as from an archaeological observation deck. The platform opens up contrasting atmospheres and offers affordances, or potential uses, on the ground.

The peculiarity of the work thus lies in the fact that it generates a dual perception and a potential temporal disruption, which leads to the title of the work, Platform of Future-Past: if the present time of the exhibition space and its visitors is, in fact, the “archeology” to be observed from the platform, and hence a potential “past,” then the present time of the walkway could be understood as a possible “future” viewed from the ground…

“Platform of Future-Past” is equipped with environmental monitoring devices in three zones. The sensors record as much data as possible over time, generated by the continuously changing conditions, presences and uses in the exhibition space. The data is then stored on Platform of Future-Past’s servers and replayed in a loop on its computers. It is a “recorded moment”, “frozen” on the data servers, that could potentially replay itself forever or that waits for someone to reactivate it. A “data center” on the deck, with its set of interfaces and visualization screens, lets the visitors-observers follow the ongoing process of recording.
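The record-then-replay principle described above can be illustrated with a minimal sketch. It is not fabric | ch's software: the class names (`Reading`, `PlatformRecorder`), the sensor channel `"lux"` and the numbering of the three zones are hypothetical, chosen only to show a recording being "frozen" and then played back in an endless loop.

```python
from dataclasses import dataclass, field
from itertools import cycle, islice
from typing import Dict, Iterator, List


@dataclass
class Reading:
    """One hypothetical sensor sample from the exhibition space."""
    t: float                  # seconds since the recording started
    zone: int                 # monitored zone, assumed numbered 1 to 3
    values: Dict[str, float]  # hypothetical channels, e.g. {"lux": 310.0}


@dataclass
class PlatformRecorder:
    """Records readings, then replays the frozen recording in an endless loop."""
    readings: List[Reading] = field(default_factory=list)

    def record(self, reading: Reading) -> None:
        # Keep every sample, in arrival order.
        self.readings.append(reading)

    def replay(self) -> Iterator[Reading]:
        # Snapshot the recording so later samples don't alter the loop,
        # then cycle over it forever (an empty recording yields nothing).
        return cycle(list(self.readings))


recorder = PlatformRecorder()
for i, zone in enumerate([1, 2, 3]):
    recorder.record(Reading(t=float(i), zone=zone, values={"lux": 100.0 * zone}))

# Take the first five replayed samples: the loop wraps after the third.
replayed = [r.zone for r in islice(recorder.replay(), 5)]
print(replayed)  # [1, 2, 3, 1, 2]
```

Snapshotting the list before cycling is one way to model a "recorded moment" that no longer changes once replay begins.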

The work could be seen as an architectural proposal built on the idea of massive data production from our environment. Every second, our world produces massive amounts of data, stored “forever” in remote data centers, like old gas bubbles trapped in millennial ice.

As such, the project attempts to introduce doubt about its true nature: would it be possible, in fact, that what is observed from the platform is already a present recorded in the past? A phantom situation? A present regenerated from data recorded during a scientific experiment that was then abandoned? Or perhaps replayed by the machine itself? Could it, in fact, already have been running in a loop for years?

---

Schedule

Duration: January 15-April 24, 2022

Artists: Revital Cohen & Tuur Van Balen, Simon Denny, fabric | ch, Harun Farocki, Geumhyung Jeong, Nicolás Lamas, Lynn Hershman Leeson, Lu Yang, Lam Pok Yin, David OReilly, Pakui Hardware, Jon Rafman, Hito Steyerl, Shi Zheng

Curator: Fu Liaoliao

Organizer: HOW Art Museum, Shanghai

Lead Sponsor: APENFT Foundation

Swiss participation is supported by Pro Helvetia Shanghai, Swiss Arts Council.

---

[Banner image: fabric | ch, Platform of Future-Past, 2022, Scaffolding, projection screens, sensors, data storage, data flows, plywood panels, textile partitions, Dimensions variable.]

Event I

Beneath the Skin, Between the Machines — Series Panel

Investigating Sensoriums: Beyond Life/Machine Dichotomy

Schedule

Organizers: HOW Art Museum, Pro Helvetia Shanghai, Swiss Arts Council

Date: March 19, 2022

Time: 15:00–16:30 (CST) / 8:00–9:30 (CET)

Host: Iris Long

Guests: Zian Chen, Geocinema (Solveig Qu Suess, Asia Bazdyrieva), Marc R. Dusseiller

Language: Chinese, English (with Chinese translation)

Event on Zoom (500-attendee limit)

Link: https://zoom.us/j/92512967837

---

This round of discussion derives from the participating speakers’ responses to the title of the exhibition, Beneath the Skin, Between The Machines.

What lies beneath the skin of the earth and between the machines may well be signals among sensors. Geocinema’s study of the One Belt One Road initiative and its exploration of a “planetary” notion of cinema relate directly to this concern. Marc R. Dusseiller, as a transdisciplinary scholar, draws our attention to the possible pathways that skin/machines may open up for an understanding that goes beyond the life/machine dichotomy. Zian Chen’s recent research attempts to bring mediatized explorations back to our real living conditions. He will draw on real news events as cases to show why these mediatized explorations are embodied experiences.

HOW Art Museum in collaboration with Pro Helvetia Shanghai, Swiss Arts Council, invite Zian Chen (writer and curator), Geocinema (art collective) and Marc R. Dusseiller (transdisciplinary scholar and artist) to have a panel discussion, probing into the topic of Beneath the Skin, Between The Machines from the perspectives of their own practice and experience. The panel will be moderated by curator and writer Iris Long.

* Due to pandemic restrictions, the panel will take place online. Video recording of the panel will be played on Platform of Future-Past (2022), an environmental installation conceived by fabric | ch, studio for architecture, interaction and research, for the exhibition.

---

About the Host

Iris Long

About the Guests

Zian Chen, Geocinema, Marc R. Dusseiller

---

Event II

Schedule

Organizers: HOW Art Museum, Pro Helvetia Shanghai, Swiss Arts Council, Conversazione (CVSZ)

Date: April 16, 2022

Time: 14:00–15:30 (CST) / 7:00–8:30 (CET)

Host: Cai Yixuan

Guests: Chloé Delarue, fabric | ch, Pedro Wirz, Chun Shao

Language: Chinese, English (with Chinese translation)

Event on Zoom (500-attendee limit)

Link: https://us06web.zoom.us/j/85822263121

---

In this hotbed for breeding new forms of life, the notion of “life” and digital intimacy are under constant construction and development.

Unfolding the histories of nature and civilization, what kinds of interactions could be perceived between the environment and the materials we have constantly used in past narratives? How have these interactive relationships, perceivable yet invisible, evolved and become entwined?

This Saturday, from 14:00 to 15:30, HOW Art Museum, in collaboration with Pro Helvetia Shanghai, Swiss Arts Council and Conversazione (a research-based art and design collective based in China), invites the following guests to a panel discussion: Chloé Delarue, whose ongoing body of work centers on the notion of TAFAA (Towards A Fully Automated Appearance); fabric | ch, studio for architecture and research, which presents an environmental installation at HOW Art Museum where discussions and events can take place; Pedro Wirz, whose practice seeks to merge the supernatural with scientific realities; and Shao Chun, whose new media practice is dedicated to combining traditional handicrafts and electronic programming.

The event intends to probe into the topics covered in Beneath the Skin, Between The Machines and participants will share with audience their insights to materials and media from the perspectives of their own practices. The panel will be moderated by curator Cai Yixuan.

---

About the Host

Cai Yixuan

About the Guests

Chloé Delarue, fabric | ch, Pedro Wirz, Chun Shao

---

Event III

Beneath the Skin, Between the Machines — Online Performance

Holding it together*: myself and the other by Jessica Huber

Schedule

Artist: Jessica Huber, in collaboration with the performers Géraldine Chollet & Robert Steijn, video by Michelle Ettlin

Date: May 31, 2022, 21:00 – June 4, 2022, 24:00

Organizer: HOW Art Museum

Performance Support: Pro Helvetia Shanghai, Swiss Arts Council

Technical Support: Centre for Experimental Film (CEF)

Online Screening: Link

---

*Due to COVID-related prevention measures, this projection of the performance will take place online via CEF.

Once the museum can reopen, the performance projection will be played on Platform of Future-Past (2022), an environmental installation conceived by fabric | ch, studio for architecture, interaction and research, for the exhibition.

HOW Art Museum (Shanghai) will also be temporarily closed during this period.

---

About the artist

Jessica Huber works as an artist in the field of the performing arts and is also, together with Karin Arnold, a founding member of mercimax, a performance collective based in Zürich.

After finishing her dance studies in London, she danced for various companies, and most of her early pieces were dance pieces too, though her recent work and the forms and formats she chooses have become more diverse over the past few years. Recently she has been collaborating with the British artist and activist James Leadbitter, aka the vacuum cleaner, on the hope & fear project.

Jessica works with curiosity and has a special interest in the texture of relationships and in how we function as individuals and as communities in society. She regularly gives workshops to professionals and non-professionals (different social groups), teaches as a guest tutor at the Hyperwerk in Basel (Institute for Post-Industrial Design, or as they call it, “the place where we think about how we want to live together in the future”) and is one of two artists in the newly founded dramaturgy pool of Tanzhaus Zürich. She deeply enjoys diversity and is fascinated by the many possible aesthetics of exchange and sharing.

---

About the performance

Picture in the dark: Fletcher/Huber, picture daytime: Ettlin/Huber *pictures from “holding it together”: Nelly Rodriguez

Holding it together*: myself and the other is first of all an encounter between the two performers Géraldine Chollet and Robert Steijn – and the underlying questions of what binds us together and how we create intimacy, playfulness and trust by sharing rituals and treatments with each other.

Two very different bodies, of different ages, nestle together, performing silent rituals of gentle intimacy. They explain, apparently quite privately, how they met at a party and worked with each other (one with a plan, one without) and what this resulted in. The Dutch performer with a penchant for shamanism and the dancer from Lausanne offer each other a song and a dance, and neither shies away from deep feelings…

“Holding it together” is a series of collaborations, performances and research projects Jessica Huber created with different artists. The first idea for ‘Holding it together’ sparked during her studio residency in Berlin in 2013. This led to a collection of ideas, approaches to movement and formats under the thematic umbrella ‘Holding it together’. Four thematic chapters, or acts, stem from this period: The Thing; The Mass; Myself; Myself & the other(s).

“Holding it together” is not only a reference to the series’ overarching theme of cooperation and the question of how we perceive and create our world, but also an announcement of the working method: At the core of this series were reflections about the aesthetics and praxis of exchange and sharing, about rituals, as well as the longing for space and time for encounter.

Wednesday, August 25. 2021

Cloud of Cards A design research publication, ed. ECAL, Hemisphere & Frame magazines (2019-2020) | #design #research #datacenters #infrastructure

Note: still catching up on past publications, these ones (Cloud of Cards and related) date from "pre-covid times", are printed on demand and relate to the design research on data and the cloud led jointly by ECAL / University of Art & Design, Lausanne and HEAD - Genève (with Prof. Nicolas Nova). It mainly concerns new propositions for data-hosting infrastructure, envisioned as "personal", domestic (decentralized) and small-scale alternatives. Many "recipes" were published to describe how to creatively host your data yourself.

It can also be accessed through my academia account, along with its accompanying publication by Nicolas Nova: Cloud of Practices.

-----

By Patrick Keller

--

The same research was briefly presented in the Swiss journal Hemispheres, as well as in the international magazine Frame:

--

Making Sense A publication about design research, (ed. D. Fornari), ECAL (Lausanne, 2017) | #iiclouds #datacenter #research

Note: to catch up with the documentation of our past publications, this one was published some time ago by ECAL / University of Art and Design, Lausanne (HES-SO), but it remains a topical issue (> how to redesign/codesign datacenters and the access to personal data in a way that is both sustainable and "fair" for the end user?)

We're currently working on an evolution of this project that involves the decentralized technologies that have emerged since then (a.k.a. "blockchains", "NFT", etc.). We are also preparing academic talks on the subject with the media sociologist Joël Vacheron, who will be involved in the next phases of the research (should they happen...).

-----

By Patrick Keller

(sorry for the strange colors on these 3 img. below...)

Wednesday, July 14. 2021

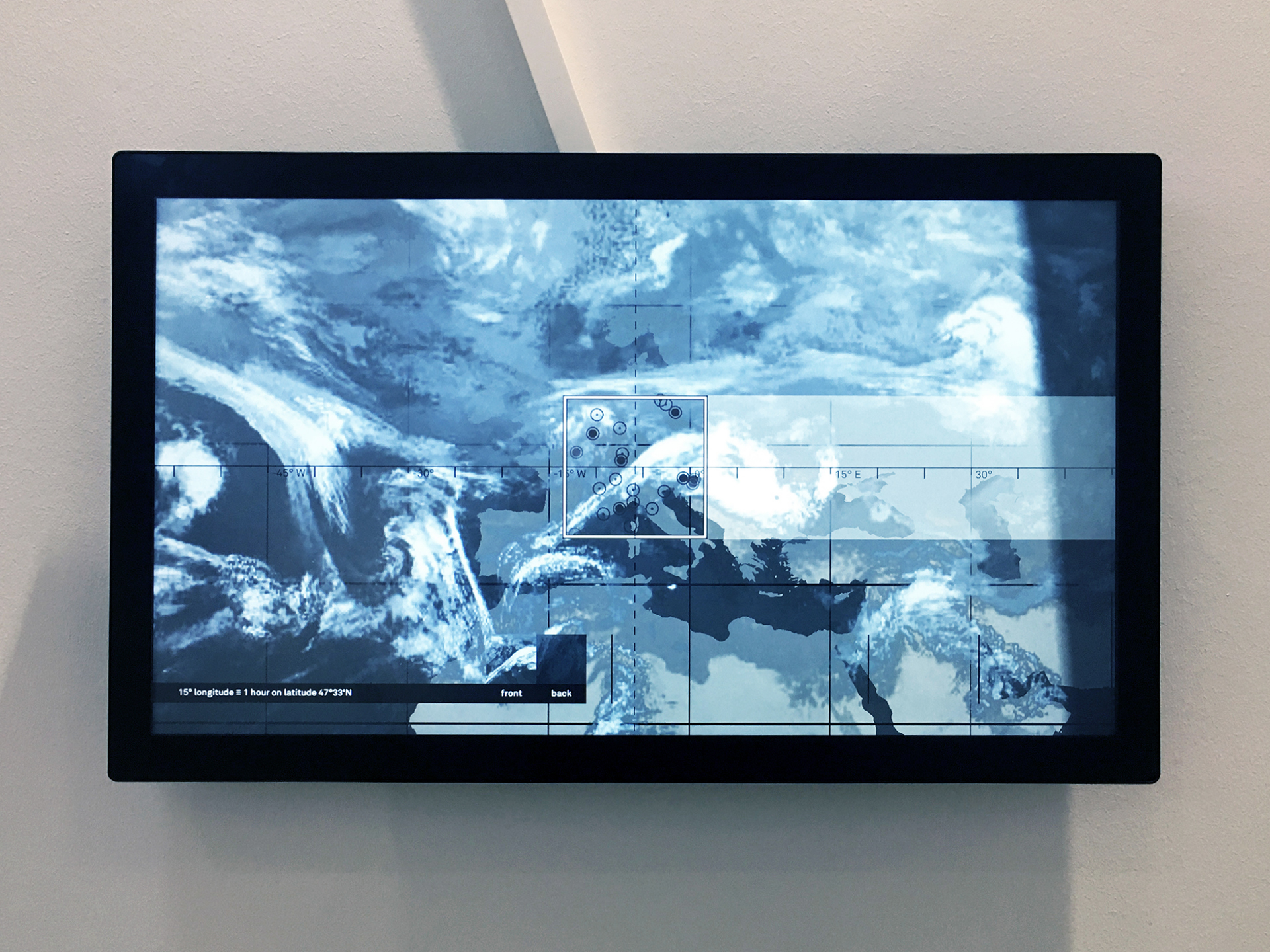

Interview with Patrick Keller fabric | ch, HEK Blog (Basel, 2020) | #satellite #daylight #device

Note: Patrick Keller (fabric | ch) was in discussion with curator Sabine Himmelsbach, from Haus der elektronischen Künste in Basel (CH), about their new acquisition for the collection of the Museum: Satellite Daylight 47°33'N.

The piece will be displayed permanently in the public space of the HeK. At least until it breaks down... Interestingly, though, it is also part of a whole program of digital conservation at HeK that should prevent its technological collapse, for which we had to follow a tight documentation protocol and provide the source code of the work.

-----

fabric | ch, Satellite Daylight, 47°33'N, 2020, installation view during «Shaping the Invisible World», 2021, HeK. Photo: P. Keller.

Shooting set in preparation ...

Followed by our discussion with Sabine Himmelsbach ... (with a lot of reverb in the staircase!)

-----

Via Haus der elektronischen Künste (blog)

Patrick Keller of fabric | ch, a studio for architecture, interaction and research in Lausanne, provides thrilling insight about the new work installed in the staircase of HeK.

Patrick Keller of fabric | ch, a studio for architecture, interaction and research in Lausanne, provides information about the new work in an interview. The installation Satellite Daylight, 47°33’N, commissioned for the HeK collection, simulates the light registered by an imaginary meteorological satellite orbiting the earth at the latitude of Basel at a speed of 7541m/s.

Monday, June 28. 2021

Exhibition "Shaping the Invisible World" at the Haus der elektronischen Künste | #exhibitions #countercartography

Note: fabric | ch was part of the exhibition Shaping the Invisible World, in the middle of the global pandemic (03.03 - 23.05.21).

A new creation, Satellite Daylight 47°33'N, was exhibited on this occasion (img. below); it was also acquired by HeK and entered its collection at the same time.

Among others, the exhibition displays works by Tega Brain, Bengt Sjölén & Julian Oliver, Bureau d'études, James Bridle, Trevor Paglen, Quadrature, etc. and was curated by Boris Magrini and Christine Schranz.

-----

Via Haus der elektronischen Künste

The exhibition «Shaping the Invisible World – Digital Cartography as an Instrument of Knowledge» examines, through cartography, the representational forms of the map as a tool between knowledge and technology. The works of the artists on view negotiate the meaning of the map as a gauge of our digital, technological and global society.

(Photo.: fabric | ch)

(Photo.: T. Marti)

fabric | ch, Satellite Daylight 47°33'N (2021) at the Haus der elektronischen Künste (photo.: fabric | ch).

Cartography – the science of surveying and representing the world – developed in antiquity and provided the springboard for communication and economic exchange between people and cultures around the globe. At the same time, maps are undeniably never neutral, since their creation inherently involves interpretation and imagination. Today, it is IT companies that drive progress in the field and drastically influence our views of the world and how we communicate, navigate and consume globally. While map production has become more democratic, digital maps are nevertheless increasingly used for political and economic manipulation. Questions of privacy, authorship, economic interests and big data management are more poignant than ever before and closely intertwined with contemporary cartographic practices.

Today’s maps not only depict, but also document, negotiate and visualize subjective views of the world. But are these maps more democratic? Who benefits from self-determined productions and what consequences do they lead to?

The strategies in digital mapping and cartography employed by the artists presented in Shaping the Invisible World – Digital Cartography as an Instrument of Knowledge are subversive. Their spectacular panoramas and virtual scenarios reveal how digital technologies culturally affect our understanding of the world.

Navigating between subversive cartography and digital mapping, the exhibition puts the spotlight on the fascination of maps in relation to the democratization of knowledge and appropriation. By uncovering hidden realities, scarcely visible developments and possible new social relationships within a territory, the artists delineate the evolution of invisible worlds.

-

Artists: Studio Above&Below, Tega Brain & Julian Oliver & Bengt Sjölén, James Bridle, Persijn Broersen & Margit Lukács, Bureau d'études/ Collectif Planète Laboratoire, fabric | ch, Fei Jun, Total Refusal (Robin Klengel & Leonhard Müllner), Trevor Paglen, Esther Polak & Ivar Van Bekkum, Quadrature, Jakob Kudsk Steensen

Curators: Boris Magrini and Christine Schranz

(View the pictures of the exhibition directly on HeK's website).

fabric | ch, Satellite Daylight 47°33'N | #luminosity #daylight #device #environment

Note: A new work by fabric | ch has been commissioned and acquired by the Haus der elektronischen Künste (HeK) in Basel for the museum's collection, as part of the Satellite Daylight series of "environmental devices".

It is the second work by fabric | ch to enter the collection, after a series of four videos related to Satellite Daylight and entitled Satellite Daylight Pavilion. We are glad to join artists in the collection such as Jodi, mediengruppe!Bitnick, Etoy, ... and also former students or colleagues at ECAL/University of Art and Design Lausanne (Juerg Lehni, Gisin & Vanetti, FragmentIn)!

This new artwork, entitled Satellite Daylight 47°33'N, circles in a fictitious and continuous way around the 47°33'N latitude, while acquiring live environmental data about daylight, light intensity, nebulosity and cloud cover that drive the luminous display.

This continuous circumvolution, at the speed of a real Earth satellite, triggers 16 nights and days per regular day on Earth and produces a new combined daylight at the point of installation, both local and internationally mediated.
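The timing implied by "16 nights and days per regular day on Earth" can be worked out with a little arithmetic. The sketch below assumes exactly 16 equal cycles per 24-hour day and a constant speed of 7541 m/s (the figure HeK quotes for this work); the `satellite_hour` "clock" is a hypothetical illustration only, since the actual piece drives its luminous display from live environmental data.

```python
SECONDS_PER_EARTH_DAY = 24 * 60 * 60  # 86,400 s
CYCLES_PER_EARTH_DAY = 16             # "16 nights and days per regular day on Earth"
SPEED_M_PER_S = 7541                  # speed quoted by HeK for Satellite Daylight 47°33'N


def cycle_period_s(cycles_per_day: int = CYCLES_PER_EARTH_DAY) -> float:
    """Duration of one simulated day-night cycle, in seconds."""
    return SECONDS_PER_EARTH_DAY / cycles_per_day


def satellite_hour(elapsed_s: float) -> float:
    """Hypothetical mapping of elapsed local time onto a 24-hour 'satellite clock'.

    Assumes a constant speed, so every cycle has the same length.
    """
    period = cycle_period_s()
    return (elapsed_s % period) / period * 24.0


period = cycle_period_s()
print(period / 60)                              # 90.0 (minutes per cycle)
print(round(SPEED_M_PER_S * period / 1000, 1))  # 40721.4 (km travelled per cycle)
print(satellite_hour(45 * 60))                  # 12.0 (halfway through a cycle)
```

Under these assumptions, each simulated day and night together last 90 minutes, so 45 minutes of local time correspond to "noon" on the fictitious satellite's clock.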

Satellite Daylight is an open series of unique artworks, each located at a different latitude.

Via fabric | ch

-----

(Photo.: P. Keller)

Monday, May 03. 2021

Satellite Daylight 46°28'N during Media Quarantine at Pushkin Museum | #artificial #luminosity #satellite

Note: during the museum's first lockdown in Russia (2020), the Pushkin State Museum of Fine Arts in Moscow invited curators to participate in the "media quarantine" by reacting to the theme "100 Ways to Live a Minute".

Sabine Himmelsbach, director and curator at HeK, picked fabric | ch's work Satellite Daylight 46°28'N (2007). Below is her presentation.

-----

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, researches, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
