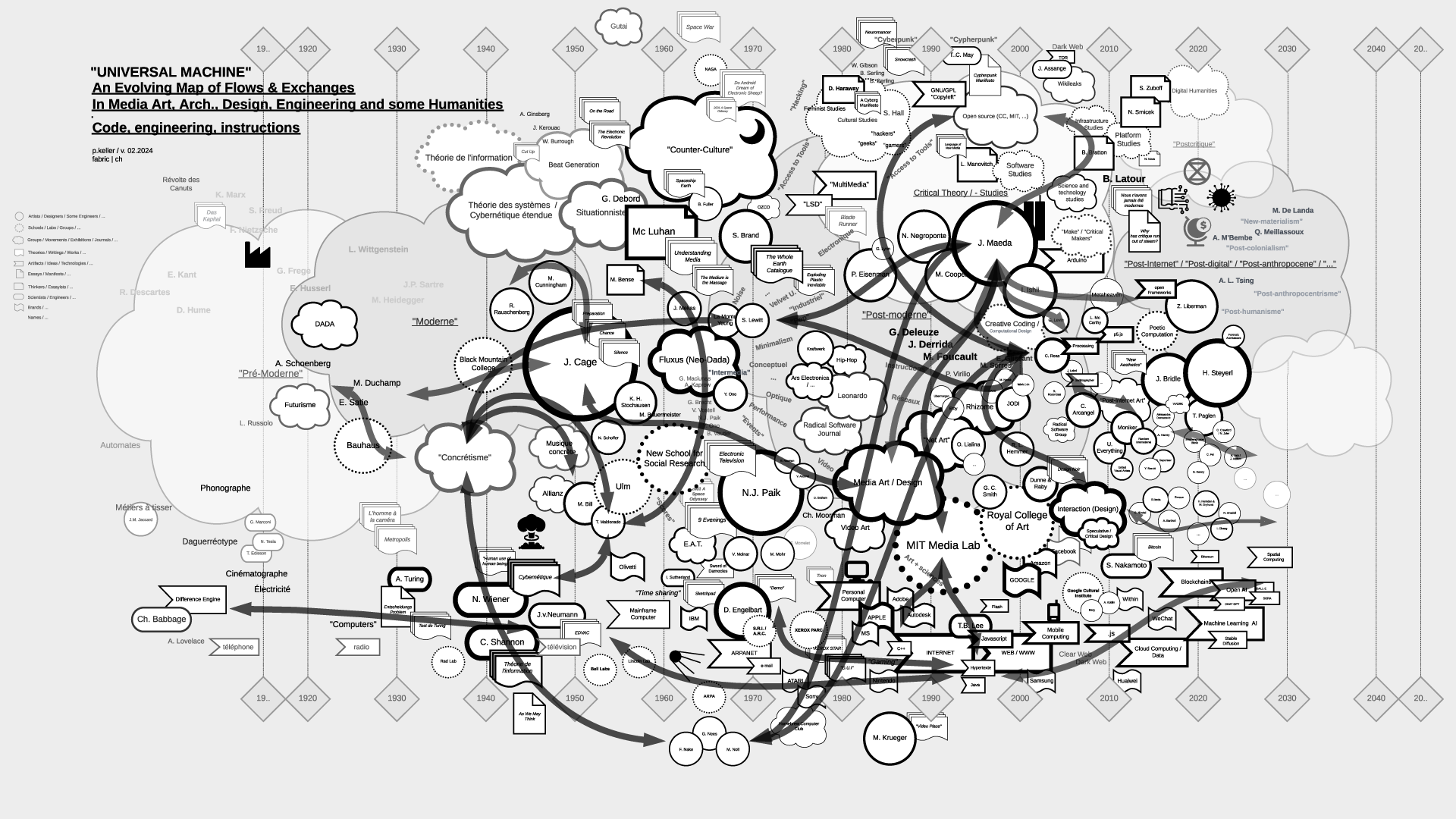

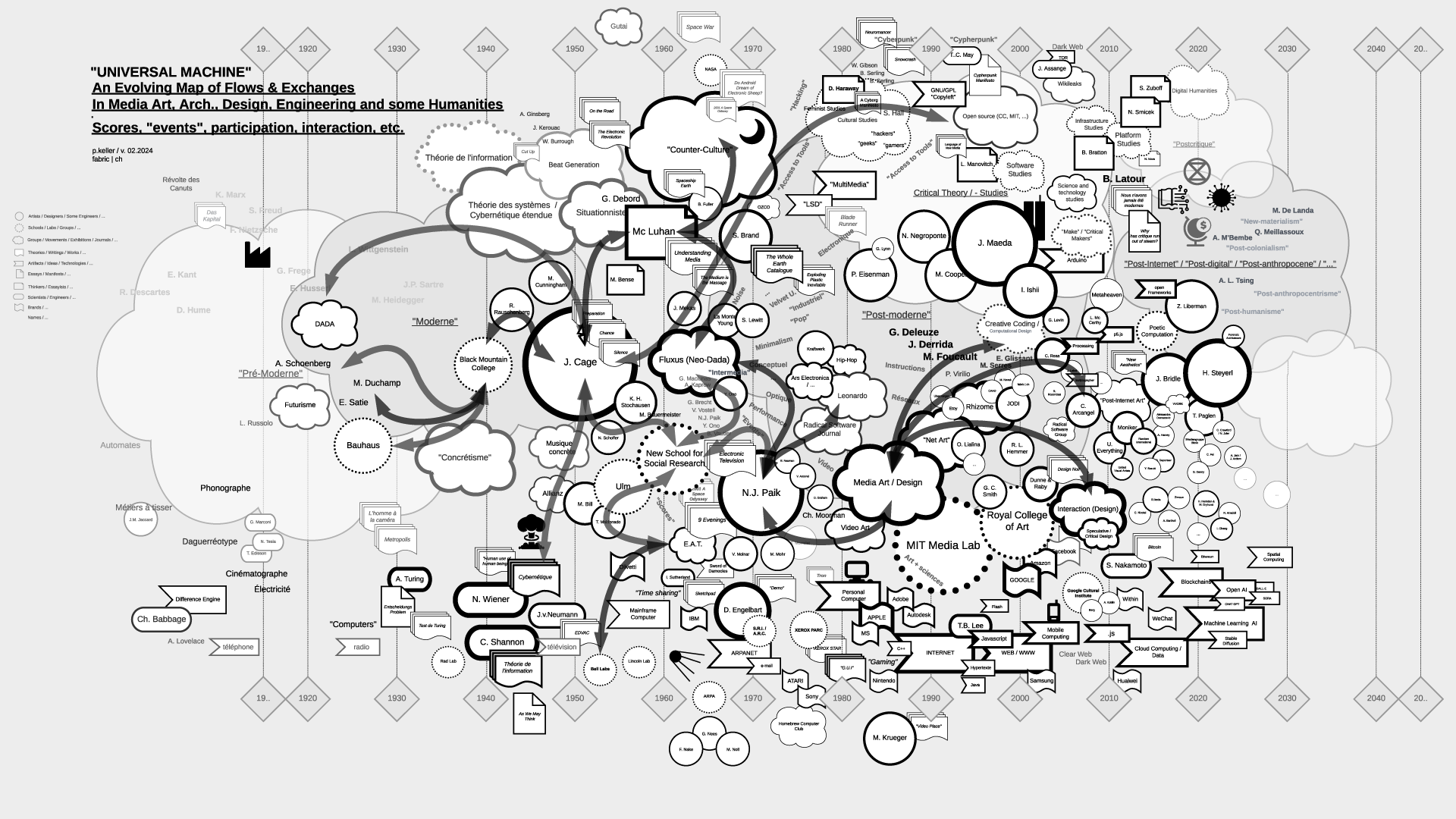

Note (03.2024): The contents of the files (maps) have been updated as of 02.2024.

-

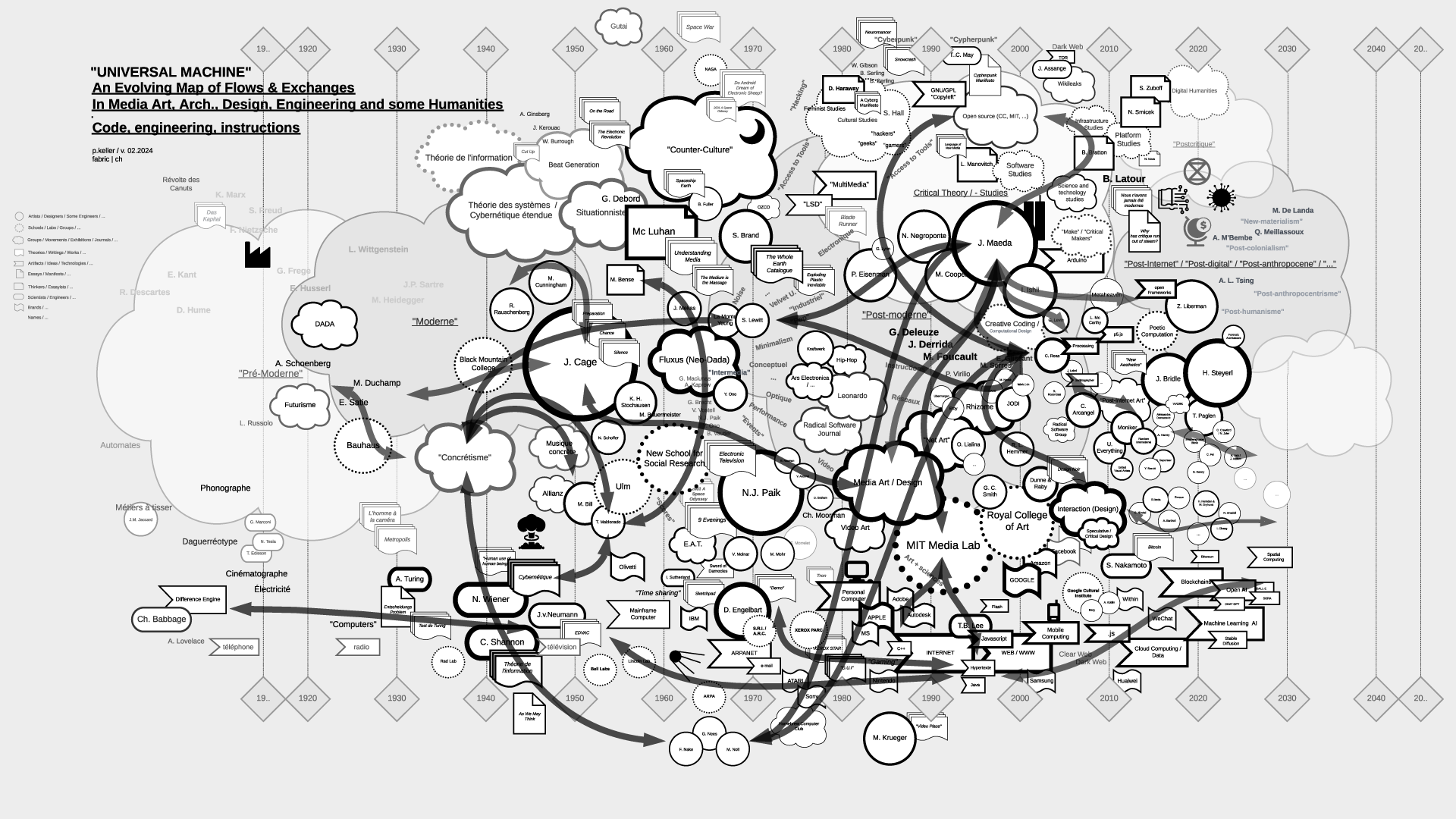

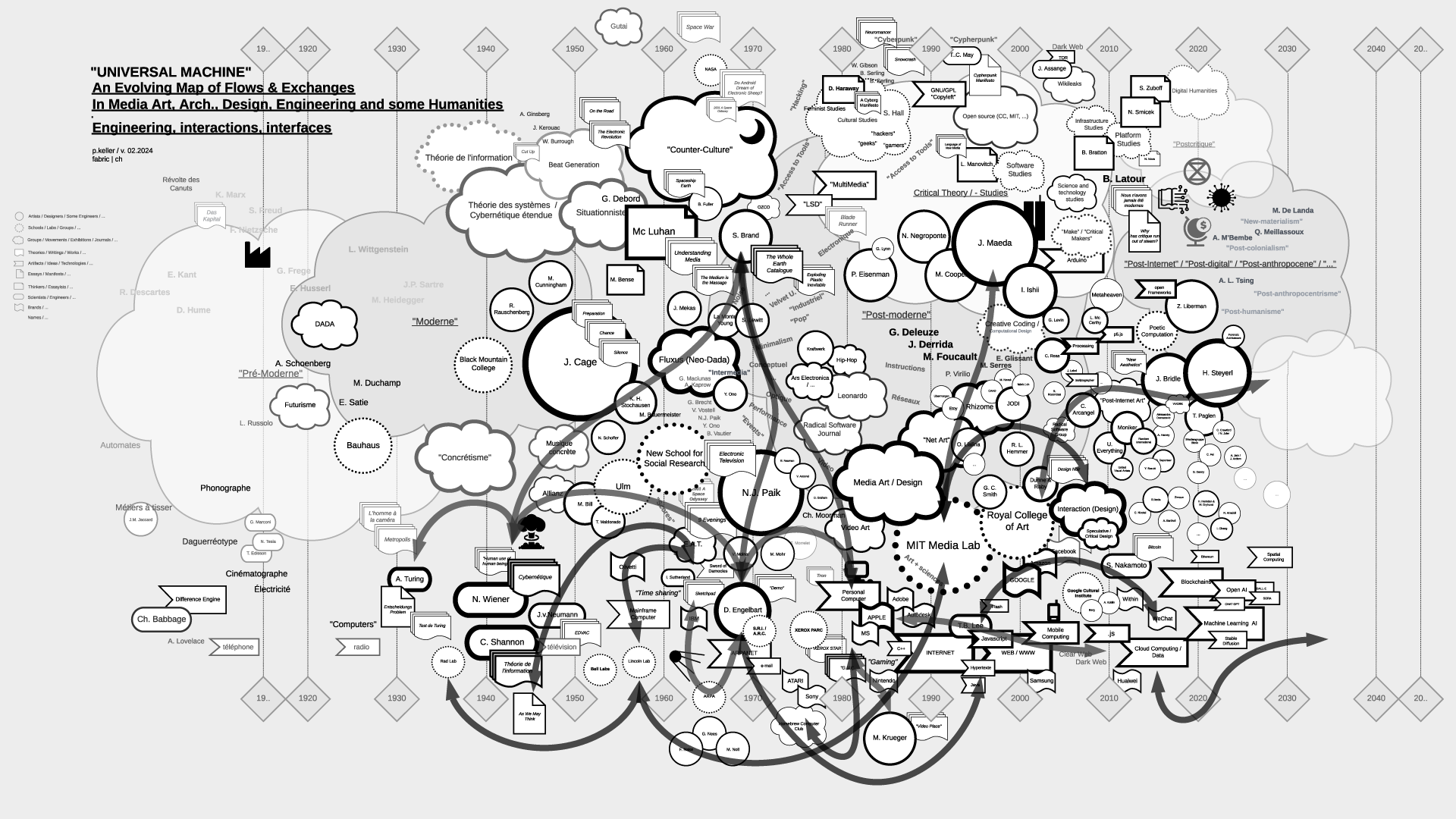

Note (07.2021): As part of my teaching at ECAL / University of Art and Design Lausanne (HES-SO), I've delved into the historical ties between art and science. This ongoing exploration focuses on the connection between creative processes in art, architecture, and design, and the information sciences, particularly the computer, which A. Turing described as a "Universal Machine". This informs the title of the graphs below and this post.

Through my work at fabric | ch, and previously as an assistant at EPFL followed by a professorship at ECAL, I've had the opportunity to experience first hand some of these massive transformations in society and culture.

Thus, in my theory courses, I've aimed to create "maps" that aid in comprehending, visualizing, and elucidating the flux and timelines of interactions among individuals, artifacts, and disciplines. These maps, imperfect and constrained by size, are continuously evolving and open to interpretation beyond my own. I regularly update them as part of the process.

Yet, in the absence of a comprehensive written, visual, or sensitive history of these techno-cultural phenomena as a whole, these maps serve as valuable approximation tools for grasping the flows and exchanges that either unite or divide them. They offer a starting point for constructing personal knowledge and delving deeper into these subjects.

This is precisely why, despite their inherent fuzziness - or perhaps because of it - I choose to share them on this blog (fabric | rblg), in an informal manner. It's an invitation for other artists, designers, researchers, teachers, students, and so forth, to begin building upon them, to depict different flows, to develop pre-existing or subsequent ideas, or even more intriguingly, to diverge from them. If such advancements occur, I'm keen on featuring them on this platform. Feel free to reach out for suggestions, comments, or to share new developments.

...

It's worth mentioning that the maps are structured horizontally along a linear timeline, spanning from the late 18th century to the mid-21st century, predominantly focusing on the industrial period. Vertically, they are organized around disciplines, with the bottom representing engineering, the middle encompassing art and design, and the top relating to humanities, social events, or movements.

Certainly, one might question this linear timeline, echoing the sentiments of writer B. Latour. What about considering a spiral timeline, for instance? Such a representation would still depict both the past and the future, while also illustrating the historical proximities of topics, connecting past centuries and subjects with our contemporary context in a circular manner. However, for the time being, and while recognizing its limitations, I adhere to the simplicity of the linear approach.

Countless narratives can emerge as inherent properties of the graphs, which underscores that these narratives are products of the graphs rather than their origins.

...

The selection of topics (code, scores-instructions, countercultural, network-related, interaction, "post-...") currently aligns with the themes of my teaching but is subject to expansion, possibly toward an underlying layer revealing the material conditions that underpinned and facilitated the entire process.

In any case, this could serve as a fruitful starting point for some further readings or perhaps a new "Where's Waldo/Wally" kind of game!

Via fabric | ch

-----

By Patrick Keller

Note: By clicking on the thumbnails below you'll get access to HD versions.

"Universal Machine", main map (late 18th to mid 21st centuries):

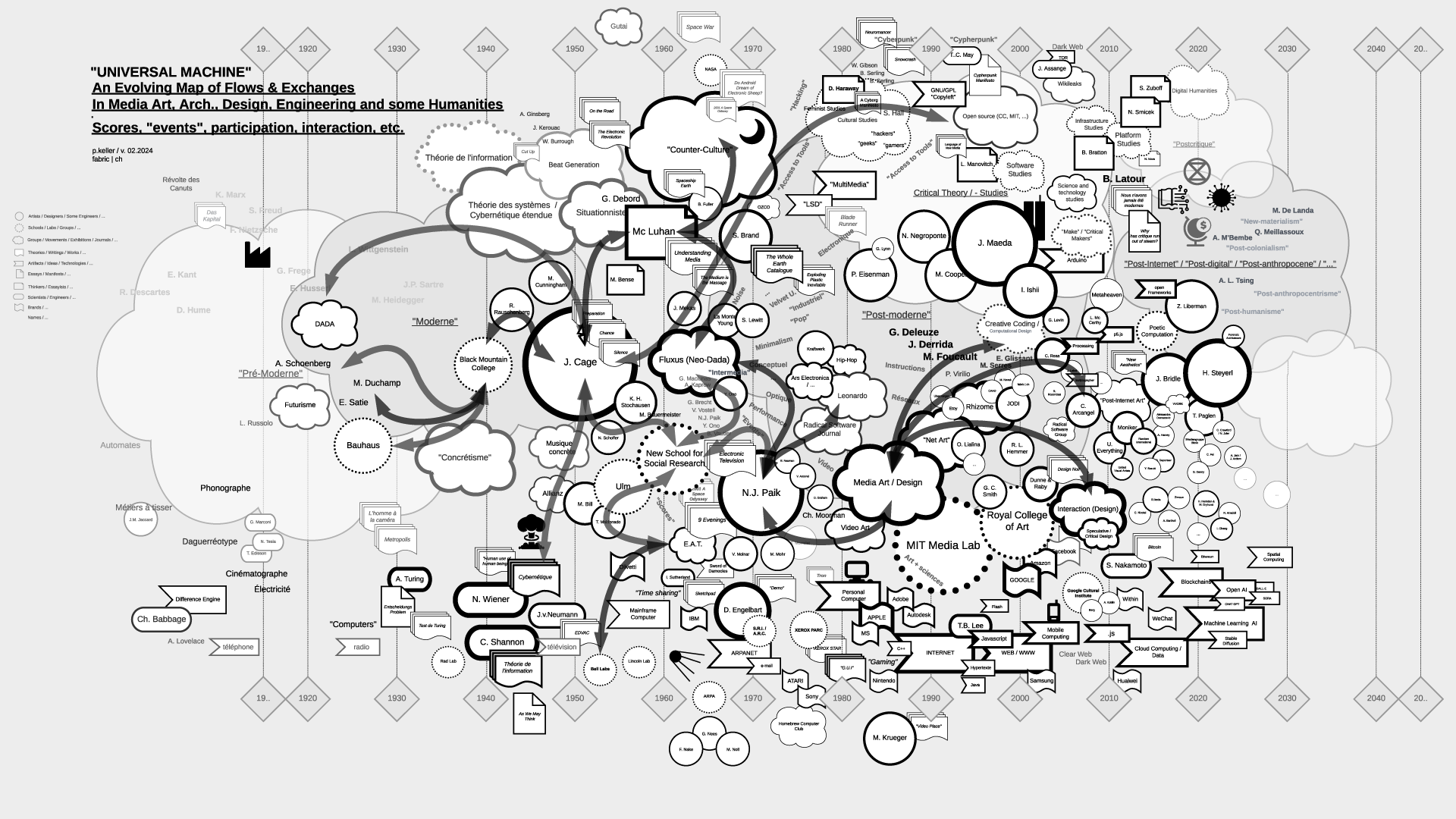

Flows in the map > "Code":

Flows in the map > "Scores, Partitions, ...":

Flows in the map > "Countercultural, Subcultural, ...":

Flows in the map > "Network Related":

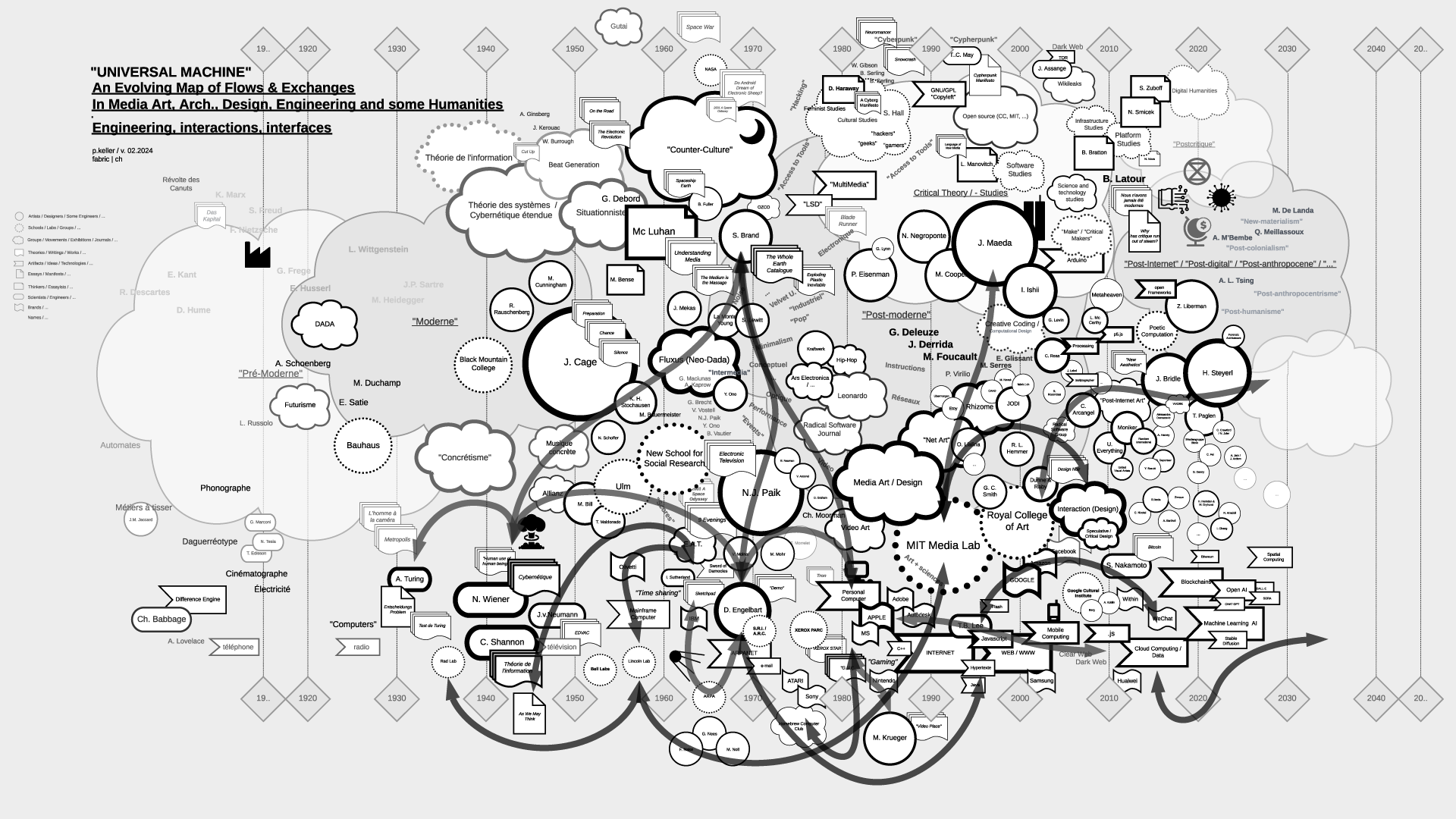

Flows in the map > "Interaction":

Flows in the map > "Post-Internet/Digital", "Post-...", "Neo-...", ML/AI:

...

To be continued (& completed) ...