Thursday, June 21. 2012

Via MIT Technology Review

-----

By Duncan Graham-Rowe

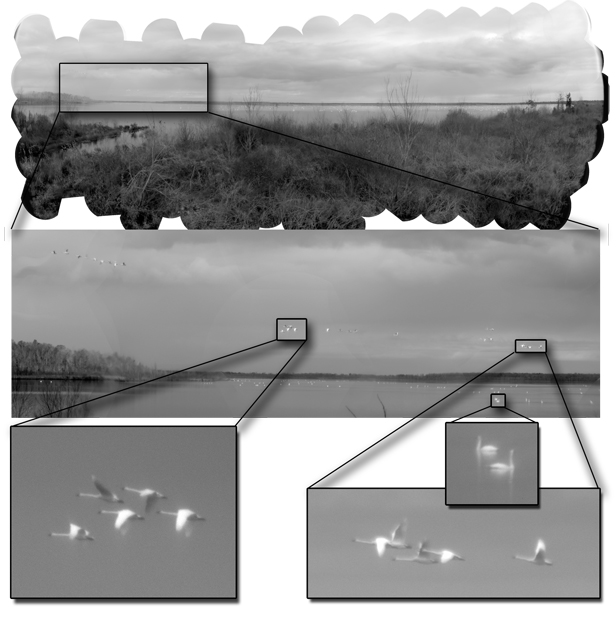

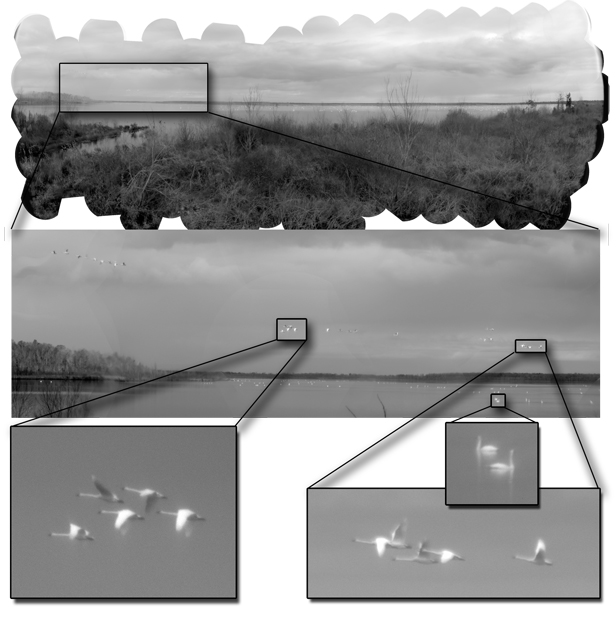

Beady eye: The Aware-2 gigapixel camera with some of its many micro-cameras.

Duke University

Imagine trying to spot an individual pixel in an image displayed across 1,000 high-definition TV screens. That's the kind of resolution a new kind of "compact" gigapixel camera is capable of producing.

Developed by David Brady and colleagues at Duke University in Durham, North Carolina, the new camera is not the first to generate images with more than a billion pixels (or gigapixel resolution). But it is the first with the potential to be scaled down to portable dimensions. Gigapixel cameras could not only transform digital photography, says Brady, but they could revolutionize image surveillance and video broadcasting.

Until now, gigapixel images have been generated either by creating very large film negatives and then scanning them at extremely high resolutions or by taking lots of separate digital images and then stitching them together into a mosaic on a computer. While both approaches can produce stunningly detailed images, the use of film is slow, and setting up hundreds of separate digital cameras to capture an image simultaneously is normally less than practical.

It is not possible to simply scale up a normal digital camera by increasing the number of light sensors to a billion, because this would require a lens so large that imperfections on its surface would cause distortion.

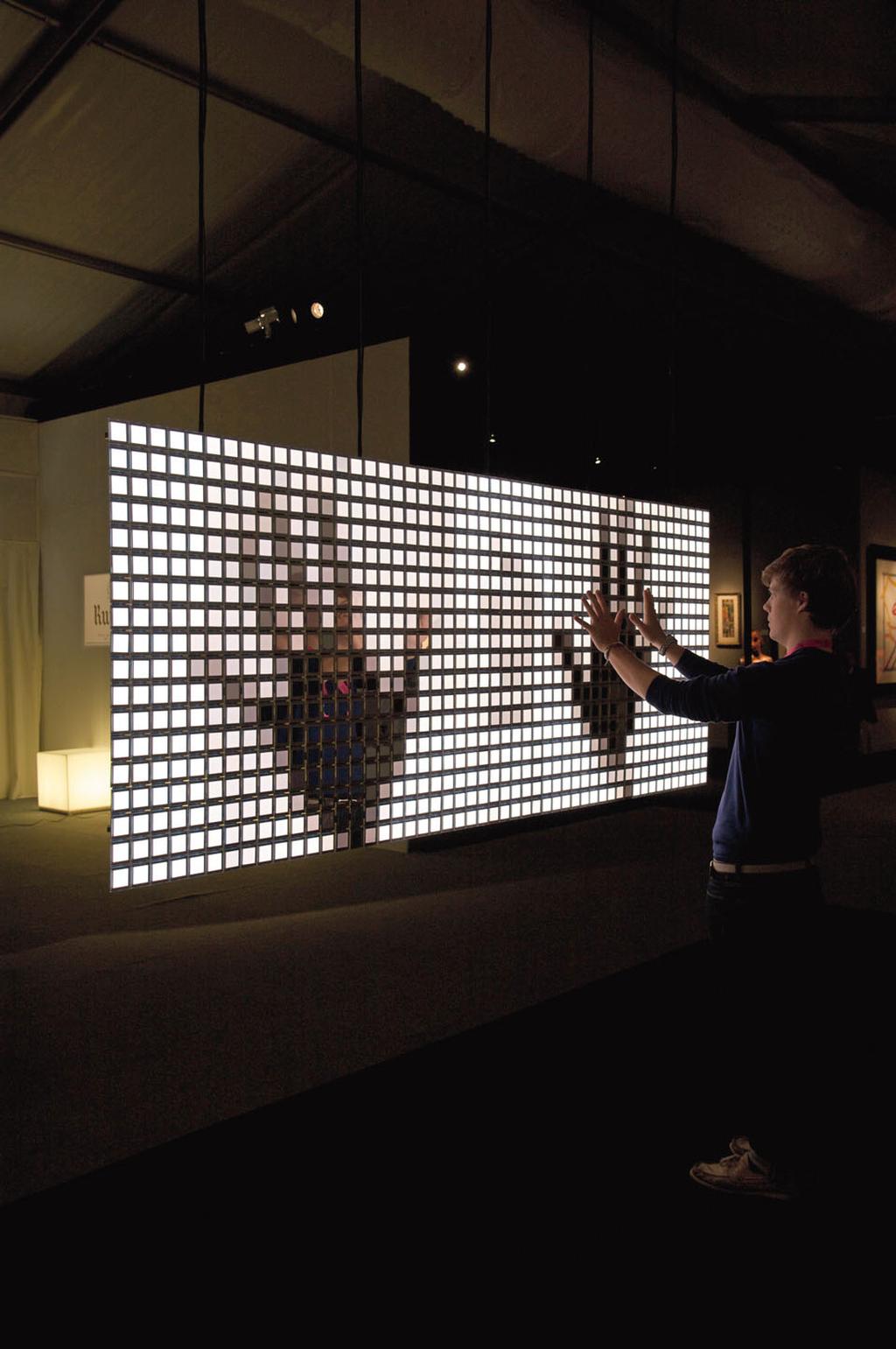

Zoom in: A gigapixel image of Pungo Lake.

Duke University

Brady's solution, a camera called AWARE, has 98 micro-cameras similar to those found in smart phones, each with 10-megapixel resolution. By positioning these high-quality micro-cameras behind the lens, it becomes possible to process different portions of the image separately and to correct for known distortions. "We realized we could turn this into a parallel-processing problem," Brady says.
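As a rough back-of-the-envelope check on the figures quoted above, the sketch below multiplies out the pixel counts. The 1920 x 1080 size for a "high-definition TV screen" is an assumption (the article does not say which HD format it means), and any overlap between neighboring micro-cameras is ignored.

```python
# Back-of-the-envelope pixel counts for the figures quoted in the article.
# Assumption: "high-definition" means 1920 x 1080; the article does not specify.
HD_WIDTH, HD_HEIGHT = 1920, 1080

MICRO_CAMERAS = 98                      # from the article
PIXELS_PER_MICRO_CAMERA = 10_000_000    # 10 megapixels each, from the article


def raw_sensor_pixels(cameras: int, pixels_each: int) -> int:
    """Total pixels across all micro-cameras, ignoring overlap between them."""
    return cameras * pixels_each


def hd_wall_pixels(screens: int) -> int:
    """Total pixels on a wall of HD screens (1920 x 1080 assumed)."""
    return screens * HD_WIDTH * HD_HEIGHT


if __name__ == "__main__":
    sensor = raw_sensor_pixels(MICRO_CAMERAS, PIXELS_PER_MICRO_CAMERA)
    wall = hd_wall_pixels(1000)
    print(f"98 micro-cameras: {sensor / 1e9:.2f} gigapixels")
    print(f"1,000 HD screens: {wall / 1e9:.2f} gigapixels")
```

Under these assumptions the raw sensor count comes to just under a billion pixels, and a wall of 1,000 HD screens to roughly two billion, so both figures sit in the gigapixel range the article describes.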

The corrections are made possible by eight graphical processing units working in parallel. Breaking the problem up this way allows more complex techniques to be used to correct for optical aberrations, says Illah Nourbakhsh, a lead researcher of a similar project, called Gigapan, at Carnegie Mellon University.

Eventually, as computer processing power improves, the hardware needed for such a camera should shrink. Portable gigapixel resolution could be useful in a number of ways. For example, additional pixels already help with image stabilization. "Also, if you increase the resolution, you increase the chances of automated recognition and artificial intelligence systems being able to accurately recognize things in the world," Nourbakhsh says.

The project is described in this week's issue of the journal Nature. In one gigapixel image of Pungo Lake in the Pocosin Lakes National Wildlife Refuge, Brady's group shows that individual swans in the extreme distance can be resolved. The picture was taken using a prototype camera capable of capturing and processing an entire image in just 18 seconds.

As graphical processors improve, so too will the speed of the camera, says Brady. And although the prototype currently stands 75 centimeters tall (about the size of a television studio camera), the device's size is dictated in large part by the equipment needed to cool the circuit boards.

"In the near term, we think this concept of a micro-camera imaging system is the future of cameras," says Brady. By the end of next year, his group hopes to be able to produce and sell 100 units a year, each costing around $100,000. This is comparable to the cost of a broadcast TV camera, he says.

Gigapixel cameras could eventually allow events to be covered in new ways. "Rather than showing a camera angle that the producer lets you see, the viewer will be able to see anything in the scene that they want," Brady says.

Friday, June 15. 2012

-----

By Geoff Manaugh

I'm excited to be launching a new project called Venue, a 16-month collaboration with the Nevada Museum of Art's Center for Art + Environment, Columbia University GSAPP's Studio-X NYC, and Future Plural, the small publishing and curatorial group I'm a part of with Nicola Twilley.

We kick things off this Friday, June 8, with a launch event at the Nevada Museum of Art in downtown Reno, from 6-8pm; if you're near Reno, consider stopping by!

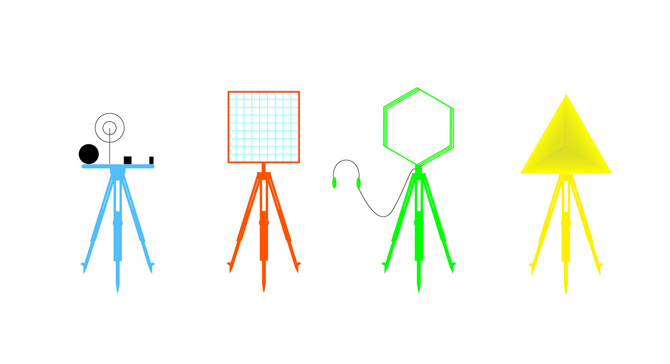

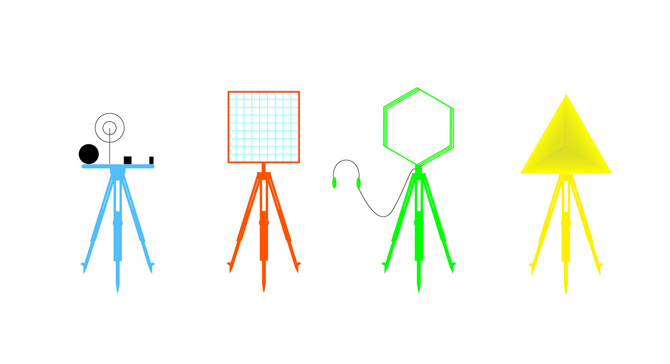

[Image: The tools and props of surveying; courtesy of the USGS].

In brief, Venue is equal parts surveying expedition and forward-operating landscape research base, a DIY interview booth and media rig that will pop up at sites across North America through September 2013.

Nicola Twilley and I will be traveling on and off, in a series of discontinuous trips, over the next 16 months, visiting a variety of sites including infrastructural landmarks, science labs, factories, film sets, archaeological excavations, art installations, university departments, design firms, National Parks, urban farms, corporate offices, studios, town halls, and other locations across North America, where we'll both record and broadcast original interviews, tours, and site visits. From architects to scientists and novelists to mayors, from police officers to civil engineers and athletes to artists, Venue’s interview archive will form a cumulative, participatory, and media-rich core sample of the greater North American landscape.

[Image: Understanding landscapes by way of strange devices; courtesy of the USGS].

While there will no doubt be regular updates here on BLDGBLOG, you can follow along, both online and off, by reading our latest dispatches, suggesting sites and people we should visit, and keeping an eye on our schedule (or signing up for our mailing list) to find out when we will be bringing Venue to a neighborhood near you. In addition, our best content will be syndicated on a dedicated channel online by The Atlantic, so keep your eye out for our first interviews or site visits—photos, short films, MP3s—as our travels get underway.

[Image: The Venue tripods, universal mounts for interchangeable devices; designed by Chris Woebken].

There's a lot more information available about the project at the Venue website, including some early images depicting the incredible array of devices designed for us by Chris Woebken, a gorgeous hand-made interview box custom-fabricated for Venue by Semigood, and the "Descriptive Camera" that we'll have on the first leg of our trip. So by all means stop by and see the ideas behind the project, from conceptual inspiration taken from historical survey expeditions to Ant Farm's Media Van.

[Image: The Venue box takes shape, custom-designed by Semigood].

And hopefully somewhere down the line, we can meet many of you in person.

Personal comment:

An interesting publishing project/initiative by Nicola Twilley and Geoff Manaugh, to follow and/or participate in.

Tuesday, April 24. 2012

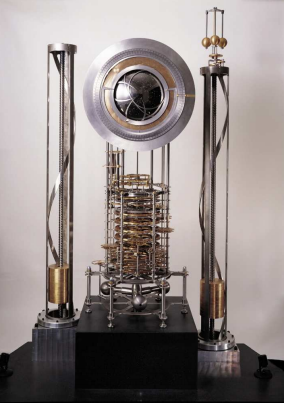

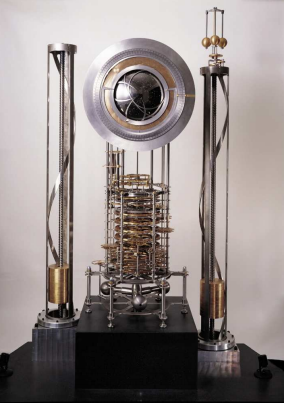

Ten thousand years is about the age of civilisation. Archaeologists have a few relics that have spanned this period, mostly stone tools and works of art. But most evidence of the earliest civilisations has long crumbled into dust.

So the plan to build a mechanical clock that will keep time for the next ten thousand years is hugely ambitious. And yet that is exactly the goal of the Long Now Foundation, an organisation set up to promote long term thinking and responsibility.

These guys are currently building a prototype of their clock inside a mountain in Texas near the border with New Mexico. And today, Danny Hillis at the foundation and a few pals outline the way in which it will keep time.

Keeping time over such a period generates numerous challenges. First is ensuring the mechanical integrity of the machinery, which they achieve with long-lasting materials such as titanium, ceramics, quartz and sapphire.

Just as important is the environment: a series of tunnels carved into a mountainside in the high desert. Inside the mountain, the conditions are dry and the temperature constant.

Outside, however, the temperature varies between desert extremes of hot and freezing. Hillis and co plan to exploit this temperature difference to power the clock using metal rods that change in length as the temperature varies. Human visitors will also be able to wind it.

As for time, the heart of the clock is a titanium pendulum with a 10-second cycle. Pendulum Time advances one unit once every 30 cycles, in other words every five minutes.
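The arithmetic behind Pendulum Time can be checked with a short sketch. It uses only the two figures quoted above (a 10-second cycle and 30 cycles per unit); the mean year length of 365.2425 days is an assumption, used only to get a rough total for ten thousand years.

```python
# Pendulum Time arithmetic, using only the figures quoted in the text.
PENDULUM_CYCLE_SECONDS = 10   # one full pendulum cycle
CYCLES_PER_UNIT = 30          # cycles per Pendulum Time unit

# Assumption: Gregorian mean year length, used only for a rough long-term total.
DAYS_PER_YEAR = 365.2425


def seconds_per_unit() -> int:
    """Length of one Pendulum Time unit in seconds."""
    return PENDULUM_CYCLE_SECONDS * CYCLES_PER_UNIT


def units_per_day() -> int:
    """Number of Pendulum Time units in a 24-hour day."""
    return 24 * 60 * 60 // seconds_per_unit()


def units_in_years(years: int) -> int:
    """Approximate number of units over a span of years (whole days only)."""
    days = round(DAYS_PER_YEAR * years)
    return units_per_day() * days


if __name__ == "__main__":
    print(seconds_per_unit() // 60, "minutes per unit")
    print(units_per_day(), "units per day")
    print(units_in_years(10_000), "units in ten thousand years")
```

One unit is 300 seconds, which matches the five minutes stated in the text, and gives 288 units per day.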

The rest of the clock is a digital computer using Pendulum Time as an input and generating analogue outputs in the form of various displays of time.

The mechanism first calculates Uncorrected Solar Time using a straightforward equation of time, which has been precomputed for the next ten thousand years to within the accuracy of the Earth's variable rotation.

Next, the clock calculates Solar Time using a correction provided by a solar synchroniser: a vertical chamber that heats up when the sun is directly overhead and shines into it. This can add or take away a tick if the clock is out of sync. "The correction is positive if the Sun is detected before the just-before-noon tick, and negative if it is detected after the just after-noon tick," say Hillis and co.

An obvious problem occurs if the Sun is obscured for long periods of time, perhaps because of dust from a volcanic eruption. In that case, the clock will keep uncorrected solar time until the Sun becomes visible again. Then it can correct the time in 5-minute steps each sunny day.

It is this mechanism that corrects for any changes in the Earth's rotation, caused by climate change, shifts in the Earth's crust and so on. An accumulation of ice at the poles will cause the rotation to speed up, for example.

But provided the clock has not drifted by more than 12 hours, it should return to the right time. "This allows the clock to successfully recover after more than a century of overcast skies," say Hillis and co.
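The recovery behaviour described above can be illustrated with a small simulation. This is only a sketch under stated assumptions, not the clock's actual mechanism: the function names are hypothetical, the drift is modelled as a whole number of 5-minute ticks, and the sign convention (positive means the Sun is detected early, so the clock is behind) is chosen for illustration.

```python
# Toy model of the solar synchroniser: one tick of correction per sunny day.
TICK_MINUTES = 5            # one correction step, from the text
MAX_DRIFT_MINUTES = 12 * 60  # recovery limit stated in the text


def daily_correction(offset_ticks: int) -> int:
    """Correction applied at one sunny noon: +1, -1 or 0 ticks.

    Assumption: offset_ticks > 0 means the Sun is detected before the
    just-before-noon tick (clock behind); < 0 means it is detected after
    the just-after-noon tick (clock ahead).
    """
    if offset_ticks > 0:
        return 1
    if offset_ticks < 0:
        return -1
    return 0


def sunny_days_to_resync(drift_minutes: int) -> int:
    """Count the sunny days needed to remove a given drift, one tick per day."""
    if abs(drift_minutes) > MAX_DRIFT_MINUTES:
        raise ValueError("drift beyond 12 hours is outside the stated recovery range")
    sign = 1 if drift_minutes >= 0 else -1
    offset = sign * (abs(drift_minutes) // TICK_MINUTES)
    days = 0
    while offset != 0:
        offset -= daily_correction(offset)
        days += 1
    return days


if __name__ == "__main__":
    print(sunny_days_to_resync(12 * 60), "sunny days to recover from a 12-hour drift")
```

In this model, the worst recoverable case of 12 hours corresponds to 144 ticks, so the clock would need 144 sunny days to return to the right time.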

The clock uses Corrected Solar Time to generate a Displayed Solar Time that visitors will see. It also calculates Orrery Time, a display of the position and phase of the Moon, the tropical year, the sidereal day, orbits of the visible planets, and the precession of the Earth's axis.

The plan is to use the lessons from building this prototype to create another clock in a mountain in Nevada. After that, the creators hope that other groups around the world will make their own millennium clocks, thereby spreading the pattern of long term thinking.

A profoundly impressive project.

Personal comment:

Obviously we are interested in "dimensions" and the way to architecture or interact with them. In this case, it is particularly interesting to underline the relationship between the construction of the clock (its materials, architecture) and the environment (the tunnels) that could/should last unchanged for 10'000 years.

Thursday, April 12. 2012

By fabric | ch

-----

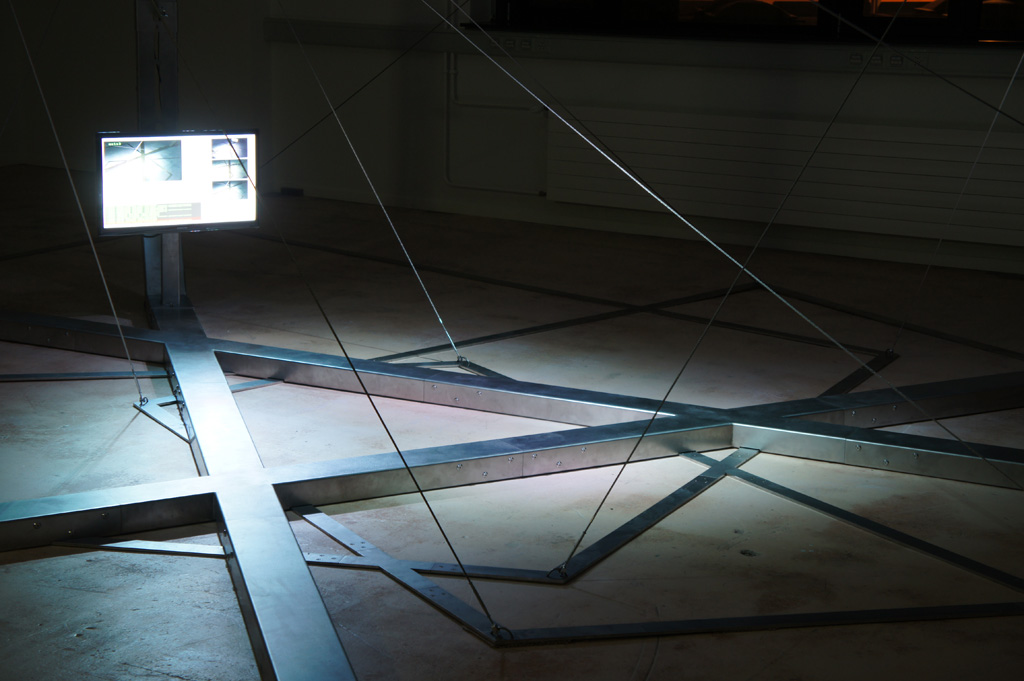

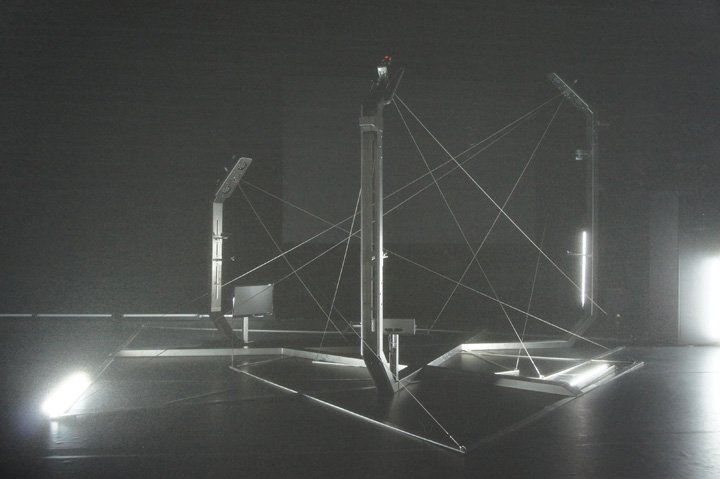

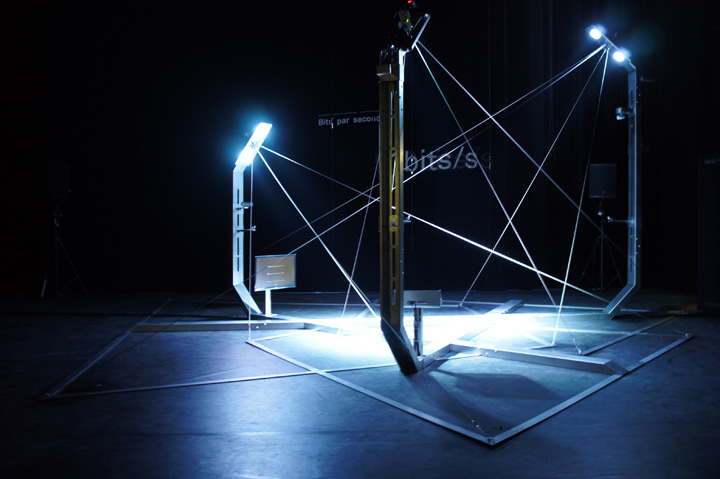

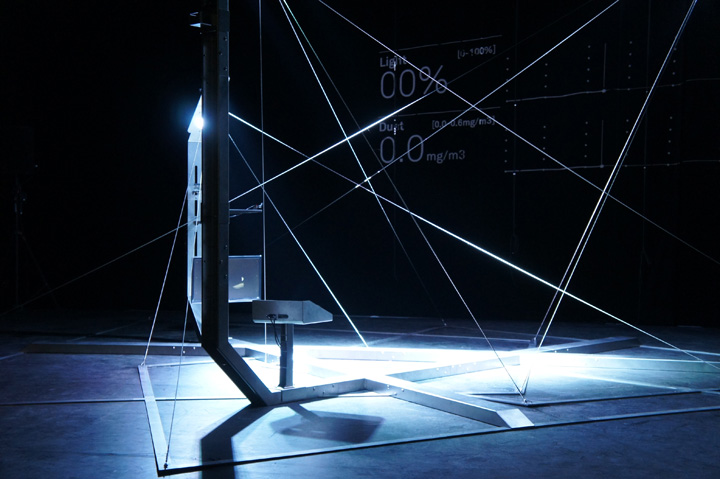

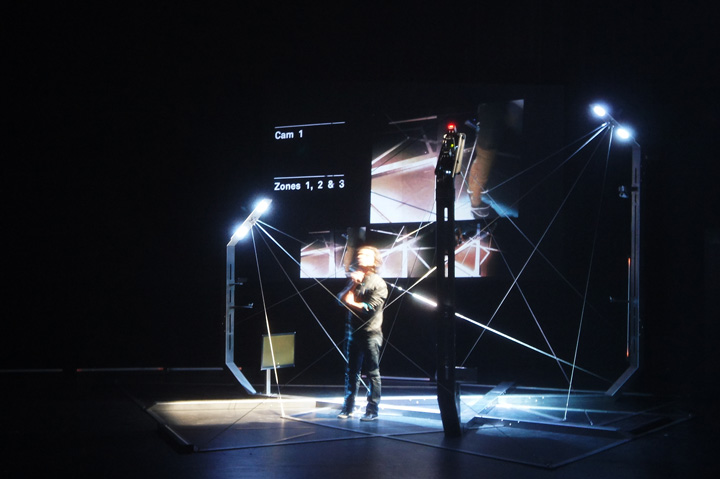

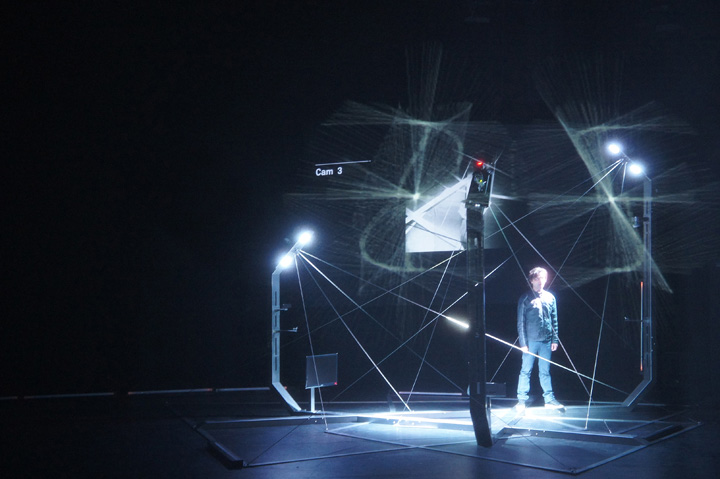

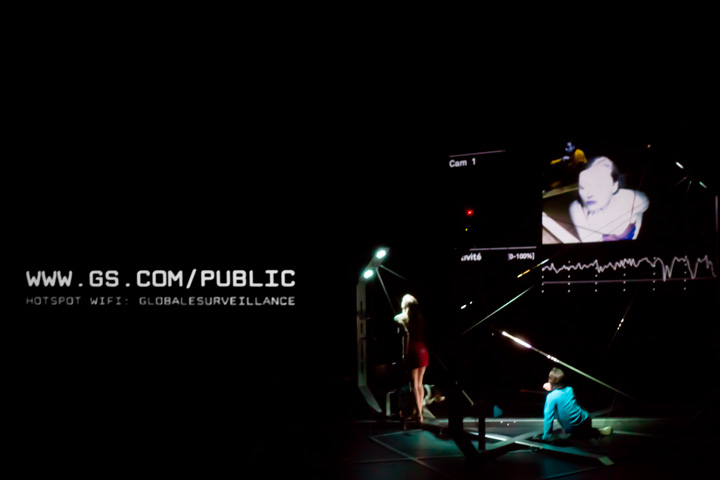

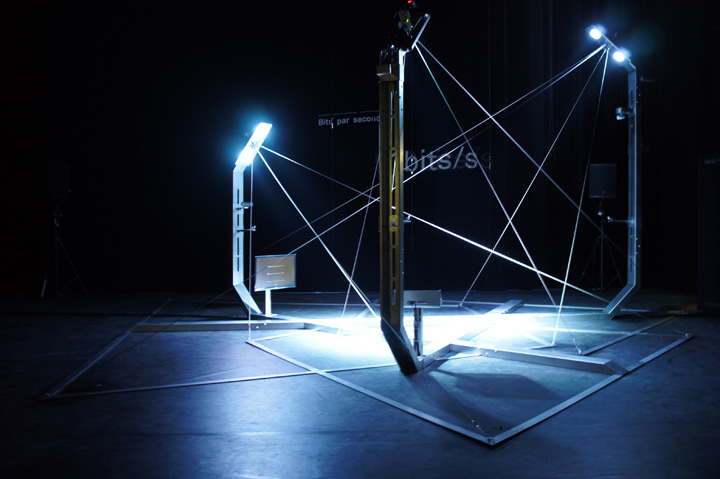

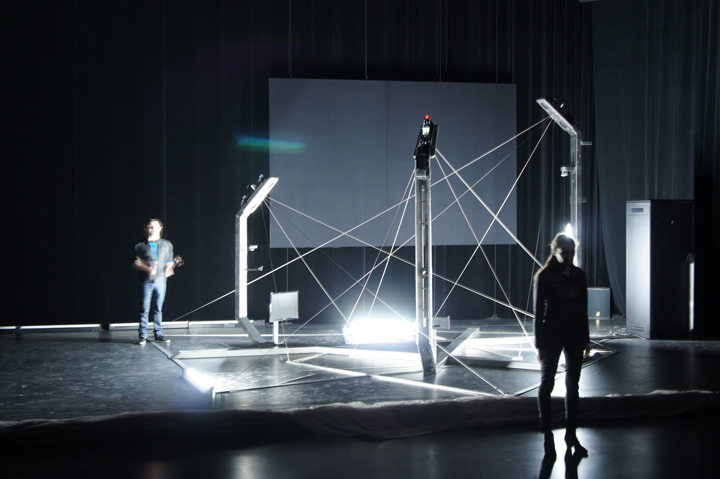

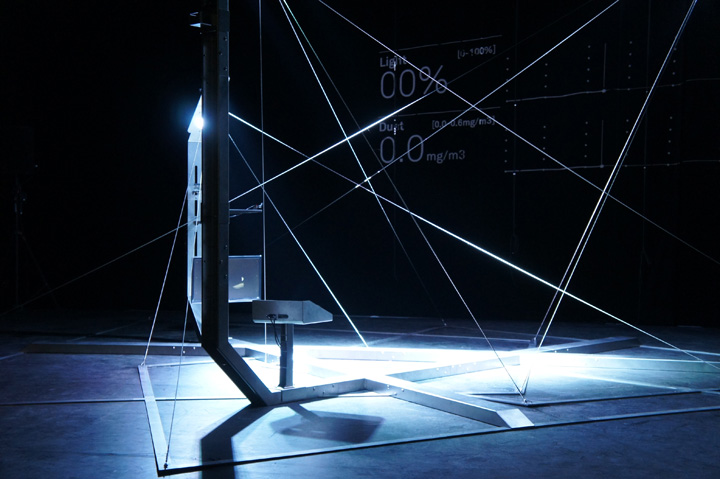

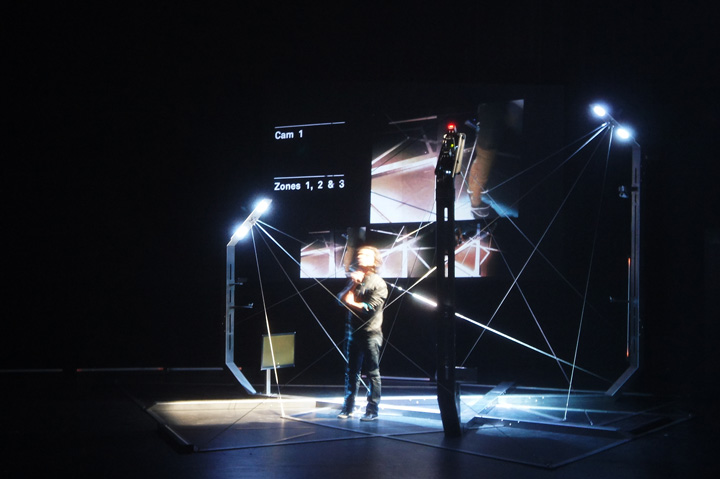

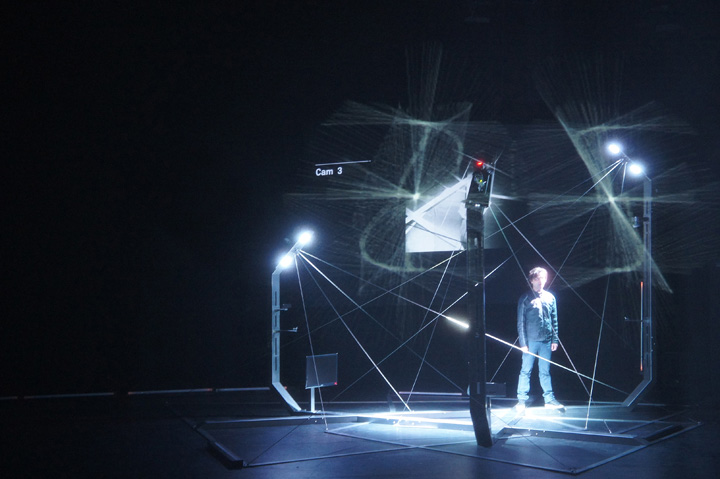

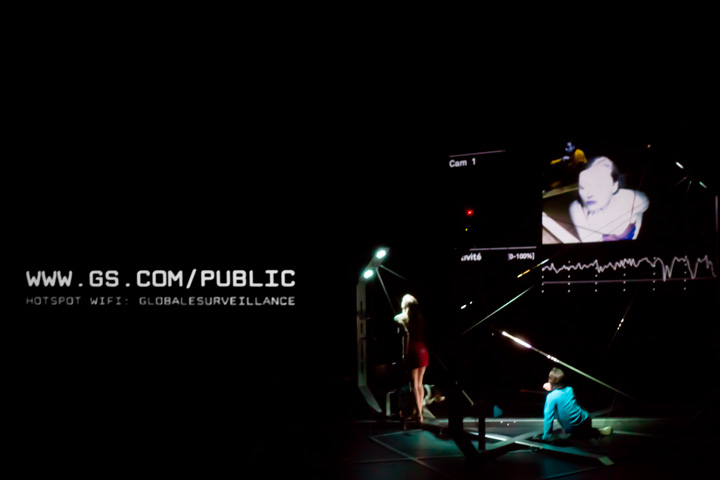

Last week I published a post about Paranoid Shelter, a recent architectural installation by fabric | ch that deals with "smartness", sensing and surveillance. As explained then, this new work can be displayed on its own, as a study of the "contemporary shelter" that articulates the notion of the (nearly fantasized) shelter with that of surveillance. But this installation or "architectural device" was also created with a collaborative context in mind.

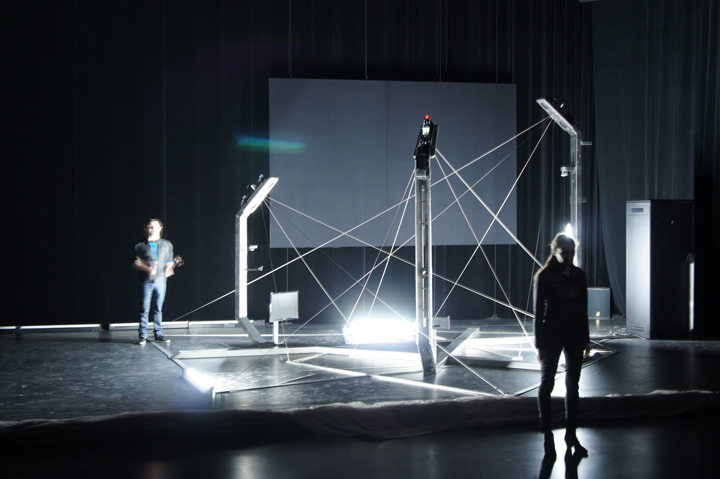

This collaboration, or at least the first one, was for a theatrical piece, Globale Surveillance, for which we worked on the scenography and co-initiated the project. It is a performance-like theatrical piece staged by Eric Sadin, a French essayist and writer, based on two of his books: Globale Paranoïa (Éditions Les Petits Matins, 2009), fiction, and Surveillance Globale (Climats/Flammarion, 2009), theory. NOhista (sound and video design) and Abigail Fowler (light design) joined the team to finalize the project, as did, of course, the two comedians, Laure Wolf and Gurshad Shaheman.

Rehearsals and the opening of Globale Surveillance took place last February and March in Caen (France), at the Comédie de Caen-Centre Dramatique National de Normandie (CDN). The play should travel to Paris for the new theater season (12-13) and then hopefully to other cities in France and Switzerland, before eventually travelling further afield, in English.

GLOBALE SURVEILLANCE

About the play (notice from the Comédie de Caen):

We live in a world under surveillance; no one would dispute that point anymore. But what configurations do the new devices of control take, in what forms, and in which way are they different from the practices of the last century? How do they alter our relationship to the world and to others? Do they reach the point of threatening the right to privacy? Globale Surveillance sets up a hyper-monitored zone, within which actors and spectators are subject to numerous traceability procedures, in this case made visible, in contrast to the many frightening mechanisms at work every day that remain invisible.

Text & staging : Éric Sadin

Scenic architecture, responsive spatial device : fabric | ch

Sound & video design : Nohista

Light design : Abigail Fowler

Comedians : Laure Wolf & Gurshad Shaheman

Paranoid Shelter on stage during the play with the two comedians (Laure Wolf, Gurshad Shaheman), CDN (Centre Dramatique National) / ESAM of Caen in France.

GLOBALE SURVEILLANCE from Bruno Ribeiro on Vimeo.

A compressed preview and short of the play by NOhista.

-

Pictures: Nicolas Besson, Patrick Keller, Alban Van Wassenhove

-

Co-production: Comédie de Caen-Centre Dramatique National de Normandie (CDN), France; Ecole supérieure d'arts et médias de Caen (ESAM), France.

With the support of: DICRéAM, France; Office Fédérale de la Culture, Switzerland; Pro Helvetia, Switzerland; Ville de Lausanne, Switzerland; and Etat de Vaud, Switzerland.

Wednesday, April 04. 2012

By fabric | ch

-----

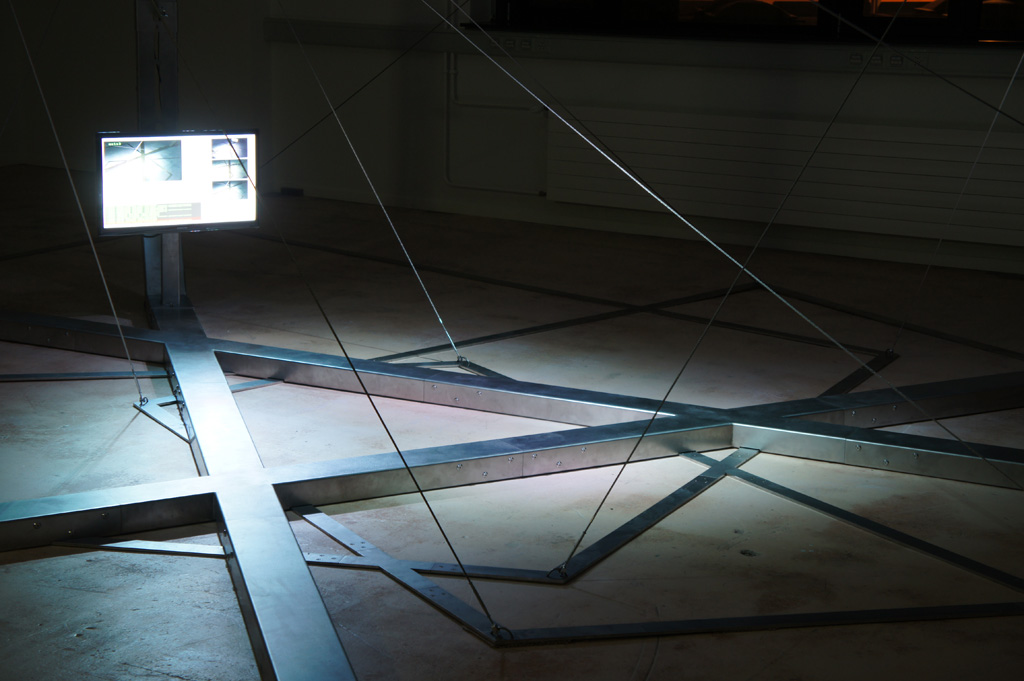

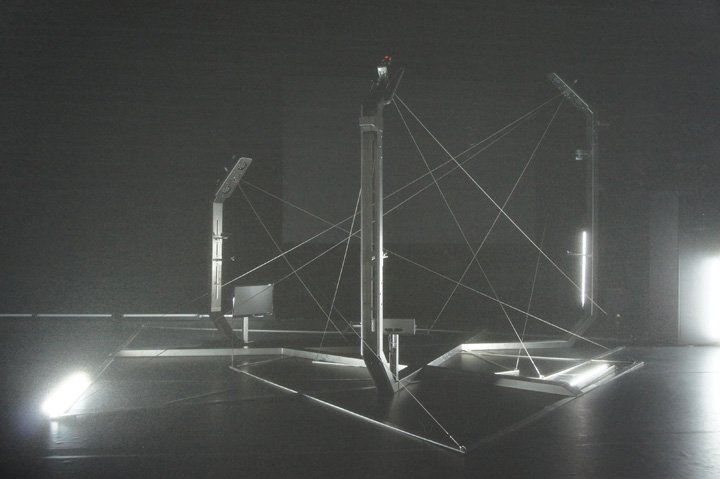

Paranoid Shelter, beta testing, residency at the EPFL-ECAL Lab (late summer and winter 2011)

PARANOID SHELTER

Paranoid Shelter is a recent installation / architectural device that fabric | ch finalized late in 2011 after a six-month residency at the EPFL-ECAL Lab in Renens (Switzerland). It was realized with the support of Pro Helvetia, the OFC, the City of Lausanne and the State of Vaud. It was initiated and first presented as sketches back in 2008 (!), in the context of a colloquium about surveillance at the Palais de Tokyo in Paris.

Created in the context of a theatrical collaboration with French writer and essayist Eric Sadin around his books about contemporary surveillance (Surveillance globale and Globale paranoïa, both published in 2009), Paranoid Shelter revisits the old figure/myth of the architectural shelter, articulated by the use of surveillance technologies as building blocks.

In doing so, it states that contemporary surveillance is in a “de facto” relation with the old myth of the shelter and that it can be considered a contemporary way to build it, yet in a totally different and somewhat problematic way, because it usually mixes public and private interests, freedom and penalty or censorship, and remains unclear.

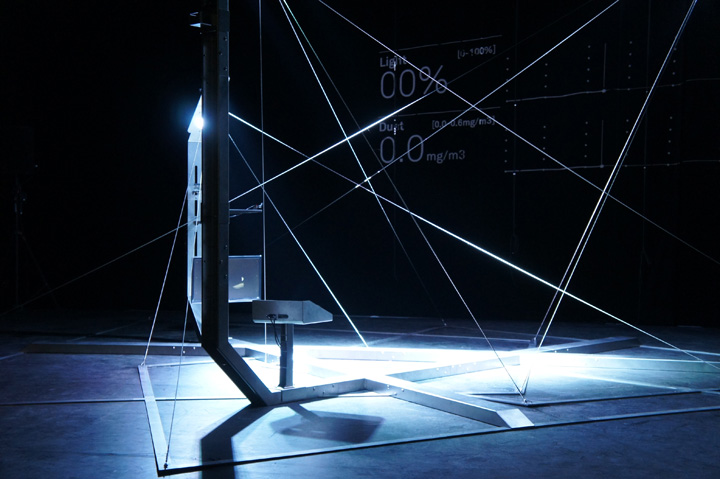

Therefore, filled with monitoring technologies and exploring their formal potential (the main formal aspects are “autological”: the materialisation of the cones of vision from the surveillance cameras fixed on a frame that evokes public infrastructure) as well as their functional incidence (a tele-"neo-nomad" condition?), the project also comments on "smartness", as Paranoid Shelter is composed of the same ingredients: active, mediated, monitored or scrutinized, possibly robotized and sometimes "intelligent". Consequently, it points out the links between “smartness” and surveillance that can’t be undervalued (what is the status of the data that are collected? what is the inner nature of the code and software that drive the behaviours of the architecture?).

"Smartness" is undoubtedly a coming way to architecture space largely debated in specialized circles. Will this be the work of the architect or the engineer is still to be decided (following the heritage of architectural critic from the 60ies Reyner Banham, we state it should mainly be designed by the architect and implemeted in close relation with the engineer). It is, along with parallel questions about sustainability, digital design & fabrication, code, weather, robotics and possibly "geo-engineering", one of the hot topics within the recent architectural debate that will drive the evolution of the discipline.

As with surveillance, "smartness" will generate data; it will leave "traces" and "tracks". This inevitably raises the same issues as those related to the privacy (or not) of personal data. The statuses of data, codes and technologies are, in fact, a real issue for society in general and for architecture in particular. To envision the question, just think about a public space monitored by a private company that owns and uses the data for marketing purposes (we all know this already) or a private home where all the data and images are openly released on the networks (next frontier for some hackers). Worse: a state that sells its monitored but non-nominal public information to companies for them to build commercial products. We are confronted with a confusion of genres, at best a hybridization.

Paranoid Shelter articulates and objectifies the idea of surveillance/smartness as one of the main vectors of transformation in our contemporary space: while responding to none of the traditional ways to describe a shelter (Paranoid Shelter doesn't protect you from rain or cold, nor does it really protect you from any physical danger, though it can maybe anticipate it), while being nearly totally transparent and mostly immaterial, it is nonetheless totally different to stand inside its layered frames and limits than to stand outside them. If an outside does, indeed, still exist. The space is changed and architected by means of invisible codes and behaviours, and is mediated through the channels of technologies and networks.

With Paranoid Shelter, the code is obviously an integral part of the architecture and its status tries to be clear: custom created by the design team (architects and scientists) for the project along with its physical parts, the code is in this case part of the structure and the author’s work. The raw data, generated by the public and driving the reactivity of the spatial device, are public and fully open to any other public use. It is a shared common space where the infrastructure is made available to the public but doesn’t belong to them; the space and data do.

Paranoid Shelter demonstrates the fact that a piece of code and technology should indeed be considered as architecture, that it transforms the nature of space and our experience of it, like previous technologies did (electricity, artificial lighting, heating, air conditioning, elevators, etc.), but also like walls and windows can do. It suggests new ways of living too, nearly tribal within an equally new, partly immaterial, open and mediated shelter.

Paranoid Shelter can be displayed either as an architectural installation of its own (museum), where its programs let it behave in an autonomous and "intelligent" way, or as a scenographic architecture, on stage (theatre) in the context of collaborations and where it is driven by different programs that are (mostly) remotely controlled.

Paranoid Shelter's building blocks are (hardware):

- 3 network (surveillance) cameras, w. night shot functionalities

- 1 network (surveillance) PTZ (Pan Tilt Zoom) camera

- 3 embedded microphones

- 2 Libelium wireless sensor network boards

- 3 temperature sensors

- 2 light sensors

- 1 humidity sensor

- 1 pressure sensor

- 1 CO2 level sensor

- 1 O2 level sensor

- 1 pollution sensor

- 1 dust sensor

- 1 dedicated wifi access point ("paranoid hotspot")

- 6 dmx controllable led lights

- 1 LCD LED screen (1920x1080), 2nd optional.

- (optional) 1 beamer 1280x800, 4000lm.

- 1 air conditioned servers' cabinet

- servers, lanboxes

- a set of inox cables

- an overall inox frame

- some books

- some blankets

Paranoid Shelter's other building blocks are (custom software, code & data):

- quantity of movement & camera tracking

- areas' occupation, heat zones

- moving objects' location

- presence/absence counting / per overall object and surrounding / per sub zones

- normal/abnormal behaviours

- sound patterns recognition, "gunshot tracking"

- noise level

- sound location

- atmosphere monitoring

- normal/abnormal atmosphere

- wifi monitoring ("snooping")

- "intelligent" behaviours, set of rules, scripts and codes

- spatial uses' data

Pictures: Patrick Keller, Nicolas Besson, Alban Van Wassenhove

On stage with Laure Wolf + Gurshad Shaheman (actors). Used as a scenographic space/device, in the context of Eric Sadin's theatrical, Globale Surveillance. CDN (Centre Dramatique National) / ESAM of Caen in France. March 2012.

The theatrical deals with societal, spatial and theoretical questions within a "global surveillance" context (networks, communications, marketing, health, economy, (state) security).

-

Team:

Project: Patrick Keller, Christian Babski, Christophe Guignard, Stéphane Carion, Nicolas Besson, Leticia Carmo

Software development: fabric | ch, Computed·By

Digital fabrication, metal: Metoforme SA

Friday, February 24. 2012

Nick Bilton at the Times’s Bits Blog, hardly a site for speculation on vaporware, tells us to expect something remarkable from Google by the year’s end: heads-up display glasses “that will be able to stream information to the wearer’s eyeballs in real time.”

That’s right. Google’s going to turn us all into the Terminator. Minus the wanton killing, of course.

The Times post builds on the reporting of Seth Weintraub, who blogs at 9 to 5 Google. He had written about the glasses project in December, as well as this month. Weintraub had one tipster, who told him the glasses would look something like Oakley Thumps. Bilton cites “several Google employees familiar with the project,” who said the devices would cost between $250 and $600. The device is reportedly being built in Google’s “X offices,” a top-secret lab that is nonetheless not-top-secret-enough that you and I and other readers of the Times know about it. (X is a favored letter for Google of late, when it comes to blue sky projects.)

A few other details about the glasses that have emerged from either Bilton or Weintraub: they would be Android-based and feature a small screen that sits inches from the eye. They’d have access to a 3G or 4G network, and would have motion and GPS sensors. And, in wild, Terminator style, the glasses would even have a low-res camera “that will be able to monitor the world in real time and overlay information about locations, surrounding buildings and friends who might be nearby,” per Bilton. Google co-founder Sergey Brin is reportedly serving as a leader on the project, along with Steve Lee, who made Latitude, Google’s mapping software.

Though reportedly arriving for sale in 2012, the glasses may never reach a mass market. Google is said to be exploring ways to monetize the glasses should consumers take a liking to them. “If consumers take to the glasses when they are released later this year, then Google will explore possible revenue streams,” writes Bilton.

Google isn’t the first to dabble in the idea of heads-up display glasses. Way back in 2002, in fact, we wrote about how electronics could enable augmented reality glasses for soldiers. Though its ambitions are much more modest--hardly anything to hold a candle to The Terminator--a company called 4iiii Innovations has made some basic heads-up display glasses for athletes wanting to monitor their progress. And two years ago, TR took a pair of $2,000 augmented reality glasses from Vuzix for a spin, declaring them “dazzling”--but still wondering, “who’ll wear them?”

I’ve written before that smartwatches could represent a frontier of smartness-on-your-person. “They stand to transform your wrist into something akin to (if a wee bit short of) a heads-up display,” was how I put it. If the information Bilton and Weintraub have on Google is sound, I may have to dial back my enthusiasm on smartwatches--or at least stop likening them to heads-up displays, once the real thing exists.

Then again, smartwatches may still occupy a middle ground between utility and style. On the one hand, Oakley Thump-style smartglasses would be extraordinarily useful, for some. On the other hand, they would also be--let's face it--irredeemably geeky. As Bilton writes, “The glasses are not designed to be worn constantly — although Google expects some of the nerdiest users will wear them a lot.”

If you thought your smartwatch-sporting friend was a geek, just wait till he's flanked by people playing cyborg with Google’s forthcoming technology.

Personal comment:

Well, I'm not so sure this is good news... (in fact I don't think it is, and I don't like Oakleys..., unless it were an open project / open data with a more "design noir" approach), but Terminators will certainly be happy!

Monday, January 09. 2012

Via MIT Technology Review

-----

New system detects emotions based on variables ranging from typing speeds to the weather.

By Duncan Graham-Rowe

Researchers at Samsung have developed a smart phone that can detect people's emotions. Rather than relying on specialized sensors or cameras, the phone infers a user's emotional state based on how he's using the phone.

For example, it monitors certain inputs, such as the speed at which a user types, how often the "backspace" or "special symbol" buttons are pressed, and how much the device shakes. These measures let the phone postulate whether the user is happy, sad, surprised, fearful, angry, or disgusted, says Hosub Lee, a researcher with Samsung Electronics and the Samsung Advanced Institute of Technology's Intelligence Group, in South Korea. Lee led the work on the new system. He says that such inputs may seem to have little to do with emotions, but there are subtle correlations between these behaviors and one's mental state, which the software's machine-learning algorithms can detect with an accuracy of 67.5 percent.

The prototype system, to be presented in Las Vegas next week at the Consumer Communications and Networking Conference, is designed to work as part of a Twitter client on an Android-based Samsung Galaxy S II. It enables people in a social network to view symbols alongside tweets that indicate that person's emotional state. But there are many more potential applications, says Lee. The system could trigger different ringtones on a phone to convey the caller's emotional state or cheer up someone who's feeling low. "The smart phone might show a funny cartoon to make the user feel better," he says.

Further down the line, this sort of emotion detection is likely to have a broader appeal, says Lee. "Emotion recognition technology will be an entry point for elaborate context-aware systems for future consumer electronics devices or services," he says. "If we know the emotion of each user, we can provide more personalized services."

Samsung's system has to be trained to work with each individual user. During this stage, whenever the user tweets something, the system records a number of easily obtained variables, including actions that might reflect the user's emotional state, as well as contextual cues, such as the weather or lighting conditions, that can affect mood, says Lee. The subject also records his or her emotion at the time of each tweet. This is all fed into a type of probabilistic machine-learning algorithm known as a Bayesian network, which analyzes the data to identify correlations between different emotions and the user's behavior and context.
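To make the training loop described above more concrete, here is a minimal sketch of a naive Bayes classifier, the simplest kind of Bayesian network (every feature is assumed independent given the emotion). The article does not describe the structure of Samsung's network or its exact features, so the class name, feature names and training records below are all invented for illustration.

```python
import math
from collections import Counter, defaultdict


class NaiveBayesEmotion:
    """Toy per-user emotion classifier over categorical features."""

    def __init__(self, smoothing: float = 1.0):
        self.smoothing = smoothing                   # Laplace smoothing constant
        self.label_counts = Counter()                # emotion -> number of tweets
        self.feature_counts = defaultdict(Counter)   # (emotion, feature) -> value counts
        self.feature_values = defaultdict(set)       # feature -> values seen so far

    def train(self, features: dict, label: str) -> None:
        """Record one tweet: observed features plus the self-reported emotion."""
        self.label_counts[label] += 1
        for name, value in features.items():
            self.feature_counts[(label, name)][value] += 1
            self.feature_values[name].add(value)

    def predict(self, features: dict) -> str:
        """Return the emotion with the highest posterior log-probability."""
        total = sum(self.label_counts.values())
        best_label, best_score = None, -math.inf
        for label, count in self.label_counts.items():
            score = math.log(count / total)
            for name, value in features.items():
                seen = self.feature_counts[(label, name)][value]
                n_values = len(self.feature_values[name]) or 1
                score += math.log(
                    (seen + self.smoothing) / (count + self.smoothing * n_values)
                )
            if score > best_score:
                best_label, best_score = label, score
        return best_label


def build_demo_model() -> NaiveBayesEmotion:
    """Train on a handful of hypothetical records (not real data)."""
    model = NaiveBayesEmotion()
    records = [
        ({"typing": "fast", "backspace": "rare", "weather": "sunny"}, "happy"),
        ({"typing": "fast", "backspace": "rare", "weather": "cloudy"}, "happy"),
        ({"typing": "slow", "backspace": "often", "weather": "rainy"}, "sad"),
        ({"typing": "slow", "backspace": "rare", "weather": "cloudy"}, "sad"),
        ({"typing": "fast", "backspace": "often", "weather": "sunny"}, "angry"),
    ]
    for features, label in records:
        model.train(features, label)
    return model


if __name__ == "__main__":
    model = build_demo_model()
    print(model.predict({"typing": "fast", "backspace": "rare", "weather": "sunny"}))
```

A full Bayesian network could also model dependencies between the inputs themselves (for example, between weather and lighting), which this simplified version deliberately ignores.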

The accuracy is still pretty low, says Lee, but then the technology is still at a very early experimental stage, and has only been tested using inputs from a single user. Samsung won't say whether it plans to commercialize this technology, but Lee says that with more training data, the process can be greatly improved. "Through this, we will be able to discover new features related to emotional states of users or ways to predict other affective phenomena like mood, personality, or attitude of users," he says.

Reading emotion indirectly through normal cell phone use and context is a novel approach, and, despite the low accuracy, one worth pursuing, says Rosalind Picard, founder and director of MIT's Affective Computing Research Group, and cofounder of Affectiva, which last year launched a commercial product to detect human emotions. "There is a huge growing market for technology that can help businesses show higher respect for customer feelings," she says. "Recognizing when the customer is interested or bored, stressed, confused, or delighted is a vital first step for treating customers with respect," she says.

Copyright Technology Review 2012.

Personal comment:

While we can doubt a little the accuracy of so few inputs in determining someone's emotions, context-aware devices (a step further from "geography"-aware) seem a way to go for technology manufacturers.

Transposed into the field of environment design and architecture (sensors, probes), it could lead to some designs where there is a sort of collaboration between the environment and its users (participants? ecology of co-evolutive functions?). We mentioned this in a previous post (The measured life): "could we think of a data based architecture (open data from health and Real Life monitoring --in addition to environmental sensors and online data--) that would collaborate with those health inputs?" and now with those emotional inputs? A sensitive environment?

We worked on something related a few years ago within the frame of a research project between EPFL (Swiss Institute of Technology, Lausanne) and ECAL (University of Art and Design, Lausanne). It was a robotized proof of concept at a small scale rather than a full environment, though: The Rolling Microfunctions. The idea in this case was not that "function follows context", but rather that "function understands context and triggers open/sometimes disruptive patterns of usage, based on algorithmic and semi-autonomous rules"... Functions as a creative environment. Sort of... The result was a bit disappointing of course, but it was the idea behind it that we thought was interesting.

And of course, all these projects should be backed up by a very rigorous policy about the data of users, functions and spaces: they belong either to the user (privacy), to the public (open data) or, why not, to the space..., but not to a company (googlacy, facebookacy, twitteracy, etc.) unless the space belongs to it, as in a shop (see "La ville franchisée", by David Mangin).

Thursday, September 22. 2011

Via MIT Technology Review

-----

Could Abraham Lincoln have become president of the United States in a world in which poor children lack access to physical books?

By Christopher Mims

Andrew Carnegie's decision to fund free libraries at the turn of the last century -- like this one in Houston -- was inspired by the belief that knowledge and education are public goods

Today Amazon announced that it is finally rolling out Kindle-compatible ebooks to public libraries in the U.S., a much-needed evolution of the dominant e-reading platform. But there's a larger problem that this development fails to address, and it's an issue exacerbated by every part of Amazon's business model.

Access to knowledge has long been seen as vital to the public interest -- literally, in economic parlance, a "public good" -- which is why libraries have always been supported through taxes and philanthropy. (Carnegie's decision to fund 2,509 of them around the turn of the century being an especially notable example of this.)

I challenge anyone reading this to recall his or her earliest experiences with books -- nearly all of which, I'm willing to bet, were second-hand, passed on by family members or purchased in that condition. Now consider that the eBook completely eliminates both the secondary book market and any control that libraries -- i.e. the public -- have over the copies of a text they have purchased.

Except under limited circumstances, eBooks cannot be loaned or resold. They cannot be gifted, nor discovered on a trip through the shelves of a friend or the local library. They cannot be re-bound and, unlike all the rediscovered works that literally gave birth to the Renaissance, they will not last for centuries. Indeed, publishers are already limiting the number of times a library can loan out an eBook to 26.

If the transition to eBooks is complete -- and with libraries being among the most significant buyers of books, it now seems inevitable -- the flexibility of book ownership will be gone forever. Knowledge, in as much as books represent it, will belong to someone else.

Worse yet, there is the problem of the e-reader itself. This issue may be resolved by falling prices of e-readers, but there remains the possibility that the demands of profitability will drive makers of e-readers to simply set a floor on the price they're willing to charge for one and attempt to continually innovate toward tablet-like functionality in order to justify that price.

Unlike books, which are one of the few media that do not require a secondary external device for playback, e-books put additional barriers between readers and knowledge. Some of those barriers, as I've mentioned, consist of Digital Rights Management and other attempts to use intellectual property laws as a kind of rent-seeking, but others are more subtle.

One in five children in the U.S. lives below the poverty line, and those numbers are likely to increase as the world economy continues to work through a painful de-leveraging of accrued debt. In the past, the only thing a child needed to read a book was basic literacy, something that our public education system in theory still provides.

Imagine Abraham Lincoln, born in a log cabin, raised in poverty, self-taught from a small cache of books, being stymied in his early education by the lack of an e-reader. And there are countless other examples -- in his biography, Bob Dylan recounts spending his first, penniless days in New York City lost in a friend's library of classics, reading and re-reading the greatest poets of history as he found his own voice.

Sure, these are extreme examples, but it is undeniable that books have a democratizing effect on learning. They are inherently amenable to the frictionless dissemination of information. Durable and cheap to produce, to the point of disposability, their abundance, which we currently take for granted, has been a constant and invisible force for the creation of an informed citizenry.

So the question becomes: Do we want books to become subject to the 'digital divide?' Is that really wise, given the trajectory of the 21st century?

Copyright Technology Review 2011.

Monday, June 20. 2011

by nospam@example.com (Christian Babski)

Via slashgear via Computed·Blg

-----

Microsoft’s Kinect for Windows SDK will be released in beta form this week, according to Microsoft Spain president María Garaña, the toolkit allowing developers to use the motion-tracking hardware with PCs rather than the Xbox 360. Among the initially supported features, WinRumors reports, will be skeletal tracking for one or two people along with use of the four-microphone array.

That array is coupled with acoustic noise and echo cancellation, and can pinpoint which person in the field of view is speaking. Microsoft will also link it with the existing Windows speech recognition API, opening up the possibility of two users individually controlling a PC with their voice, and the system automatically recognizing which commands come from which person.
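As a rough illustration of how a sound direction might be matched to a tracked person, here is a minimal Python sketch. It does not use the Kinect SDK: the `TrackedPerson` type, the `attribute_speaker` function, the coordinate convention and the 10-degree tolerance are all assumptions made for this example, standing in for whatever the skeletal tracker and microphone array actually report.

```python
import math
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class TrackedPerson:
    """Hypothetical stand-in for one tracked skeleton."""
    person_id: int
    x: float  # metres to the right (+) or left (-) of the sensor axis
    z: float  # metres in front of the sensor (depth)


def bearing_deg(person: TrackedPerson) -> float:
    """Horizontal angle of the person as seen from the sensor, in degrees."""
    return math.degrees(math.atan2(person.x, person.z))


def attribute_speaker(
    people: List[TrackedPerson],
    source_angle_deg: float,
    tolerance_deg: float = 10.0,  # assumed threshold, not an SDK value
) -> Optional[int]:
    """Return the id of the person whose bearing is closest to the sound
    source angle, or None if nobody is within the tolerance."""
    best_id = None
    best_error = tolerance_deg
    for person in people:
        error = abs(bearing_deg(person) - source_angle_deg)
        if error <= best_error:
            best_id, best_error = person.person_id, error
    return best_id


if __name__ == "__main__":
    people = [TrackedPerson(1, -0.5, 2.0), TrackedPerson(2, 0.8, 2.0)]
    # A voice arriving from about 20 degrees to the right is attributed
    # to the person standing on that side.
    print(attribute_speaker(people, 20.0))
```

With two users, each recognized command could then be tagged with the returned id, which is the kind of per-person voice control the article describes.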

Finally, there’s XYZ depth perception to allow the Kinect camera – and the connected PC – to track how far away a user is. Microsoft’s gaming platform uses all this for motion-controlled titles such as sports, bowling and other games; back at E3 2011 the company confirmed that Star Wars would be coming to Kinect, as well as Mass Effect 3 and other titles.

For the PC, while gaming is likely to be one strand of Kinect’s use, Microsoft has also talked about its potential for other applications. Remote control of an HTPC – without having to navigate either a complex remote or wireless keyboard – is one suggestion, along with control of presentations and other media. With the Kinect for Windows SDK, third-party developers will also be able to bake support into their apps.

The Microsoft announcement will be held online via the company’s Channel 9, at 9.30am PST on Thursday June 16.

Monday, December 20. 2010

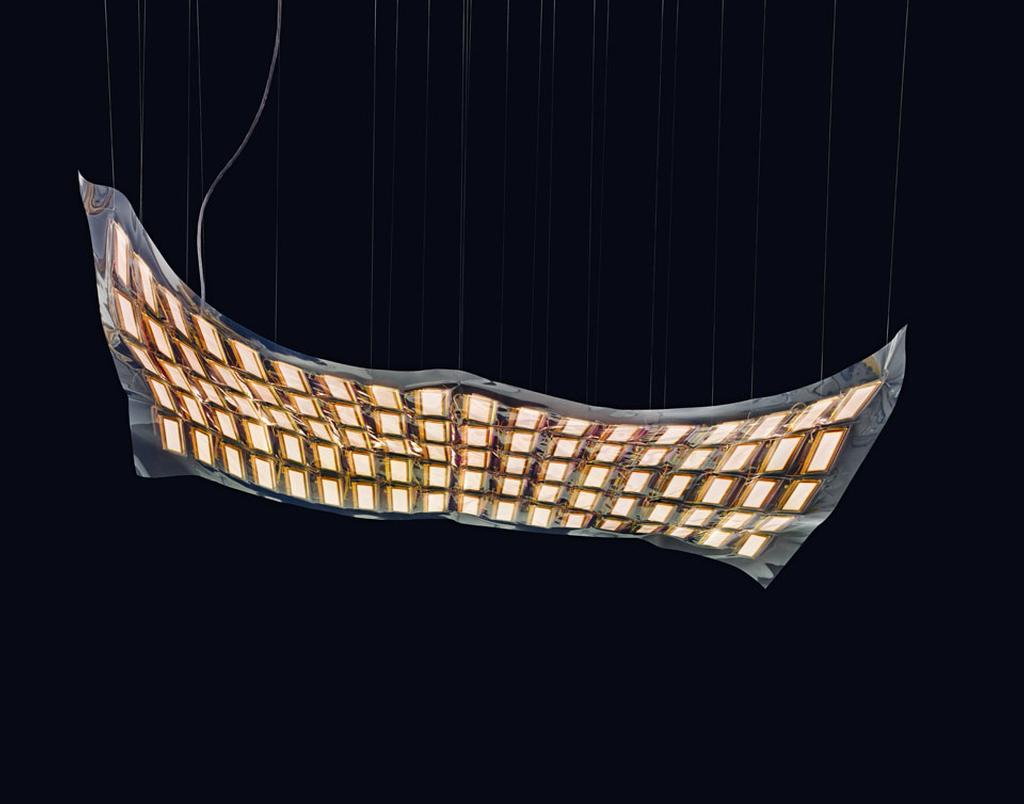

Personal comment:

This can also be used for windows, of course, as the OLED can be transparent when not lit and semi-transparent when lit.

[Image: The tools and props of surveying; courtesy of the

[Image: Understanding landscapes by way of strange devices; courtesy of the

[Image: The Venue tripods, universal mounts for interchangeable devices; designed by Chris Woebken].

[Image: The Venue box takes shape, custom-designed by