Tuesday, August 27. 2013

Douglas Engelbart’s Unfinished Revolution

-----

Computing pioneer Doug Engelbart’s inventions transformed computing, but he intended them to transform humans.

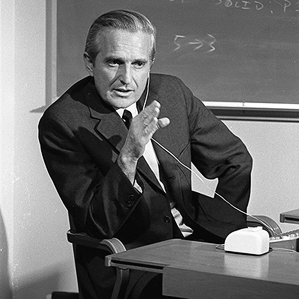

Peripheral vision: Engelbart rehearses for the “mother of all demos.”

Doug Engelbart knew that his obituaries would laud him as “Inventor of the Mouse.” I can see him smiling wistfully, ironically, at the thought. The mouse was such a small part of what Engelbart invented.

We now live in a world where people edit text on screens, command computers by pointing and clicking, communicate via audio-video and screen-sharing, and use hyperlinks to navigate through knowledge—all ideas that Engelbart’s Augmentation Research Center at Stanford Research Institute (SRI) invented in the 1960s. But Engelbart never got support for the larger part of what he wanted to build, even decades later when he finally got recognition for his achievements. When Stanford honored Engelbart with a two-day symposium in 2008, they called it “The Unfinished Revolution.”

To Engelbart, computers, interfaces, and networks were means to a more important end—amplifying human intelligence to help us survive in the world we’ve created. He listed the end results of boosting what he called “collective IQ” in a 1962 paper, Augmenting Human Intellect. They included “more-rapid comprehension … better solutions, and the possibility of finding solutions to problems that before seemed insoluble.” If you want to understand where today’s information technologies came from, and where they might go, the paper still makes good reading.

Engelbart’s vision for more capable humans, enabled by electronic computers, came to him in 1945, after reading inventor and wartime research director Vannevar Bush’s Atlantic Monthly article “As We May Think.” Bush wrote: “The summation of human experience is being expanded at a prodigious rate, and the means we use for threading through the consequent maze to the momentarily important item is the same as was used in the days of square-rigged ships.”

That inspired Engelbart, a young electrical engineer, to come up with the idea of people using screens and computers to collaboratively solve problems. He worked on his ideas for the rest of his life, despite being warned over and over by people in academia and the computer industry that his ideas of using computers for anything other than scientific computations or business data processing were “crazy” and “science fiction.”

Engelbart knew right from the start that screens, input devices, hardware, and software could enable the necessary collaborative problem-solving only as part of a system that included cognitive, social, and institutional changes. But he found introducing new ways for people to work together more effectively, the linchpin of his overall vision, more difficult than transforming the way humans and computers interact.

Engelbart labored for most of his life and career to get anyone to think seriously about his ideas, of which the mouse was an essential but low-level component. Only for one golden decade did he get significant backing. In 1963, the U.S. Defense Department provided the wherewithal for Engelbart to assemble a team, create the future, and blow the mind of every computer designer in the world by way of what has come to be known as “the mother of all demos.”

I first met Engelbart in 1983 in his Cupertino office in a small building that was completely surrounded by the Apple campus. A company that no longer exists, Tymshare, had purchased what was left of Engelbart’s lab and hired him after the Stanford Research Institute stopped supporting the Augmentation Research Center due to the Department of Defense withdrawing funding.

Engelbart noted with dismay that although the personal computer was evolving quickly, the other elements of his plan weren’t. At the time, personal computers weren’t networked to one another, as the terminals of large computers could be, and they lacked a mouse or point-and-click interface.

Engelbart told me in our first conversation, as I’m sure he must have told many others, that the computer and mouse were just the “artifacts” in a system that centered on “humans, using language, artifacts, methodology, and training.”

In the late 1980s, Engelbart set up his self-funded “Bootstrap Institute” to try to win for his ideas about working more effectively the acceptance his artifacts had gained. He developed ways of analyzing how people acted inside an organization, and specific techniques that he claimed would boost “collective IQ.” A set of detailed presentations on those methodologies started with what he called CODIAK. In his words, “Collective IQ is a measure of how effectively a collection of people can concurrently develop, integrate, and apply its knowledge toward its mission” (emphasis Engelbart’s).

Mouse manufacturer Logitech provided office space, but the Bootstrap Institute, staffed by Engelbart and his daughter Christina, never sold bootstrapping, collective IQ, or CODIAK to any funder, major company, or government department.

Engelbart’s failure to spread the less tangible parts of his vision stems from several circumstances. He was an engineer at heart, and engineers’ utopian solutions don’t always account for the complexities of human social institutions. He added a social scientist to his lab only just before it was shut down.

What’s more, Engelbart’s pitches of linked leaps in technology and organizational behavior probably sounded as crazy to 1980s corporate managers as augmenting human intellect with machines did in the early 1960s. In the end, the way Silicon Valley companies work has changed radically in recent decades, not because established companies went through the kind of internal transformations Engelbart imagined, but because radical new startups displaced them.

When I talked with him again in the mid-2000s, Engelbart marveled that people carry around in their pockets millions of times more computer power than his entire lab had in the 1960s, but the less tangible parts of his system had still not evolved so spectacularly.

Like Tim Berners-Lee, Engelbart never sought to own what he contributed to the world’s ability to know. But he was frustrated to the end by the way so many people had adopted, developed, and profited from the digital media he had helped create, while failing to pursue the important tasks he had created them to do.

Howard Rheingold, a visiting lecturer at Stanford University, has written since the early 1980s about how innovations in computers and networking change people’s thinking. He profiled Doug Engelbart’s work in his 1985 book, Tools for Thought, and is most recently the author of Net Smart: How to Thrive Online.

Wednesday, June 26. 2013

The audacious plan to end hunger with 3-D printed food

Via Computed·Blg via Quartz

-----

Anjan Contractor’s 3D food printer might evoke visions of the “replicator” popularized in Star Trek, from which Captain Picard was constantly interrupting himself to order tea. And indeed Contractor’s company, Systems & Materials Research Corporation, just got a six-month, $125,000 grant from NASA to create a prototype of his universal food synthesizer.

But Contractor, a mechanical engineer with a background in 3D printing, envisions a much more mundane—and ultimately more important—use for the technology. He sees a day when every kitchen has a 3D printer, and the earth’s 12 billion people feed themselves customized, nutritionally-appropriate meals synthesized one layer at a time, from cartridges of powder and oils they buy at the corner grocery store. Contractor’s vision would mean the end of food waste, because the powder his system will use is shelf-stable for up to 30 years, so that each cartridge, whether it contains sugars, complex carbohydrates, protein or some other basic building block, would be fully exhausted before being returned to the store.

Ubiquitous food synthesizers would also create new ways of producing the basic calories on which we all rely. Since a powder is a powder, the inputs could be anything that contains the right organic molecules. We already know that eating meat is environmentally unsustainable, so why not get all our protein from insects?

If eating something spat out by the same kind of 3D printers that are currently being used to make everything from jet engine parts to fine art doesn’t sound too appetizing, that’s only because you can currently afford the good stuff, says Contractor. That might not be the case once the world’s population reaches its peak size, probably sometime near the end of this century.

“I think, and many economists think, that current food systems can’t supply 12 billion people sufficiently,” says Contractor. “So we eventually have to change our perception of what we see as food.”

There will be pizza on Mars

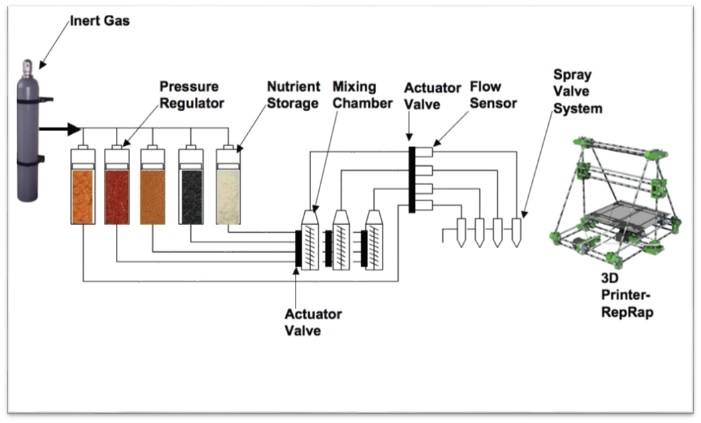

The ultimate in molecular gastronomy. (Schematic of SMRC’s 3D printer for food.) Image: SMRC

If Contractor’s utopian-dystopian vision of the future of food ever comes to pass, it will be an argument for why space research isn’t a complete waste of money. His initial grant from NASA, under its Small Business Innovation Research program, is for a system that can print food for astronauts on very long space missions. For example, all the way to Mars.

“Long distance space travel requires 15-plus years of shelf life,” says Contractor. “The way we are working on it is, all the carbs, proteins and macro and micro nutrients are in powder form. We take moisture out, and in that form it will last maybe 30 years.”

Pizza is an obvious candidate for 3D printing because it can be printed in distinct layers, so it only requires the print head to extrude one substance at a time. Contractor’s “pizza printer” is still at the conceptual stage, and he will begin building it within two weeks. It works by first “printing” a layer of dough, which is baked at the same time it’s printed, by a heated plate at the bottom of the printer. Then it lays down a tomato base, “which is also stored in a powdered form, and then mixed with water and oil,” says Contractor.

Finally, the pizza is topped with the delicious-sounding “protein layer,” which could come from any source, including animals, milk or plants.

The prototype for Contractor’s pizza printer, which helped him earn a grant from NASA, was a simple chocolate printer. It’s not much to look at, nor is it the first of its kind, but at least it’s a proof of concept.

Replacing cookbooks with open-source recipes

SMRC’s prototype 3D food printer will be based on open-source hardware from the RepRap project. Image: RepRap

Remember grandma’s treasure box of recipes written in pencil on yellowing note cards? In the future, we’ll all be able to trade recipes directly, as software. Each recipe will be a set of instructions that tells the printer which cartridge of powder to mix with which liquids, at what rate, and how it should be sprayed, one layer at a time.

This will be possible because Contractor plans to keep the software portion of his 3D printer entirely open-source, so that anyone can look at its code, take it apart, understand it, and tweak recipes to fit. It would of course be possible for people to trade recipes even if this printer were proprietary—imagine something like an app store, but for recipes—but Contractor believes that by keeping his software open source, it will be even more likely that people will find creative uses for his hardware. His prototype 3D food printer also happens to be based on a piece of open-source hardware, the second-generation RepRap 3D printer.
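One way to picture a recipe "as software" is as an ordered list of layers, each naming a powder cartridge, the liquids it is mixed with, and how fast the paste is deposited. The Python sketch below encodes the three pizza layers described earlier (dough baked on a heated plate, a tomato base mixed with water and oil, then a protein layer). The `Layer` fields, cartridge names, and quantities are hypothetical and chosen only for illustration; the article does not describe SMRC's actual recipe format.

```python
from dataclasses import dataclass, field


@dataclass
class Layer:
    """One printed layer in a hypothetical recipe format (not SMRC's real one)."""
    cartridge: str                 # which powder cartridge to draw from
    powder_g: float                # grams of powder used for this layer
    liquids: dict[str, float] = field(default_factory=dict)  # liquid -> millilitres
    flow_rate_ml_s: float = 1.0    # how fast the mixed paste is extruded
    heated_plate: bool = False     # bake this layer on the heated base plate while printing


# Hypothetical pizza recipe: quantities are illustrative, not measured values.
PIZZA = [
    Layer("dough_powder", 120.0, {"water": 70.0}, flow_rate_ml_s=2.0, heated_plate=True),
    Layer("tomato_powder", 30.0, {"water": 40.0, "oil": 10.0}, flow_rate_ml_s=1.5),
    Layer("protein_powder", 40.0, {"water": 20.0}),
]


def cartridge_usage(recipe: list[Layer]) -> dict[str, float]:
    """Total grams drawn from each powder cartridge."""
    totals: dict[str, float] = {}
    for layer in recipe:
        totals[layer.cartridge] = totals.get(layer.cartridge, 0.0) + layer.powder_g
    return totals


def liquid_usage(recipe: list[Layer]) -> dict[str, float]:
    """Total millilitres of each liquid mixed into the powders."""
    totals: dict[str, float] = {}
    for layer in recipe:
        for liquid, ml in layer.liquids.items():
            totals[liquid] = totals.get(liquid, 0.0) + ml
    return totals


if __name__ == "__main__":
    # List the layers bottom to top, in the order the printer would deposit them.
    for number, layer in enumerate(PIZZA, start=1):
        heat = " (baked on heated plate)" if layer.heated_plate else ""
        print(f"layer {number}: {layer.cartridge} + {layer.liquids}{heat}")
    print(cartridge_usage(PIZZA))
    print(liquid_usage(PIZZA))
```

Because a recipe in this form is plain data, tweaking it for one person (more protein, less oil) means editing a few numbers, which is the kind of open, inspectable format the open-source approach above would encourage.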

“One of the major advantages of a 3D printer is that it provides personalized nutrition,” says Contractor. “If you’re male, female, someone is sick—they all have different dietary needs. If you can program your needs into a 3D printer, it can print exactly the nutrients that person requires.”

Replacing farms with sources of environmentally-appropriate calories

2032: Delicious Uncle Sam’s Meal Cubes are laser-sintered from granulated mealworms; part of this healthy breakfast. Image: TNO Research

Contractor is agnostic about the source of the food-based powders his system uses. One vision of how 3D printing could make it possible to turn just about any food-like starting material into an edible meal was outlined by TNO Research, the think tank of TNO, a Dutch holding company that owns a number of technology firms.

In TNO’s vision of a future of 3D printed meals, “alternative ingredients” for food include:

- algae

- duckweed

- grass

- lupine seeds

- beet leaves

- insects

From astronauts to emerging markets

While Contractor and his team are initially focusing on applications for long-distance space travel, his eventual goal is to turn his system for 3D printing food into a design that can be licensed to someone who wants to turn it into a business. His company has been “quite successful in doing that in the past,” and has created both a gadget that uses microwaves to evaluate the structural integrity of aircraft panels and a kind of metal screw that coats itself with protective sealant once it’s drilled into a sheet of metal.

Since Contractor’s 3D food printer doesn’t even exist in prototype form, it’s too early to address questions of cost or the healthiness (or not) of the food it produces. But let’s hope the algae and cricket pizza turns out to be tastier than it sounds.


Personal comment:

It looks like cats and dogs are already eating "rapid prototyped" food (judging from the pictures here), so they are a step ahead of us into the future! ;)

But if it were given to Ferran Adrià, it could start to look and taste like something!

But we could also put this in perspective with the recommendation from the UN Food and Agriculture Organization that humanity should eat more insects in the future, both because producing 1 kg of insects requires less energy and emits less carbon dioxide (2 kg of feed produce 1 kg of insects, while 20 kg are needed to produce 1 kg of meat...) and because insects provide good, healthy nutrients. As many people still don't like to eat insects because of their appearance, turning them into powder and printing them might be an interesting way around that.
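The feed figures quoted in this comment can be turned into a quick comparison. This Python sketch simply reuses those numbers as stated (2 kg of feed per kg of insects, 20 kg per kg of meat); they are not independently checked here, and the 100 kg batch is an arbitrary example.

```python
# Feed required per kilogram of product, using the comment's figures as given
# (not independently verified values).
FEED_KG_PER_KG = {"insects": 2.0, "meat": 20.0}


def feed_required(product: str, output_kg: float) -> float:
    """Kilograms of feed needed to produce output_kg of the given product."""
    return FEED_KG_PER_KG[product] * output_kg


def feed_ratio(worse: str = "meat", better: str = "insects") -> float:
    """How many times more feed `worse` needs than `better` per kg of output."""
    return FEED_KG_PER_KG[worse] / FEED_KG_PER_KG[better]


if __name__ == "__main__":
    batch_kg = 100.0  # arbitrary example batch size
    for product in FEED_KG_PER_KG:
        print(f"{product}: {feed_required(product, batch_kg):.0f} kg of feed for {batch_kg:.0f} kg")
    print(f"meat needs {feed_ratio():.0f}x more feed than insects")
```

With these inputs, 100 kg of insects needs 200 kg of feed and 100 kg of meat needs 2000 kg, a tenfold difference.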

Tuesday, May 28. 2013

Arctic Instruments

Via BLDGBLOG

-----

By Geoff Manaugh

[Image: Via the Extreme Environments & Future Landscapes program].

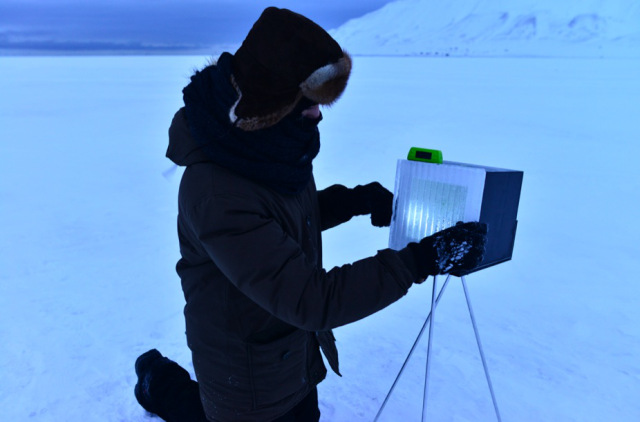

Returning briefly to the theme of landscape devices—particularly in the run-up to the launch of Landscape Futures—I thought I'd post a quick look at a trip to the Arctic island of Svalbard last autumn led by David Garcia with students from the University of Lund School of Architecture.

As part of Lund's Extreme Environments & Future Landscapes program, students flew up to visit "the far north, beyond the Polar Circle, to Svalbard, to study the growing communities affected by the melting ice cap and the large opportunities for transportation and resources that the northeast passage now offers."

[Image: Via the Extreme Environments & Future Landscapes program].

There, the students would also research first-hand the performance of "urban structures in the extreme cold."

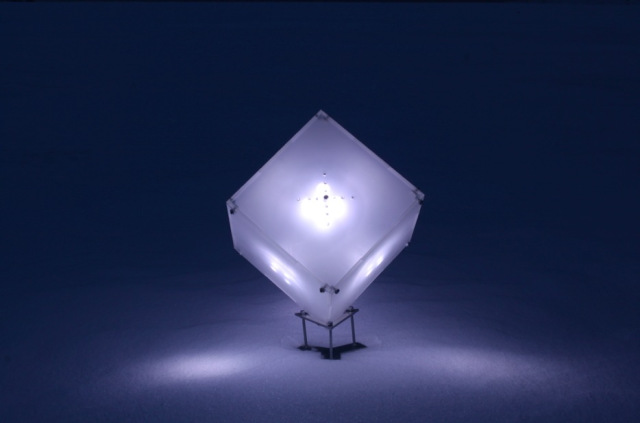

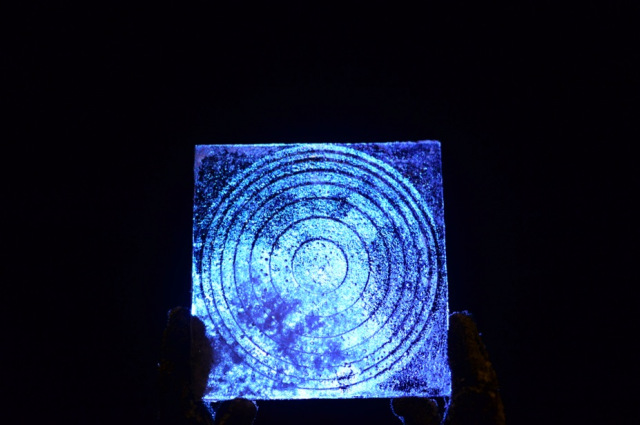

[Images: From a series of "light beacon studies" by Marta Nestorov].

However, Garcia adds, during their time in the extreme environment of the north, the group also "tested and probed the surroundings with surveying equipment designed and built for the expedition, at urban and natural landscapes, from -30 degrees Celsius to overcast blackout weather."

[Image: An instrument for analyzing "perception and interpretation of the aurora borealis" by Christopher Erdman].

Some of that equipment is pictured here, ranging from a colorful audio device "used for testing sound absorption properties of snow" to a kind of portable oven meant for testing the thermal-insulation qualities of "ice tiles" that might someday be used in constructing frozen architecture.

[Image: Device "for testing sound absorption qualities of snow," by Milja Lindberg and Liina Pikk].

For their test of the acoustic properties of snow, for example, using tripods seen in the above photo, students Milja Lindberg and Liina Pikk designed an experiment that would operate "by transmitting sound onto snow and reflecting it to a receiver":

The device consists of three parts. A blue transmitter tube (Ø 60mm) sends focused sound frequencies through a speaker fitted at one end of the tube. A red receiver tube 20mm wider in diameter picks up sound waves reflected from the snow test bed. Both tubes are connected to tripods with an angle adjuster that works as a protractor to set the right angle. This piece also connects two lasers on top of the tubes. The lasers meet in the middle of the snow test bed.
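The geometry behind that setup can be sketched briefly. Assuming a flat, horizontal snow bed and specular (mirror-like) reflection, the transmitter and receiver need the same tilt below horizontal, on opposite sides of the bed, for the reflected sound to reach the receiver; two lasers meeting at the centre of the bed indicate exactly that alignment. The Python below computes the tilt from a tube's height above the snow and its horizontal distance to the bed centre. The function names and the flat-bed, mirror-reflection model are assumptions for illustration, not the students' own calculations; real snow also scatters sound diffusely.

```python
import math

TRANSMITTER_DIAMETER_MM = 60.0
RECEIVER_DIAMETER_MM = TRANSMITTER_DIAMETER_MM + 20.0  # "20mm wider in diameter"


def aiming_angle_deg(height_m: float, distance_to_centre_m: float) -> float:
    """Tilt below horizontal that points a tube's axis at the test-bed centre.

    height_m: height of the tube mouth above the (assumed flat) snow surface.
    distance_to_centre_m: horizontal distance from the tube mouth to the bed centre.
    """
    return math.degrees(math.atan2(height_m, distance_to_centre_m))


def hit_distance_m(height_m: float, angle_deg: float) -> float:
    """Horizontal distance at which a beam tilted angle_deg below horizontal meets the snow."""
    return height_m / math.tan(math.radians(angle_deg))


if __name__ == "__main__":
    # Arbitrary example placement: both tube mouths 0.5 m up, 1.0 m from the centre.
    angle = aiming_angle_deg(0.5, 1.0)
    # Mirror reflection: the receiver uses the same angle on the opposite side.
    print(f"set both protractors to {angle:.1f} degrees below horizontal")
    print(f"receiver diameter: {RECEIVER_DIAMETER_MM:.0f} mm")
```

`hit_distance_m` is the inverse check: feeding it the computed angle should land the beam back at the bed centre, which is what the meeting lasers show physically.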

[Images: The aforementioned device "used for testing sound absorption qualities of snow," by Milja Lindberg and Liina Pikk].


The accompanying pink & lavender light show lent a strangely theatrical air to the operation—perhaps also inadvertently revealing the possibility of designing "architecture" in snow-intensive environments using nothing but colored light.

[Images: A device for performing "biomimicry of polar plants" by Clemens Hochreiter].


Clemens Hochreiter's installation for studying the "biomimicry of polar plants," meanwhile, was an attempt to reproduce the shapes of Arctic flowers in small translucent shells, in order to test—if I've understood this correctly—what architectural shapes might be most useful in future greenhouse design.

Hochreiter hoped to "clarify if it [is] possible to improve the microclimate within the flower shaped volumes by using transparent, translucent, light absorbing or light reflecting materials."

[Image: Device for studying the "insulation properties and light transmission" of ice tiles, by Daniela Miller].


Continuing to move through the projects relatively quickly, we come to Daniela Miller's study, pictured above, which seems to be one of the more practical investigations of the bunch. Miller's goal was to analyze the ability of specially made "ice tiles" to insulate against heat loss as well as to transmit light.

[Images: Ice tiles by Daniela Miller].


"The tiles are produced in different thicknesses," Miller explains, "and some of them encase different kinds of material. Using a heat source within the box, the insulation properties of the tiles can be measured with a thermometer.

The other series of studies deals with the translucency of the tiles. A light source is placed inside the box and the light intensity and quality crossing through the tile can be measured with a luxmeter."

The "different ice and snow plates were produced by use of a mould system," she adds, and each tile was subsequently "registered and analyzed to quantify the most relevant data"; this was all part of her attempt to explore "the potential of benefiting from ice and snow in architecture."
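For the translucency series, one simple way to compare tiles (an assumption here; Miller's text does not say how she processed her readings) is to divide the luxmeter reading taken through each tile by a reference reading taken with no tile in place, giving the fraction of light transmitted. A minimal Python sketch, with hypothetical function names:

```python
def transmittance(lux_through_tile: float, lux_reference: float) -> float:
    """Fraction of the source's light that crosses the tile (0 = opaque, 1 = clear).

    lux_reference is the luxmeter reading with no tile in place, so dividing by it
    separates the tile's effect from the brightness of the light source itself.
    """
    if lux_reference <= 0:
        raise ValueError("the reference reading must be positive")
    return lux_through_tile / lux_reference


def rank_by_translucency(readings: dict[str, float], lux_reference: float) -> list[tuple[str, float]]:
    """Return (tile name, transmittance) pairs, most translucent first."""
    ranked = [(name, transmittance(lux, lux_reference)) for name, lux in readings.items()]
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)
```

Taking the reference reading with the same light source and box geometry matters; otherwise a brighter lamp would look like a clearer tile.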

[Images: A "polar bear alarm" by studio director David Garcia].


Finally, David Garcia himself built an alarm system for detecting polar bears, or what he calls "a 'soft' perimeter alarm, not one that will stop polar bears from approaching the designed area, but allows for an acoustic or visual alarm to be triggered."

Cue a team of screenwriters here to produce the world's first Arctic bear-heist film, in which enterprising 800-pound superthieves rumble up on a snow-covered city in the dark one winter and hatch a devious plan, subverting the red-laser alarm lights, slinking past optically-advanced high-tech fencing, and erasing their own paw-prints as they pull off the world's most spectacular case of ursine burglary. The Svalbard Job.

In any case, more images of other projects—and, in most cases, longer descriptions of each—are available on the expedition website.

Tuesday, March 12. 2013

Chinese Physicists Build Ghost Cloaking Device

-----

A working invisibility cloak that makes one object look like ghostly versions of another has been built in China.

Illusion cloaks that make one object look like another are a fascinating type of invisibility device. The general idea is that such a device would make an apple look like a banana or a fighter plane look like an airliner. Clearly this would have important applications.

But while materials scientists have made great strides in building ordinary invisibility cloaks that work in the microwave, infrared and optical parts of the spectrum, making illusion cloaks is much harder. That’s because the bespoke materials they rely on require manufacturing techniques that seem like a distant dream.

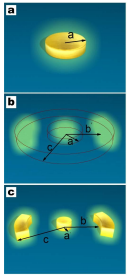

Today, Tie Jun Cui and buddies at Southeast University in Nanjing, China, say they’ve designed and built a practical alternative to illusion cloaks, which they call a “ghost cloak”.

Conventional illusion cloaks rely on a two-stage process. The first is a kind of invisibility stage, which distorts incoming light to remove the scattering effect of the cloaked object, an apple for example. The second stage then distorts the scattered light to make it look as if it has been scattered off another object, a banana, for example. The result is that the apple ends up looking like a banana.

But materials that can perform this two-stage process are too demanding to make with current techniques.

So Tie Jun Cui and co have developed a single stage process that achieves a slightly different effect. Their idea is to do away with the first stage that makes the apple invisible.

Instead, their device takes the light scattered from the apple and distorts it to look like something else, such as a banana. The symmetry of the effect (light is scattered on both sides of the apple) means that this approach produces two “ghost” bananas, one on each side of the apple. The technique does not remove the apple entirely but distorts it, making it appear much smaller.

So the result is that the apple is changed into a much more complex picture that is significantly different from the original.

The big advantage of this approach is that it can be achieved now with existing technology. Tie Jun Cui and co first simulate the effect of their ghost cloak on a computer model.

They then go on to build a working prototype, made of concentric cylinders of split-ring resonators, that operates in two dimensions. They say that the results of their tests on this device closely match the results of the simulation.

That’s an interesting advance. The ability to distort and camouflage objects is clearly useful. However, an important question is whether the distortion that this device offers is good enough for any practical applications. Tie Jun Cui and co mention “security enhancement” but just how effective this would be when the original object is still visible, albeit in shrunken form, is debatable.

It may be that there are ways of improving the performance, so it’ll be interesting to see what this team comes up with next.

Ref: arxiv.org/abs/1301.3710: Creation of Ghost Illusions Using Metamaterials in Wave Dynamics


Monday, February 11. 2013

MeCam $49 flying camera concept follows you around, streams video to your phone

Via Computed By via liliputing

-----

Always Innovating is working on a tiny flying video camera called the MeCam. The camera is designed to follow you around and stream live video to your smartphone, allowing you to upload videos to YouTube, Facebook, or other sites.

And Always Innovating thinks the MeCam could eventually sell for just $49.

The camera is docked in a nano copter with 4 spinning rotors to keep it aloft. There are 14 different sensors that help the copter detect objects around it so it won’t bump into walls, people, or anything else.

Always Innovating also includes stabilization technology so that videos shouldn’t look too shaky.

Interestingly, there’s no remote control. Instead, you can control the MeCam in one of two ways. You can speak voice commands to tell it, for instance, to move up or down. Or you can enable the “follow-me” feature which tells the camera to just follow you around while shooting paparazzi-style video.

The MeCam features an Always Innovating module with an ARM Cortex-A9 processor, 1GB of RAM, Wi-Fi, and Bluetooth.

The company hopes to license the design so that products based on the MeCam will hit the streets in early 2014.

If Always Innovating sounds familiar, that’s because it’s the same company that brought us the modular Touch Book and Smart Book products a few years ago.

If the MeCam name sounds familiar, on the other hand, it’s probably worth pointing out that the Always Innovating flying camera is not related to the wearable camera that failed to come close to meeting its fundraising goals last year.

Personal comment:

Will each of us soon be surrounded by our own array of little flying devices / personal agents, like a swarm of electronic flies?

Friday, December 21. 2012

Augmented Light Bulb Turns a Desk Into a Touch Screen

-----

A computer that can be screwed into a light socket can project interactive images onto any nearby surface.

By Tom Simonite on November 29, 2012

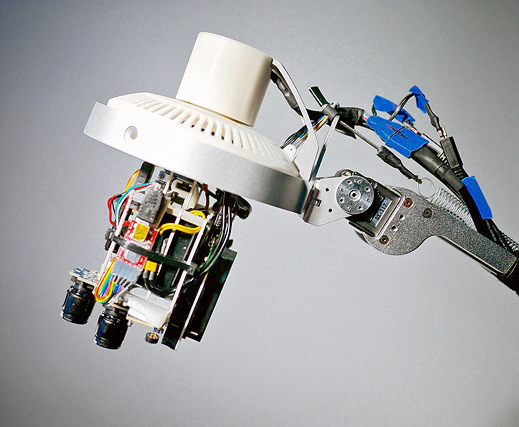

Desk toy: A computer with a camera and projector fits into a light bulb socket, and can make any surface interactive.

Powerful computers are becoming small and cheap enough to cram into all sorts of everyday objects. Natan Linder, a student at MIT’s Media Lab, thinks that fitting one inside a light bulb socket, together with a camera and projector, could provide a revolutionary new kind of interface—by turning any table or desk into a simple touch screen.

The LuminAR device, created by Linder and colleagues at the Media Lab, can project interactive images onto a surface, sensing when a person’s finger or hand points to an element within those images. Linder describes LuminAR as an augmented-reality system because the images and interfaces it projects can alter the function of a surface or object. While LuminAR might seem like a far-fetched concept, many large technology companies are experimenting with new kinds of computer interfaces in hopes of discovering new markets for their products (see “Google Game Could Be Augmented Reality’s First Killer App” and “A New Chip to Bring 3-D Gesture Control to Smartphones”).

Linder’s system uses a camera, a projector, and software to recognize objects and project imagery onto or around them, and also to function as a scanner. It connects to the Internet using Wi-Fi. Some capabilities of the prototype, such as object recognition, rely partly on software running on a remote cloud server.

LuminAR could be used to create an additional display on a surface, perhaps to show information related to the task at hand. It can also be used to snap a photo of an object, or of printed documents such as a magazine. A user can then e-mail that photo to a contact by interacting with LuminAR’s projected interface.

“I’m really excited by the way this would be used by engineers and designers,” says Linder, who believes it could be useful for any creative occupation that often involves working with paper and other tangible objects as well as computers.

LuminAR could have uses beyond the desk or office environment. One demo features a mock-up of an electronics store, where the device projected price tags next to cameras on a table, as well as buttons that could be used to call up more product information. Linder has also tried using it for Skype-style video calls, projecting the caller’s video onto the wall next to the desk the lamp stood on.

The current prototype is built around a processor from Qualcomm’s Snapdragon series, commonly used in smartphones and tablets. Linder and colleagues are experimenting with both a custom Linux-based operating system and a modified version of Google’s Android mobile operating system.

Earlier LuminAR prototypes included a motorized arm for the lamp, too. But the researchers are currently focused on finessing the bulb-only version. That design cuts costs and complexity, and also makes the technology easier to adopt, says Linder. “It has zero cost of adoption. You just change the bulb in your lamp,” he says.

Friday, November 23. 2012

First experiments with Leap Motion and Cinder

-----

After months of everyone sharing the Leap Motion demo video, the first developer kits are making their way into the hands of those who signed up early. Dofl Yun was one of the few to receive one last week (Leap Motion Dev Board v.04), and with some help from Robert Hodgin and Andrew Bell on the setup, he has shared his early progress.

For those who do not know, Leap Motion is a 3D depth camera much like Microsoft Kinect, except that it provides much better precision. It aims to represent an entirely new way of interacting with your computers. It’s more accurate than a mouse, as reliable as a keyboard and more sensitive than a touchscreen. For the first time, you can control a computer in three dimensions with your natural hand and finger movements.


Wednesday, October 24. 2012

Get moving


Personal comment:

Tony Dunne & Fiona Raby would certainly produce a far less slick scenario for this type of robotic product (they would probably prefer not to design such a functional robot at all ;)). But still, this is now the third time I have seen an ad for this type of product, and I find it interesting to envision city streets filled with "ghosts" of remote citizens from distant cities or from the countryside (which therefore won't really be the countryside anymore), hanging around in the form of robots. We'll then have to design cities and architecture for all of their inhabitants, now including robots.

Friday, September 21. 2012

Kindle as an Art Object – Two Projects

-----

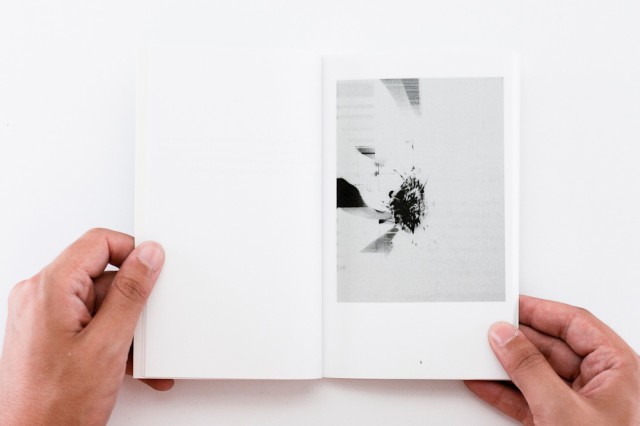

Affordable hardware has its benefits and problems. As cheap electronics become widespread and more available, we become less protective of them. We no longer purchase cases or try to protect them. The Kindle, costing a mere $70, is one of those devices: your library is in the cloud, and if the device is lost or broken it’s easily replaced. What happens to all the broken, lost or damaged Kindles?

Benjamin Gaulon and Silvio Lorusso+Sebastian Schmieg try to address this, building on the glitch aesthetic and reappropriating Kindles as art objects.


Tuesday, July 24. 2012

Summer works (vs. Summer break)

By fabric | ch

-----

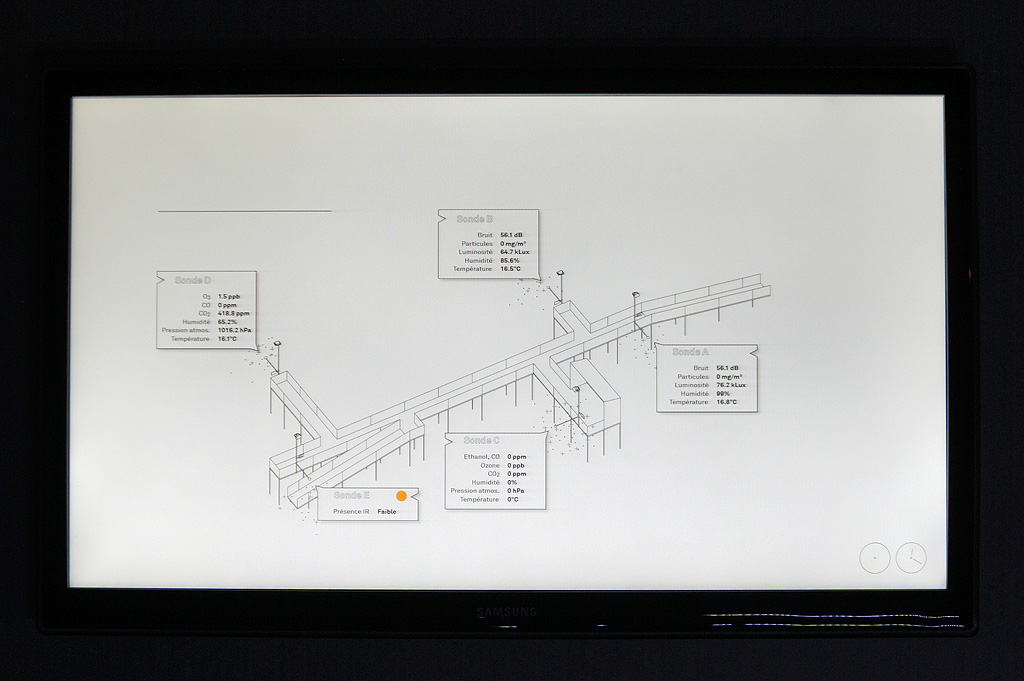

We have been relatively quiet on | rblg recently, as you may have noticed... This is mainly because we were working hard on two new exhibitions, for which we were setting up two different architectural installations.

One of these installations was an older work, Perpetual (Tropical) Sunshine, re-exhibited in the context of the Transat Festival 2012 and presented in one of the oldest churches in the city. The other was a new one, Hétérochronie, quite large for an installation (~40 m long), which we presented at a very crowded festival (Festival de la Cité 2012), in the park of the old academy (a 16th-century building and former school of theology). In this latter case, it was a real "crash test" for this type of work, as the public was 1° not at all used to this type of architecture and 2° very "undisciplined", with lots of kids running everywhere... (they enjoyed it a lot, by the way, but were so disappointed that the screens were not "touchable"...)

For once, both exhibitions took place in our home town and base camp, Lausanne, and that was the first occasion for us to show our work to our... parents! The toughest audience, frightening! ;) As the Transat Festival is primarily a music festival, and as there was a fantastic organ in the church, we took the chance to set up a special sound performance with ensemBle baBel around John Cage's work during the exhibition of Perpetual (Tropical) Sunshine: Tropical - Cage.

I'll make a detailed post later this summer about the new work, Hétérochronie (Heterochrony), once we're back from the "Summer break". We'll also start posting again on a more regular basis on | rblg in September. But before that, we'll go unplugged for a few weeks!

Till then, here are a few shots from both exhibitions:


Perpetual (Tropical) Sunshine, Église Saint-François, Lausanne, June 2012.


Heterochrony, Cour de l'Académie, Lausanne, July 2012.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive and a collection of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
