Monday, April 06. 2009

The Immersive Edge

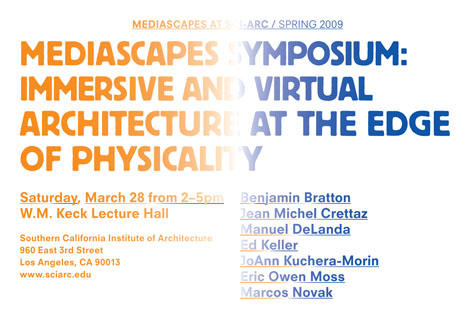

Starting just a few hours from now down at SCI-Arc, on a cloudless 73º day, "seven distinguished architects and theorists" whose designs straddle "the intersection of physical and virtual worlds" will be presenting their work at the Mediascapes Symposium, led by Ed Keller.

The bulk of the afternoon's discussion will encompass "the practice of immersive and virtual architecture, which spans animation and 3D technologies, digital environments, and questions of materiality... asking how these classifications will define our understanding of the relationships between tangible and intangible worlds."

One of today's speakers, Benjamin Bratton, who will also be presenting next week at Postopolis! LA, describes his talk: "Pervasive computing will make inanimate objects see, hear, and comment on our interactions with them. This experience will, in many cases, be indistinguishable from a psychotic break, or from the rituals of classical Animism." That, or it will feel like The Sorcerer's Apprentice.

If you're in LA, be sure to stop by.

Via BLDBLOG

Personal comment:

Some names... active in the LA area around themes that are not unfamiliar to us! Some "usual suspects" (like Marcos Novak or Eric Owen Moss) and some new suspects (Ed Keller, Benjamin Bratton, etc.)

The Best Computer Interfaces: Past, Present, and Future

Multitouch screen: Microsoft’s Surface is an example of a multitouch screen. Credit: Microsoft

The Command Line

The granddaddy of all computer interfaces is the command line, which surfaced as a more effective way to control computers in the 1950s. Previously, commands had to be fed into a computer in batches, usually via a punch card or paper tape. Teletype machines, which were normally used for telegraph transmissions, were adapted as a way for users to change commands partway through a process, and receive feedback from a computer in near real time.

Video display units allowed command line information to be displayed more rapidly. The VT100, a video terminal released by Digital Equipment Corporation (DEC) in 1978, is still emulated by some modern operating systems as a way to display the command line.

Graphical user interfaces, which emerged commercially in the 1980s, made computers much easier for most people to use, but the command line still offers substantial power and flexibility for expert users.

The Mouse

Nowadays, it's hard to imagine a desktop computer without its iconic sidekick: the mouse.

Developed 41 years ago by Douglas Engelbart at the Stanford Research Institute, in California, the mouse is inextricably linked to the development of the modern computer and also played a crucial role in the rise of the graphical user interface. Engelbart demonstrated the mouse, along with several other key innovations, including hypertext and shared-screen collaboration, at an event in San Francisco in 1968.

Early computer mouses came in a variety of shapes and forms, many of which would be almost unrecognizable today. However, by the time mouses became commercially available in the 1980s, the mold was set. Three decades on and despite a few modifications (including the loss of its tail), the mouse remains relatively unchanged. That's not to say that companies haven't tried adding all manner of enhancements, including a mini joystick and an air ventilator to keep your hand sweat-free and cool.

Logitech alone has now sold more than a billion of these devices, but some believe that the mouse is on its last legs. The rise of other, more intuitive interfaces may finally loosen the mouse's grip on us.

The Touchpad

Despite stiff competition from track balls and button joysticks, the touchpad has emerged as the most popular interface for laptop computers.

With most touchpads, a user's finger is sensed by detecting disruptions to an electric field caused by the finger's natural capacitance. It's a principle that was employed as far back as 1953 by Canadian pioneer of electronic music Hugh Le Caine, to control the timbre of the sounds produced by his early synthesizer, dubbed the Sackbut.

The touchpad is also important as a precursor to the touch-screen interface. And many touchpads now feature multitouch capabilities, expanding the range of possible uses. The first multitouch touchpad for a computer was demonstrated back in 1984, by Bill Buxton, then a professor of computer design and interaction at the University of Toronto and now also a principal researcher at Microsoft.

The Multitouch Screen

Mention touch screen computers, and most people will think of Apple's iPhone or Microsoft's Surface. In truth, the technology is already a quarter of a century old, having debuted in the HP-150 computer in 1983. Long before desktop computers became common, basic touch screens were used in ATMs to allow customers, who were largely computer illiterate, to use computers without much training.

However, it's fair to say that Apple's iPhone has helped revive the potential of the approach with its multitouch screen. Several cell-phone manufacturers now offer multitouch devices, and both Windows 7 and future versions of Apple's MacBook are expected to do the same. Various techniques can enable multitouch screens: capacitive sensing, infrared, surface acoustic waves, and, more recently, pressure sensing.

With this renaissance, we can expect a whole new lexicon of gestures designed to make it easier to manipulate data and call up commands. In fact, one challenge may be finding means to reproduce existing commands in an intuitive way, says August de los Reyes, a user-experience researcher who works on Microsoft's Surface.

Gesture Sensing

Compact magnetometers, accelerometers, and gyroscopes make it possible to track the movement of a device. Using both Nintendo's Wii controller and the iPhone, users can control games and applications by physically maneuvering each device through the air. Similarly, it's possible to pause and play music on Nokia's 6600 cell phone simply by tapping the device twice.

New mobile applications are also starting to tap into this trend. Shut Up, for example, lets Nokia users silence their phone by simply turning it face down. Another app, called nAlertMe, uses a 3-D gestural passcode to prevent the device from being stolen. The handset will sound a shrill alarm if the user doesn't move the device in a predefined pattern in midair to switch it on.
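A face-down check of the kind Shut Up performs can be sketched from a single accelerometer reading. This is only an illustration, not the app's actual code: the function name `is_face_down`, the thresholds, and the axis convention (z pointing out of the screen, so a phone lying face up at rest reads roughly +9.81 m/s² on z) are all assumptions.

```python
def is_face_down(ax, ay, az, g=9.81, threshold=0.8):
    """Return True if the device appears to be lying screen-down.

    ax, ay, az: accelerometer reading in m/s^2 (assumed convention:
    z points out of the screen, so face up at rest gives az close to +g).
    """
    magnitude = (ax * ax + ay * ay + az * az) ** 0.5
    # Reject readings far from 1 g: the phone is probably being moved
    # or shaken, so the direction of gravity is unreliable.
    if magnitude == 0 or abs(magnitude - g) > 0.3 * g:
        return False
    # Face down means gravity lies mostly along the negative z axis.
    return az / magnitude < -threshold


print(is_face_down(0.0, 0.0, -9.81))  # phone resting screen-down
print(is_face_down(0.0, 0.0, 9.81))   # phone resting screen-up
```

A real app would likely require the condition to hold for a short time before muting, to avoid reacting to a single noisy sample.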

The next step in gesture recognition is to enable computers to better recognize hand and body movements visually. Sony's Eye showed that simple movements can be recognized relatively easily. Tracking more complicated 3-D movements in irregular lighting is more difficult, however. Startups, including Xtr3D, based in Israel, and Soft Kinetic, based in Belgium, are developing computer vision software that uses infrared for whole-body-sensing gaming applications.

Oblong, a startup based in Los Angeles, has developed a "spatial operating system" that recognizes gestural commands, provided the user wears a pair of special gloves.

Force Feedback

A field of research called haptics explores ways that technology can manipulate our sense of touch. Some game controllers already vibrate with an on-screen impact, and similarly, some cell phones shake when switched to silent.

More specialized haptic controllers include the PHANTOM, made by SensAble, based in Woburn, MA. These devices are already used for 3-D design and medical training--for example, allowing a surgeon to practice a complex procedure using a simulation that not only looks, but also feels, realistic.

Haptics could soon add another dimension to touch screens too: by better simulating the feeling of clicking a button when an icon is touched. Vincent Hayward, a leading expert in the field, at McGill University, in Montreal, Canada, has demonstrated how to generate different sensations associated with different icons on a "haptic button". In the long term, Hayward believes that it will even be possible to use haptics to simulate the sensation of textures on a screen.

Voice Recognition

Speech recognition has always struggled to shake off a reputation for being sluggish, awkward, and, all too often, inaccurate. The technology has only really taken off in specialist areas where a constrained and narrow subset of language is employed or where users are willing to invest the time needed to train a system to recognize their voice.

This is now changing. As computers become more powerful and parsing algorithms smarter, speech recognition will continue to improve, says Robert Weidmen, VP of marketing for Nuance, the firm that makes Dragon NaturallySpeaking.

Last year, Google launched a voice search app for the iPhone, allowing users to search without pressing any buttons. Another iPhone application, called Vlingo, can be used to control the device in other ways: in addition to searching, a user can dictate text messages and e-mails, or update his or her status on Facebook with a few simple commands. In the past, the challenge has been adding enough processing power for a cell phone. Now, however, faster data-transfer speeds mean that it's possible to use remote servers to seamlessly handle the number crunching required.

Augmented Reality

An exciting emerging interface is augmented reality, an approach that fuses virtual information with the real world.

The earliest augmented-reality interfaces required complex and bulky motion-sensing and computer-graphics equipment. More recently, cell phones featuring powerful processing chips and sensors have begun to bring the technology within the reach of ordinary users.

Examples of mobile augmented reality include Nokia's Mobile Augmented Reality Application (MARA) and Wikitude, an application developed for Google's Android phone operating system. Both allow a user to view the real world through a camera screen with virtual annotations and tags overlaid on top. With MARA, this virtual data is harvested from the points of interest stored in the NavTeq satellite navigation application. Wikitude, as the name implies, gleans its data from Wikipedia.

These applications work by monitoring data from an arsenal of sensors: GPS receivers provide precise positioning information, digital compasses determine which way the device is pointing, and magnetometers or accelerometers calculate its orientation. A project called Nokia Image Space takes this a step further by allowing people to store experiences--images, video, sounds--in a particular place so that other people can retrieve them at the same spot.
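The way GPS position and compass heading combine to place a tag on the camera view can be sketched in a few lines. This is a simplified illustration, not how MARA or Wikitude are actually implemented: the function names, the 60° field of view, and the 320-pixel screen width are assumptions, and tilt (the accelerometer's job) is ignored.

```python
import math


def bearing_deg(lat1, lon1, lat2, lon2):
    """Initial great-circle bearing from point 1 to point 2,
    in degrees clockwise from north."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dlon = math.radians(lon2 - lon1)
    y = math.sin(dlon) * math.cos(phi2)
    x = (math.cos(phi1) * math.sin(phi2)
         - math.sin(phi1) * math.cos(phi2) * math.cos(dlon))
    return math.degrees(math.atan2(y, x)) % 360.0


def screen_x(heading_deg, target_bearing_deg, fov_deg=60.0, width_px=320):
    """Horizontal pixel position for a tag, or None if the point of
    interest lies outside the camera's horizontal field of view."""
    # Signed angle between where the phone points and where the target is,
    # wrapped into the range [-180, 180).
    offset = (target_bearing_deg - heading_deg + 180.0) % 360.0 - 180.0
    if abs(offset) > fov_deg / 2:
        return None
    return round((offset / fov_deg + 0.5) * width_px)


# Device at (0, 0) facing north; point of interest due north of it.
target = bearing_deg(0.0, 0.0, 1.0, 0.0)
print(screen_x(0.0, target))
```

The wrap-around step matters near north: a heading of 350° and a target bearing of 10° are only 20° apart, not 340°.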

Spatial Interfaces

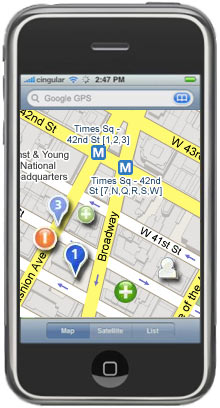

In addition to enabling augmented reality, the GPS receivers now found in many phones can track people geographically. This is spawning a range of new games and applications that let you use your location as a form of input.

Google's Latitude, for example, lets users show their position on a map by installing software on a GPS-enabled cell phone. As of October 2008, some 3,000 iPhone apps were already location aware. One such iPhone application is iNap, which is designed to monitor a person's position and wake her up before she misses her train or bus stop. The idea for it came after Jelle Prins, of Dutch software development company Moop, was worried about missing his stop on the way to the airport. The app can connect to a popular train-scheduling program used in the Netherlands and automatically identify your stops based on your previous travel routines.

SafetyNet, a location-aware application developed for Google's Android platform, lets users define parts of town that they deem to be generally unsafe. If they accidentally wander into one of these no-go areas, the program becomes active and will sound an alarm and automatically call 911 on speakerphone in response to a quick shake.
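Both iNap and SafetyNet come down to the same test: is the phone's current position within some distance of a stored location? A minimal sketch of that test, assuming circular zones and the haversine great-circle distance, follows. The function names and the sample coordinates are hypothetical, and neither app's actual logic is documented here.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two lat/lon points, in metres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlmb = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def inside_zone(position, zone_center, radius_m):
    """True if position (lat, lon) lies within radius_m of zone_center."""
    return haversine_m(*position, *zone_center) <= radius_m


# Hypothetical example: wake the traveller once within 2 km of the stop.
stop = (52.3100, 4.7600)
current = (52.3150, 4.7650)
if inside_zone(current, stop, radius_m=2000):
    print("Wake up, your stop is coming up")
```

For a wake-up alarm the radius would in practice depend on the speed of travel; a fixed 2 km is just a placeholder.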

Brain-Computer Interfaces

Perhaps the ultimate computer interface, and one that remains some way off, is mind control.

Surgical implants or electroencephalogram (EEG) sensors can be used to monitor the brain activity of people with severe forms of paralysis. With training, this technology can allow "locked in" patients to control a computer cursor to spell out messages or steer a wheelchair.

Some companies hope to bring the same kind of brain-computer interface (BCI) technology to the mainstream. Last month, NeuroSky, based in San Jose, CA, announced the launch of its Bluetooth gaming headset designed to monitor simple EEG activity. The idea is that gamers can gain extra powers depending on how calm they are.

Beyond gaming, BCI technology could perhaps be used to help relieve stress and information overload. A BCI project called the Cognitive Cockpit (CogPit) uses EEG information in an attempt to reduce the information overload experienced by jet pilots.

The project, which was formerly funded by the U.S. government's Defense Advanced Research Projects Agency (DARPA), is designed to discern when the pilot is being overloaded and manage the way that information is fed to him. For example, if he is already verbally communicating with base, it may be more appropriate to warn him of an incoming threat using visual means rather than through an audible alert. "By estimating their cognitive state from one moment to the next, we should be able to optimize the flow of information to them," says Blair Dickson, a researcher on the project with U.K. defense-technology company Qinetiq.

Copyright Technology Review 2009.

-----

Personal comment:

A short overview of the "coolest interfaces", past, present, or still emerging (as with augmented reality or brain-computer interfaces).

The Future of Mobile Social Networking

Places to go: In June, the startup Pelago is expected to release a version of Whrrl, its mobile social-mapping software, for the iPhone. Whrrl helps people locate friends or find things to do nearby, and it incorporates recommendations made by others in the user’s social network. The image above is an artist's interpretation of what Whrrl might look like on an iPhone. Credit: Technology Review

One rising company that's hoping for a mention during the Steve Jobs Show is Pelago, a startup that recently garnered $15 million from funders, including Kleiner Perkins Caufield and Byers. Pelago will soon offer a version of its software, called Whrrl, for the iPhone. The software enables something Pelago's chief technology officer, Darren Erik Vengroff, calls social discovery: using the iPhone's map and self-location features, as well as information about the prior activities of the user's friends, Whrrl proposes new places to explore or activities to try.

"If you think about your day-to-day life and how you discover things around you and places to go, to a great extent the source of that information is your friends," Vengroff says. With Whrrl, a user can "look through the eyes of friends and see the places they find compelling." The software begins with the user's position on the iPhone's map and indicates a smattering of nearby establishments. If the user's friends have visited and rated these places, the software indicates that as well. The map also shows the positions of nearby friends who have enabled a feature that lets them be seen by others.

Whrrl may turn out to be the leading edge of a wave of new location-based applications. "I think we're going to see a lot of new players showing up in this space," says Kurt Partridge, a research scientist at the Palo Alto Research Center who works on a similar project called Magitti. "Part of the reason," he says, "is the universal availability of GPS or access to location, which hasn't been available to application writers before." The iPhone and Nokia's N95 phone are two examples of phones that provide location data to computer programmers. Google's forthcoming Android mobile operating system may also help push location-based applications onto the market.

The idea of community-generated reviews is, of course, not new. The popular recommendation service Yelp, for example, is already integrated into Google Maps. And the concept of locating friends using a mobile phone has also been around for years; Loopt, a service that runs on Sprint and Boost Mobile phones, is one of the most common examples. Whrrl, which can also be downloaded onto BlackBerry Pearl, Curve, and Nokia N95 smart phones, is commonly compared to both types of service. But it differs from either in that it combines aspects of both. In addition, Vengroff explains, Whrrl has collected details on establishments in 17 cities, which allows the service to provide fine-tuned local search, letting the user narrow down the hunt for, say, a café to one that has outdoor seating and vegetarian options and is recommended by at least one friend.
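The kind of fine-tuned search Vengroff describes (a café with outdoor seating and vegetarian options, recommended by at least one friend) amounts to combining attribute filters with a social filter. The sketch below is purely illustrative: the `Place` class, its fields, and the sample data are invented for the example and say nothing about how Whrrl stores or queries its data.

```python
from dataclasses import dataclass, field


@dataclass
class Place:
    name: str
    category: str
    features: set = field(default_factory=set)
    recommended_by: set = field(default_factory=set)


def search(places, category, required_features, friends, min_friend_recs=1):
    """Places of the given category that have every required feature and
    are recommended by at least min_friend_recs of the user's friends."""
    return [
        p for p in places
        if p.category == category
        and required_features <= p.features          # subset test
        and len(p.recommended_by & friends) >= min_friend_recs
    ]


# Hypothetical data
places = [
    Place("Cafe A", "cafe", {"outdoor seating", "vegetarian"}, {"ana"}),
    Place("Cafe B", "cafe", {"outdoor seating"}, {"ana", "ben"}),
    Place("Cafe C", "cafe", {"outdoor seating", "vegetarian"}, {"stranger"}),
]
hits = search(places, "cafe", {"outdoor seating", "vegetarian"}, {"ana", "ben"})
print([p.name for p in hits])
```

Only the first café passes all three conditions: the second lacks vegetarian options, and the third is recommended only by someone outside the user's network.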

While the possibilities presented by Whrrl are exciting to many, its mass appeal has yet to be established. First, the location data might not be fine-grained enough to be useful in all cases, so it could lead to false positives. The iPhone relies on data from Skyhook Wireless, a company that uses an enormous database of the locations of Wi-Fi base stations to locate a person within about 30 meters; GPS, however, could do much better. Also, Whrrl is most useful when members of the user's social network actively contribute reviews. This requires that the user's friends have smart phones--and the motivation to critique the places they go.

Still, the biggest obstacle faced by services like Whrrl is privacy concerns. Vengroff points out that users control whom the program lists as their friends, who can read their reviews, and who can see their physical locations. The software also offers a "cloaking" feature that lets a person become completely invisible to his or her entire Whrrl network.

"Generally, if you give people more control, they're more willing to participate," says Tanzeem Choudhury, a professor of computer science at Dartmouth College. However, some people are still concerned about how long the company will store information about its customers' locations. Choudhury says that these first-generation services will likely be used by small groups of early adopters who are more aware than most of potential privacy risks and will push companies to confront them.

Regardless, Choudhury and others are excited about the potential of services such as Whrrl. In the future, she suspects, location-based services will include more predictive features. For instance, instead of explicitly requiring you to write a review, the software might recognize how often you visit a restaurant and infer that it is a favorite. "Eventually, I think that a whole lot of exciting technology will emerge that figures out how to reduce the burden on the user," Choudhury says. "There will always be the case where user input will be important, but when we find the sweet spot, that's when I think it will take off."

Copyright Technology Review 2008.

-----

Personal comment:

The article above dates from June 2008, but Whrrl is still in beta 2.0. Since then, we have of course seen this type of service grow, and this will obviously continue. It's interesting to read the article with some distance already. What seemed in June 2008 like something still to come is now obvious (and was in fact already quite obvious then).

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.