Wednesday, February 10. 2010

If you're at CES and just can't stand wires, be sure to drop by the Haier booth, where the company is showing off its completely wireless HDTV. Employing both the Wireless Electricity technology developed at MIT and Wireless Home Digital Interface (WHDI), this tube can supposedly stream video over 100 feet, but there's no telling if that WiTricity signal will be as far-reaching. All this technology does add a good bit of heft to the panel's profile, so even though you might be avoiding that mess of tangled cables, don't think you're getting off that easy. Video of the wire-free panel is after the break.

Via Engadget

Friday, January 29. 2010

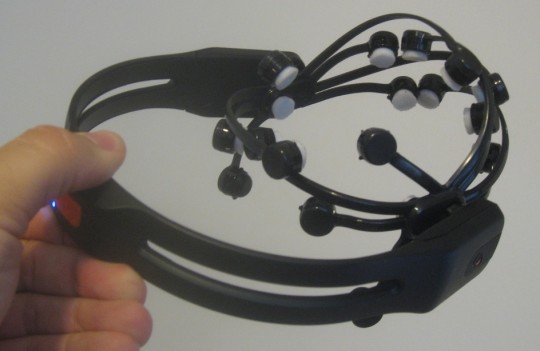

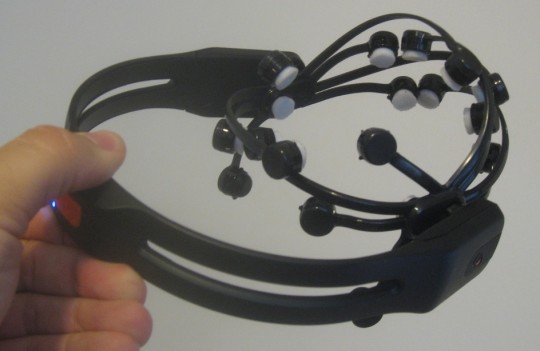

Emotiv’s brain-reading EPOC gaming headset has been floating around for a while now, but it’s only recently that customers finally received their orders. One such early adopter is Rick Dakan over at Joystiq, who slapped down $299 for an EPOC of his own. Unfortunately, while the theory may be great, the implementation is sadly lacking.

From the start, the EPOC is difficult to wear, with sixteen individual head-pads that each need to be separately moistened and slotted into place. Unfortunately, Rick found that donning the headset generally popped a few of those pads off; you also have to factor in the removal process, retrieving each pad and carefully stowing it in its box.

Once you’re actually wearing the EPOC, it doesn’t get much better. Learning how to use it is frustrating, given there’s no indication of what you’re actually doing wrong when it fails to work (or, conversely, what exactly you did right when it responds), and even the three basic games – including Emotipong, shown below – were difficult to control. You can map the commands from Emotiv’s software to general keyboard and mouse shortcuts, but if you can’t even master the up/down movement of Pong then more advanced system navigation seems unduly ambitious. The conclusion? “Seldom has the early adopter tax (one I’ve paid often) felt more onerous,” says Rick.

Via SlashGear

In a week when we are all learning to say iPad, we might also start practicing 'iGlasses'. Apple's got patents on augmented reality goggles:

- Apple See-Through Augmented Reality HMD Glasses

The January issue of Mac Life sports a fauxtograph of possible Apple augmented reality HMD glasses. It’s hard to know how much of this article is based on concept, but Apple working on an AR HMD would be a huge jumpstart to the nascent technology.

In mid-April of 2008, Apple published a patent for a “Head Mounted Display System.” The patent shows screens and fiber optics and vision imaging controls. Would the display use pico projection or utilize OLED displays? Pico displays could be used right now, but OLEDs might be a year out.

Would Apple make HMD goggles for augmented reality? Looking back at this 2006 interview on MacSimumNews, we can see that Steve Jobs was already considering it. The fact that he also denies Apple is looking at an HMD practically guarantees they have something in the works.

Jobs: Yes, you want a nice big screen so that you can see lots of music and you can pick out what you want, versus a tiny little screen. But then again, you want the screen to be small so that you can put it in your pocket. Actually, discovering and buying music on a computer and downloading it to the iPod—in our opinion, that’s one of the geniuses of the iPod. So you can look at changing that—and maybe that will happen over time—but I think the experience you’ll get on a device optimized for putting in your pocket is going to be far less satisfactory than on a personal computer. You may still want to do that [on a small screen] occasionally, but I don’t think it’s ever going to mean that you can not have some other device that is your primary device for buying and cataloguing music.

Swisher: What would solve that? Can it be solved?

Jobs: Rollable screens, goggles you can put on; I don’t know. It’s not on the horizon.

Goggles you can put on, indeed.

-----

Via /Message

Personal comment:

Strike while the iron is hot... To help start the next Apple rumor...

Monday, January 18. 2010

Posted February 24th, 2008 by uh@pachube

Pachube is a web service available at http://www.pachube.com that enables you to store, share & discover realtime sensor, energy and environment data from objects, devices & buildings around the world. Pachube is a convenient, secure & scalable platform that helps you connect to & build the 'internet of things'.

As a generalized realtime data brokerage platform, the key aim is to facilitate interaction between remote environments, both physical and virtual. Apart from enabling direct connections between any two environments, it can also be used to facilitate many-to-many connections: just like a physical "patch bay" (or telephone switchboard) Pachube enables any participating project to "plug-in" to any other participating project in real time so that, for example, buildings, interactive environments, networked energy meters, virtual worlds and mobile sensor devices can all "talk" and "respond" to each other.
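The "patch bay" idea described above can be illustrated with a minimal in-memory sketch. This is not Pachube's actual API: the `PatchBay` class, its `patch` and `publish` methods, and the feed names are all hypothetical, chosen only to show how many-to-many connections between feeds and listeners might work.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Tuple

# A listener receives the feed name and the new value (hypothetical signature).
Listener = Callable[[str, float], None]


class PatchBay:
    """Toy patch bay: any feed can be plugged into any number of listeners."""

    def __init__(self) -> None:
        self._patches: Dict[str, List[Listener]] = defaultdict(list)
        self.latest: Dict[str, float] = {}  # most recent value stored per feed

    def patch(self, feed: str, listener: Listener) -> None:
        """Plug a listener into a feed, like a cable in a switchboard."""
        self._patches[feed].append(listener)

    def publish(self, feed: str, value: float) -> None:
        """Store the new reading and forward it to every patched listener."""
        self.latest[feed] = value
        for listener in self._patches[feed]:
            listener(feed, value)


# Illustrative feeds and listeners (all names are made up for this example).
bay = PatchBay()
log: List[Tuple[str, str, float]] = []

bay.patch("building/energy_meter", lambda f, v: log.append(("virtual_world", f, v)))
bay.patch("building/energy_meter", lambda f, v: log.append(("installation", f, v)))
bay.patch("phone/temperature", lambda f, v: log.append(("installation", f, v)))

bay.publish("building/energy_meter", 3.2)
bay.publish("phone/temperature", 21.5)
```

Here one feed reaches two listeners and one listener hears two feeds, which is the many-to-many pattern the text describes. A real service would add networking, authentication and persistence on top.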


Personal comment:

The Pachube community and environment are growing. Can it become a sort of equivalent to Processing (to which it connects and from which it draws inspiration) for "intelligent" environments and the "internet of things"?

Friday, December 11. 2009

Today, at the International Climate Change Conference (COP15) in Copenhagen, we demonstrated a new technology prototype that enables online, global-scale observation and measurement of changes in the earth's forests. We hope this technology will help stop the destruction of the world's rapidly-disappearing forests.

Emissions from tropical deforestation are comparable to the emissions of all of the European Union, and are greater than those of all cars, trucks, planes, ships and trains worldwide. According to the Stern Review, protecting the world's standing forests is a highly cost-effective way to cut carbon emissions and mitigate climate change.

The United Nations has proposed a framework known as REDD (Reducing Emissions from Deforestation and Forest Degradation in Developing Countries) that would provide financial incentives to rainforest nations to protect their forests, in an effort to make forests worth "more alive than dead." Implementing a global REDD system will require that each nation have the ability to accurately monitor and report the state of their forests over time, in a manner that is independently verifiable. However, many of these tropical nations of the world lack the technological resources to do this, so we're working with scientists, governments and non-profits to change this. Here's what we've done with this prototype to help nations monitor their forests:

Start with satellite imagery

Satellite imagery data can provide the foundation for measurement and monitoring of the world's forests. For example, in Google Earth today, you can fly to Rondonia, Brazil and easily observe the advancement of deforestation over time, from 1975 to 2001:

(Landsat images courtesy USGS)

This type of imagery data — past, present and future — is available all over the globe. Even so, while today you can view deforestation in Google Earth, until now there hasn't been a way to measure it.

Then add science

With this technology, it's now possible for scientists to analyze raw satellite imagery data and extract meaningful information about the world's forests, such as locations and measurements of deforestation or even regeneration of a forest. In developing this prototype, we've collaborated with Greg Asner of the Carnegie Institution for Science, and Carlos Souza of Imazon. Greg and Carlos are both at the cutting edge of forest science and have developed software that creates forest cover and deforestation maps from satellite imagery. Organizations across Latin America use Greg's program, Carnegie Landsat Analysis System (CLASlite), and Carlos' program, Sistema de Alerta de Desmatamento (SAD), to analyze forest cover change. However, widespread use of this analysis has been hampered by lack of access to satellite imagery data and computational resources for processing.
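To give a rough sense of what "measuring" deforestation from imagery can mean, here is a minimal sketch that compares two forest-cover masks of the same area. It is not how CLASlite or SAD work internally; the `change_areas` function and the tiny masks are hypothetical. The only real-world figure used is that a 30 m Landsat pixel covers 900 m², i.e. 0.09 hectares.

```python
from typing import Dict, List

# A mask is a grid of pixels: 1 = classified as forest, 0 = non-forest.
Mask = List[List[int]]


def change_areas(before: Mask, after: Mask, pixel_area_ha: float) -> Dict[str, float]:
    """Count pixels that changed class between two dates and convert to hectares."""
    lost = 0    # forest -> non-forest
    gained = 0  # non-forest -> forest
    for row_before, row_after in zip(before, after):
        for b, a in zip(row_before, row_after):
            if b == 1 and a == 0:
                lost += 1
            elif b == 0 and a == 1:
                gained += 1
    return {
        "lost_pixels": lost,
        "gained_pixels": gained,
        "deforested_ha": lost * pixel_area_ha,
        "regenerated_ha": gained * pixel_area_ha,
    }


# Made-up 3x3 masks for two dates over the same patch of land.
before = [
    [1, 1, 1],
    [1, 1, 0],
    [1, 0, 0],
]
after = [
    [1, 1, 0],
    [1, 0, 0],
    [1, 0, 1],
]

result = change_areas(before, after, pixel_area_ha=0.09)
print(result)
```

The hard part in practice is producing reliable forest/non-forest masks from raw imagery, which is what the scientific software handles; the differencing step itself is simple and is easy to run in parallel across many image tiles.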

Handle computation in the cloud

What if we could offer scientists and tropical nations access to a high-performance satellite imagery-processing engine running online, in the “Google cloud”? And what if we could gather together all of the earth’s raw satellite imagery data — petabytes of historical, present and future data — and make it easily available on this platform? We decided to find out, by working with Greg and Carlos to re-implement their software online, on top of a prototype platform we've built that gives them easy access to terabytes of satellite imagery and thousands of computers in our data centers.

Here are the results of running CLASlite on the satellite imagery sequence shown above:

CLASlite online: This shows deforestation and degradation in Rondonia, Brazil from 1986-2008, with the red indicating recent activity

Here's the result of running SAD in a region of recent deforestation pressure in Mato Grosso, Brazil:

SAD online: The red "hotspots" indicate deforestation that has happened within the last 30 days

Combining science with massive data and technology resources in this way offers the following advantages:

- Unprecedented speed: On a top-of-the-line desktop computer, it can take days or weeks to analyze deforestation over the Amazon. Using our cloud-based computing power, we can reduce that time to seconds. Being able to detect illegal logging activities faster can help support local law enforcement and prevent further deforestation from happening.

- Ease of use and lower costs: An online platform that offers easy access to data, scientific algorithms and computation horsepower from any web browser can dramatically lower the cost and complexity for tropical nations to monitor their forests.

- Security, privacy and transparency: Governments and researchers don't want to share sensitive data and results before they are ready. Our cloud-based platform allows users to control access to their data and results. At the same time, because the data, analysis and results reside online, they can also be easily shared, made available for collaboration, presented to the public and independently verified — when appropriate.

- Climate change impact: We think that a suitably scaled-up and enhanced version of this platform could be promising as a tool for forest monitoring, reporting and verification (MRV) in support of efforts such as REDD.

As a Google.org product, this technology will be provided to the world as a not-for-profit service. This technology prototype is currently available to a small set of partners for testing purposes; it's not yet available to the general public, but we expect to make it more broadly available over the next year. We are grateful to a host of individuals and organizations (find full list here) who have advised us on developing this technology. In particular, we would like to thank the Gordon and Betty Moore Foundation for their close partnership since the inception of this project. We're also working with the Group on Earth Observations (GEO), a consortium of national government bodies, inter-governmental organizations, space agencies and research institutions, through GEO's Forest Carbon Tracking (FCT) task force. Last month we launched the GEO FCT portal together, and we are now exploring how we can bring the power of this new technology to tropical nations.

We're excited to be able to share this early prototype and look forward to seeing what's possible.

Posted by Rebecca Moore, Engineering Manager, Google.org and Dr. Amy Luers, Environment Manager, Google.org

-----

Via The Official Google Blog

Personal comment:

Sustainability, de- or re-forestation quantification, satellite imagery, cloud computing and Google!

Friday, December 04. 2009

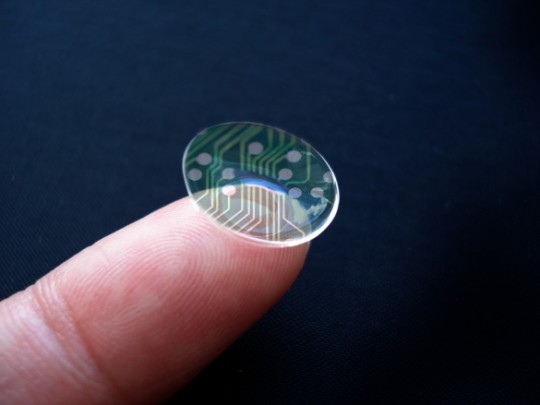

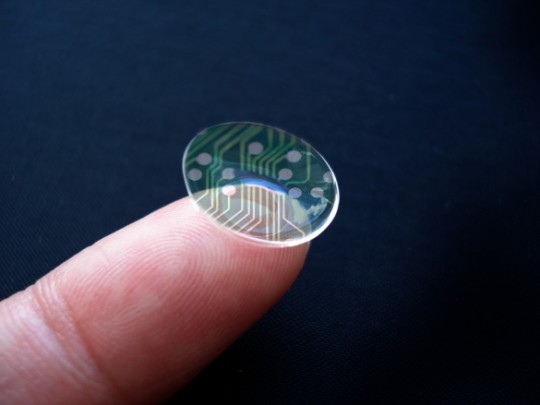

The opportunity to jab yourself in the eye with a tiny computer display is one step closer, thanks to the ongoing work with opto-electronic contact lenses taking place at the University of Washington in Seattle. The lab there has been showing off the latest prototype, the handiwork of Dr. Babak Parviz: a semi-transparent array – including an LED – embedded into a lens that receives 330 microwatts of power wirelessly from a nearby RF transmitter. Parviz has been using the prototypes to display biosensor feedback about the wearer’s vital signs, but they’ll eventually serve as a heads-up display for displaying other data.

The wireless power is picked up by a loop antenna built into the lens, and future iterations of the hardware are expected to integrate the transmitter into a cellphone. There’ll also be many more LEDs involved, so that the resolution is high enough to be useful.

“Conventional contact lenses are polymers formed in specific shapes to correct faulty vision. To turn such a lens into a functional system, we integrate control circuits, communication circuits, and miniature antennas into the lens using custom-built optoelectronic components. Those components will eventually include hundreds of LEDs, which will form images in front of the eye, such as words, charts, and photographs. Much of the hardware is semitransparent so that wearers can navigate their surroundings without crashing into them or becoming disoriented.” (Dr. Parviz, University of Washington in Seattle)

Future plans see the opto-electronic lenses being used for more than just displaying data; they’ll also be able to monitor the eye’s surface chemistry, which would allow wearable computers to keep track of blood sugar levels in diabetics and other information. Parviz’s eventual goal is for the contact lens to become a platform “like the iPhone is today”, with developers creating custom apps. However, that seems to be a long way off.

Via Slashdot

Friday, November 13. 2009

NASA researchers working under the Department of Homeland Security’s Cell-All program have developed a chemical sniffer for the iPhone that can detect low levels of airborne ammonia, chlorine gas and methane. Read more at NASA.

[Image Credit:Dominic Hart/NASA]

What fascinates me about this development is that it integrates an innovative sensing technology with a ubiquitous digital device. Imagine a world where everyone walks around with one of these sensors on their person at all times: the capabilities of human corporeal perception would be enhanced, and the invisible nuances of occupied environments would be made apparent.

-----

Via Jargon, etc.

Tuesday, October 20. 2009

In case you missed it, Sony's got a thing for 3D, with big plans to push the technology into your living room next year. While the first application will be the flat-screen TV, Sony's obviously thinking about other displays, judging by this tiny prototype set for reveal at Tokyo's Digital Content EXPO 2009 on Thursday. The 13 x 27-cm device packs a stereoscopic, 24-bit color image measuring just 96 × 128 pixels, viewable from 360 degrees without special glasses. If the prototype ever hits the assembly line, Sony envisions its commercial use in digital signage or medical imaging -- or as a 3D photo frame, television, house for your virtual pet, or visualizer to assist with web shopping in the home. We'll be on hand for the unveiling on Thursday with live coverage and hands-on; check back then for more.

Via Engadget

Thursday, October 08. 2009

KDDI used the CEATEC conference in Tokyo as a platform to show off its updated prototype methanol fuel cell-powered mobile phone. In addition to the methanol, the fuel cell includes a lithium ion battery to help the unit deal with surges in energy use.

The battery is optimized to offer users 320 hours of power, a significant increase over current standard battery capacities. Of course the main benefit of such a power source is that the methanol can instantly be refilled (as shown in the photo above) rather than waiting for a power outlet to gradually recharge your mobile phone. KDDI hopes to have a sellable version of this technology on the market within the next few years.

Via DVice-IT Media

Personal comment:

The return of a technology that had fallen into disuse before users had even had a real chance to test it.

Now, judging by some of the images, it is clear that marrying a liquid energy source with electronic hardware is not necessarily easy to manage, especially in devices that have to stay within tight size limits.

That said, the principle seems to work, but this solution may well remain viable only in, and confined to, particular and/or extreme conditions of use.

Tuesday, September 29. 2009

A novel nanoscale sensor needs no power source.

By Katherine Bourzac


Stress sensor: This scanning-electron-microscope image shows a stress-triggered transistor in cross section. The zinc oxide nanowire, 25 nanometers in diameter, is embedded in a polymer (black area), leaving the top region free to bend.

Credit: ACS/Nano Letters

Nanoscale sensors have many potential applications, from detecting disease molecules in blood to sensing sound within an artificial ear. But nanosensors typically have to be integrated with bulky power sources and integrated circuits. Now researchers at Georgia Tech have demonstrated a nanoscale sensor that doesn't need these other parts.

The new sensors consist of freestanding nanowires made of zinc oxide. When placed under stress, the nanowires generate an electrical potential, functioning as transistors.

Zhong Lin Wang, professor of materials science at Georgia Tech, has previously used piezoelectric nanowires to make nanogenerators that can harvest biomechanical energy, which he hopes will eventually be used to power portable electronics. Now Wang's group is taking advantage of the semiconducting properties of zinc oxide nanowires: the electrical potential generated when the new nanowires are bent allows them to act as transistors.

The Georgia Tech researchers used a vertical zinc oxide wire 25 nanometers in diameter to make a field-effect transistor. The nanowire is partially embedded in a substrate and connected at the root to gold electrodes that act as the source and the drain. When the wire is bent, the mechanical stress concentrates at the root, and charges build up. This creates an electrical potential that acts as a gate voltage, allowing electrical current to flow from source to drain, turning the device on. Wang's group has tested various triggers, including using a nanoscale probe to nudge the wire, and blowing gas over it.

Wang's group is "unique in using nanostructures to make something like this," says Liwei Lin, codirector of the University of California, Berkeley Sensor and Actuator Center. Nanowire sensors could be used for high-end sensing devices such as fingerprint scanners, Lin suggests.

Previous nanowire sensors have been tethered at both ends, limiting their range of motion. Wang says that the freestanding nanowires resemble the sensing hairs of the ear. If grouped into arrays of different lengths, each responsive to a different frequency of sound, the nanowires could potentially lead to battery-free hearing aids, he says.

The next step is to make arrays of the devices. "This is challenging because you have to make the electrical contact reliable, but we will be able to do that," says Wang.

Copyright Technology Review 2009.

-----

Via MIT Technology Review
