Friday, July 25. 2014

Algorithmic creationism | #code #games

This algorithmic creationism game reminds me, to some extent, of the research led by philosophers, mathematicians, and physicists to prove that our own everyday world is (or is not) the result of an extra-large simulation... Yet, funnily, even though this game world is announced as "algorithmically generated", planets populated by dinosaurs or similar creatures are still present, as is the dark emperor's cosmic fleet! There's probably some commercial determinism within their creationism rules...

At some point though, we could ask the following question: what is the fundamental difference between using algorithms to carve a digital world for a game (a computer-generated simulation) and the practice of the many contemporary architects who use similar (generative) algorithms to carve physical buildings to live in, not to mention all the other algorithms that structure our everyday life? If it isn't being done by somebody else, we are creating our own simulation, so to speak.

-----

No Man’s Sky: A Vast Game Crafted by Algorithms

A new computer game, No Man’s Sky, demonstrates a new way to build computer games filled with diverse flora and fauna.

By Simon Parkin

The quality of the light on any one particular planet will depend on the color of its solar system’s sun.

Sean Murray, one of the creators of the computer game No Man’s Sky, can’t guarantee that the virtual universe he is building is infinite, but he’s certain that, if it isn’t, nobody will ever find out. “If you were to visit one virtual planet every second,” he says, “then our own sun will have died before you’d have seen them all.”

No Man’s Sky is a video game quite unlike any other. Developed for Sony’s PlayStation 4 by an improbably small team (the original four-person crew has grown only to 10 in recent months) at Hello Games, an independent studio in the south of England, it’s a game that presents a traversable universe in which every rock, flower, tree, creature, and planet has been “procedurally generated” to create a vast and diverse play area.

“We are attempting to do things that haven’t been done before,” says Murray. “No game has made it possible to fly down to a planet, and for it to be planet-sized, and feature life, ecology, lakes, caves, waterfalls, and canyons, then seamlessly fly up through the stratosphere and take to space again. It’s a tremendous challenge.”

Procedural generation, whereby a game’s landscape is generated not by an artist’s pen but an algorithm, is increasingly prevalent in video games. Most famously Minecraft creates a unique world for each of its players, randomly arranging rocks and lakes from a limited palette of bricks whenever someone begins a new game (see “The Secret to a Video Game Phenomenon”). But No Man’s Sky is far more complex and sophisticated. The tens of millions of planets that comprise the universe are all unique. Each is generated when a player discovers it, and is subject to the laws of its respective solar systems and vulnerable to natural erosion. The multitude of creatures that inhabit the universe dynamically breed and genetically mutate as time progresses. This is virtual world building on an unprecedented scale (see video below).
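The article does not say how Hello Games generates a planet at the moment it is discovered. One common approach, sketched here purely as an assumption, is to derive a deterministic seed from the planet's coordinates, so that nothing has to be stored and every visitor still sees the same planet. The names `UNIVERSE_SEED`, `planet_seed`, and `generate_planet`, as well as the planet attributes, are hypothetical.

```python
import hashlib
import random

UNIVERSE_SEED = 2014  # hypothetical global seed, not taken from the article


def planet_seed(x: int, y: int, z: int) -> int:
    """Derive a stable integer seed from integer coordinates."""
    key = f"{UNIVERSE_SEED}:{x}:{y}:{z}".encode("utf-8")
    digest = hashlib.sha256(key).digest()
    return int.from_bytes(digest[:8], "big")


def generate_planet(x: int, y: int, z: int) -> dict:
    """Build a planet on demand; the same coordinates always give the same planet."""
    rng = random.Random(planet_seed(x, y, z))
    return {
        "radius_km": rng.randint(1_000, 10_000),  # illustrative attribute
        "terrain_roughness": rng.random(),        # illustrative attribute
        "species_count": rng.randint(0, 50),      # illustrative attribute
    }


# Visiting the same coordinates twice yields an identical planet.
first_visit = generate_planet(12, -7, 3)
second_visit = generate_planet(12, -7, 3)
```

Because the planet is a pure function of its coordinates, the universe can be far larger than anything that could be stored ahead of time.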

This presents numerous technological challenges, not least of which is how to test a universe of such scale during its development. The team is currently using virtual testers: automated bots that wander around taking screenshots, which are then sent back to the team for viewing. Additionally, while No Man’s Sky might have an infinite-sized universe, there aren’t an infinite number of players. To avoid the problem of a kind of virtual loneliness, where a player might never encounter another person on his or her travels, the game starts every new player in the same galaxy (albeit on his or her own planet) with a shared initial goal of traveling to its center. Later in the game, players can meet up, fight, trade, mine, and explore. “Ultimately we don’t know whether people will work, congregate, or disperse,” Murray says. “I know players don’t like to be told that we don’t know what will happen, but that’s what is exciting to us: the game is a vast experiment.”

The game also bears the weight of unrivaled expectation. At the E3 video game conference in Los Angeles in June, no other game met with such applause. It is the game of many childhood science fiction dreams. For Murray, that is truer than for most. He was born in Ireland, but the family lived on a farm in the Australian outback, away from civilization. “At night you could see the vastness of space,” he says. “Meanwhile, we were responsible for our own electricity and survival. We were completely cut off. It had an impact on me that I carry through life.”

Murray formed Hello Games in 2009 with three friends, all of whom had previously worked at major studios. Hello Games’ first title, Joe Danger, let players control a stuntman. The game was, according to Murray, “annoyingly successful” in the sense that it locked him and his friends into a cycle of sequels that they had formed the company to escape. During the next few years the team made four Joe Danger games for seven different platforms. “Then I had a midlife game development crisis,” says Murray. “It changes your mindset when a single game’s development represents a significant chunk of life.”

Murray decided it was time to embark upon the game he’d imagined as a child, a game about frontiership and existence on the edge of the unexplored. “We talked about the feeling of landing on a planet and effectively being the first person to discover it, not knowing what was out there,” he says. “In this era in which footage of every game is recorded and uploaded to YouTube, we wanted a game where, even if you watched every video, it still wouldn’t be spoiled for you.”

When players discover a new planet, climb that planet’s tallest peak, or identify a new species of plant or animal, they are able to upload the discovery to the game’s servers, their name forever associated with the location, like a digital Christopher Columbus or Neil Armstrong. “Players will even be able to mark the planet as toxic or radioactive, or indicate what kind of life is there, and then that appears on everyone’s map,” says Murray.

Experimentation has been a watchword throughout the game’s production. Originally the game was entirely randomly generated. “Only around 1 percent of the time would it create something that looked natural, interesting, and pleasing to the eye; the rest of the time it was a mess and, in some cases where the sky, the water, and the terrain were all the same color, unplayable,” Murray says. So the team began to create simple rules, “such as the distance from a sun at which it is likely that there will be moisture,” he explains. “From that we decide there will be rivers, lakes, erosion, and weather, all of which is dependent on what the liquid is made from. The color of the water in the atmosphere will derive from what the liquid is; we model the refractions to give you a modeled atmosphere.”

Similarly, the quality of light will depend on whether the solar system has a yellow sun or, for example, a red giant or red dwarf. “These are simple rules, but combined they produce something that seems natural, recognizable to our eyes. We have come from a place where everything was random and messy to something which is procedural and emergent, but still pleasingly chaotic in the mathematical sense. Things happen with cause and effect, but they are unpredictable for us.”
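The move from pure randomness to simple rules can be illustrated with a small sketch. It is not Hello Games' code: the distance band, the tint table, the list of liquids, and the function name `build_planet` are all invented for illustration. Only the two rules themselves (distance from the sun decides whether there is moisture, and the type of sun decides the light) come from the article.

```python
import random

# Hypothetical star classes and the light tint each one gives its planets.
STAR_TINT = {
    "yellow sun": "warm white",
    "red giant": "deep orange",
    "red dwarf": "dim red",
}

# Hypothetical band of orbital distances (arbitrary units) where liquid can exist.
MOIST_BAND = (0.8, 1.6)


def build_planet(star: str, distance: float, rng: random.Random) -> dict:
    """Apply simple cause-and-effect rules, leaving only the details to chance."""
    has_liquid = MOIST_BAND[0] <= distance <= MOIST_BAND[1]
    planet = {
        "light": STAR_TINT[star],   # rule: light quality follows the star type
        "has_liquid": has_liquid,   # rule: moisture follows distance from the sun
        "features": [],
    }
    if has_liquid:
        # Random choice, but only within what the rules allow.
        planet["liquid"] = rng.choice(["water", "methane", "ammonia"])
        planet["features"] = ["rivers", "lakes", "erosion", "weather"]
    return planet


temperate = build_planet("red dwarf", 1.0, random.Random(0))
scorched = build_planet("yellow sun", 5.0, random.Random(0))
```

Here randomness only picks among options the rules have already made plausible, which is one way to read Murray's description of a world that is procedural and emergent rather than random and messy.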

At the blockbuster studios in which he once worked, 300-person teams would have to build content from scratch. Now, thanks to the increased power of PCs and video game consoles, a relatively tiny team is able to create unimaginable scope. In this sense, Hello Games may be on the cusp not only of a new universe, but also of an entirely new way of creating games. “When I look at game development in general I think the cost of creating content is the real problem,” he says. “The sheer amount of assets that artists must build to furnish a world is what forces so many safe creative bets. Likewise, you can’t have 300 people working experimentally. Game development is often more like building a skyscraper that has form and definition but is ultimately quite similar to what is around it. It never sat right with me to be in a huge warehouse with hundreds of people making a game. That is not the way it should be—and now it doesn’t have to be.”


Wednesday, July 23. 2014

Force Illusions | #perception

-----

Could “Force Illusions” Help Wearables Catch On?

By John Pavlus

What if the compass app in your phone didn’t just visually point north but actually seemed to pull your hand in that direction?

Two Japanese researchers will present tiny handheld devices that generate this kind of illusion at next month’s annual SIGGRAPH technology conference in Vancouver, British Columbia. The “force display” devices, called Traxion and Buru-Navi3, exploit the fact that a vibrating object is perceived as either pulling or pushing when held. The effect could be applied in navigation and gaming applications, and it suggests possibilities in mobile and wearable technology as well.

Tomohiro Amemiya, a cognitive scientist at NTT Communication Science Laboratories, began the Buru-Navi project in 2004, originally as a way to research how the brain handles sensory illusions. His initial prototype was roughly the size of a paperback novel and contained a crankshaft mechanism to generate vibration, similar to the motion of a locomotive wheel. Amemiya discovered that when the vibrations occurred asymmetrically at a frequency of 10 hertz (with the crankshaft accelerating sharply in one direction and then easing back more slowly), a distinctive pulling sensation emerged in the direction of the acceleration.

With his collaborator Hiroaki Gomi, Amemiya continued to modify and miniaturize the device into its current form, which is about the size of a wine cork and relies on a 40-hertz electromagnetic actuator similar to those found in smartphones. When pinched between the thumb and forefinger, Buru-Navi3 creates a continuous force illusion in one direction (toward or away from the user, depending on the device’s orientation).

The second device, called Traxion, was developed within the last year at the University of Tokyo by a team led by computer science researcher Jun Rekimoto. Traxion also generates a force illusion via an asymmetrically vibrating actuator held between the fingers. “We tested many users, and they said that it feels as if there’s some invisible string pulling or pushing the device,” Rekimoto says. “It’s a strong sensation of force.”

Both devices create a pulling force significant enough to guide a blindfolded user along a path or around corners. This way-finding application might be a perfect fit for the smart watches that Samsung, Google, and perhaps Apple are mobilizing to sell.

Haptics, which is the name for the technology behind tactile interfaces, has been explored for years in limited or niche applications. But Vincent Hayward, who researches haptics at the Pierre and Marie Curie University in Paris, says the technology is now “reaching a critical mass.” He adds, “Enough people are trying a sufficient number of ideas that the balance between novelty and utility starts shifting.”

Nonetheless, harnessing these kinesthetic effects for mainstream use is easier said than done. Amemiya admits that while his device generates strong force illusions while being pinched between a finger and thumb, the effect becomes much weaker if the device is merely placed in contact with the skin (as it would be in a watch).

The rise of even crude haptic wearable devices could accelerate this kind of scientific research, though. “A wearable system is always on, so it records data constantly,” Amemiya explains. “This can be very useful for understanding human perception.”
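The asymmetric vibration described in the article (a sharp acceleration one way, a slower return the other way) can be pictured with a toy waveform. The sketch below is an assumption-laden illustration, not the researchers' drive signal: the 100 samples per cycle, the 20 percent push phase, the 4:1 amplitude ratio, and the function name `asymmetric_cycle` are all invented.

```python
def asymmetric_cycle(samples: int = 100, push_fraction: float = 0.2,
                     peak: float = 4.0) -> list:
    """One cycle of acceleration: a short strong phase, then a long weak return."""
    n_push = round(samples * push_fraction)
    n_return = samples - n_push
    # Pick the return level so the accelerations cancel over a full cycle,
    # meaning the device itself does not drift anywhere.
    return_level = -peak * n_push / n_return
    return [peak] * n_push + [return_level] * n_return


cycle = asymmetric_cycle()
net = sum(cycle)                      # 0.0: no net acceleration over one cycle
asymmetry = max(cycle) / -min(cycle)  # 4.0: strong phase vs. weak phase
```

The point of the toy model is only that the physical motion averages out to nothing, while the two phases differ sharply in strength; the article reports that users perceive a pull in the direction of the sharp acceleration.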


Monday, July 14. 2014

Apple's lost future: phone, tablet, and laptop prototypes of the 80s | #design #curiosities

Note: it looks like many of the products we use today were envisioned a long time ago (peak of expectations vs. plateau)... back in the early years of personal computing (the 1980s). Funnily, it almost looks like a lost utopian future. Now that we are moving from personal computing to (personal) cloud computing (where "personal" must be put in brackets, yet should necessarily remain a goal), we can possibly see how far personal computing was a utopian move rooted in the protest and experimental ideologies of the late 1960s and 1970s. So was the Internet in the mid-1990s. And now, what?

Via The Verge

-----

By Jacob Kastrenakes

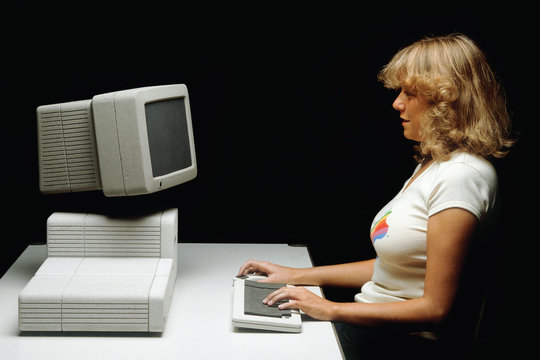

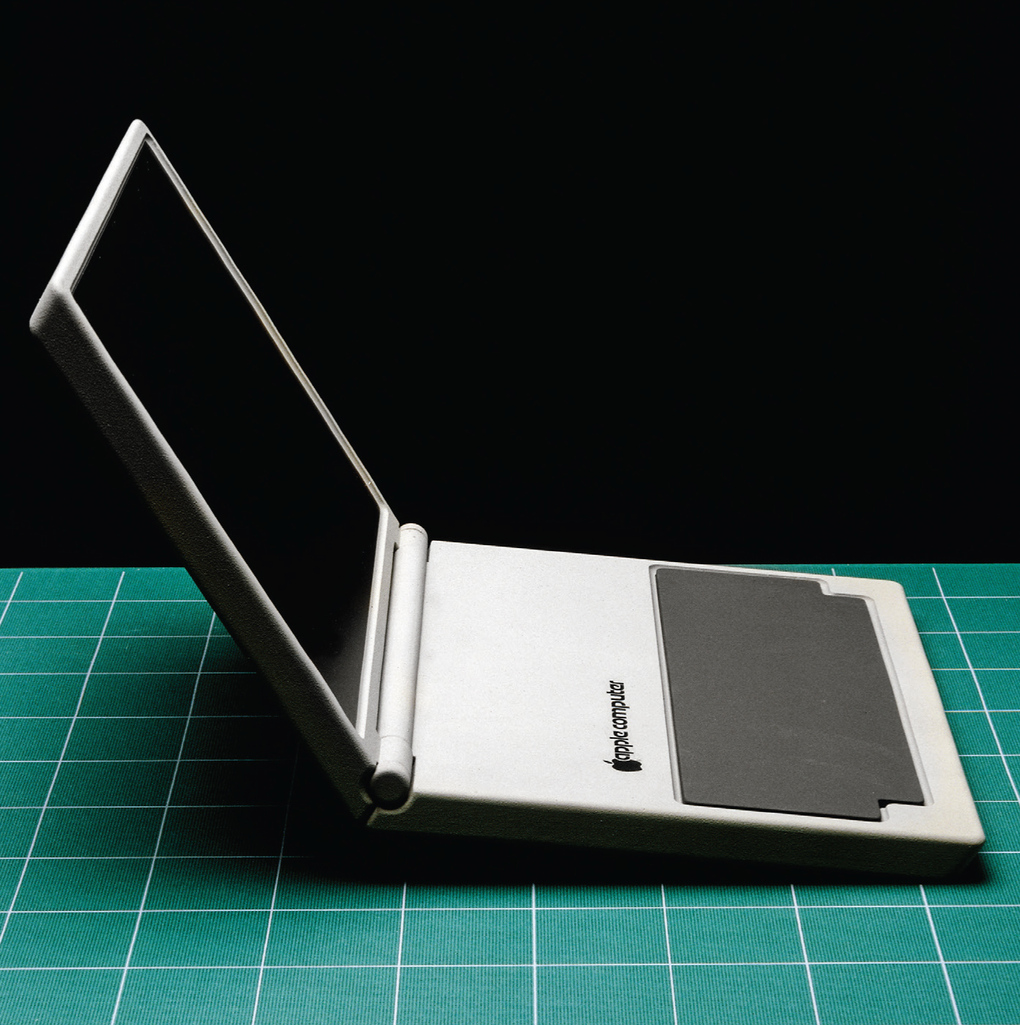

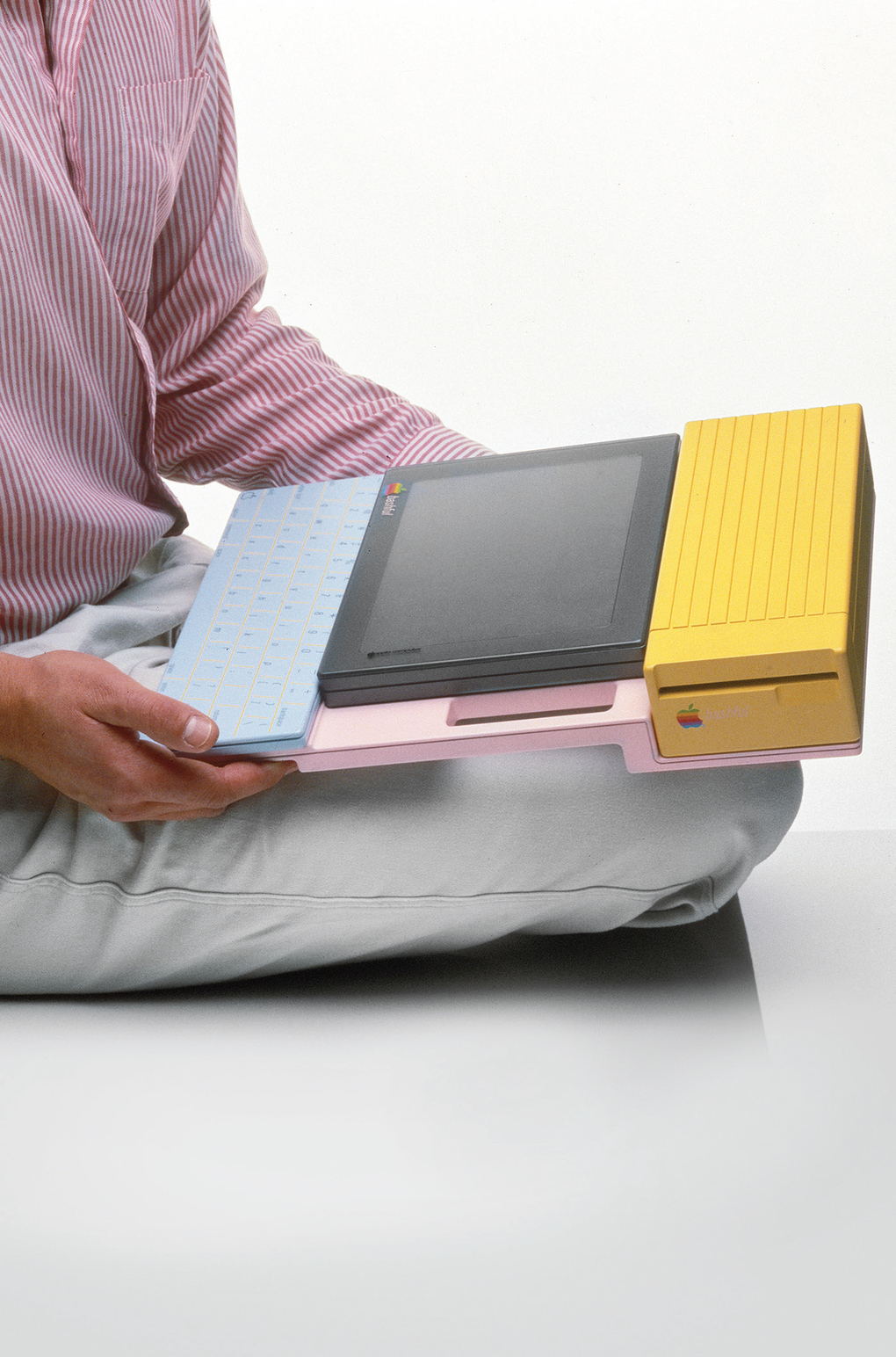

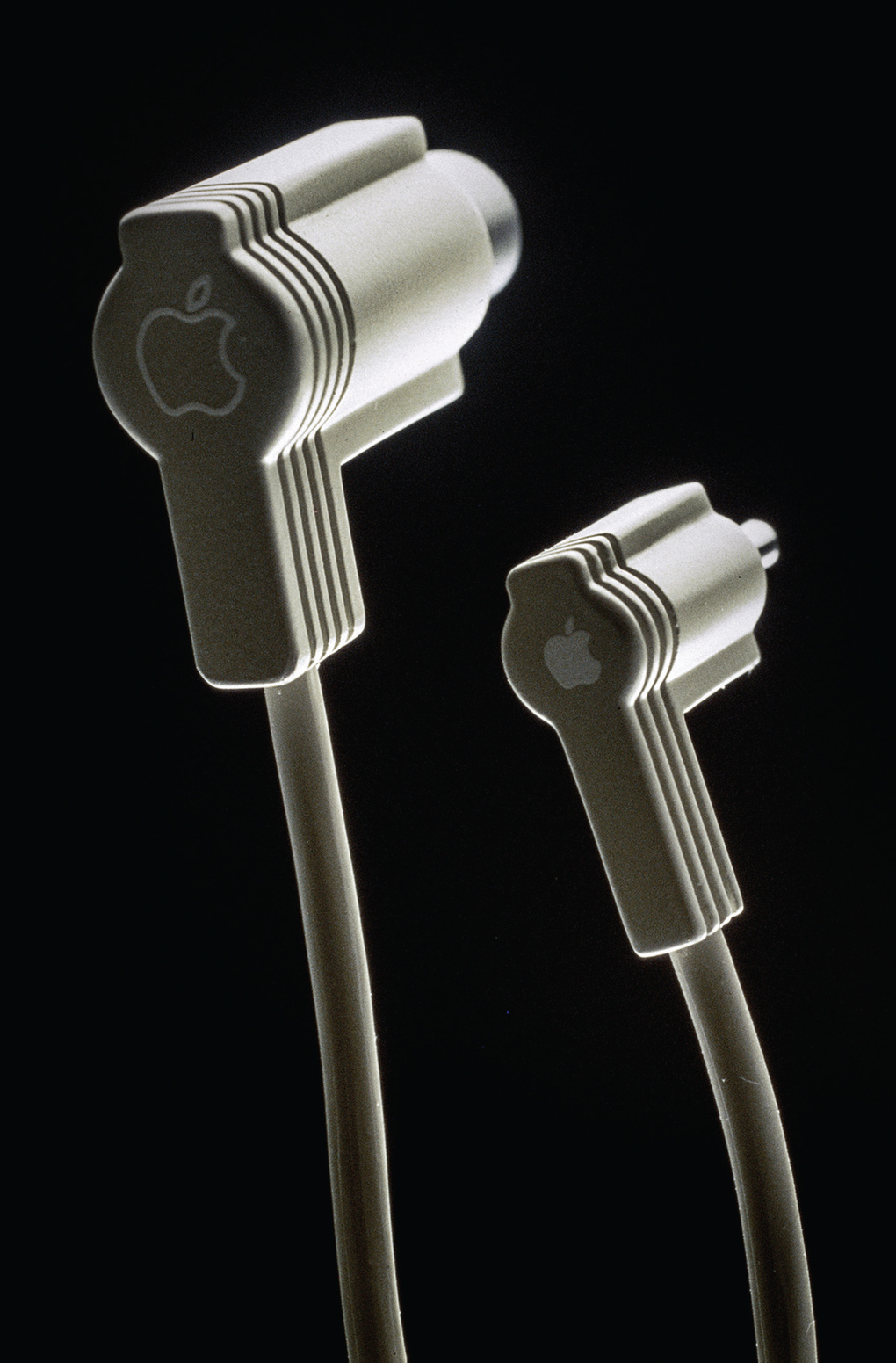

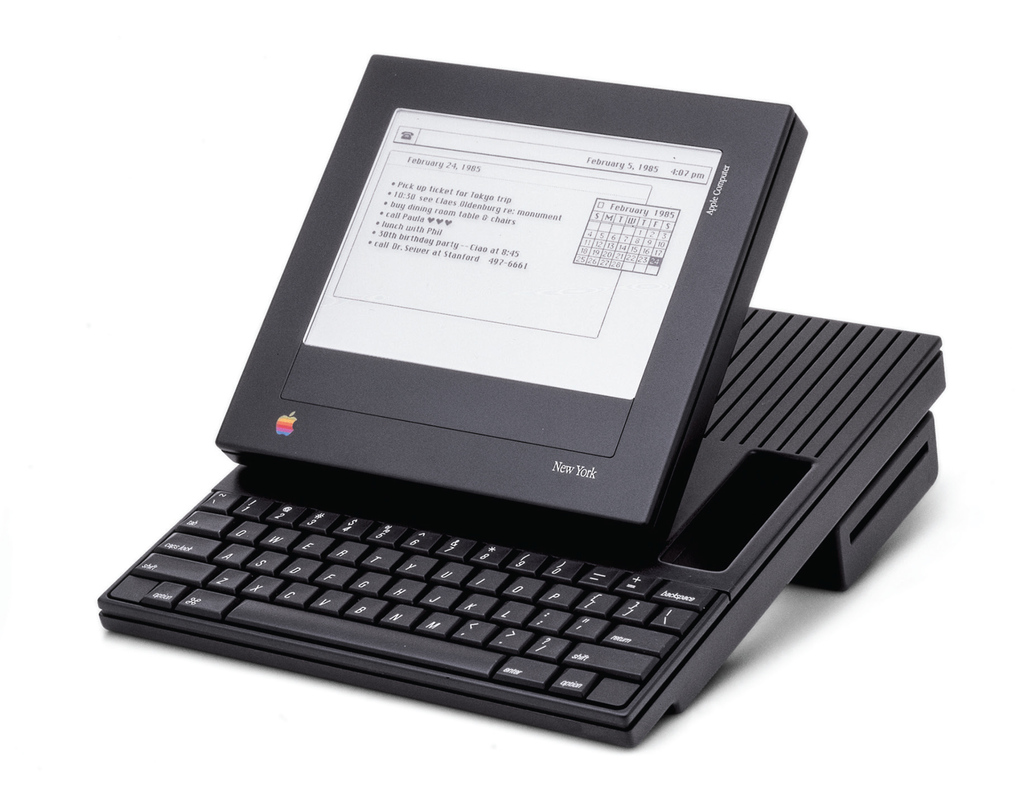

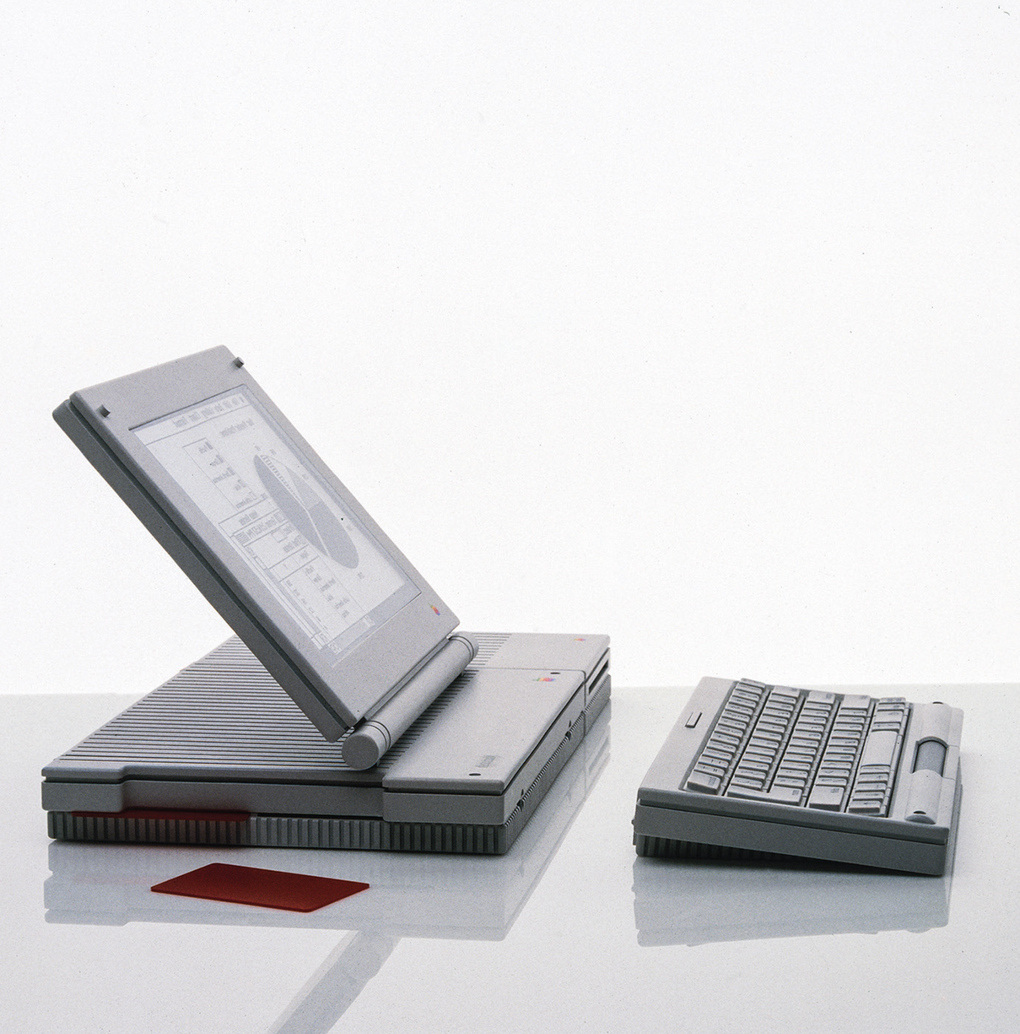

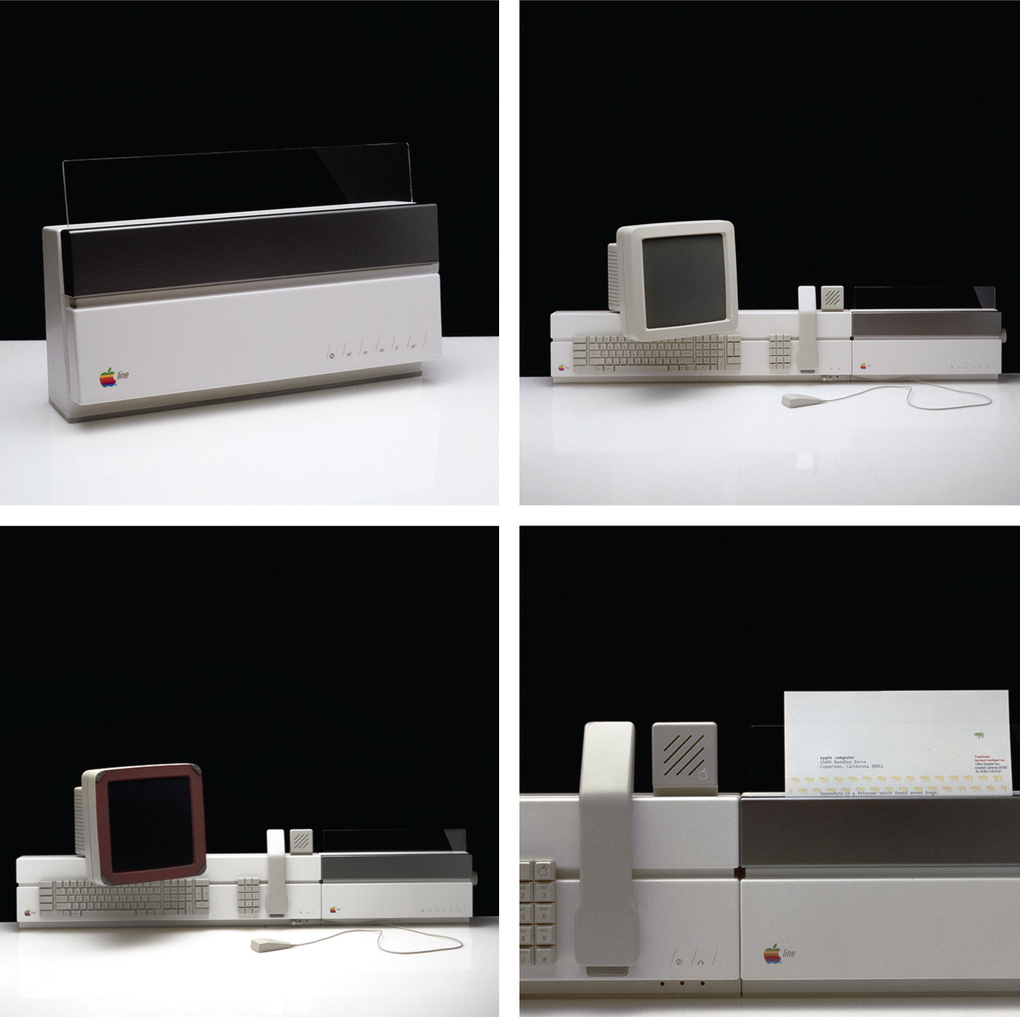

Apple's focus on design has long been one of the key factors that set its computers apart. Some of its earliest and most iconic designs, however, didn't actually come from inside of Apple, but from outside designers at Frog. In particular, credit goes to Frog's founder, Hartmut Esslinger, who was responsible for the "Snow White" design language that had Apple computers of the ’80s colored all white and covered in long stripes and rounded corners meant to make the machines appear smaller.

In fact, Esslinger goes so far as to say in his recent book, Keep it Simple, that he was the one who taught Steve Jobs to put design first. First published late last year, the book recounts Esslinger's famous collaboration with Jobs, and it includes amazing photos of some of the many, many prototypes to come out of it. They're incredibly wide ranging, from familiar-looking computers to bizarre tablets to an early phone and even a watch, of sorts.

This is far from the first time that Esslinger has shared early concepts from Apple, but these show not only a variety of styles for computers but also a variety of forms for them. Some of the mockups still look sleek and stylish today, but few resemble the reality of the tablets, laptops, and phones that Apple would actually come to make two decades later, after Jobs' return. You can see more than a dozen of these early concepts below, and even more are on display in Esslinger's book.

All images reproduced with permission of Arnoldsche Art Publishers.


Thursday, July 03. 2014

The Emerging Threat from Twitter's Social Capitalists | #social #networks

Note: I'm happy to learn that I'm not a "social capitalist"! I am not a "regular capitalist" either...

Via MIT Technology

-----

Social capitalists on Twitter are inadvertently ruining the network for ordinary users, say network scientists.

A couple of years ago, network scientists began to study the phenomenon of “link farming” on Twitter and other social networks. This is the process in which spammers gather as many links or followers as possible to help spread their messages.

What these researchers discovered on Twitter was curious. They found that link farming was common among spammers. However, most of the people who followed the spam accounts came from a relatively small pool of human users on Twitter.

These people turn out to be individuals who are themselves trying to amass social capital by gathering as many followers as possible. The researchers called these people social capitalists.

That raises an interesting question: how do social capitalists emerge and what kind of influence do they have on the network? Today we get an answer of sorts, thanks to the work of Vincent Labatut at Galatasaray University in Turkey and a couple of pals who have carried out the first detailed study of social capitalists and how they behave.

These guys say that social capitalists fall into at least two different categories that reflect their success and the roles they play in linking together diverse communities. But they warn that social capitalists have a dark side too.

First, a bit of background. Twitter has around 600 million users who send 60 million tweets every day. On average, each Twitter user has around 200 followers and follows a similar number, creating a dynamic social network in which messages percolate through the network of links.

Many of these people use Twitter to connect with friends, family, news organizations, and so on. But a few, the social capitalists, use the network purely to maximize their own number of followers.

Social capitalists essentially rely on two kinds of reciprocity to amass followers. The first is to reassure other users that if they follow this user, then he or she will follow them back, a process called Follow Me and I Follow You or FMIFY. The second is to follow anybody and hope they follow back, a process called I Follow You, Follow Me or IFYFM.

This process takes place regardless of the content of messages, which is how they get mixed up with spammers, a point that turns out to be significant later.

Clearly, social capitalists are different from Twitter users who choose to follow people based on the content they tweet. The question that Labatut and co set out to answer is how to automatically identify social capitalists in Twitter and to work out how they sit within the Twitter network.

A clear feature of the reciprocity mechanism is that there will be a large overlap between the friends and followers of social capitalists. It’s possible to measure this overlap and categorize users accordingly. Social capitalists tend to have an overlap much closer to 100 percent than ordinary users.

Having identified social capitalists, another important measure is the ratio of friends to followers. Labatut and co say that those using the FMIFY strategy have a ratio smaller than 1 (because they have more followers than friends), while those using IFYFM have a ratio greater than 1 (because they follow more accounts than follow them back).

One final way to categorize them is by their level of success. Here, Labatut and others set an arbitrary threshold of 10,000 followers. Social capitalists with more than this are obviously more successful than those with less.
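The three measures above (overlap, friends-to-followers ratio, and the 10,000-follower threshold) can be combined into a small classifier. This is a minimal sketch under stated assumptions, not the researchers' method: the overlap formula (shared accounts divided by the smaller of the two sets), the cut-off `overlap_threshold=0.75`, and the names `User`, `overlap`, and `classify` are all hypothetical. Only the ratio rule and the 10,000 threshold come from the article.

```python
from dataclasses import dataclass


@dataclass
class User:
    friends: set    # accounts this user follows
    followers: set  # accounts that follow this user


def overlap(user: User) -> float:
    """Share of the smaller set that also appears in the other (assumed definition)."""
    smaller = min(len(user.friends), len(user.followers))
    if smaller == 0:
        return 0.0
    return len(user.friends & user.followers) / smaller


def classify(user: User, overlap_threshold: float = 0.75,
             success_threshold: int = 10_000) -> str:
    """Label a user with the article's categories; thresholds are illustrative."""
    if not user.followers or overlap(user) < overlap_threshold:
        return "ordinary user"
    ratio = len(user.friends) / len(user.followers)
    # The article does not say how a ratio of exactly 1 is treated;
    # this sketch arbitrarily files it under IFYFM.
    strategy = "FMIFY" if ratio < 1 else "IFYFM"
    level = "high" if len(user.followers) > success_threshold else "low"
    return f"{strategy} social capitalist ({level} success)"


# A user followed by 100 accounts who follows 90 of them back.
example = User(friends=set(range(90)), followers=set(range(100)))
label = classify(example)
```

With these invented numbers, `example` has full overlap and a ratio of 0.9, so it lands in the FMIFY group below the success threshold.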

To study these groups, Labatut and co analyze an anonymized dataset of 55 million Twitter users with two billion links between them. And they find some 160,000 users who fit the description of social capitalist.

In particular, the team is interested in how social capitalists are linked to communities within Twitter, that is groups of users who are more strongly interlinked than average.

It turns out that there is a surprisingly large variety of social capitalists playing different roles. “We find out the different kinds of social capitalists occupy very specific roles,” say Labatut and co.

For example, social capitalists with fewer than 10,000 followers tend not to have large numbers of links within a single community but links to lots of different communities. By contrast, those with more than 10,000 followers can have a strong presence in single communities as well as link disparate communities together. In both cases, social capitalists are significant because their messages travel widely across the entire Twitter network.

That has important consequences for the Twitter network. Labatut and co say there is a clear dark side to the role of social capitalists. “Because of this lack of interest in the content produced by the users they follow, social capitalists are not healthy for a service such as Twitter,” they say.

That’s because they provide an indiscriminate conduit for spammers to peddle their wares. “[Social capitalists’] behavior helps spammers gain influence, and more generally makes the task of finding relevant information harder for regular users,” say Labatut and co.

That’s an interesting insight that raises a tricky question for Twitter and other social networks. Finding social capitalists should be straightforward now that Labatut and others have found a way to spot them automatically. But if social capitalists are detrimental, should their activities be restricted?

Ref:

http://arxiv.org/abs/1406.6611 : Identifying the Community Roles of Social Capitalists in the Twitter Network.

http://www.mpi-sws.org/~farshad/TwitterLinkfarming.pdf : Understanding and Combating Link Farming in the Twitter Social Network

Wednesday, July 02. 2014

Smart Home Devices Need to Get a Lot Smarter | #smart?

Note: this sounds like a boring "smart house" digital future (of household appliances: the famous "smart washing machine" might finally, and sadly, indeed be connected, but it will also certainly require a Google login and password...). Surprisingly (or, let's rather say, predictably), it is not an architecture magazine that discusses the stakes of this near future, but a technology one.

-----

Imagine a dishwasher that requires a username and password. Smart homes will require unprecedented effort to ensure not just security but also usability.

The battle between Google and Apple is moving from smart phones to smart things, with both companies vying to provide the underlying architecture that networks your appliances, utilities, and entertainment equipment. Earlier in June, at its annual developer conference, Apple announced HomeKit, a new software framework for communications between home devices and Apple’s devices. Meanwhile, Nest, a maker of smart thermostats and smoke alarms that was bought by Google earlier this year for $3.2 billion, recently launched a similar endeavor with software that lets developers build apps for its products and those from several other companies.

Indeed, a quick look at the “Works with Nest” website reveals just how interconnected our future is about to become, with smart cars telling our smart thermostats when we’ll be home, smart dryers keeping our clothes “fresh and wrinkle-free” until we arrive, and household lights that flash red when the Nest detector senses smoke or carbon monoxide.

In fact, though, many of us are already living amongst an Internet of (some) things. We have desktops, laptops, cell phones, streaming devices like Apple TV and Roku boxes, and even smart televisions. It’s just that these systems have barely begun to work together properly, and therein lies the problem.

The visions of Google and Apple will require a lot more than new frameworks and developer conferences to be truly transformative. They will require heretofore-unseen levels of reliability, security, and usability. Otherwise we’re in for a frustrating and possibly dangerous networked future.

Wi-Fi is a key enabler of the networked home. But while Wi-Fi is now present in more than 61 percent of U.S. households, many homes have incomplete coverage, and when Wi-Fi doesn’t work, debugging is difficult. It will need to be dramatically more reliable than today to support the networked future.

Broadband Internet will need to be more reliable as well—as reliable as electric service is today. For many this may mean cable modems that can fall back to some kind of wireless 4G service, perhaps from a different provider. These modems will need to be dramatically easier to install and maintain than today’s.

We will also need improved debugging systems for when the Internet doesn’t work as it should. Today the primary recourse when your Internet is down is to reboot the cable modem, the laptop, or the smart TV—or even all three! And perhaps the problem wasn’t even in the house. To legitimately be considered smart, smart devices must assess what’s wrong with the connection, and then help fix it.

Connecting anything to a secure home Wi-Fi network is a challenge for many. And some devices need additional authentication information, such as an Apple or Google username and password. When passwords change, the smart objects need to get the new passwords, or they cease to work.

This approach of binding our smart devices to our personal accounts may be an easy engineering decision today, but it will make less sense as more devices show up in households with multiple family members. Families shouldn’t be forced to decide if the dishwasher is bound to Mom’s Gmail account or Dad’s. Instead, the household should have its own identity, with different family members having different levels of access depending on their needs.

Differential access will also be critical for the wide range of formal and informal arrangements that many households require. Think about babysitters, housecleaners, maintenance workers, and building superintendents. If these people need some way to interact with your smart devices, there should be some way to give them that access without sharing your username and password. And there should be some way to review their actions after the fact. And all of this delegation and auditing will need to be easy to configure and use without reading a manual or watching a video.
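The idea of a household identity with per-person access levels, delegation, and after-the-fact review can be sketched as a small data model. Nothing here reflects HomeKit or the Nest developer program: the roles, the permission names, and the `Household` class are hypothetical, chosen only to make the article's proposal concrete.

```python
from dataclasses import dataclass, field

# Hypothetical roles and the actions each may perform on household devices.
ROLE_PERMISSIONS = {
    "owner": {"view", "operate", "configure", "grant"},
    "family": {"view", "operate"},
    "guest": {"view"},
}


@dataclass
class Household:
    name: str
    members: dict = field(default_factory=dict)    # person -> role
    audit_log: list = field(default_factory=list)  # (person, device, action, allowed)

    def grant(self, granter: str, person: str, role: str) -> bool:
        """Let someone holding the 'grant' permission assign a role to a person."""
        allowed = "grant" in ROLE_PERMISSIONS.get(self.members.get(granter), set())
        if allowed:
            self.members[person] = role
        self.audit_log.append((granter, "household", f"grant {role} to {person}", allowed))
        return allowed

    def request(self, person: str, device: str, action: str) -> bool:
        """Check a request against the person's role and record it for later review."""
        allowed = action in ROLE_PERMISSIONS.get(self.members.get(person), set())
        self.audit_log.append((person, device, action, allowed))
        return allowed


home = Household("Example home", members={"mom": "owner"})
home.grant("mom", "babysitter", "guest")              # delegation without sharing a password
home.request("babysitter", "thermostat", "view")       # allowed
home.request("babysitter", "thermostat", "configure")  # denied, but still logged
```

In this sketch the devices belong to the household rather than to one person's account, and every request, allowed or not, ends up in `audit_log` for review.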

Beyond the issue of usability, the smart home will be an attractive target for hackers and malware. Even if the devices themselves repel attackers, other points of vulnerability include malware-infested desktops, laptops, and mobile phones. Smart things will be attacked, almost certainly in ways that we can’t anticipate today. Even simple data leaks might cause significant problems if they can be systematically harvested and exploited—for example, thieves might be able to determine when you’re not home. Voyeurs might hack your surveillance cameras.

With both Google and Apple aggressively moving into this space, another concern is the degree of compatibility between devices. Today, these firms are erecting barriers between their home entertainment offerings, with Apple TV and Chromecast, for example, offering separate content, pricing, and streaming models.

Some third-party vendors will surely try to stay out of this fight, offering apps that run on both iOS and Android, or are simply controlled via a Web interface. While that kind of strategy might work for a smart light bulb, it’ll be harder for the maker of a major appliance. If companies choose one ecosystem over another, it will be hard for consumers to switch from Apple-powered appliances to Google-powered ones.

Two things about the smart home of the future seem sure. First, given the array of resources being lined up on both sides of this fight, there is unlikely to be a dominant winner, meaning less flexibility for homeowners. Second, the coming wave of smart devices will rely on technology that is ill-equipped to guarantee reliability, and will also introduce completely new ways for things to go wrong. So the companies that make them will need to put far more focus on security, usability, and privacy to earn both customer acceptance and trust.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.