Friday, October 24. 2008

The Web in the World by Timo Arnall

Watch this Timo Arnall speech in fullscreen. Think about it as it scrolls by. It's important.

http://www.slideshare.net/tmo/the-web-in-the-world-presentation

Related Links:

Personal comment:

A summary of the "internet of things" situation by Timo Arnall.

Thursday, October 23. 2008

Selectively Deleting Memories

Credit: Technology Review

For more than two decades, researchers have been studying the chemical--a protein called alpha-CaM kinase II--for its role in learning and memory consolidation. To better understand the protein, a few years ago, Joe Tsien, a neurobiologist at the Medical College of Georgia, in Augusta, created a mouse in which he could activate or inhibit sensitivity to alpha-CaM kinase II.

In the most recent results, Tsien found that when the mice recalled long-term memories while the protein was overexpressed in their brains, the combination appeared to selectively delete those memories. He and his collaborators first put the mice in a chamber where the animals heard a tone, then followed up the tone with a mild shock. The resulting associations: the chamber is a very bad place, and the tone foretells miserable things.

Then, a month later--enough time to ensure that the mice's long-term memory had been consolidated--the researchers placed the animals in a totally different chamber, overexpressed the protein, and played the tone. The mice showed no fear of the shock-associated sound. But these same mice, when placed in the original shock chamber, showed a classic fear response. Tsien had, in effect, erased one part of the memory (the one associated with the tone recall) while leaving the other intact.

"One thing that we're really intrigued by is that this is a selective erasure," Tsien says. "We know that erasure occurred very quickly, and was initiated by the recall itself."

Tsien notes that while the current methods can't be translated into the clinical setting, the work does identify a potential therapeutic approach. "Our work demonstrates that it's feasible to inducibly, selectively erase a memory," he says.

"The study is quite interesting from a number of points of view," says Mark Mayford, who studies the molecular basis of memory at the Scripps Research Institute, in La Jolla, CA. He notes that current treatments for memory "extinction" consist of very long-term therapy, in which patients are asked to recall fearful memories in safe situations, with the hope that the connection between the fear and the memory will gradually weaken.

"But people are very interested in devising a way where you could come up with a drug to expedite a way to do that," he says. That kind of treatment could change a memory by scrambling things up just in the neurons that are active during the specific act of the specific recollection. "That would be a very powerful thing," Mayford says.

But the puzzle is an incredibly complex one, and getting to that point will take a vast amount of additional research. "Human memory is so complicated, and we are just barely at the foot of the mountain," Tsien says.

Copyright Technology Review 2008.

Related Links:

Personal comment:

This obviously recalls the (science) fiction of Michel Gondry's film (Eternal Sunshine of the Spotless Mind; see links above).

Friday, October 17. 2008

Computing with RNA

Credit: Technology Review

Scientists in California have created molecular computers that are able to self-assemble out of strips of RNA within living cells. Eventually, such computers could be programmed to manipulate biological functions within the cell, executing different tasks under different conditions. One application could be smart drug delivery systems, says Christina Smolke, who carried out the research with Maung Nyan Win and whose results are published in the latest issue of Science.

The use of biomolecules to perform computations was first demonstrated by the University of Southern California's Leonard Adleman in 1994, and the approach was later developed by Ehud Shapiro of the Weizmann Institute of Science, in Rehovot, Israel. But according to Shapiro, "What this new work shows for the first time is the ability to detect the presence or absence of molecules within the cell."

That opens up the possibility of computing devices that can respond to specific conditions within the cell, he says. For example, it may be possible to develop drug delivery systems that target cancer cells from within by sensing genes used to regulate cell growth and death. "You can program it to release the drug when the conditions are just right, at the right time and in the right place," Shapiro says.

Smolke and Win's biocomputers are built from three main components--sensors, actuators, and transmitters--all of which are made up of RNA. The input sensors are made from aptamers, RNA molecules that behave a bit like antibodies, binding tightly to specific targets. Similarly, the output components, or actuators, are made of ribozymes, complex RNA molecules that have catalytic properties similar to those of enzymes. These two components are joined by yet another RNA molecule that serves as a transmitter, which is activated when a sensor molecule recognizes an input chemical and, in turn, triggers an actuator molecule.

Smolke and Win designed their RNA computers to detect the drugs tetracycline and theophylline within yeast cells, producing a fluorescent protein as an output. By combining the RNA components in certain ways, the researchers showed that they can get them to behave like different types of logic gates--circuit elements common to any computer. For example, an AND gate produces an output only when its inputs detect the presence of both drugs, while a NOR gate produces an output only when neither drug is detected.
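The gate behavior described above can be illustrated with a small Python sketch. It is only a toy truth-table model, not Smolke and Win's design: the function names and the reduction of sensors and fluorescent output to booleans are assumptions made for illustration.

```python
from itertools import product


def and_gate(tetracycline: bool, theophylline: bool) -> bool:
    """Produce an output (fluorescence) only when both drugs are detected."""
    return tetracycline and theophylline


def nor_gate(tetracycline: bool, theophylline: bool) -> bool:
    """Produce an output only when neither drug is detected."""
    return not (tetracycline or theophylline)


def truth_table(gate):
    """Map every (tetracycline, theophylline) input pair to the gate's output."""
    return {inputs: gate(*inputs) for inputs in product([False, True], repeat=2)}


if __name__ == "__main__":
    for name, gate in [("AND", and_gate), ("NOR", nor_gate)]:
        print(name)
        for (tet, theo), out in truth_table(gate).items():
            print(f"  tetracycline={tet!s:5} theophylline={theo!s:5} -> fluorescent={out}")
```

Each gate lights up for exactly one of the four input combinations, which is what makes the two easy to tell apart in an experiment.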

But this is just a demonstration, Smolke says. "We're using these modular molecules that have a sort of plug-and-play capability," she says--meaning that they can be combined in a number of different ways. Different kinds of aptamers could potentially detect thousands of different metabolic or protein inputs.

Smolke and Win produce their device by encoding RNA sequences into DNA and introducing it into the cell. "So the cell is making these devices," Smolke says. "RNA is actually a very programmable substrate."

Indeed, the attractive thing about this approach is that the components of the device and the substrate holding them together are all made from RNA, says Friedrich Simmel, a bioelectronics researcher at the Technical University of Munich, in Germany. "This is something that we also would like to do," he says, for not only do such devices self-assemble, but they can also be produced on a single long strand of RNA, all at once.

Smolke and Win have already found collaborators for possible animal studies, to see how biocomputers can be delivered to cells and used once they're there. Smolke also envisions a large-scale collaboration to create a huge library of sensors out of which these devices can be made.

Copyright Technology Review 2008.

Related Links:

Friday, October 10. 2008

Sony, Microsoft virtual communities to start

By Associated Press


CHIBA, Japan (AP) -- Video game rivals Sony and Microsoft are going head-to-head in virtual worlds for their home consoles later this year.

Both companies announced their services, which use graphic images called "avatars" to represent players, Thursday at the Tokyo Game Show.

Sony Corp.'s twice-delayed online "Home" virtual world for the PlayStation 3 console will be available sometime later this year, while U.S. software maker Microsoft Corp., which competes with its Xbox 360, is starting "New Xbox Experience" worldwide Nov. 19.

Microsoft's service will be adapted to various nations, but people will be able to communicate with other Xbox 360 users around the world, according to the Redmond, Washington-based company.

The real-time interactive computer-graphic worlds are similar to Linden Lab's "Second Life," which can be played on personal computers and has drawn millions of people.

In the so-called "metaverse" in cyberspace, players manipulate digital images called "avatars" that represent themselves, engaging in relationships, social gatherings and businesses.

Internet search leader Google Inc. has unveiled a similar three-dimensional software service called "Lively." Japanese companies have also set up such communities for personal computers.

Ryoji Akagawa, a producer at Sony Computer Entertainment Inc., Sony's gaming unit, said 24 game designing companies will provide content for "Home."

He did not give a launch date or other details. A limited test version over the summer was handy in preparing for a full-fledged service, he said.

In both Sony's and Microsoft's virtual worlds, players can personalize their avatars, choosing hairstyles, facial features and clothing. Akagawa said avatars will be able to dress up like heroes in hit video games.

"The Home has beautiful imagery with high quality three-dimensional graphics," he told reporters.

But Hirokazu Hamamura, a game expert and head of Japanese publisher Enterbrain Inc., who was at the Sony booth, said he needs to see more to assess "Home."

"You still can't tell what it's all about," he told The Associated Press, adding that "Home" may be coming a little late compared to rivals. "There are so many more possibilities for a virtual community."

John Schappert, a Microsoft executive, said the company's service promises to be more varied as a gateway to various entertainment, such as watching movies, going to virtual parties and sharing your collection of photos.

"Our goal is to make the Xbox experience more visual, easier to use, more fun to use and more social," he said in an interview at a nearby hotel. "We focused a lot on friends and other experiences outside just playing games."

Personal comment:

It's the same idea that has kept coming back for 10 years (a shared 3D world, "realistic" or even banal and everyday, where you create or customize your avatar and your house, where you "chat," etc.). I wonder whether it will finally catch on and take off... or whether it is simply a bad idea, something that doesn't interest "users."

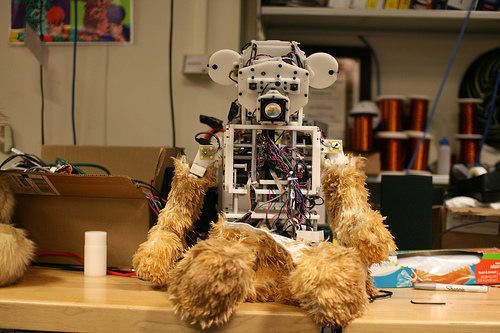

Uncanny valley variability

Which part of this bear, encountered yesterday at the MIT Media Lab, is close to the Uncanny Valley? The bear-head-shaped face? The way it looks down? The fur-like body parts? Or the exposed body wiring that looks explosive? Is the furry part more uncanny than the head?

Related Links:

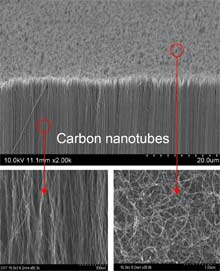

Sticky Nanotape

Gecko tape: Arrays of carbon nanotubes with a vertically aligned section (lower left) and a branched, tangled upper layer (lower right) mimic the structures of gecko feet but are 10 times more adhesive. Credit: Science/AAAS

For years, materials scientists have been trying to catch up with geckos. Adhesives that, like gecko feet, are dry, powerful, reusable, and self-cleaning could help robots climb walls or hold together electrical components, even in the harsh conditions of outer space. But it's been difficult to design strong adhesives that can be lifted back up again. Now researchers have developed an adhesive made of carbon nanotubes whose structure closely mimics that of gecko feet. It's 10 times more adhesive than the lizards' feet and, like the natural adhesive, easy to lift back up. And it works on a variety of surfaces, including glass and sandpaper.

Developed by a group led by Liming Dai, a professor of materials engineering at the University of Dayton, and Zhong Wang, director of the Center for Nanostructure Characterization at Georgia Tech, the adhesive is not the first made from carbon nanotubes. However, it's much stronger than previous nanotube adhesives. Its branched structure more closely mimics the structures on gecko feet, which are covered with millions of microscale hairs that branch into many smaller hairs, each of which has a weak electrical interaction with a surface. These many weak interactions add up to strong adhesion over the area of the foot. Previously, researchers have shown that arrays of vertically aligned carbon nanotubes have similar interactions with a surface.

"People have tried to mimic the gecko structures, but it's not easy," says Dai. Using a silicon substrate, he and his group grew arrays of vertically aligned carbon nanotubes topped with an unaligned layer of nanotubes, like rows of trees with branching tops. The adhesive force of these nanotube arrays is about 100 newtons per square centimeter--enough for a four-by-four-millimeter square of the material to hold up a 1,480-gram textbook. And its adhesive properties were the same when tested on very different surfaces, including glass plates, polymer films, and rough sandpaper.
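The textbook figure can be checked with a few lines of arithmetic. The Python sketch below uses only the numbers quoted above; the value of standard gravity and the function names are assumptions added for the check.

```python
G = 9.81  # standard gravity in m/s^2 (assumed; not stated in the article)


def holding_force_newtons(adhesion_n_per_cm2: float, side_mm: float) -> float:
    """Force a square patch can hold, given adhesion per square centimeter."""
    area_cm2 = (side_mm / 10.0) ** 2  # convert mm to cm, then square
    return adhesion_n_per_cm2 * area_cm2


def weight_newtons(mass_grams: float) -> float:
    """Weight of a mass under standard gravity."""
    return (mass_grams / 1000.0) * G


force = holding_force_newtons(100.0, 4.0)  # 4 mm x 4 mm patch at 100 N/cm^2
weight = weight_newtons(1480.0)            # 1,480-gram textbook

print(f"patch holds about {force:.1f} N")      # about 16.0 N
print(f"textbook weighs about {weight:.1f} N")  # about 14.5 N
print("patch is sufficient:", force > weight)
```

A 16-square-millimeter patch is 0.16 square centimeters, so it holds roughly 16 newtons, a little more than the textbook's weight of about 14.5 newtons.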

One advantage of this adhesive over others is that its strength is strongly direction dependent. When it's pulled in a direction parallel to its surface, it's very strong. That's because the branched nanotubes become aligned, says Dai. But when it's pulled up with little force, as one would peel a piece of Scotch tape, the nanotubes lose contact one by one.

The greater the adhesive strength, the better, says Ali Dhinojwala, a professor of polymer science at the University of Akron. However, says Dhinojwala, who works on carbon-nanotube adhesives as well, "we also need to solve other problems before they're commercially viable." Wall-climbing robots will require adhesives that work again and again without wearing out or getting clogged with dirt. "We want a robot to take more than 50 steps in a dirty environment," says Dhinojwala. No one has demonstrated strong gecko-inspired adhesives that can do this. And nanotube adhesives will need to be grown on different substrates than those used so far. Carbon nanotubes are easy to grow on silicon wafers; creating large areas of the adhesive wouldn't be a problem. But "we're not going to stick silicon wafers to robot feet," says Dhinojwala.

Dai says that carbon nanotubes' versatility may help overcome the dirt problem. These structures can readily be functionalized with proteins and other polymers. Dai is developing adhesive nanotube arrays coated with proteins that change their shape in response to temperature changes. A robot could have feet that heat up when they get clogged, shedding dirt so that it can keep walking.

Other applications of the adhesive may take better advantage of carbon nanotubes' properties than robotics would. Carbon nanotubes are highly conductive to electricity and have promising thermal properties, Dai notes. Nanotube adhesives created to replace solder for holding together electronics components could also act as heat sinks. Other gecko-inspired adhesives made of polymers can't hold up to high temperatures, says Metin Sitti, who heads the nanorobotics lab at Carnegie Mellon. Spacecraft using nanotube adhesives instead of polymers could go to hotter areas.

Copyright Technology Review 2008.

Related Links:

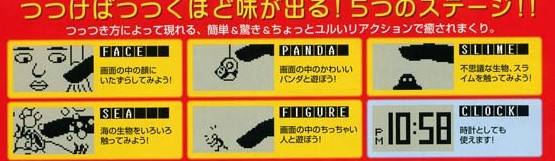

Augmented Reality? The Tuttuki Bako box needs your finger to play with virtual characters

Who needs Augmented Reality Cybermaid Alice? Bandai Japan plans to release a palm-size cube in the middle of next month that lets you stick your finger into it to interact with the beings and things contained in the box.

The so-called Tuttuki Bako (Tuttuki Box) features a display that shows a digital replica of your finger once you put it into the hole in the right side of the device and start moving it. Users can then play with a panda bear or a small figure, explore a “virtual” lake and touch a girl’s face or a slime ball.

The battery-powered box, which also doubles as a clock, is sized at just 105×120×87mm. Bandai will sell it for $30 but hasn’t said yet whether it will ever be available outside Japan as well.

Related Links:

NeuroSky to Unveil Brainwave-Controlled Video Game Concept Co-Developed With Square Enix

NeuroSky, Inc., the leading manufacturer of “wearable” consumer bio-sensors, announced today the unveiling of a brainwave-controlled video game at the Tokyo Game Show 2008 in Makuhari, Japan, on October 9 and 10. This exciting and entertaining demonstration is based on a new game concept being jointly developed with Square Enix Co., Ltd. (Square Enix).

The technical demonstration developed with Square Enix operates in conjunction with Windows PC machines and features the NeuroSky commercial headset, MindSet™. The recently introduced MindSet is capable of interfacing to a variety of gaming platforms, including PC, console and mobile. It resembles a pair of headphones, with a single electrode contacting the user’s forehead while reading the player’s brainwave information, or EEG data. The headset registers the current state of relaxation or concentration of players, allowing them to perform a variety of actions within the game.

“At Square Enix, we are actively involved in developing a variety of gaming interfaces. I am thrilled to have this opportunity to work with NeuroSky, and apply their advanced sensor technology in this brain-wave controlled game demonstration,” commented Ryutaro Ichimura, Producer at Square Enix. “Although the main purpose of the demo is to test the results of our short development period, I hope it also unlocks new potentials in gaming.”

Stanley Yang, the CEO of NeuroSky, considers the joint development with Square Enix further validation that brainwave-reading (EEG) technology is rapidly emerging onto the video game scene. “We are delighted to have built such an important partnership with a key industry player to have jointly developed a demonstration based on brainwave-reading technology. The market has been anticipating the introduction of this technology for many years, and the reality of controlling features of a video game through mental control is finally taking root.”

Related Links:

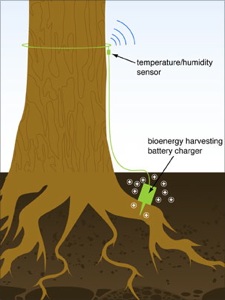

Sensors powered by trees

MIT researchers are developing a novel power-scavenging system for small wireless sensors that monitor forests for fires. The sensors are powered by the trees themselves. Each sensor's battery is trickle charged with the electricity generated by the imbalance in pH between the tree and the soil. From the MIT News Office:

A single tree doesn't generate a lot of power, but over time the "trickle charge" adds up, "just like a dripping faucet can fill a bucket over time," said Shuguang Zhang, one of the researchers on the project and the associate director of MIT's Center for Biomedical Engineering (CBE).

The system produces enough electricity to allow the temperature and humidity sensors to wirelessly transmit signals four times a day, or immediately if there's a fire. Each signal hops from one sensor to another, until it reaches an existing weather station that beams the data by satellite to a forestry command center in Boise, Idaho.
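The sensor-to-sensor relay described above can be sketched in Python. The MIT excerpt does not say how routes are chosen, so the breadth-first search used here is an assumption, and the node names and link layout are hypothetical.

```python
from collections import deque


def relay_path(links, source, station):
    """Breadth-first search for the fewest-hop route from source to station.

    links maps each node to the nodes within its radio range.
    Returns the list of nodes on the route, or None if no route exists.
    """
    previous = {source: None}  # remembers which node each node was reached from
    queue = deque([source])
    while queue:
        node = queue.popleft()
        if node == station:
            path = []
            while node is not None:  # walk back to the source
                path.append(node)
                node = previous[node]
            return path[::-1]
        for neighbor in links.get(node, ()):
            if neighbor not in previous:
                previous[neighbor] = node
                queue.append(neighbor)
    return None


# Hypothetical layout: each sensor reaches only its nearest neighbors.
links = {
    "sensor_a": ["sensor_b"],
    "sensor_b": ["sensor_a", "sensor_c"],
    "sensor_c": ["sensor_b", "weather_station"],
    "weather_station": ["sensor_c"],
}

path = relay_path(links, "sensor_a", "weather_station")
print(" -> ".join(path))
```

In this layout the reading from `sensor_a` needs three hops to reach the weather station, which would then pass it on by satellite.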

Related Links:

Tuesday, October 07. 2008

Simplified Displays - Energy efficient

On display: The liquid-crystal display technology used by the One Laptop per Child project is the foundation for Mary Lou Jepsen’s new for-profit company, Pixel Qi. Credit: Pixel Qi

In 2005, Mary Lou Jepsen joined Nicholas Negroponte, founder of MIT's Media Lab, to build a $100 laptop for each of the poorest children of the world. As CTO of the One Laptop per Child (OLPC) project, Jepsen discovered that a laptop could be many times cheaper and more power efficient if the display was made differently. Thus, she designed a liquid-crystal display (LCD) that consumes only a fraction of the power of normal displays. And to ensure that it could be manufactured cheaply, she made certain that it could be built using existing LCD manufacturing technology.

Earlier this year, Jepsen cofounded Pixel Qi (pronounced "Pixel Chee"), a startup based in San Francisco that will make displays using her 48 patents on display technology. Next year, she says, displays from Pixel Qi will be on the market. Jepsen recently spoke at the Grace Hopper Celebration of Women in Computing conference in Keystone, CO, where Technology Review caught up with her.

Technology Review: Why did you leave the One Laptop per Child project?

Mary Lou Jepsen: My job was done. It was my job to figure out how to develop the laptop and convince the manufacturers to work with us. I decided that, paradoxically, the best way to help OLPC was to spin out a for-profit company and help lower the cost of the components that go into the OLPC. It's economy of scale. If you make more of something, then you can make it cheaper.

TR: What technology lessons did you learn from OLPC?

MLJ: [That] it's a lot faster and easier to use the large manufacturing facilities of the world as your lab, rather than a small little lab where you make handcrafted things, but you have to create the relationships and the structure to do that. One of the technology lessons was to work inside the cost envelope of the developing world to lower costs overall. What's even more important and useful is dramatically lowering power consumption. Everyone wants batteries that can last 10 times longer.

TR: Why haven't we seen much innovation in display technology over the years?

MLJ: A lot of people get really seduced by demos of the next display technology. I myself fell under that spell for about 20 years. I worked on heads-up displays, virtual-reality technology, and holographic displays--all sorts of really cool technology. It's an emotional response to the display, and people want to have them. The truth is that over the last 50 years, only one display technology has gotten into mass production, and that's LCD. There are two others, in smaller productions--plasma and DLP [digital light processing]--but they're not in high volume.

TR: Your goal at Pixel Qi is to innovate within the LCD manufacturing process. How does this give us better displays?

MLJ: What became obvious to me after spending time at Intel [Jepsen was CTO of Intel's now defunct display division between 2003 and 2004] was that the silicon people did things differently from the display people. Engineers who work with silicon send their design to a fab and have a chip back in months. But engineers making displays can't just ship a new design off to a fab. So I thought, why don't we just use the manufacturing infrastructure to get the high yields like the silicon people do? That's what I did at OLPC. I went on to design a mass-producible product in six months, skipping the 20 years, millions of dollars, and missed window of opportunity that usually occurs in the display industry.

We've got deals in place with 40 percent of the LCD manufacturing industry. They said no to us initially. But we proved ourselves and our designs, and now they're willing to make a bigger effort with us and our customers.

TR: How are Pixel Qi displays different from typical displays?

MLJ: We've got new screens based on the same ideas as the OLPC screens. Importantly, there are no manufacturing changes, and no material changes [compared with traditional LCD displays]; we're using the same manufacturing process and following the same design rules. But what you can do that's interesting when you change the design is produce sunlight-readable screens and super-color saturation. You can get really great reflective screens that rival e-paper at really amazing price points and with fantastic ultra-low-power capabilities. These displays have 1 percent of the power consumption of a regular screen by using a reflector behind the LCD grid to reflect ambient light and allowing the backlight to be turned down in bright environments. Plus, you can implement a power-management system used in OLPC [that refreshes the screen only when it changes] that saves you even more. We've done this by reinventing the display based on understanding the details of factories and how they work. All of these things work, and we are shipping them next year.

TR: Which products will they be in?

MLJ: We can't announce our customers or products yet, but you'll see these displays in low-power laptops.

TR: How do you see display technology developing over the next two years?

MLJ: I see an improvement in the readability of screens. The number-one reason why people print a page is resolution, and the number-two reason is that they don't want to stare into a flashlight. Ultimately, in a year or two, we'd like to have a lawyer's monitor or an editor's monitor--some readable screen that's just for reading. When I started meeting kids in the developing world and seeing that their schools were outside, we saw the opportunity to make screens more readable in sunlight. It's ironic that the poorest kids in the world are getting the best screen technology through OLPC, but soon the rich people in the rest of the world are going to have access to it too.

TR: And beyond that?

MLJ: We have a road map that goes out pretty far. I mean, we can make improvements in all sorts of screens. Take the iPhone [touch] display. It's actually two screens. One's a touch screen and one's a display screen. Why don't we use the layers in the screen itself to do touch? It solves problems of alignment and lowers cost. We are following trends and watching how they evolve, and the great thing is that we can design a screen and have something that's reliable that we can ship in about a year. We can actually do it in that kind of time frame.

Copyright Technology Review 2008.

Related Links:

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, researches, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
