Thursday, November 06. 2008

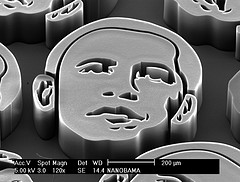

How to build a president from carbon nanotubes.

Wednesday, November 05, 2008

By Katherine Bourzac

John Hart, assistant professor of mechanical engineering at the University of Michigan, has created the president-elect's likeness using vertically grown carbon nanotubes and imaged them with a scanning-electron microscope. His gallery--and instructions on how to make your own "nanobama"--are at Flickr and nanobama.com. Hart says that he made the nanobamas to promote interest in nanotechnology research.

Credit: John Hart

Personal comment:

To mark the day!

The Internet is enabling "the greatest get-out-the-vote effort in American history."

Tuesday, November 04, 2008

By David Talbot

At 9:38 this morning, the Obama campaign sent out an urgent e-mail seeking help in making one million calls to voters before 3 P.M. All you had to do was log in to his website, click on a battleground state of your choice, and obtain a call list. At 11:55 A.M., the McCain campaign did something similar but made it even easier: they simply placed the names and phone numbers of 10 Floridians into the body of an e-mail, together with a short script.

These are only the most visible signs of a vast 11th-hour effort to leverage the Web to influence voters on an unprecedented scale. And it will help decide this election. "It's hard to even describe the difference it is making today. It is the greatest get-out-the-vote effort in American history, all because these guys are able to mash the old operations with technology--everything from Google Maps to online tools--to help reach voters," says Joe Trippi, who was campaign manager for Howard Dean in 2004, when he broke ground in using online campaigning tools. "It's pretty amazing. It's come a long way in four years."

In similar fashion, massive shoe-leather door-knocking efforts are under way, also organized on a large scale through the candidates' websites. Volunteers have been able to sign up to visit battleground states, obtaining maps, lists of people to visit, and scripts to follow. Generally, the targets are people registered with the candidate's party but who have had spotty voting records.

In the past two days, Obama e-mails claimed that the online calling tools enabled 500,000 calls on Sunday alone, and 600,000 on Monday. McCain's entreaties made no numerical claims. It's likely that Obama is outgunning his opponent, having aggressively built an online network of supporters and their e-mail addresses--and having developed strategies to connect them with each other and with campaign tasks--since he became a candidate in early 2007. The McCain camp overhauled its social and Web tools several months ago. "They are doing the same stuff, but with a much smaller network. Obama has been growing virally for two years. And the first mover has a huge advantage," Trippi observes.

The possibilities are many. At the simplest level, they start with voter lists and get known supporters to call them and take notes (using Web-based tools) on the outcomes. Then the campaign can revamp the lists for follow-ups as appropriate. But public databases allow myriad ways to slice and dice voter lists for more custom pitches that are made far easier via the Web. For example, the Obama campaign could, in theory, cross-reference voter lists with lists of people who hold hunting licenses, and then have hunter supporters call that group to tell them Obama won't disarm them. Or they could use demographic information from census data to make general guesses about the income bracket of the voter based on where he or she lives and make pitches about proposed tax policies. All of this becomes vastly easier on the Web: matching the right callers with the right targets, getting things done quickly, and keeping accurate records of it all.
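To make the hunting-license example concrete, here is a minimal sketch of that kind of cross-referencing. It is not how either campaign's tools actually work: the record fields, the name-plus-zip matching rule, and the function names (`cross_reference`, `assign_calls`) are all assumptions made up for illustration, and real voter files would need much more careful matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Voter:
    # Hypothetical record layout; real voter files differ by state.
    name: str
    phone: str
    zip_code: str


def normalize(name: str) -> str:
    """Lower-case and collapse whitespace so trivially different spellings match."""
    return " ".join(name.lower().split())


def cross_reference(voters, license_holders):
    """Return the voters whose (name, zip) pair also appears in the license list.

    license_holders is an iterable of (name, zip_code) tuples.
    Matching on name plus zip code is an assumed, deliberately naive rule.
    """
    licensed = {(normalize(name), zip_code) for name, zip_code in license_holders}
    return [v for v in voters if (normalize(v.name), v.zip_code) in licensed]


def assign_calls(targets, callers):
    """Spread the targets round-robin over the volunteer callers."""
    assignments = {caller: [] for caller in callers}
    if not callers:
        return assignments
    for i, target in enumerate(targets):
        assignments[callers[i % len(callers)]].append(target)
    return assignments
```

In use, a campaign would feed the matched list to volunteers who share the targets' interest, then record call outcomes against each `Voter` so the list can be revised for follow-ups.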

Beyond what the campaigns are offering, some nonpartisan efforts are also leveraging technology to aid in the get-out-the-vote effort. For example, Mobile Commons, a startup in New York City, is offering a way to find your polling place by simply text-messaging your home address and zip code. Let's say you live at Tech Review headquarters, One Main Street, in Cambridge, MA, 02142. You text "pp 1 Main 02142 69866" and then get out and vote.

The presidential election held yesterday makes a new kind of history as the tipping point away from dominance by old media. Not only have user-generated content and the blogosphere emerged to scoop the mainstream media often, and to add competitive nuance, slant, and consensus; there is also a root-level change: news gathering and presentation in this election have swung to the smart mob era. For example, the Yahoo Political Dashboard gathers (mobs) information on election results in ways that make both print and air media obsolete. Print media has no way to refresh itself every 5 minutes, much less “NOW.” Air media whizzes by in time: it is repeatable but not refreshable.

The internet will have more political history to make. I would predict that we will have experimental online voting in 2012 and that by 2016 our inalienable right to vote (which will have become genuine in many more parts of the world by then) will be exercised using our mobile devices.

Personal comment:

On the other hand, we are seeing new "empires" rebuild themselves, differently. Content may no longer be distributed (only) "top-down" as in the now-old "MSM", but certain groups still control the channels, even if the content is partly "user generated". I am of course thinking of new trusts such as Google-Maps-YouTube-... or, in another register, Apple-iTunes-i-etc... and in yet another, perhaps, Amazon-Kindle-ebooks-...

“Mobile technology appropriation in a distant mirror: baroque infiltration, creolization and cannibalism” by Bar, Pisani and Weber is one of those mysterious academic papers that I enjoy running across. It basically investigates the appropriation of mobile phones in Latin America, and how this technology is embedded within people’s social, economic, and political practices.

Relying on the classic literature about appropriation (for example S-shaped curves and Rogers’ theories), they show how technology evolution progresses through successive phases of adoption, appropriation, and reconfiguration. By analogy with the historical process of cultural appropriation in Latin America, they draw a parallel between these steps and the following 3 modes: “baroque”, “creolization” and “cannibalism”:

“Baroque layering: The most basic way in which users can appropriate a technology is for them to use the personalization features that are provided to them with that intent in mind. As technical objects, mobile phones come with many such affordances. These include for example the ability to change the ringtone, screen wallpaper, upload one’s phonebook, set up short-cuts for most-often called numbers, download games, and upload one’s music, photo, or video collection.

(…)

Creolization represents a deeper transformation, a more profound form of appropriation. It refers to practices where the user recombines or reprograms elements of the technology. In this appropriation mode, by contrast with baroque layering, users are more deeply involved in changing the technology. They now explore ways to adapt the technology beyond the options that have been designed by the phone makers and service providers.

(…)

Cannibalism: This third form of appropriation is the most extreme in the sense that it corresponds to practices where the user chooses to engage in direct conflict with the suppliers of the technology (or at least with the power relation as embodied in the technology.) Cannibalism includes modifications of the device that place the user in direct opposition with the providers’ business model, destruction of the device.”

Why do I blog this? Having followed theories of technology adoption for a while (especially for a course I give about innovation and foresight in a design school), I have read a lot about S-shaped curves and 3-step theories, and I found this one quite intriguing. Also, after going to Latin America 3 times within one year, I noticed how it could be an interesting field of observation. The paper is anchored in relevant theoretical and empirical points that I may reuse in the course as well as in my research. The part about designing for appropriation is also relevant, as it points out the role of taking these 3 phases into account when creating meaningful products and services.

Like many of you, I simply can’t keep up with the river of lifestreaming applications hitting public beta this year. Many seem to simply do the same thing, more or less, with a bit more of this or a bit more of that to differentiate each from its competitors. But social apps are bound, perhaps more even than “conventional” software, to conform to best practices. Why? Because they are social applications. Social applications succeed only if they can extend the individual user experience out into new and interesting social experiences.

And they do have to be interesting — for social applications, again more than conventional software, must be interesting. More often than not they are interesting because they are used as tools for talk. Talking with, to, at, amongst, in front of, behind, and to the side of. Talking with friends tends to be interesting to those involved simply because it is among friends. But where the face to face dimensions of social interaction are also rewarding for the obvious reasons, social applications must deliver a working substitute. There is no real “spending time together” online.

Even chatrooms, which are as much a precursor of lifestreaming as anything else online, can only approximate this sense of togetherness. I recall early days in IRC chatrooms where that sense of being there or of being in it was as much due to the suspense and waiting (for somebody to type out their response) as it was due to the “room” itself. One might even argue that this pressure of time grows in the user the slower the technology is to record and transmit time. The longer the latency, the greater the waiting, and thus the greater the anticipation, suspense, and urgency! (Is it not said that suspense in film is simply the time that it takes for something to happen?)

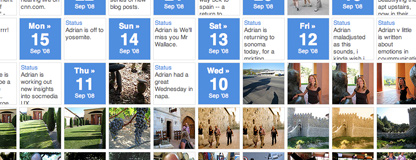

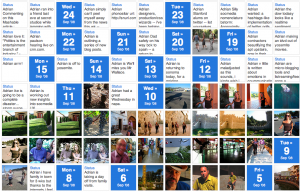

Swurl.com is interesting because it has a visual timeline of the lifestream (pictured above). In calendar format, and well-designed, the timeline looks good and is an attractive visual representation. It’s low on conversational content and talk, but it captures the past of a user’s activity in a compelling presentation. Plurk.com also has a timeline, but one that is used to steer interaction (and which looks more like a horizontal river display). Not only does Swurl’s calendar provide thumbnails of pictures and shortcuts to posts, it expands to accommodate periods of heavy activity. All days do not look alike. I like that.

This variation is important in lifestreaming apps. In contrast to the profile-based site or service, the stream stands in for the profile. The person’s talk stands in for profile elements. These choices make sense, because the call to action in a lifestreaming service is talk. It’s not browsing, searching, or navigating. At least not quite yet (I believe we’re ready for more order and structure). Really, each message/post/tweet in a lifestreaming app is its own call to (inter)action, which is also why most users are in it “now” or never.

Which makes Swurl’s representation of past user activity interesting to me. Most lifestreaming apps have stayed away from the archive of past activity (what’s the pleasure in paging backwards through a user’s posts?). But there’s a lot of value in past activity, and visual coverage of the past can take many forms (think Edward Tufte). We’ve seen none of them yet (Chirpscreen’s slideshows come to mind, though it would be nice to see them become actionable) but I’m certain that we will.

If Twitter is the power curve of lifestreaming, then apps like Swurl might show us some of the value in the long tail, the long tail being the past. To picture this, take the standard long tail graph and turn it sideways. The Present is the curve, the Past is the tail.

Mining the tail of time is mining in depth rather than mining across connections. Mining the connections of past time, for lifestreaming apps, might mean drawing connections across the past times (pastimes, experiences, too) of a site’s users. Currently, Swurl engages conversations around a user and his or her posts. But we could imagine indexing user streams for the purpose of making connections and extracting content. After all, a user’s posts, talk, uploads, etc. are used by many applications to predict or anticipate choices and preferences.

I’m excited to see what Swurl, with minimal complexity, has done to wrap a bit more around lifestreaming than we get out of tools like Twitter. Twitter will remain my primary talk tool, as it has and will continue to have the best audience awareness. But if you wanted to imagine social networking built around lifestreaming instead of profile pages, Swurl would be a good place to start.
