Monday, January 31. 2011

Via Luminapolis

Scientists have been studying the biological impacts of light perceived by the human eye since the 1980s. But it was not until 2002 that they discovered ganglion cells in the retina of mammals that are not used for “seeing”. The newly identified cells respond most sensitively to visible blue light and set the “master clock” that synchronises the system of internal clocks with the external cycle of day and night. This booklet provides a practical and user-oriented summary of what science knows today about the non-visual impact of light on human beings. It is not a final, authoritative work but an ambitious attempt to describe the nascent, fast-growing field of research examining light, health and efficiency.

Tuesday, January 25. 2011

Via Libération

-----

By Sylvestre Huet

Story - Glaciologist Claude Lorius shows that humankind has become a climatic “geo-engineer” as powerful as geological forces, and heralds the Anthropocene, the age of man.

Iceberg in Antarctic waters. (REUTERS)

-

More about this book, Claude Lorius and the Anthropocene directly on Libération.

Personal comment:

Anthropocene is a word we hear more and more. It probably just gives a name to what we all observe every day: that our environment is getting more and more artificial, conditioned at a global scale, with ecological costs.

Humanity as a whole (and the consumption habits that came along with industry first, then reinforced by capitalism) has become a geo-engineering force that is shaping the planet, with no effective counterpart to this day.

The question now is perhaps how to critically "architect" and reprogram this global scale and all that comes with it (so obviously, human habits are part of this), rather than whether we can avoid it and go back to our "caves". At this point, the main force driving globalism is commerce; this should change to something more valuable (to "products of high necessity", "les produits de haute nécessité", as Edouard Glissant would probably say).

Tuesday, January 18. 2011

Via Creative Applications

-----

by Jon Goodbun

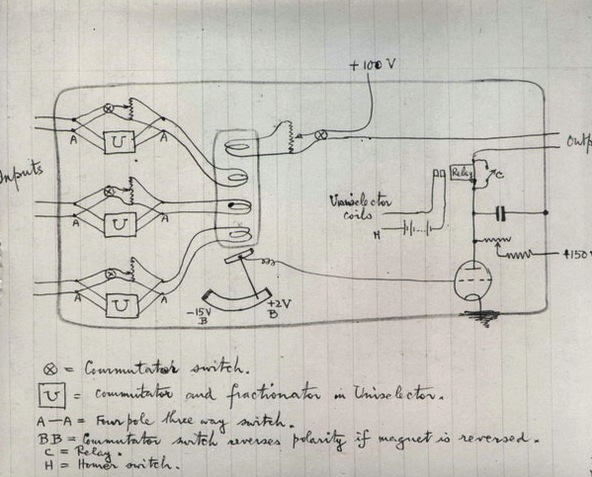

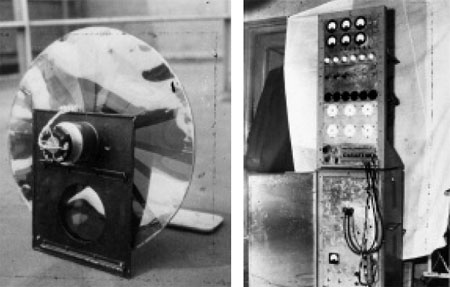

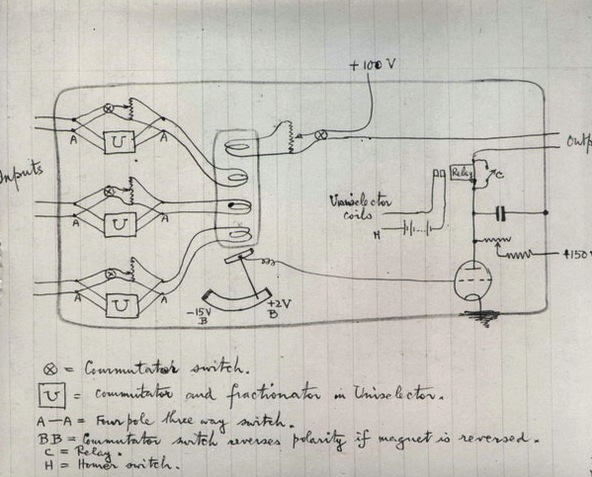

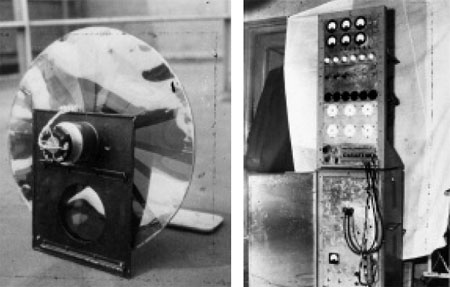

Grey Walter’s robotic tortoises ELSIE

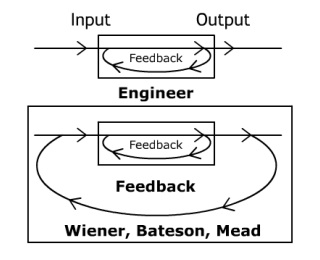

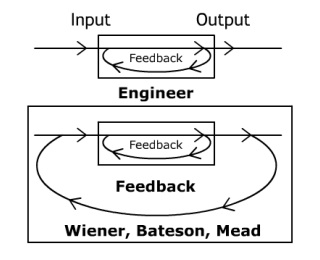

Usman Haque has, on several occasions, made the observation that there is an important difference between interactivity and responsiveness. A responsive system is a fundamentally linear set of relations, a kind of reaction where the same thing happens every time a given action is performed. A normal light switch is responsive in this sense. A typical light switch doesn’t consider any other variables, or have any other behavioural options. Pressing the switch will either turn the light off or on, in what is a linear causal relationship. A properly interactive system is very different in its logical structure, and is characterised instead by a relational and circular (or more complex network) causality. In a properly interactive system, a given action can produce different results, because the outcome depends upon the context at that moment, the history of previous interactions, and the relational creativity of the system. To take the banal example of a light switch again: in an interactive system an input might turn on a light, but it could equally result in other behaviour. A properly interactive light might set itself at different levels according to other sensor inputs, or the light might not come on at all, and instead curtains or windows might be opened to allow in more light. It might even ask you if you are afraid of the dark, or if you need help. It might try to sell you a torch, or it might just remind you that you are wearing shades.

The post-war maverick ecologist and cybernetician Gregory Bateson used a different example to illustrate the same point. If you kick a stone, he said, then the trajectory of the stone is a simple mechanical affair that can easily be calculated using Newton’s equations. If you kick a dog, then you do not know what is going to happen. It might bite you, or bark at you, or run away. A dog interacts with us. It has its own agency, and that is the important issue here.
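The contrast between the responsive switch and the interactive light can be made concrete with a small sketch. This is only an illustration under stated assumptions: the class names, the `ambient_lux` and `daylight_available` inputs, the 500 lux threshold and the "three presses" rule are all invented here, not taken from Haque or Bateson. The second class is also still a fixed set of rules, so it shows only the dependence on context and history, not anything like real agency.

```python
from dataclasses import dataclass


@dataclass
class ResponsiveSwitch:
    """Linear causality: the same action always has the same kind of effect."""
    on: bool = False

    def press(self) -> str:
        self.on = not self.on
        return "light on" if self.on else "light off"


@dataclass
class InteractiveLight:
    """Hypothetical light whose answer depends on context and on its history."""
    curtains_open: bool = False
    presses: int = 0  # history of previous interactions

    def press(self, ambient_lux: float, daylight_available: bool) -> str:
        self.presses += 1
        # Context: if daylight is available, open the curtains instead.
        if daylight_available and not self.curtains_open:
            self.curtains_open = True
            return "open curtains"
        # History: repeated presses suggest the person wants something else.
        if self.presses >= 3:
            return "ask whether you need help"
        # Otherwise set a level from another sensor input (assumed 500 lux scale).
        level = max(0.0, min(1.0, 1 - ambient_lux / 500))
        return f"light at {level:.0%}"


switch = ResponsiveSwitch()
light = InteractiveLight()
switch_results = [switch.press() for _ in range(3)]
light_results = [light.press(ambient_lux=100, daylight_available=True) for _ in range(3)]
```

The same input repeated three times toggles the switch back and forth, while the light gives three different answers because its own state and surroundings have changed in between.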

One point to be made here then, is that many of the installations, systems and apps that we might broadly classify as interactive, are actually just responsive or reactive. There is nothing per se wrong with reactivity, and of course such responsive and reactive systems can in any case be ‘looped’ and networked to form components of more complex and properly interactive feedback systems. The important point rather, is that properly interactive systems are interesting, as they are able to stage a series of philosophical questions regarding the nature of agency and creativity – important questions that perhaps cannot be posed in any other way.

The way that circular causal systems which feature feedback and recursion act as minds was the broad research focus of the post-war project of cybernetics, and is the subject of a recently published book by Andrew Pickering, called The Cybernetic Brain – Sketches of Another Future (University of Chicago Press, 2010). In this work, Pickering takes the reader through this fascinating period of experimental work at the boundary of art and science, which he describes as “some of the most striking and visionary work that I have come across in the history of science and engineering”.

Pickering focuses upon the most radical traditions within cybernetic research, which largely arose out of the work of a series of distinctly eccentric British researchers, whom he describes – borrowing a phrase from philosophers Gilles Deleuze and Félix Guattari – as performing a nomadic science. He notes that “unlike more familiar sciences such as physics, which remain tied to specific academic departments and scholarly modes of transmission, cybernetics is better seen as a form of life, a way of going on in the world…” Pickering considers many experiments that have come to take on a legendary status within the history of cybernetics, ranging from Ross Ashby’s Homeostat (a network of four machines composed of movable magnets with electric connections through water, which would exhibit a range of emergent self-organised behaviours), Grey Walter’s robotic tortoises ELSIE and ELMER (which would respond to each other’s lights, or themselves in a mirror), to Stafford Beer’s remarkable Cybersyn project for Salvador Allende’s government in Chile (an early form of the internet, which created the basis of a de-centralised socialist planned economy. For an information-rich – though in political analysis very poor – documentary, see here). Of particular interest to Pickering is the work of Gordon Pask, whose experimental installations and assemblages of various kinds captured, in a uniquely distinct way, what Pickering describes as the “hylozoic wonder” of radical cybernetics – that is to say, under what conditions can we think of all matter as (at least capable of) being alive and thinking.

Gordon Pask was heavily influenced by the ideas of Gregory Bateson – in particular Bateson’s anthropological work with various Balinese tribes, and later with family therapy and schizophrenia. In this research Bateson showed how our very experience of being a ‘self’ is produced by, or emerges out of, our participation in a network or ecology of conversations with other actors in our environment: people, objects, rituals and so on. Bateson suggested that

“the total self-corrective unit which processes information, or as I say, ‘thinks’, ‘acts’ and ‘decides’, is a system whose boundaries do not at all coincide with the boundaries either of the body or of what is popularly called the ‘self’ or ‘consciousness’.”

For Pask, famously, the conversation became the paradigm for thinking about interactivity – much of which focused on the question of how systems learn and teach, or as Bateson described it, what is deuterolearning: learning how to learn? Pask’s writings in this area can often be rather obscure, especially to newcomers to the field, and Pickering provides an excellent introduction to these projects – including Musicolour, SAKI, Eucrates, CASTE, and the yet more experimental chemical computing projects – many of which were developed in association with architecture schools and in art settings. In all of these projects, Pickering reminds us, Pask is ultimately staging questions about who we are, and what we and our world might be; questions which the ‘ecology of mind’ of radical cybernetics can still help us with today. In this regard, I can’t put it any better than Usman Haque, who has stated that:

“It is not about designing aesthetic representations of environmental data, or improving online efficiency or making urban structures more spectacular. Nor is it about making another piece of high-tech lobby art that responds to flows of people moving through the space, which is just as representational, metaphor-encumbered and unchallenging as a polite watercolour landscape. It is about designing tools that people themselves may use to construct – in the widest sense of the word – their environments and as a result build their own sense of agency. It is about developing ways in which people themselves can become more engaged with, and ultimately responsible for, the spaces they inhabit.”

–

About the Author: Jon Goodbun is a researcher interested in networks of architecture, process philosophy, radical cybernetics, urban political ecology, and the natural and cognitive sciences. He sometimes refers to himself as a metropolitan tektologist, for want of a better description. His work focuses on near and medium term future scenarios. He is currently printing his PhD, working on a book ‘Critical and Maverick Systems Thinkers’, and planning some kind of exhibition on ‘Ecological Aesthetics, Empathy and Extended Mind’.

http://www.rheomode.org.uk

Thursday, January 13. 2011

Via MIT Technology Review

-----

Big computing providers are developing energy-saving strategies for new server farms.

By Cindy Waxer

When it came time for Hewlett-Packard to decide on a location for its new data center, the company could have considered variables like network connectivity, local talent, or proximity to corporate headquarters. Instead, a 100-year weather report convinced HP to build its new 360,000-square-foot facility in breezy Billingham, England.

Server farm: Yahoo’s data center in Lockport, New York, was inspired by a chicken coop and lets air naturally vent through the top.

Credit: Yahoo

"You get a lot of cool and moist winds coming over the northeast coast of Britain," says Ian Brooks, HP's European head of sustainable computing. By harnessing these winds with massive fans, Brooks says, HP has created a system that uses 40 percent less energy than conventional methods of keeping data centers cool.

HP isn't the only company taking its cues from nature when it comes to the design and construction of data centers, clusters of server computers that run Internet services and store and crunch data. These facilities have been the smokestacks of the digital era because they use so much electricity: not only does it take a lot of power to run the machines themselves, but data centers are heavily air conditioned because servers generate a lot of heat and don't run well in environments much warmer than 25 ºC. As demand for online services skyrockets, the EPA predicts, U.S. data centers could nearly double their 2006 levels of energy consumption by 2011, reaching 100 billion kilowatt-hours per year—enough to power 10 million homes. By 2020, data centers will account for 18 percent of the world's carbon emissions, according to the Smart 2020 report released by the Climate Group, a nonprofit organization.

To reduce the environmental—and financial—burdens, more and more companies are trying innovative designs for data centers. For instance, at the HP center in Britain, known as Wynyard, fans more than two meters in diameter pull the North Sea winds into a mixing chamber, where they cool the warm air given off by the center's servers. That air is funneled into a large cavity beneath the servers, directed through vents in the floor, and then circulated throughout a series of aisles to chill the computers. The resulting warm exhaust is extracted, mixed with the incoming fresh air, and recirculated.

By eliminating the need for energy-intensive cooling equipment, the Wynyard facility cuts 12,500 metric tons of carbon dioxide from the total generated by the industry-standard data center. That is the equivalent of taking nearly 3,000 midsize vehicles off the road.
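The comparisons quoted above carry implicit per-unit figures that are easy to back out. The short sketch below does only that arithmetic on the numbers as given in the article (100 billion kilowatt-hours for 10 million homes; 12,500 metric tons for roughly 3,000 vehicles); the function and variable names are made up for illustration, and the results are implied averages, not figures stated by the EPA or HP.

```python
def per_unit(total: float, units: float) -> float:
    """Average amount per unit implied by a 'total is equivalent to N units' claim."""
    return total / units


# U.S. data centers: 100 billion kWh per year, "enough to power 10 million homes".
kwh_per_home_per_year = per_unit(100e9, 10e6)

# Wynyard: 12,500 metric tons of CO2, "nearly 3,000 midsize vehicles".
tonnes_co2_per_vehicle = per_unit(12_500, 3_000)

print(f"Implied household use: {kwh_per_home_per_year:,.0f} kWh per year")
print(f"Implied vehicle emissions: {tonnes_co2_per_vehicle:.2f} t CO2")
```

Read this way, the article assumes about 10,000 kWh per home per year and a little over four metric tons of carbon dioxide per vehicle (presumably per year, though the article does not say).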

Another innovative data center is one that Yahoo opened in September 2010 in Lockport, New York. In this case, the inspiration came from chicken coops rather than coastal winds. "Chickens throw off a fair bit of heat; servers throw off a fair bit of heat," says Christina Page, Yahoo's director of climate and energy strategy. "So we built a long, tall, narrow building with a coop along the top to vent the air."

Drawn in: At Hewlett-Packard’s data center in Billingham, England, large fans pull in fresh air.

Credit: HP

The 155,000-square-foot facility mimics the narrow design of a chicken coop and features louvers along the sides of the building so that prevailing winds can flow freely throughout the halls. On particularly hot days, the center can activate an evaporative cooling system, which uses less energy than traditional chillers. That means the facility uses at least 95 percent less water than a conventional data center, and 40 percent less energy—enough to power more than 9,000 households annually. What's more, with its preconstructed metal components, the chicken-coop structure can be assembled in less than six months.

"There's a good case to be made for the return on investment on a lot of green practices," says Page. "This data center was cheaper and faster to build, in addition to being more efficient on the operating-expenditure side."

The information-management company Iron Mountain, meanwhile, is taking advantage of natural geothermal conditions to slash energy consumption by locating a data center in a former limestone mine, 22 stories below ground. Iron Mountain's storage facility in Butler County, Pennsylvania, houses Room 48, whose racks of servers rely on the natural cooling properties of the limestone walls to remain at 13 ºC. Iron Mountain also developed a high-static air pressure differential cooling system that relies on high-velocity ducts, located in the cold aisles separating rows of servers, and linear return ducts in its hot aisles. The system creates winds that naturally cause cold air to sink and hot air to rise and exit the room through perforated ceiling tiles. The absence of air conditioners not only freed up about 30 percent more space in Room 48 but cut energy consumption for cooling by 10 to 15 percent compared with traditional data centers.

These are the kinds of unheralded changes that can really make a difference, says Mark Lafferty, director of strategic solutions at technology services provider CDW. "The really basic, non-glamorous, non-sexy stuff companies do can have a dramatic effect on the amount of resource consumption in a data center," he says.

Copyright Technology Review 2011.

Tuesday, January 11. 2011

Via Rhizome

-----

by Ceci Moss
