Monday, May 31. 2010

Via /Message

-----

by Stowe Boyd

Verlyn Klinkenborg makes some astute observations about his use of iPad and Kindle as a reader of books, in particular the role that books play in social intercourse and how this is diminished because of the restrictions that digital book tools place upon us:

The entire impulse behind Amazon’s Kindle and Apple’s iBooks assumes that you cannot read a book unless you own it first — and only you can read it unless you want to pass on your device. That goes against the social value of reading, the collective knowledge and collaborative discourse that comes from access to shared libraries. That is not a good thing for readers, authors, publishers or our culture.

Removing the social affordance of loaning someone a book is perhaps the worst crime perpetrated by the new world order of digital content. The communitarian aspect of shared books in libraries is similarly damaged.

Books should be social. Our personal property should be ours to loan to friends.

Imagine if Sears made it impossible for me to loan my chain saw, or if fingerprint recognition on my VW made it impossible for a neighbor to borrow it?

But, in the name of countering 'piracy', we can't loan The Moon Is A Harsh Mistress to a friend. And our society is lessened because of that.

Personal comment:

The problem with the evolution of electronic displays (iPad, iPhone, iWhatever, and the same is true of the Android line and other products) is that they are evolving more and more into pure consumption tools: "Just one click away from buying and consuming"... Unfortunately, they are less and less oriented toward creation, which was their strength at first compared to television, for example. And this is a shame, because it is the "television" or "majors' logic" that is entering computing and networks rather than the reverse. Not to mention that their "new business model" is usually to make you pay several times for the same thing. Bad...

Monday, May 17. 2010

-----

by Jolie O'Dell

Free software pioneer Richard Stallman spoke with us recently about the principles of free and open source programs, and what he had to say is as relevant and revolutionary as when he first started working in this field 30 years ago.

Our community has been talking a lot lately about what it means to be open, about what makes software open, about what makes companies open. No matter what talk of “openness” you hear in the media, no major web company — not Facebook, not Google, not Adobe and certainly not Apple — is creating truly free and open applications. Some may make gestures toward this ideology with APIs or “open source” projects, but ultimately, the company controls the software and the users’ data.

At the end of the day, if you want freedom and privacy, the only way to attain those goals is to abstain from proprietary software, including media players, social networks, operating systems, document storage, email services and any other program that is licensed, patented and locked down by a corporation. If you prefer convenience — well, best to stop complaining about your loss of freedom and/or privacy.

Like many heroes of the digital era, Richard Stallman is largely unsung by the general populace. Yet when it comes to user privacy and technological freedom, he’s probably one of the most committed individuals in the world.

By freedom, he means four things:

- The software should be freely accessible.

- The software should be free to modify.

- The software should be free to share with others.

- The software should be free to change and redistribute copies of the changed software.

Stallman started the Free Software Foundation. He even worked to make an operating system (GNU/Linux) that could be entirely free. And he is deeply opposed to proprietary software, software with commercial licenses that fly in the face of everything he calls freedom.

If you’ve ever downloaded music illegally, if you’ve ever complained about closed platforms, if you’ve ever gotten a serial number online for software you didn’t buy, if you’re worried about social networks controlling your data, you need to hear what Stallman has to say.

We got the chance to interview Stallman extensively at WordCamp San Francisco, and we’ll be posting segments of that interview each week. Stay tuned for insights on music sharing, Apple versus Adobe and more.

Note: Stallman asked that we use Ogg Theora, an open format, for encoding this video. To download the original video, go to its Wikimedia page. This video is published under a Creative Commons-No Derivatives license.

Personal comment:

There's a debate now on Mashable about what is open versus what is proprietary in technology, especially now that "open" has become a marketing term full of lies.

An interesting debate to follow, in particular for us, in light of two projects we are currently working on. In the first, we'll argue for an open approach to technology in public spaces (all of them, including outer space! I-Weather as Deep Space Public Lighting). In the second, we'll adopt a critical approach to surveillance (technologies) in public space (a future "Paranoid Shelter" project we have been working on for a while), followed maybe a bit later by a collaboration ("Globale Paranoïa") with French writer and essayist Eric Sadin.

It's really a big debate, and quite complex, just as the question of freedom is complex. For our part, we are interested at first in a perhaps less complex question: that of public space (public does not mean free, so to speak; it is shared in different ways and allows for or offers this and that, but not everything).

Monday, May 03. 2010

-----

The 'Living Earth Simulator' will mine economic, environmental and health data to create a model of the entire planet in real time.

When it comes to global crises, we're not short of complex systems that look close to the edge: the climate, the food supply, energy security, the banking system and so on. Add to this the threat of war in many parts of the world and the possibility of global pandemics and it's a wonder that anybody gets out of bed in the morning.

Science has certainly played an important role in understanding aspects of these systems but could it do more?

Today, Dirk Helbing at the Swiss Federal Institute of Technology in Zurich outlines an ambitious project to go further, much further.

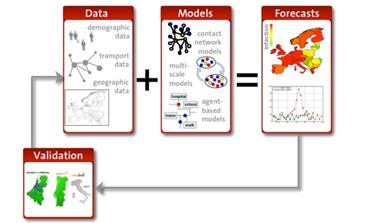

Helbing's idea is to create a kind of Manhattan project to study, understand and tackle these techno-socio-economic-environmental issues. His plan is to gather data about the planet in unheard of detail, use it to simulate the behaviour of entire economies and then to predict and prevent crises from emerging.

Think of it as a kind of Google Earth for society. We've all played with Google's 3D map of the Earth that uses real data to reveal not only the town where you live and work but your home and back garden too.

Imagine a similar model that uses in real time things like financial transactions, health records, travel details, carbon dioxide emissions and so on to build a model of not just the planet but the entire society that populates it. Helbing calls it 'reality mining'.

This model will be capable not only of modelling the planet in real time but also of simulating the future, rather in the manner of weather forecasters.

Helbing's simulator will look for economic bubbles and collapses, and warn of global pandemics and suggest how to tackle them. It will model and predict the outcome of regional conflicts and determine the effect of our behaviour on the climate. He even wants to create 'situation rooms' in which global leaders can view and manage crises as they occur.

This Google-Earth-on-steroids is to be called the Living Earth Simulator and Helbing's plan is to have it working by 2022 at a cost of a cool EUR 1 billion, funded by the European Commission. He's even assembled an impressive team to help, including partners from most of the top universities in Europe.

So what to make of this plan and its ambition? At first glance, it seems a somewhat worrying, even frightening, vision of the future. A Living Earth Simulator will change how we see ourselves and our planet in ways that are hard to imagine right now.

There's no question that we need to better understand the global nature of the society we live in and the effects that it has on the planet. We also need to know how to leverage the benefits of these global systems while limiting the downsides they can generate.

This capability is coming whether we like it or not. Clearly, the computing infrastructure of the near future will be increasingly capable of such a task.

The great worry, of course, is that it will not be the great public universities and government-funded research institutes that complete this task. The huge benefits of a Living Earth Simulator will make it a valuable tool for insurance companies, financial traders, global businesses and even search engines.

It's not hard to imagine a company like Google wanting and even building such a model. And if that seems hard to swallow, there are plenty of organisations that may be even less palatable operators of such a system. Imagine a Goldman Sachs Earth Simulator or one run by the People's Liberation Army. EUR 1 billion is just a small fraction of the money these organisations play with.

When viewed through that prism, it seems clear and even necessary that such a project is publicly funded and managed. Should the European Commission agree, Helbing, who is a world leader in the new science of techno-socio-economic studies, may well be the man who leads it.

A Living Earth Simulator is coming, one way or another, perhaps even to your living room or mobile communicator. The only question is who builds it.

Ref: arxiv.org/abs/1004.4969: The FuturICT Knowledge Accelerator

-----

An unprecedented analysis reveals that the micro-blogging service is remarkably effective at spreading "important" information.

By Christopher Mims

It's basically impossible for a journalist who relies on Twitter to find stories, stalk editors, rack up "whuffie" and beef with rap stars to be objective about the service.

Fortunately, I don't have to be, because four researchers from the Department of Computer Science at the Korea Advanced Institute of Science and Technology have performed a multi-part analysis of Twitter. They conclude that it's a surprisingly interconnected network and an effective way to filter quality information.

In a move unprecedented in the history of academic research on Demi Moore's chosen medium for feuding with Kim Kardashian, Kwak et al. built an array of 20 PCs to slurp down the entire contents of Twitter over the course of a month. If you were on Twitter in July 2009, you participated in their experiment.


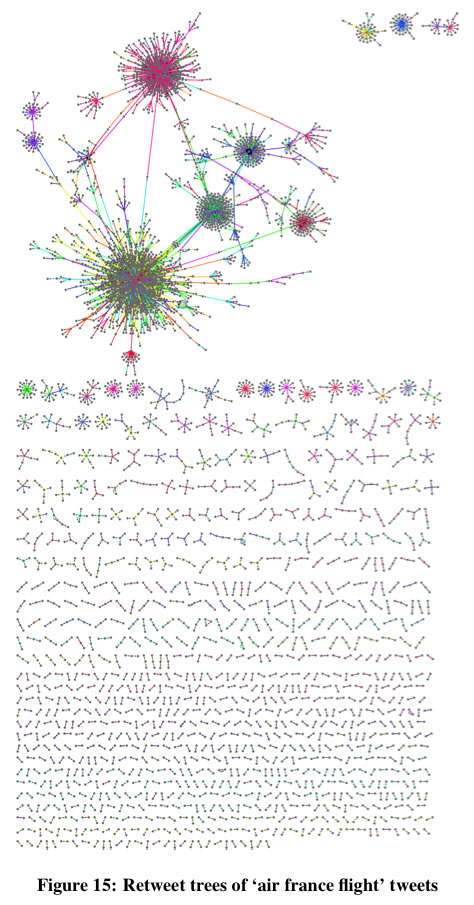

This "retweet tree analysis" shows instances of retweeting. When a message is retweeted just a few times it reaches a huge number of users. Credit: Kwak et al.

Four Degrees of Separation

The ideas behind Stanley Milgram's original "six degrees of separation" experiment, which suggested that any two people on earth could be connected by at most six hops from one acquaintance to the next, have been widely applied to online social networks.

On the MSN messenger network of 180 million users, for example, the median degree of separation is 6. On Twitter, Kwak et al. hypothesized that because only 22.1% of links are reciprocal (that is, I follow you, and you follow me as well) the number of degrees separating users would be longer. In fact, the average path length on Twitter is 4.12.

What's more, because 94% of the users on Twitter are fewer than five degrees of separation from one another, it's likely that the distance between any random Joe or Jane and say, Bill Gates, is even shorter on Twitter than in real life.
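To make the two measurements above concrete, here is a minimal sketch of how reciprocity and average path length can be computed on a follow graph. It is not Kwak et al.'s code: the four-account graph and the function names are hypothetical, and the averaging convention (all ordered pairs where one account can reach the other by following links) is an assumption made for illustration.

```python
from collections import deque

# Hypothetical toy follow graph: follows[u] is the set of accounts u follows.
follows = {
    "ann": {"bob", "cat"},
    "bob": {"ann"},
    "cat": {"dan"},
    "dan": {"ann"},
}

def reciprocity(follows):
    """Fraction of follow links that are returned by the followed account."""
    links = [(u, v) for u, targets in follows.items() for v in targets]
    mutual = sum(1 for u, v in links if u in follows.get(v, set()))
    return mutual / len(links)

def hops_from(source, follows):
    """Breadth-first search: fewest follow links from source to each reachable account."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for v in follows.get(u, set()):
            if v not in dist:
                dist[v] = dist[u] + 1
                queue.append(v)
    return dist

def average_path_length(follows):
    """Mean hop count over ordered pairs (u, v), u != v, where v is reachable from u."""
    total = pairs = 0
    for u in follows:
        for v, d in hops_from(u, follows).items():
            if v != u:
                total += d
                pairs += 1
    return total / pairs

print(reciprocity(follows))          # share of links that are mutual
print(average_path_length(follows))  # mean degrees of separation
```

In this toy graph only the ann/bob pair follow each other, so most links are one-way, yet every account can still reach every other in at most three hops. That is the same pattern, in miniature, that the study reports for Twitter as a whole.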

Information as Outbreak

"...No matter how many followers a user has, the tweet is likely to reach [an audience of a certain size] once the user's tweet starts spreading via retweets," says Kwak et al. "That is, the mechanism of retweet has given every user the power to spread information broadly [...] Individual users have the power to dictate which information is important and should spread by the form of retweet [...] In a way we are witnessing the emergence of collective intelligence."

If this reminds you of early '90s hyperbole about the then-new world wide web, it should! Back then the web was a raucous, disorganized, largely volunteer-led effort full of surprisingly informative Geocities pages and equally uninformative corporate websites.

These days we have to contend with the creeping power of what can only notionally be defined as media "content"--produced purely to appear at the top of search results. But it appears that the (so far) still entirely human-filtered paradise of Twitter may come to the rescue. Owing to the short path length between any two users, news travels fast in the tweet-o-sphere.

Earlier work suggested that the best way to get noticed on Twitter was to tweet at certain times of day, and Kwak et al.'s paper sheds some light on why this is the case: "Half of retweeting occurs within an hour, and 75% under a day." And it's those initial re-tweets that make all the difference: "What is interesting is from the second hop and on is that the retweets two hops or more away from the source are much more responsive and basically occur back to back up to 5 hops away."

There Are a Lot of Lonely People on Twitter

Clashing with the service's interconnectivity, Kwak et al.'s analysis also suggests that there are a lot of lonely people on Twitter, and not just the ones who are tweeting angry political screeds at 8 pm on a Saturday night. "67.6% of users are not followed by any of their followings in Twitter," they report. "We conjecture that for these users Twitter is rather a source of information than a social networking site."

Another possibility, left unexplored by Kwak and his colleagues, is simply that on Twitter, like real life, some people are much more popular than others.

Aside from its monkey + keyboard simplicity, the fact that links on Twitter do not have to be reciprocal may be its ultimate genius. To that end, I urge all of you to follow Technology Review on Twitter. I must warn you that, as an enormously influential inanimate object, it has no empathy or conscience, so don't take it personally when it doesn't follow you back.

Personal comment:

And I quite like the following remark about this blog post, addressed to someone expressing doubts about Twitter as an information network (doubts with which I partly agree):

"Instead of a social network, try thinking of Twitter as a distributed 140 char sensor network and you might have an easier time accepting it. Seems to me it's mostly bitter or unimaginative people who are riding the Twitter anti-hype. "

I think this idea of Twitter as a sort of network of analog sensors (the people) for anything is quite interesting. It points toward a human-computer ecosystem (a different sort of Mechanical Turk?).
