Wednesday, June 19. 2013

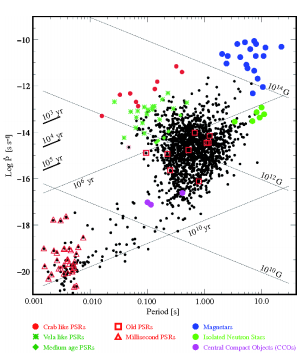

An Interplanetary GPS Using Pulsar Signals

-----

Spacecraft could determine their position anywhere in the solar system to within five kilometres using signals from x-ray pulsars, say astronomers.

Navigating in space is a tricky business. The usual method relies on Earth-based tracking stations to work out a spacecraft’s distance using radio waves, a process that is accurate to within a metre or so.

That’s fine for the radial distance, but tracking a spacecraft’s angular position is much harder because of the limited angular resolution of radio antennas. The current technology produces an uncertainty of about four kilometres per astronomical unit of distance between Earth and the spacecraft.

So for a spacecraft at the distance of Pluto, that’s an uncertainty of 200 kilometres and at the distance of Voyager 1, the uncertainty is 500 kilometres.
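These figures follow from simple scaling. Below is a minimal sketch that assumes the rule of thumb of roughly 4 km of lateral uncertainty per AU. It uses 50 AU and 125 AU as the distances implied by the quoted 200 km and 500 km. Those distances are back-calculated assumptions, not values given in the article.

```python
# Rule of thumb from the article: about 4 km of angular-position uncertainty per AU.
KM_PER_AU = 4.0


def angular_uncertainty_km(distance_au: float) -> float:
    """Lateral position uncertainty (km) for a spacecraft distance_au from Earth."""
    return KM_PER_AU * distance_au


# Distances back-calculated from the quoted 200 km and 500 km (assumptions).
for name, d_au in [("Pluto", 50.0), ("Voyager 1", 125.0)]:
    print(f"{name}: {d_au:.0f} AU -> {angular_uncertainty_km(d_au):.0f} km")
```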

So a way for spacecraft to determine their own position accurately would clearly be useful.

Today, Werner Becker at the Max Planck Institute for Radio Astronomy in Germany and a couple of pals have worked out the practical details for an autonomous spacecraft navigation system using pulsar signals. They say that technology being developed now would allow spacecraft to work out their position to within five kilometres anywhere in the solar system.

The idea of using pulsars to navigate in space dates back several decades. But Becker and co say previous analyses have been hampered by a limited knowledge of pulsars and the relatively bulky technology that has been available to detect them. Both of those things have changed dramatically in recent years.

First, the number of known pulsars is growing significantly. Astronomers are aware of well over 2,000 pulsars and the next generation of radio observatories are expected to reveal tens of thousands more.

The basic idea behind this interplanetary navigation system is to use the signals from these pulsars in essentially the same way that we use GPS satellites to navigate on Earth. By measuring the arrival time of pulses from at least three different pulsars and comparing this with their predicted arrival time, it is possible to work out a position in three-dimensional space.
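Here is a minimal sketch of that GPS-style fix. It assumes an idealised plane-wave model. Suppose the spacecraft sits at an offset r (in km) from the reference point where arrival times are predicted, and n is the unit vector toward a pulsar. Then each pulse arrives early by (n · r)/c. The sketch ignores clock errors, relativistic corrections and pulsar proper motion, and the function names are illustrative. With three pulsars the system is square. With more, the sketch takes a least-squares solution through the normal equations.

```python
import math

C_KM_S = 299_792.458  # speed of light, km/s


def solve3(a, b):
    """Solve the 3x3 system a x = b by Gaussian elimination with partial pivoting."""
    m = [list(row) + [rhs] for row, rhs in zip(a, b)]
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, 3):
            f = m[r][col] / m[col][col]
            for k in range(col, 4):
                m[r][k] -= f * m[col][k]
    x = [0.0, 0.0, 0.0]
    for r in (2, 1, 0):  # back substitution
        x[r] = (m[r][3] - sum(m[r][k] * x[k] for k in range(r + 1, 3))) / m[r][r]
    return x


def position_from_offsets(directions, offsets_s):
    """Least-squares position (km) relative to the reference point.

    directions: unit vectors pointing toward each pulsar
    offsets_s:  predicted minus observed arrival time (s) for each pulsar
    """
    # Each pulsar gives one linear equation n . r = c * dt; solve the
    # normal equations (N^T N) r = N^T (c * dt).
    ata = [[sum(n[i] * n[j] for n in directions) for j in range(3)] for i in range(3)]
    atb = [sum(n[i] * C_KM_S * dt for n, dt in zip(directions, offsets_s)) for i in range(3)]
    return solve3(ata, atb)


def unit(v):
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


# Hypothetical example: simulate offsets from a known position, then recover it.
true_r = [1000.0, -2000.0, 500.0]
dirs = [unit(v) for v in ([1, 0, 0], [0, 1, 0], [0, 0, 1], [1, 1, 1])]
dts = [sum(ni * ri for ni, ri in zip(n, true_r)) / C_KM_S for n in dirs]
print([round(x, 3) for x in position_from_offsets(dirs, dts)])
```

If the spacecraft clock is itself uncertain, its offset becomes a fourth unknown. This is the same reason GPS receivers need four satellites. The sketch assumes a perfect clock.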

(Since pulsars produce a stream of identical pulses, it is possible to generate any number of ambiguous solutions when doing this. But Becker and co point out that these can be eliminated by constraining the solutions to a finite volume around the assumed position.)
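Here is a minimal sketch of that pruning step. For readability it assumes three hypothetical pulsars lying along orthogonal axes. Each one then pins down a single coordinate, but only up to a whole number of pulse "wavelengths" c·P. Real pulsar directions are not orthogonal. The periods, positions and function name are all illustrative.

```python
import itertools
import math

C_KM_S = 299_792.458  # speed of light, km/s


def candidate_positions(periods_s, phases, assumed_km, radius_km):
    """Enumerate positions consistent with the measured fractional pulse phases.

    Simplification: pulsar i lies along coordinate axis i, so phase phi_i fixes
    coordinate i only up to a whole number of spacings c * P_i.
    """
    per_axis = []
    for p, phi, x0 in zip(periods_s, phases, assumed_km):
        spacing = C_KM_S * p  # distance between adjacent equal-phase surfaces
        k_min = math.floor((x0 - radius_km) / spacing - phi)
        k_max = math.ceil((x0 + radius_km) / spacing - phi)
        per_axis.append([spacing * (k + phi) for k in range(k_min, k_max + 1)])
    # Keep only combinations inside the search sphere around the assumed position.
    return [
        pos for pos in itertools.product(*per_axis)
        if math.dist(pos, assumed_km) <= radius_km
    ]


periods = [0.0016, 0.0030, 0.0046]   # hypothetical millisecond-pulsar periods, s
true_pos = [1234.0, -567.0, 890.0]    # hypothetical true position, km
phases = [(x / (C_KM_S * p)) % 1.0 for x, p in zip(true_pos, periods)]
assumed = [1200.0, -500.0, 900.0]     # rough prior estimate, km

for radius in (5000.0, 200.0):
    hits = candidate_positions(periods, phases, assumed, radius)
    print(f"search radius {radius:.0f} km: {len(hits)} candidate position(s)")
```

With a 200 km search radius only the true position survives in this example. The ambiguity spacing c·P is roughly 480 to 1,380 km for these periods, which exceeds the 400 km search diameter along each axis.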

The feasibility of such a system depends on a number of important practical factors, largely determined by the wavelength of the pulsar radiation that the navigation system is designed to detect. This determines the antenna collecting area, the power consumption, the weight of the navigation system, and of course its cost.

Becker and co calculate that for 21-centimetre waves, the spacecraft would require an antenna with a collecting area of 150 square metres.

But a better idea, they say, is to use pulsars that emit x-rays, since the technology for collecting and focusing x-rays has improved dramatically in recent years.

One measure of the performance of x-ray mirrors is their mass. The mirror used on the Chandra X-ray Observatory launched in 1999 had a mass of 18.5 tonnes per square metre of effective collecting area. By comparison, the state-of-the-art glass micropore optics being made today have a mass of only 25 kilograms for the same collecting area.
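To compare the two figures directly, here is a small sketch that scales each areal density to a hypothetical effective area of 0.5 m². That area is an illustrative value, not one taken from the paper.

```python
CHANDRA_KG_PER_M2 = 18_500.0   # 18.5 tonnes per m^2 of effective collecting area
MICROPORE_KG_PER_M2 = 25.0     # glass micropore optics, same basis


def mirror_mass_kg(area_m2: float, kg_per_m2: float) -> float:
    """Mirror mass for a given effective collecting area."""
    return area_m2 * kg_per_m2


area = 0.5  # hypothetical effective area, m^2
print(mirror_mass_kg(area, CHANDRA_KG_PER_M2), "kg with Chandra-type optics")
print(mirror_mass_kg(area, MICROPORE_KG_PER_M2), "kg with micropore optics")
print(CHANDRA_KG_PER_M2 / MICROPORE_KG_PER_M2, "times lighter per unit area")
```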

So x-ray optics make good sense for pulsar navigation, say Becker and co. “Using the X-ray signals from millisecond pulsars we estimated that navigation would be possible with an accuracy of ±5 km in the solar system and beyond,” they say.
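Here is a rough back-of-the-envelope check. It assumes the simple model in which a position error Δx along a pulsar's line of sight shifts the pulse arrival time by Δx/c. On that basis, a ±5 km accuracy corresponds to timing each pulsar to within roughly ±17 microseconds:

$$
\Delta t = \frac{\Delta x}{c} \approx \frac{5\ \mathrm{km}}{2.998 \times 10^{5}\ \mathrm{km/s}} \approx 1.7 \times 10^{-5}\ \mathrm{s} \approx 17\ \mu\mathrm{s}
$$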

It may not be necessary to have this accuracy for most missions envisaged in the short term. However, Becker and pals are optimistic about its future potential: “It is clear already today that this navigation technique will find its applications in future astronautics.” As they say, to infinity and beyond …

Ref: arxiv.org/abs/1305.4842: Autonomous Spacecraft Navigation With Pulsars

Augmenting Social Reality in the Workplace

-----

By Ben Waber

A new line of research examines what happens in an office where the positions of the cubicles and walls—even the coffee pot—are all determined by data.

Can we use data about people to alter physical reality, even in real time, and improve their performance at work or in life? That is the question being asked by a developing field called augmented social reality.

Here’s a simple example. A few years ago, with Sandy Pentland’s human dynamics research group at MIT’s Media Lab, I created what I termed an “augmented cubicle.” It had two desks separated by a wall of plexiglass with an actuator-controlled window blind in the middle. Depending on whether we wanted different people to be talking to each other, the blinds would change position at night every few days or weeks.

The augmented cubicle was an experiment in how to influence the social dynamics of a workplace. If a company wanted engineers to talk more with designers, for example, it wouldn’t set up new reporting relationships or schedule endless meetings. Instead, the blinds in the cubicles between the groups would go down. Now as engineers passed the designers it would be easier to have a quick chat about last night’s game or a project they were working on.

Human social interaction is rapidly becoming more measurable at a large scale, thanks to always-on sensors like cell phones. The next challenge is to use what we learn from this behavioral data to influence or enhance how people work with each other. The Media Lab spinoff company I run uses ID badges packed with sensors to measure employees’ movements, their tone of voice, where they are in an office, and whom they are talking to. We use data we collect in offices to advise companies on how to change their organizations, often through actual physical changes to the work environment. For instance, after we found that people who ate in larger lunch groups were more productive, Google and other technology companies that depend on serendipitous interaction to spur innovation installed larger cafeteria tables.

In the future, some of these changes could be made in real time. At the Media Lab, Pentland’s group has shown how tone of voice, fluctuation in speaking volume, and speed of speech can predict things like how persuasive a person will be in, say, pitching a startup idea to a venture capitalist. As part of that work, we showed that it’s possible to digitally alter your voice so that you sound more interested and more engaged, making you more persuasive.

Another way we can imagine using behavioral data to augment social reality is a system that suggests who should meet whom in an organization. Traditionally that’s an ad hoc process that occurs during meetings or with the help of mentors. But we might be able to draw on sensor and digital communication data to compare actual communication patterns in the workplace with an organizational ideal, then prompt people to make introductions to bridge the gaps. This isn’t the LinkedIn model, where people ask to connect to you, but one where an analytical engine would determine which of your colleagues or friends to introduce to someone else. Such a system could be used to stitch together entire organizations.
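Here is a hypothetical sketch of such an engine, built on loud assumptions. Observed conversations are reduced to person-to-person ties. The "organizational ideal" is simply a set of team pairs that ought to be talking. A gap is bridged by asking a colleague who already talks to both sides to make the introduction. The names and data are invented, and this does not describe any system the author's company runs.

```python
def suggest_introductions(team_of, ties, ideal_team_pairs):
    """Suggest (broker, person_x, person_y) introductions for team-level gaps.

    team_of:          person -> team
    ties:             set of frozenset({p, q}) observed conversations between two people
    ideal_team_pairs: set of frozenset({team_x, team_y}) that should be in contact
    """
    neighbours = {p: set() for p in team_of}
    for p, q in map(tuple, ties):
        neighbours[p].add(q)
        neighbours[q].add(p)

    # Team pairs that already have at least one direct conversation.
    linked = {frozenset((team_of[p], team_of[q])) for p, q in map(tuple, ties)}

    suggestions = []
    for gap in sorted(ideal_team_pairs - linked, key=sorted):
        team_x, team_y = sorted(gap)
        # Look for a broker who already talks to someone on each side of the gap.
        for broker in sorted(team_of):
            xs = sorted(n for n in neighbours[broker] if team_of[n] == team_x)
            ys = sorted(n for n in neighbours[broker] if team_of[n] == team_y)
            if xs and ys:
                suggestions.append((broker, xs[0], ys[0]))
                break
    return suggestions


team_of = {"ana": "eng", "bo": "eng", "cy": "design", "di": "design", "ed": "sales"}
ties = {frozenset(p) for p in [("ana", "bo"), ("cy", "di"), ("ed", "ana"), ("ed", "cy")]}
ideal = {frozenset(("eng", "design")), frozenset(("eng", "sales"))}
print(suggest_introductions(team_of, ties, ideal))
```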

Unlike augmented reality, which layers information on top of video or your field of view to provide extra information about the world, augmented social reality is about systems that change reality to meet the social needs of a group.

For instance, what if office coffee machines moved around according to the social context? When a coffee-pouring robot appeared as a gag in a TV commercial two years ago, I thought seriously about the uses of a coffee machine with wheels. By positioning the coffee robot between two groups, for example, we could increase the likelihood that certain coworkers would bump into each other. Once we detected, using smart badges or some other sensor, that the right conversations were occurring between the right people, the robot could move on to another location. Vending machines, bowls of snacks: all could migrate their way around the office on the basis of social data. One demonstration of these ideas came from a team at Plymouth University in the United Kingdom. In their “Slothbots” project, slow-moving robotic walls subtly change their position over time to alter the flow of people in a public space, constantly tuning their movement in response to people’s behavior.
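One way to make the coffee-robot idea concrete is a simple placement rule. The robot parks halfway between two groups' centroids until badge data show enough conversations between them, then moves on. The rule, the threshold and the coordinates are all hypothetical. None of them come from the Slothbots project or any deployed system.

```python
from statistics import mean


def centroid(points):
    """Average (x, y) position of a group's desks."""
    return (mean(x for x, _ in points), mean(y for _, y in points))


def coffee_robot_target(group_a, group_b):
    """Hypothetical placement rule: halfway between the two groups' centroids."""
    (ax, ay), (bx, by) = centroid(group_a), centroid(group_b)
    return ((ax + bx) / 2, (ay + by) / 2)


def next_stop(pairs_to_connect, positions, conversations, threshold=3):
    """Return the target for the first group pair still below the conversation threshold."""
    for a, b in pairs_to_connect:
        if conversations.get((a, b), 0) < threshold:
            return coffee_robot_target(positions[a], positions[b])
    return None  # every targeted pair is already talking enough


positions = {"eng": [(0, 0), (2, 0)], "design": [(10, 4), (12, 4)]}  # hypothetical desks
print(next_stop([("eng", "design")], positions, conversations={}))
```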

The large amount of behavioral data that we can collect by digital means is starting to converge with technologies for shaping the world in response. Will we notify people when their environment is being subtly transformed? Is it even ethical to use data-driven techniques to persuade and influence people this way? These questions remain unanswered as technology leads us toward this augmented world.

Ben Waber is cofounder and CEO of Sociometric Solutions and the author of People Analytics: How Social Sensing Technology Will Transform Business, published by FT Press.

Personal comment:

Following my previous posts about data, monitoring or data centers: or when your "ashtray" will come close to you and your interlocutor, at the "right place", after having suggested that you "meet" him...

Besides this trivial (as well as uninteresting and boring) functional example, there are undoubtedly tremendous implications and stakes in the fact that we might come to algorithmically negotiated social interactions. In fact, we might increase these types of interactions, including physical ones, as we are already immersed in algorithmic social interactions.

Which rules and algorithms, to do what? Again, we come back to the point where architects will have to start designing algorithms and implementing them in close collaboration with developers.

Monday, June 17. 2013

Alive 2013

An interesting conference, taking place at the ETHZ CAAD department next July, that I'm forwarding here:

---------------

Via DARCH - ETHZ

By Manuel Kretzer

Dear friends, colleagues and students,

I'm happy to invite you to join us for Alive, the international symposium on adaptive architecture.

The full-day event will take place on July 8th, 2013 / 9:00 - 18:00 at the Chair for Computer Aided Architectural Design, ETH Zürich-Hönggerberg, HPZ Floor F.

Speakers include: Prof. Ludger Hovestadt (ETH Zürich, CH) | Prof. Philip Beesley (University of Waterloo, CA) | Prof. Kas Oosterhuis (TU Delft, NL) | Martina Decker (DeckerYeadon, US) | Claudia Pasquero (ecoLogicStudio, UK) | Manuel Kretzer (ETH Zürich, CH) | Tomasz Jaskiewicz (TU Delft, NL) | Jason Bruges (Jason Bruges Studio, UK) | Areti Markopoulou (IAAC, ES) | Ruairi Glynn (UCL, UK) | Simon Schleicher (Universität Stuttgart, DE) | John Sarik (Columbia University, US) | Stefan Dulman (Hive Systems, NL)

More info on the speakers, the detailed program, location and registration can be found on the event's website and the attached flyer. www.alive2013.wordpress.com

The symposium is free of charge; however, registration by July 3rd, 2013 is mandatory. Seats are limited. http://alive13.eventbrite.com

The event is organised by Manuel Kretzer and Tomasz Jaskiewicz, hosted by the Chair for CAAD and supported through the Swiss National Science Foundation.

Tuesday, June 11. 2013

Deterritorialized Living, residency, storyboard for workshop and follow-up #1 (open call)

By fabric | ch

-----

I came back from Beijing more than a month ago now. Before Christian Babski returns to China next week for fabric | ch, for another month to finish our residency at Tsinghua University (until mid-July), I'm taking a bit of time to write a follow-up. It covers the short workshop/sketch session I led there with the students of Prof. Zhang Ga, at the Tsinghua Art & Sciences Media Laboratory.

The students came from many different fields of art, design and sciences and worked in interdisciplinary teams. I must admit that the results didn't really meet my (certainly too high) expectations and ended up somewhat too general and a bit predictable. This was especially because the students didn't have time to go any further than some rough sketches, due to parallel academic activities. Nonetheless, the ideas developed during their week of work helped us discuss and clear some paths for our own project, which we plan to finish this summer.

The good news that came out of this is that fabric | ch and TASML plan to further develop the ideas surrounding the subject of our workshop/residency (Deterritorialized Living). Next September, we will open a call on different university campuses and art schools in China (Tsinghua and CAFA in Beijing, the China Academy of Arts in Hangzhou and possibly Tongji in Shanghai) for interdisciplinary teams of architects, interaction designers, designers at large, artists and scientists. This open call, as well as our full residency work, will become an Associate Project of the next and much anticipated Lisbon Triennale (Close, Closer), co-curated by Beatrice Galilee, Liam Young, Mariana Pestana and Jose Esparza Chong Cuy.

The purpose of our residency is to work on a new project related to the idea of "deterritorialization". We understand it as a state of decontextualisation / dislocation / disembodiment / ... triggered by networked services, "clouds" of all sorts and intensive mobility (both physical and mediated), as well as by various artificialization phenomena.

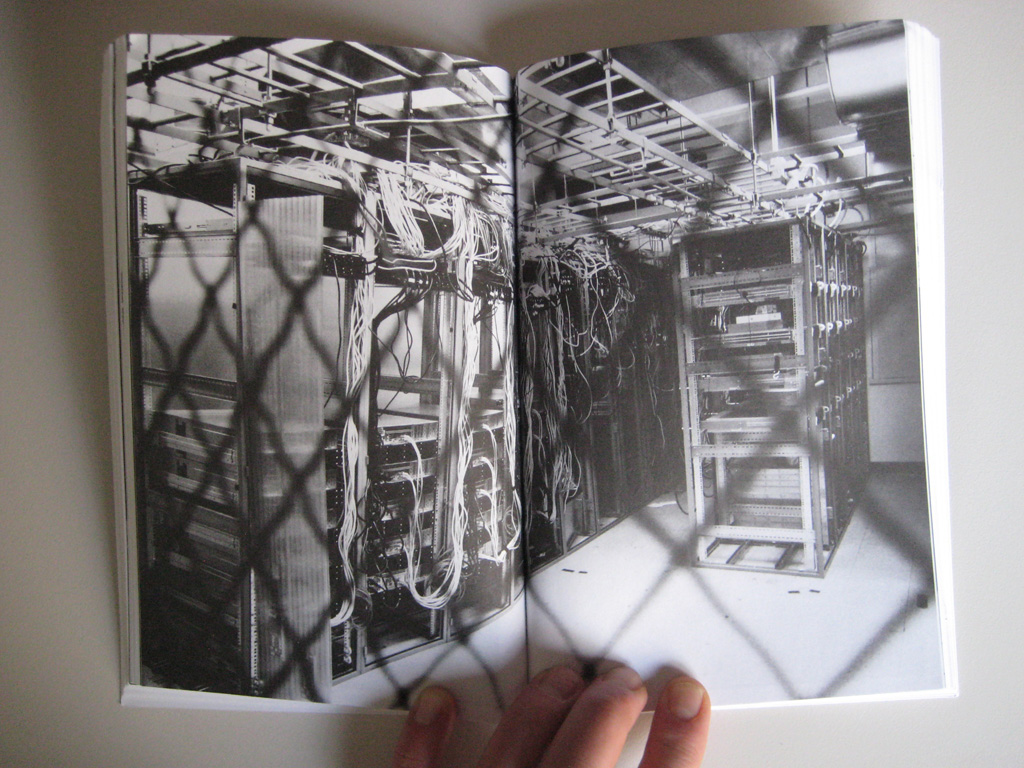

The physical figure of the data center undoubtedly appears behind this "state" that interests us, so far mainly (but not only) as the infrastructure that holds it. Yet the paradox, and that's where it probably gets more interesting, is that "deterritorialization" is becoming very ambient these days... It's almost a new "context", a geo-engineered one (?) that follows us everywhere: a situation that is always potentially present, somehow proximate. We live in this "context" (too). The intention of our scheme is therefore to consider it and to develop artifacts within this frame of research.

At this stage of the work, we plan for our residency project to end up as a proposal similar in form to a previous project we did in collaboration with architect Philippe Rahm, back in 2001 (!) and 2009 (I-Weather). That is, an open platform: a processed and networked atmosphere that everybody will be able to connect to in order to develop their own projects and devices. We hope to deliver an "ambient deterritorialization" in the form of a made atmosphere, the most elementary architecture according to us. It will also act in some ways as an information design about the global state of networks. This atmosphere will then serve us for more elaborate projects and installations.

Workshop:

The workshop we held with the students considered the situation I roughly described above. Yet we decided to shrink it to a manageable size: the computers cabinet (which could be considered a very small, movable data center), but one big enough to become permanently inhabitable. The domestic inhabitability of this structure for one or two persons became part of our design brief.

Image of several server cabinets, taken from Clog: Data Space (2012).

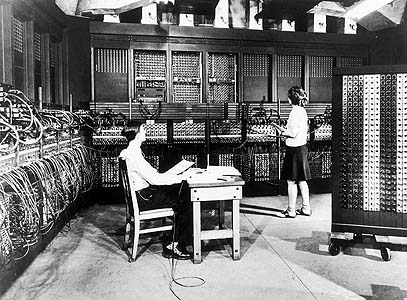

... and one of the first general-purpose computers, the Eniac I, back in 1946.

Due to its new "program", this extended computers cabinet would then have to combine specific physical needs for the machines (e.g. cooling the servers with fresh air, expelling hot and ionized air outside, energy supply) and for humans (breathable air, livable levels of heat and humidity, comfort zone, etc.). It would possibly also try to find some symbiosis between them, AND provide the frame for dedicated mediated services.

We added a twist to our fictional brief: the existence of a second "sun" in the cabinet... In our minds, this second sun would be one of the artifacts we planned to develop in the context of our residency: Deterritorialized Daylight, a permanent daylight, always on yet constantly and slowly varying in intensity.

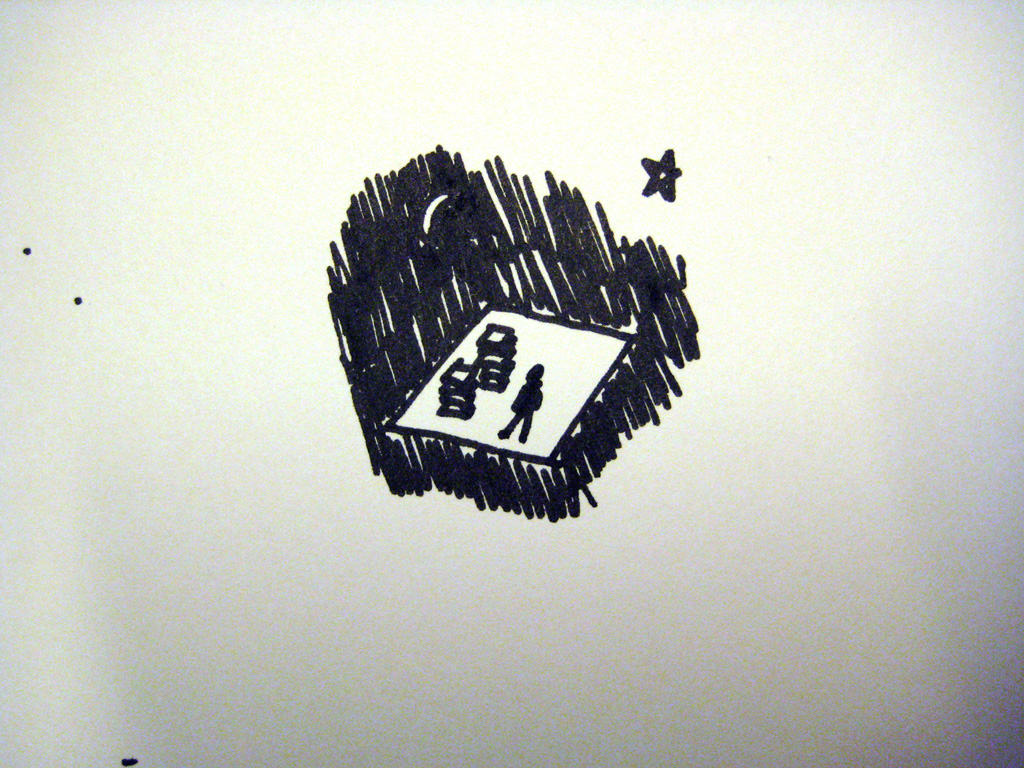

Step by step storyboard for the workshop: "Inhabiting the computers cabinet, with two suns"

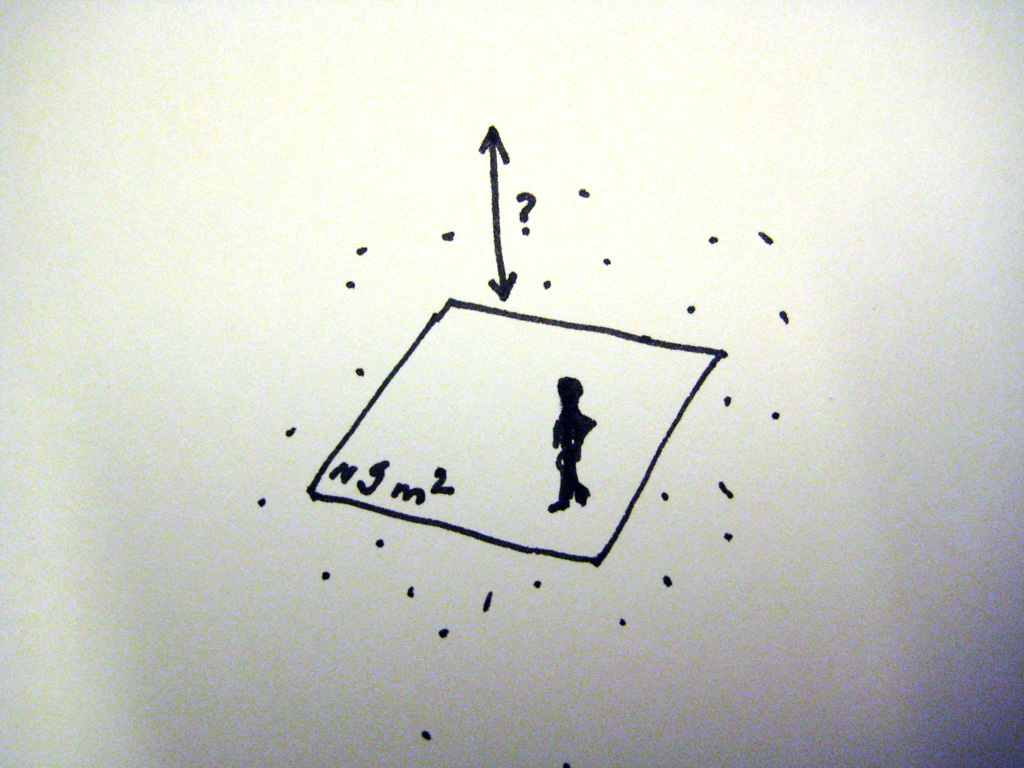

(1, left) 9 square meters (in any shape) to inhabit. Height is free to define. Fresh air surrounds this area. This is the expanded space of the Computers Cabinet. (2, right) A certain number of servers populate this space, along with the person(s) that inhabit it. Machines and humans have to share this place. The space is connected to the nearby and far outsides (by the means of physical and network communications). It provides services of different sorts, physical and digital, local and networked.

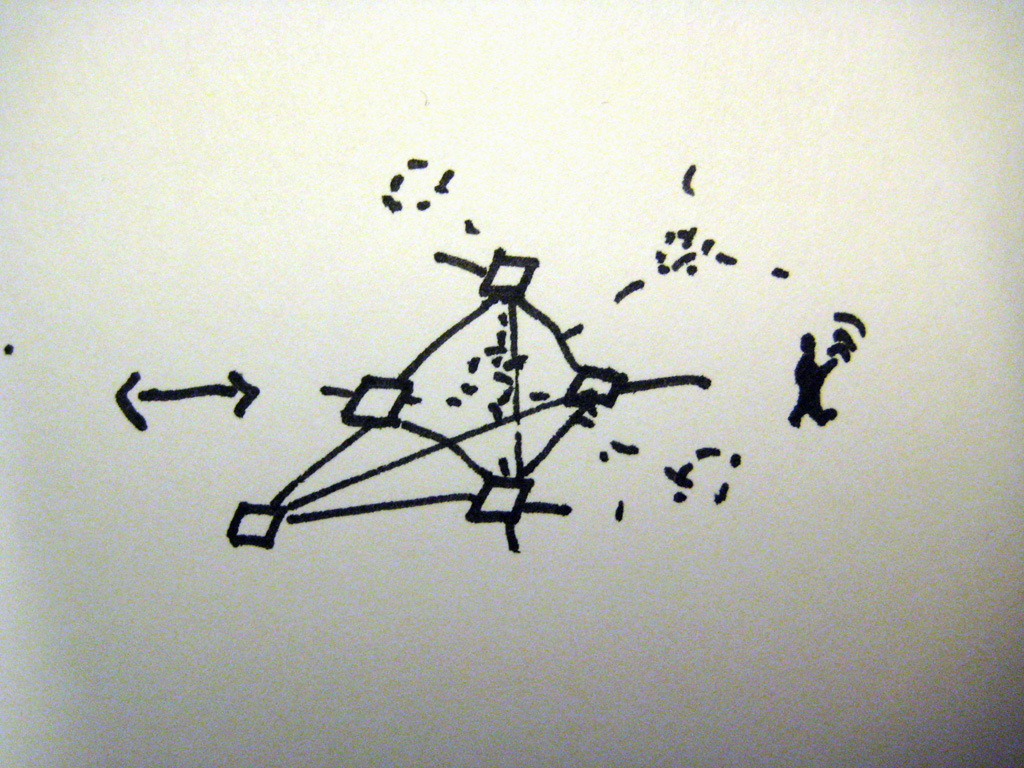

(3) The 9 m2 Computers Cabinet is part of a larger network of connected cabinets that possibly create a distributed larger structure.

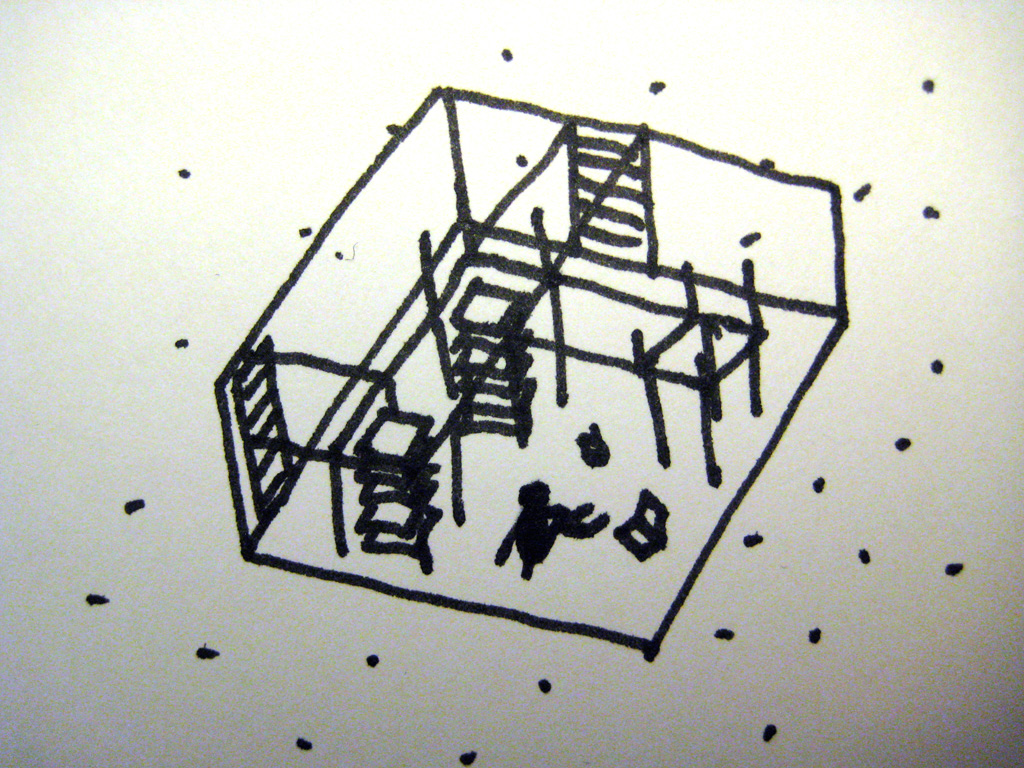

(4) The 9 m2 livable Computers Cabinet, with its computers and racks (whose modularity, organization and shape can also be redesigned), could be considered as the extension of a regular server cabinet (5).

(6) Fresh air needs to enter the space and cool down the machines. (7) Hot and ionized air comes out of the computers. Not very pleasant to breathe. Ionized air triggers static electricity.

(8) A natural sun with its natural cycle of days and seasons runs outside. (9) ... as well as a regular night.

(10) An "artificial sun" or daylight is also present within the 9 m2 of the Computers Cabinet. It is always "on", yet at different strengths depending on parameters and live feeds. (11) This "sun" is called Deterritorialized Daylight. It's a daylight that is computed, artificial and triggered by the overall users' activities on the networks (both humans and robots). It is therefore a sort of reverse daylight: users and their activities/actions, as well as other parameters, generate the amount of deterritorialized daylight. Historically, or naturally, it has been the reverse: people became active when daylight was "on", until fire, candles, gas and then electricity started to change the rules.
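As an illustration only, here is one possible mapping from network activity to a Deterritorialized Daylight level. A floor keeps the light always on, activity raises the target intensity, and exponential smoothing keeps the change slow. The parameter names and values (min_level, smoothing, the activity units) are hypothetical and are not fabric | ch's implementation.

```python
def daylight_levels(activity, min_level=0.1, max_activity=None, smoothing=0.05):
    """Map network-activity readings to a slowly varying light level in [min_level, 1].

    min_level keeps the light always "on"; smoothing is the weight of an
    exponential moving average, so each reading nudges the level only slightly.
    """
    peak = max_activity or max(activity, default=0.0) or 1.0  # avoid dividing by zero
    level = min_level
    levels = []
    for a in activity:
        target = min_level + (1.0 - min_level) * min(a / peak, 1.0)
        level += smoothing * (target - level)
        levels.append(level)
    return levels


# Hypothetical activity readings (e.g. requests per minute across the network).
print([round(v, 3) for v in daylight_levels([0, 0, 100, 100, 100])])
```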

(12) All in all, this is the full situation we asked them to consider: a 9 m2 space and XX m3 volume, an extended and expanded computers cabinet to inhabit, with two "suns" (and a set of other constraints/possibilities!)

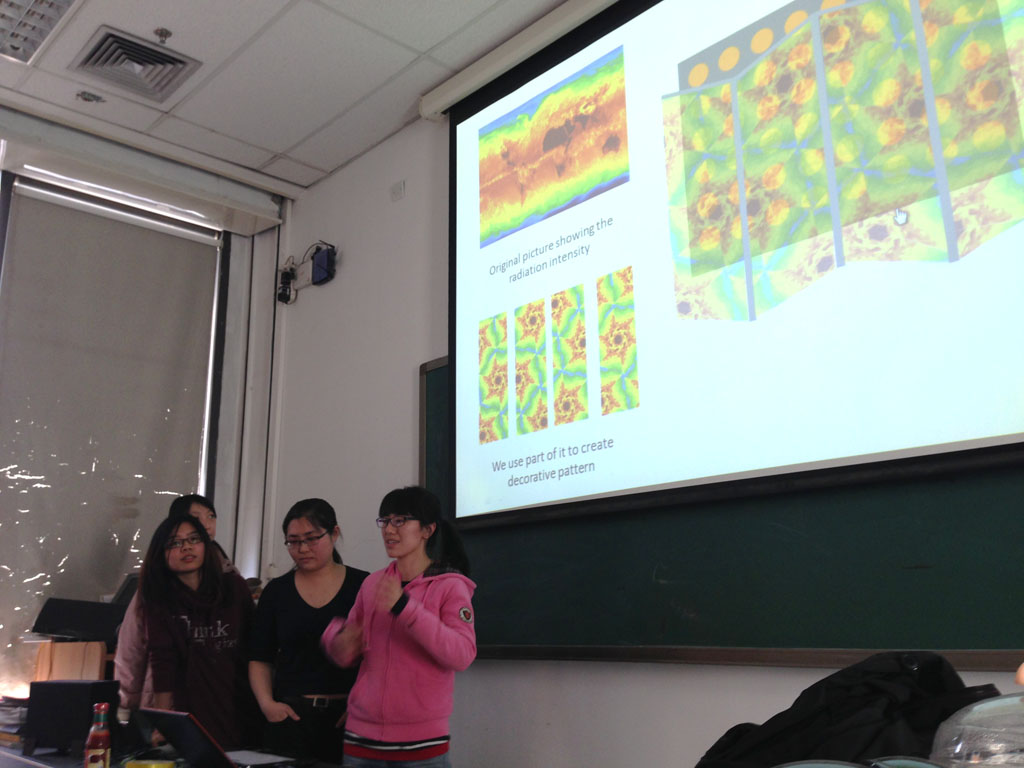

Finally, and that was the real purpose of the workshop, we asked the TASML students to develop proposals for this (not so fictional) context. Their projects could address the overall situation if they wished, but mostly we asked them to think about smaller things that would help interact with the situation and that would take place within it: objects, devices, interfaces, programs, etc.

Students' sketches:

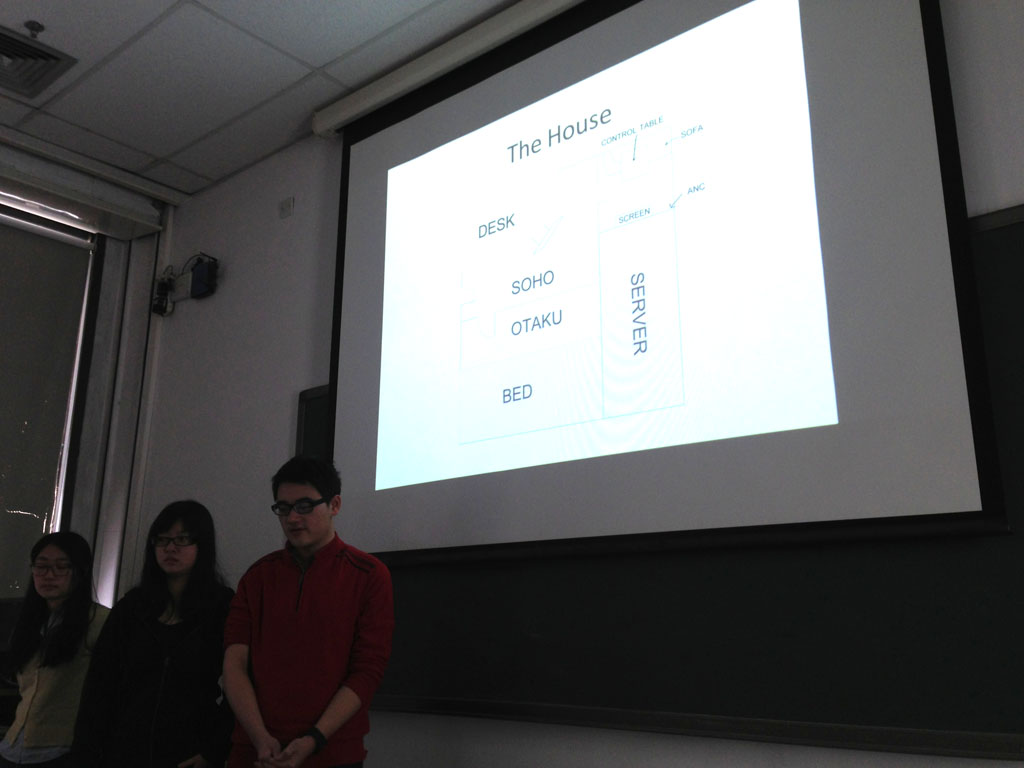

Group #1 (Na Wen, Yin Yiyi, Fang Ke) addressed the complete situation, as did all 4 groups in fact. They came up with the idea of combining two concepts: the soho (for small office - home office) and the otaku. To my wiki understanding, otaku is a word that means both "your house" and a person devoted to an intense individual hobby, recently associated with manga readers and gamers. The computer cabinet would become a space where somebody could live partly withdrawn from the "real" world into his fantasies, a sort of minimal, self-sufficient living space. A screen device was also envisioned, understood as an artificial night sky with stars. Only, the map of the "stars" would follow the locations of other cabinets, and their brightness would reflect their activity.

-

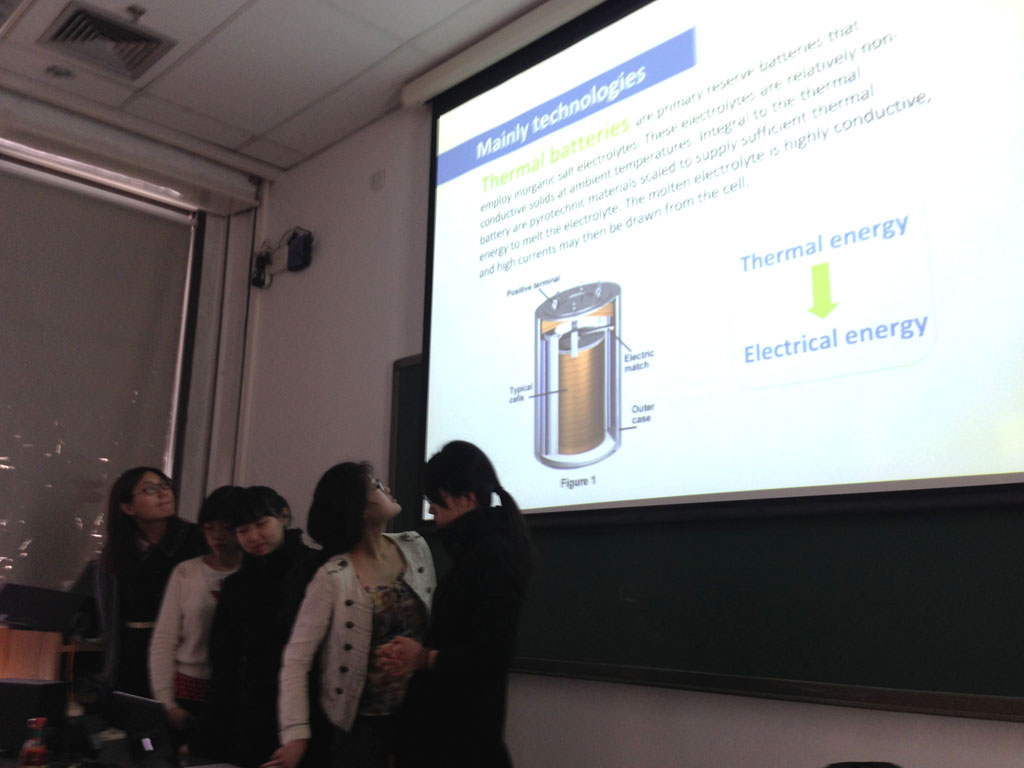

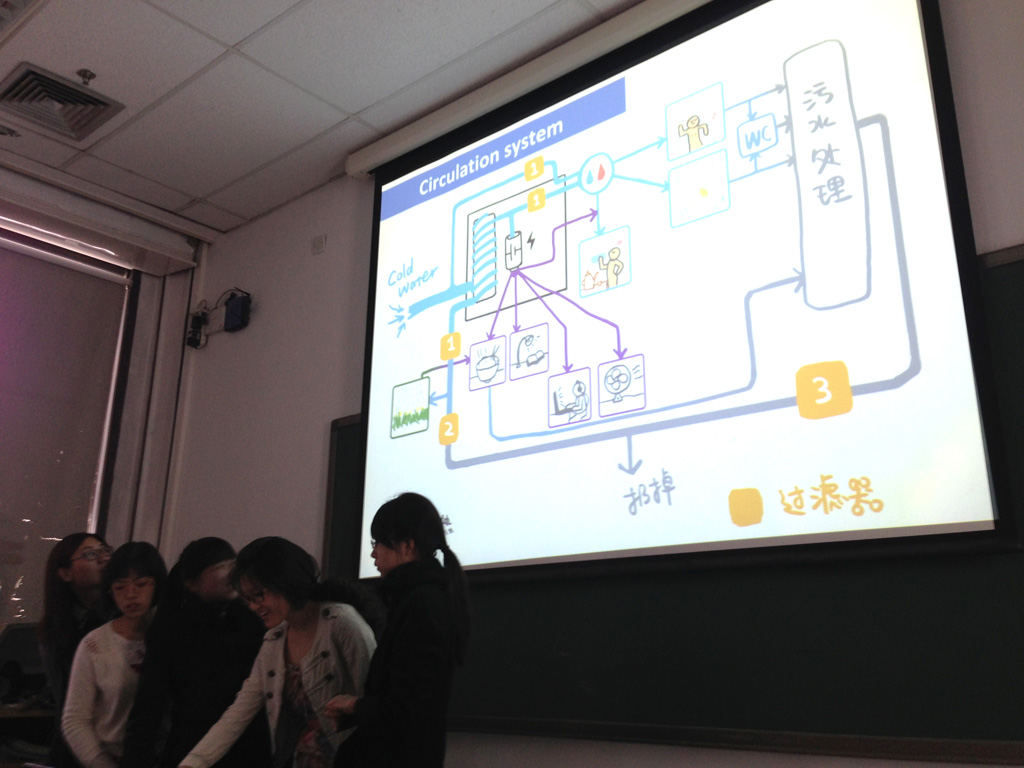

Group #2 (Yang Jieqin, Mou Xinzhu, Zhang Minzhi, Dong Huiyang) focused its attention on a device that converts heat into electricity. (Note: a "thermopile", which is not yet efficient enough to be used in everyday situations due to its low performance, so that part was fiction too.) They thought about transforming the heat coming out of the servers into electricity and thereby producing electricity for the Cabinet (which is not possible today, at least not enough to really power demanding devices). This heat would also serve to pre-heat the water. Servers were located under the ceiling, thereby creating a heat zone. The overall proposal was then a self-sufficient space (bio-sphere like) with a cycle of functions that would follow a logic of distribution from hot > cold, clear water > waste water. The water at the end of the cycle would serve to grow vegetables that would then clean the air, etc.

-

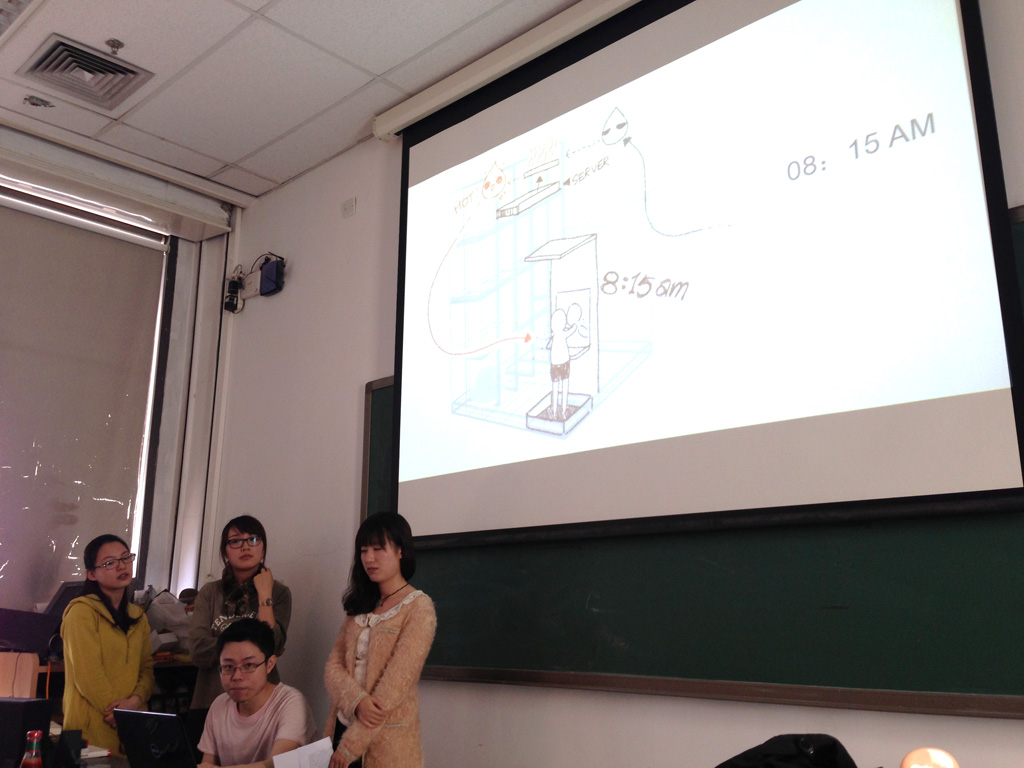

Group #3 (Ao Mengxing, Qian Yunzi, Liu Chang, Zhang Huixin) had an interesting early sketch: they started to design the Cabinet based on the modular system of the actual server cabinet, extending it to the 9 m2 size and filling it with other domestic functions. A description of activities happening over time in this space started to suggest that there could be some migration within it. I suggested they could try a mapping between time, location in the cabinet, functions and possibly heat, while Zhang thought about redesigning the modular aspects of the regular cabinet. I believe these approaches could have led to a very interesting singular project or to dedicated devices. Shame that they didn't continue!

-

Group #4 (Zhang Yingxue, Lin Han, Sun Siran, Tao Lu) combined the approaches of its four members into one proposal (drawing). The overall design also leaned a bit toward the self-sustainable approach (bio-sphere oriented), yet with different devices: a decorative wallpaper (information design), a wall of vegetables (air cleaning and food production), a game based on an exercise bicycle that would produce electricity, and a strange whirlpool.

-

... and finally Group #5 stayed asleep on the day of presentation!

All in all, there were some interesting early sketches, but they probably stopped too early or went in the wrong direction after the first presentation, toward too applied or functional approaches. That's why Zhang, Cheng Guo (TASML assistant) and I discussed planning a public call for students on different campuses, as the theme looks quite open to interesting interdisciplinary proposals. (More will follow about this.)

One group still works on the project though and will deliver its semester work about it.

Monday, June 10. 2013

Quantum Computing AI Lab

-----

By Charles Choi

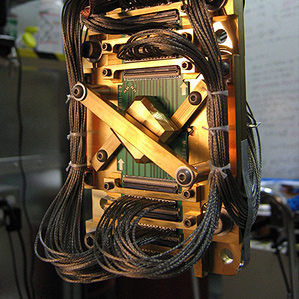

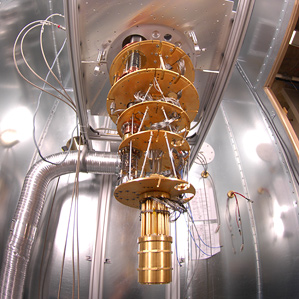

The Quantum Artificial Intelligence Lab will use the most advanced commercially available quantum computer, the D-Wave Two.

Quantum computing took a giant leap forward on the world stage today as NASA and Google, in partnership with a consortium of universities, launched an initiative to investigate how the technology might lead to breakthroughs in artificial intelligence.

The new Quantum Artificial Intelligence Lab will employ what may be the most advanced commercially available quantum computer, the D-Wave Two, which a recent study confirmed was much faster than conventional machines at solving specific problems (see “D-Wave’s Quantum Computer Goes to the Races, Wins”). The machine will be installed at the NASA Advanced Supercomputing Facility at the Ames Research Center in Silicon Valley and is expected to be available for government, industrial, and university research later this year.

Google believes quantum computing might help it improve its Web search and speech recognition technology. University researchers might use it to devise better models of disease and climate, among many other possibilities. As for NASA, “computers play a much bigger role within NASA missions than most people realize,” says quantum computing expert Colin Williams, director of business development and strategic partnerships at D-Wave. “Examples today include using supercomputers to model space weather, simulate planetary atmospheres, explore magnetohydrodynamics, mimic galactic collisions, simulate hypersonic vehicles, and analyze large amounts of mission data.”

Quantum computers exploit the bizarre quantum-mechanical properties of atoms and other building blocks of the cosmos. At its very smallest scale, the universe becomes a fuzzy, surreal place: objects can seemingly exist in more than one place at once or spin in opposite directions at the same time.

While regular computers symbolize data in bits, 1s and 0s expressed by flicking tiny switch-like transistors on or off, quantum computers use quantum bits, or qubits, that can essentially be both on and off, enabling them to carry out two or more calculations simultaneously. In principle, quantum computers could prove dramatically faster than normal computers for certain problems because they can run through every possible combination at once. In fact, a quantum computer with 300 qubits could run more calculations in an instant than there are atoms in the universe.
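The 300-qubit claim rests on state counting: n qubits span 2^n basis states. Below is a quick check against the commonly cited estimate of about 10^80 atoms in the observable universe, which is an order of magnitude rather than an exact count. Note that a measurement still returns only one of those states.

```python
import math

ATOMS_IN_UNIVERSE = 10 ** 80  # commonly cited order-of-magnitude estimate


def basis_states(n_qubits: int) -> int:
    """Number of computational basis states spanned by n qubits."""
    return 2 ** n_qubits


def min_qubits_exceeding(limit: int) -> int:
    """Smallest n such that 2**n > limit."""
    n = 0
    while 2 ** n <= limit:
        n += 1
    return n


print(basis_states(300) > ATOMS_IN_UNIVERSE)    # True
print(round(math.log10(basis_states(300)), 1))  # 90.3
print(min_qubits_exceeding(ATOMS_IN_UNIVERSE))  # 266
```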

D-Wave, which bills itself as the first commercial quantum computer company, has backers that include Amazon.com founder Jeff Bezos and the CIA’s investment arm In-Q-Tel (see “The CIA and Jeff Bezos Bet on Quantum Computing”). It sold its first quantum computing system, the 128-qubit D-Wave One, to the military contractor Lockheed Martin in 2011. Earlier this year it upgraded that machine to a 512-qubit D-Wave Two—reputedly for about $15 million, which might be roughly what the new Quantum Artificial Intelligence Lab paid for its device.

The collaboration between NASA, Google, and the Universities Space Research Association (USRA) aims to use its computer to advance machine learning, a branch of artificial intelligence devoted to developing computers that can improve with experience. Machine learning is a matter of optimizing behavior, a task that may be easier for quantum computers than for conventional machines.

For instance, imagine trying to find the lowest point on a surface covered in hills and valleys. A classical computer might start at a random spot on the surface and look around for a lower spot to explore until it cannot walk downhill anymore. This approach can often get stuck in a local minimum, a valley that is not actually the very lowest point on the surface. On the other hand, quantum computing could make it possible to tunnel through a ridge to see if there is a lower valley beyond it.
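The classical half of that picture fits in a few lines. The sketch runs a greedy walk on a hypothetical one-dimensional landscape with a shallow valley and a deeper one. It only illustrates getting stuck in a local minimum and does not model quantum tunneling.

```python
def landscape(x):
    """Toy 1-D surface: shallow valley at x=2 (height 1), deeper valley at x=8 (height 0)."""
    return min((x - 2) ** 2 + 1.0, (x - 8) ** 2)


def greedy_descent(f, start, lo=0, hi=10):
    """Step to the lower neighbouring grid point until no neighbour is lower."""
    x = start
    while True:
        neighbours = [n for n in (x - 1, x + 1) if lo <= n <= hi]
        best = min(neighbours, key=f)
        if f(best) >= f(x):
            return x  # stuck: no downhill step is available
        x = best


print(greedy_descent(landscape, start=0))   # 2, the shallow local minimum
print(greedy_descent(landscape, start=10))  # 8, the global minimum
print(min(range(0, 11), key=landscape))     # 8, found by checking every point
```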

“Looks like win-win-win to me—Google, NASA, and USRA bring unique skills and an interest in novel applications to the field,” says Seth Lloyd, a quantum-mechanical engineer at MIT. “In my opinion, the focus on factoring and code-breaking for quantum computers has overemphasized the quest for constructing a large-scale quantum computer, while slighting other potentially more useful and equally interesting applications. Quantum machine learning is an example of a smaller-scale application of quantum computing.”

Over the years, many critics have questioned whether D-Wave’s machines are actually quantum computers and whether they are any more powerful than conventional machines. The standard approach toward operating quantum computers, called the gate model, involves arranging qubits in circuits and making them interact with each other in a fixed sequence. In contrast, D-Wave starts off with a set of noninteracting qubits (a collection of superconducting loops kept at their lowest energy state, called the ground state). It then slowly, or “adiabatically,” transforms this system into a set of qubits whose interactions at its ground state represent the correct answer for the specific problem the researchers programmed it to solve.
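D-Wave machines are programmed with an energy function over spins, commonly written as an Ising model with per-qubit fields h_i and couplings J_ij. The answer is the spin configuration with the lowest energy. The sketch below finds that ground state by brute force on a classical machine, purely to make "the ground state represents the answer" concrete. It does not simulate the adiabatic process, and the tiny h and J values are hypothetical.

```python
from itertools import product


def ising_energy(spins, h, J):
    """E(s) = sum_i h_i s_i + sum_(i,j) J_ij s_i s_j, with each s_i in {-1, +1}."""
    return (sum(h[i] * s for i, s in enumerate(spins))
            + sum(c * spins[i] * spins[j] for (i, j), c in J.items()))


def ground_state(h, J):
    """Exhaustively find the lowest-energy spin configuration (feasible only for small n)."""
    return min(product((-1, 1), repeat=len(h)), key=lambda s: ising_energy(s, h, J))


h = [1.0, 0.0, 0.0]                   # hypothetical local fields
J = {(0, 1): -1.0, (1, 2): -1.0}      # hypothetical couplings (negative favours alignment)
best = ground_state(h, J)
print(best, ising_energy(best, h, J))
```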

Many scientists have wondered whether the approach D-Wave used was vulnerable to disturbances that might keep qubits from working properly. But independent researchers recently found that D-Wave’s computers can actually solve certain problems up to 3,600 times faster than classical computers. Before choosing the D-Wave Two, NASA, Google, and USRA put the computer through a series of benchmark and acceptance tests. It passed, in some cases by a giant margin.

USRA will invite researchers across the United States to use the machine. Twenty percent of its computing time will be open to the university community at no cost through a competitive selection process, while the rest of it will be split evenly between NASA and Google. “We’ll be having some of the best and brightest minds in the country working on applications that run on the D-Wave hardware,” Williams says.

Personal comment:

When will we start to speak about "quantum architecture"? "Fuzzy, surreal" architectures "that can seemingly exist in more than one place at once" or that have different and sometimes opposite states at the same time? "Quantum reality" sounds quite like the environment I'm living in every day...

PS. And again, after the Large Hadron Collider, fascinating pictures from scientific devices!

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, researches, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.