Friday, October 20. 2017

Note: More than a year ago, I posted about this move by Alphabet-Google toward becoming city designers... I tried to point out the problems related to a company whose business is to collect data becoming the main investor in public space and common goods (the city is still part of the commons, isn't it?). But of course, this is, again, about big business ("to make the world a better place" ... indeed) and slick ideas.

But it is highly problematic that a company starts investing in public space "for free". We all know what this means now, don't we? It is not needed and not desired.

So where are the "starchitects" now? What do they say? Not much... Where are all the "regular" architects as well? Almost invisible, tricked into the wrong stakes, with -- I'm sorry... -- very few of them even able to identify the problem.

This is not about building a great building for a big brand or taking a conceptual position, not even about "die Gestalt" anymore. It is about everyday life for 66% of Earth's population by 2050 (UN study). It is, in this precise case, about information technologies and mainly information strategies and businesses that materialize into structures of life.

Shouldn't this be a major concern?

Via MIT Technology Review

-----

By Jamie Condliffe

fabric | rblg legend: this hand-drawn image contains all the marketing clichés (green, blue, clean air, bikes, local market, public transportation, autonomous car in a happy village atmosphere...). It can't be further from what it will be.

An 800-acre strip of Toronto's waterfront may show us how cities of the future could be built. Alphabet’s urban innovation team, Sidewalk Labs, has announced a plan to inject urban design and new technologies into the city's quayside to boost "sustainability, affordability, mobility, and economic opportunity."

Huh?

Picture streets filled with robo-taxis, autonomous trash collection, modular buildings, and clean power generation. The only snag may be the humans: as we’ve said in the past, people can do dumb things with smart cities. Perhaps Toronto will be different.

Saturday, July 15. 2017

Note: Summer is coming again, and like each year now, it's time to dig into unread books or articles! "Luckily" and due to other activities, we haven't published much since last Summer. So it won't be too much of a hassle to catch up. Nonetheless, there are almost 2000 entries now on | rblg...

So, I hope you'll enjoy your Summer readings (on the beach... or on the rocks)! On my side, I'll certainly try to do the same and will be back posting in September.

By fabric | ch

-----

As we lack a decent search engine on this blog and as we don't use a "tag cloud" either... but because Summer is certainly one of the best periods of the year to spend time reading and digging into past content and topics:

HERE ARE ALL THE CURRENT UPDATED CATEGORIES TO NAVIGATE ON | RBLG BLOG:

(to be seen below if you're navigating on the blog's html pages or here for rss readers)

Tuesday, July 11. 2017

Note: some early optic art from 1802?

The visual optics plates were realized by scientist Thomas Young at that time, when he was studying light (wave theory of light). It took another 100 (and fifty) years to truly access the art world...

My question would be: what kind of "plates" are getting drawn today? (and this drives us to Leonardo, to art-sciences programs of different sorts, etc.)

Via Wikipedia

-----

"(...). Nevertheless, in the early-19th century Young put forth a number of theoretical reasons supporting the wave theory of light, and he developed two enduring demonstrations to support this viewpoint.

With the ripple tank he demonstrated the idea of interference in the context of water waves. With the Young's interference experiment, or double-slit experiment, he demonstrated interference in the context of light as a wave. (...)"

Monday, June 19. 2017

Note: the following post has been widely reblogged recently. This is the reason why I waited a bit before archiving it in | rblg.

It interests me as a kind of "device" that can handle environmental parameters. In this sense, it undoubtedly has architectural characteristics and could extend itself into an "architectural device". Think here, for example, about the ongoing Jade Eco Park by Philippe Rahm architectes, filled with devices in the competition proposal. Or, closer to home, about our own work, with small "environmental devices" like Perpetual (Tropical) SUNSHINE, Satellite Daylight, etc., architecture as device like Public Platform of Future Past, I-Weather as Deep Space Public Lighting or Heterochrony, or even data tools like Deterritorialized Living.

As a matter of fact, there is a "devices" tag in this blog for this precise reason, to give references for these kinds of architectures that trigger modification in the environment.

Via Science

-----

In Switzerland, a giant new machine is sucking carbon directly from the air

By Christa Marshall, E&E News

The world's first commercial plant for capturing carbon dioxide directly from the air opened yesterday, refueling a debate about whether the technology can truly play a significant role in removing greenhouse gases already in the atmosphere. The Climeworks AG facility near Zurich becomes the first ever to capture CO2 at industrial scale from air and sell it directly to a buyer.

Developers say the plant will capture about 900 tons of CO2 annually — or the approximate level released from 200 cars — and pipe the gas to help grow vegetables.

While the amount of CO2 is a small fraction of what firms and climate advocates hope to trap at large fossil fuel plants, Climeworks says its venture is a first step in their goal to capture 1 percent of the world's global CO2 emissions with similar technology. To do so, there would need to be about 250,000 similar plants, the company says.
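As a rough check of the figures quoted above, here is a minimal Python sketch of the arithmetic. The numbers come from the article; the function names are only illustrative, and the "implied" global total is simply what follows from 250,000 plants being equated with 1 percent, not an independent measurement.

```python
# Figures quoted in the article (not independently verified here).
TONS_PER_PLANT_PER_YEAR = 900      # "about 900 tons of CO2 annually"
CARS_EQUIVALENT = 200              # "released from 200 cars"
PLANTS_FOR_ONE_PERCENT = 250_000   # "about 250,000 similar plants"


def tons_per_car(plant_tons, cars):
    """Annual CO2 per car implied by the plant/car comparison."""
    return plant_tons / cars


def fleet_capture_tons(plants, tons_per_plant):
    """Total annual capture of a fleet of identical plants."""
    return plants * tons_per_plant


def implied_global_emissions_tons(fleet_tons, share_percent):
    """Global annual emissions implied if the fleet covers share_percent of them."""
    return fleet_tons * 100 / share_percent


if __name__ == "__main__":
    per_car = tons_per_car(TONS_PER_PLANT_PER_YEAR, CARS_EQUIVALENT)
    fleet = fleet_capture_tons(PLANTS_FOR_ONE_PERCENT, TONS_PER_PLANT_PER_YEAR)
    implied = implied_global_emissions_tons(fleet, 1)
    print(f"{per_car} t CO2 per car per year")
    print(f"{fleet:,} t CO2 captured per year by the whole fleet")
    print(f"{implied:,.0f} t CO2 per year implied as the global total")
```

With the article's numbers, one plant stands for 4.5 tons per car per year, and the full fleet would capture 225 million tons per year.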

"Highly scalable negative emission technologies are crucial if we are to stay below the 2-degree target [for global temperature rise] of the international community," said Christoph Gebald, co-founder and managing director of Climeworks. The plant sits on top of a waste heat recovery facility that powers the process. Fans push air through a filter system that collects CO2. When the filter is saturated, CO2 is separated at temperatures above 100 degrees Celsius.

The gas is then sent through an underground pipeline to a greenhouse operated by Gebrüder Meier Primanatura AG to help grow vegetables, like tomatoes and cucumbers.

Gebald and Climeworks co-founder Jan Wurzbacher said the CO2 could have a variety of other uses, such as carbonating beverages. They established Climeworks in 2009 after working on air capture during postgraduate studies in Zurich.

The new plant is intended to run as a three-year demonstration project, they said. In the next year, the company said it plans to launch additional commercial ventures, including some that would bury gas underground to achieve negative emissions.

"With the energy and economic data from the plant, we can make reliable calculations for other, larger projects," said Wurzbacher.

Note: with interesting critical comments below concerning the real sustainable effect by Howard Herzog (MIT).

'Sideshow'

There are many critics of air capture technology who say it would be much cheaper to perfect carbon capture directly at fossil fuel plants and keep CO2 out of the air in the first place. Among the skeptics is Massachusetts Institute of Technology senior research engineer Howard Herzog, who called it a "sideshow" during a Washington event earlier this year. He estimated that total system costs for air capture could be as much as $1,000 per ton of CO2, or about 10 times the cost of carbon removal at a fossil fuel plant.

"At that price, it is ridiculous to think about right now. We have so many other ways to do it that are so much cheaper," Herzog said. He did not comment specifically on Climeworks but noted that the cost for air capture is high partly because CO2 is diffuse in the air, while it is more concentrated in the stream from a fossil fuel plant. Climeworks did not immediately release detailed information on its costs but said in a statement that the Swiss Federal Office of Energy would assist in financing. The European Union also provided funding.

In 2015, the National Academies of Sciences, Engineering and Medicine released a report saying climate intervention technologies like air capture were not a substitute for reducing emissions. Last year, two European scientists wrote in the journal Science that air capture and other "negative emissions" technologies are an "unjust gamble," distracting the world from viable climate solutions (Greenwire, Oct. 14, 2016).

Engineers have been toying with the technology for years, and many say it is a needed option to keep temperatures to controllable levels. It's just a matter of lowering costs, supporters say. More than a decade ago, entrepreneur Richard Branson launched the Virgin Earth Challenge and offered $25 million to the builder of a viable air capture design.

Climeworks was a finalist in that competition, as were companies like Carbon Engineering, which is backed by Microsoft Corp. co-founder Bill Gates and is testing air capture at a pilot plant in British Columbia.

-----

...

And let's also mention, while we are here, the similar device ("smog removal" for Chinese cities) made by Studio Roosegaarde, the Smog Free Project.

Friday, May 12. 2017

Note: an amazing climatic device.

For clean energy experimentation here. Would have loved to have that kind of device (and budget ;)) when we put in place Perpetual Tropical Sunshine!

Via MIT Technology Review

-----

By Jamie Condliffe

Don’t lean against the light switch at the Synlight building in Jülich, Germany—if you do, things might get rather hotter than you can cope with.

The new facility is home to what researchers at the German Aerospace Center, known as DLR, have called the “world's largest artificial Sun.” Across a single wall in the building sit a series of Xenon short-arc lamps—the kind used in large cinemas to project movies. But in a huge cinema there would be one lamp. Here, spread across a surface 45 feet high and 52 feet wide, there are 140.

When all those lamps are switched on and focused on the same 20 by 20 centimeter spot, they create light that’s 10,000 times more intense than solar radiation anywhere on Earth. At the center, temperatures reach over 3,000 °C.

The setup is being used to mimic large concentrated solar power plants, which use a field full of adjustable mirrors to focus sunlight into a small incredibly hot area, where it melts salt that is then used to create steam and generate electricity.

Researchers at DLR, though, think that a similar mirror setup could be used to power a high-energy reaction where hydrogen is extracted from water vapor. In theory, that process could supply a constant and affordable source of liquid hydrogen fuel—something that clean energy researchers continue to lust after, because it creates no carbon emissions when burned.

Trouble is, folks at DLR don’t quite yet know how to make it happen. So they built a laboratory rig to allow them to tinker with the process using artificial light instead of reflected sunlight—a setup which, as Gizmodo notes, uses the equivalent of a household's entire year of electricity during just four hours of operation, somewhat belying its green aspirations.

Of course, it’s far from the first project to aim to create hydrogen fuel cheaply: artificial photosynthesis, seawater electrolysis, biomass reactions, and many other projects have all tried—and so far failed—to make it a cost-effective exercise. So now it’s over to the Sun. Or a fake one, for now.

(Read more: DLR, Gizmodo, “World’s Largest Solar Thermal Power Plant Delivers Power for the First Time,” “A Big Leap for an Artificial Leaf,” "A New Source of Hydrogen for Fuel-Cell Vehicles")

Monday, April 10. 2017

Note: this "car action" by James Bridle was largely reposted recently. Here comes an additional one...

Yet, in the context of this blog, it interests us because it underlines the possibilities of physical (or analog) hacks linked to digital devices that can see, touch, listen or produce sound, etc.

And there are several existing examples of "physical bugs" that come to mind: "Echo" recently tried to order cookies after listening to and misunderstanding an American TV ad (it wasn't on Fox News though). A 3d print could be reproduced by listening to and duplicating the sound of its printer, and we can now think about self-driving cars that could be tricked as well, mainly by twisting the elements upon which they base their understanding of the environment.

Interesting error potential...

Via Archinect

-----

By Julia Ingalls

James Bridle entraps a self-driving car in a "magic" salt circle. Image: Still from Vimeo, "Autonomous Trap 001."

As if the challenges of politics, engineering, and weather weren't enough, now self-driving cars face another obstacle: purposeful visual sabotage, in the form of specially painted traffic lines that entice the car in before trapping it in an endless loop. As profiled in Vice, the artist behind "Autonomous Trap 001," James Bridle, is demonstrating an unforeseen hazard of automation: those forces which, for whatever reason, want to mess it all up. Which raises the question: how does one effectively design for an impish sense of humor, or a deadly series of misleading markings?

Tuesday, March 07. 2017

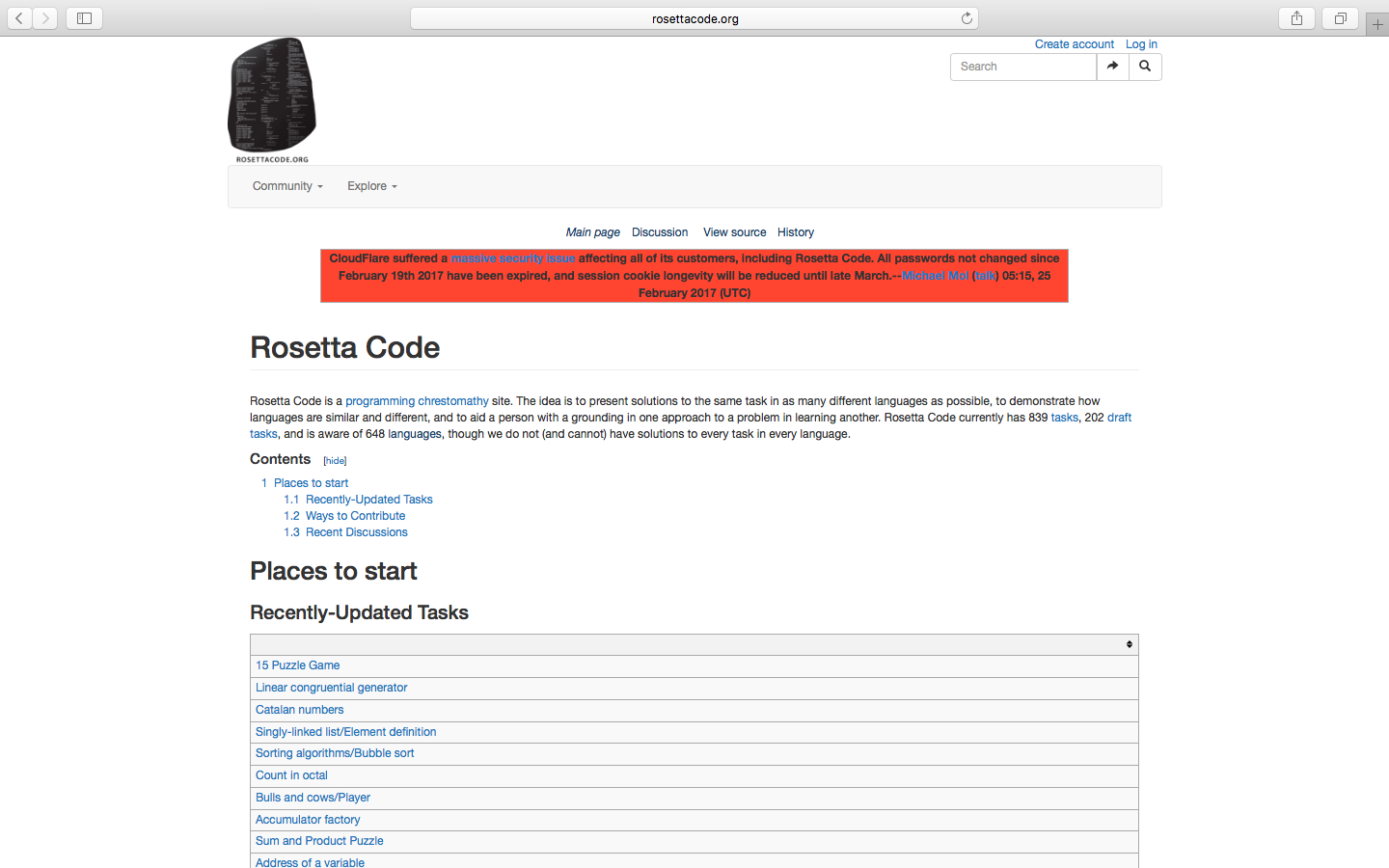

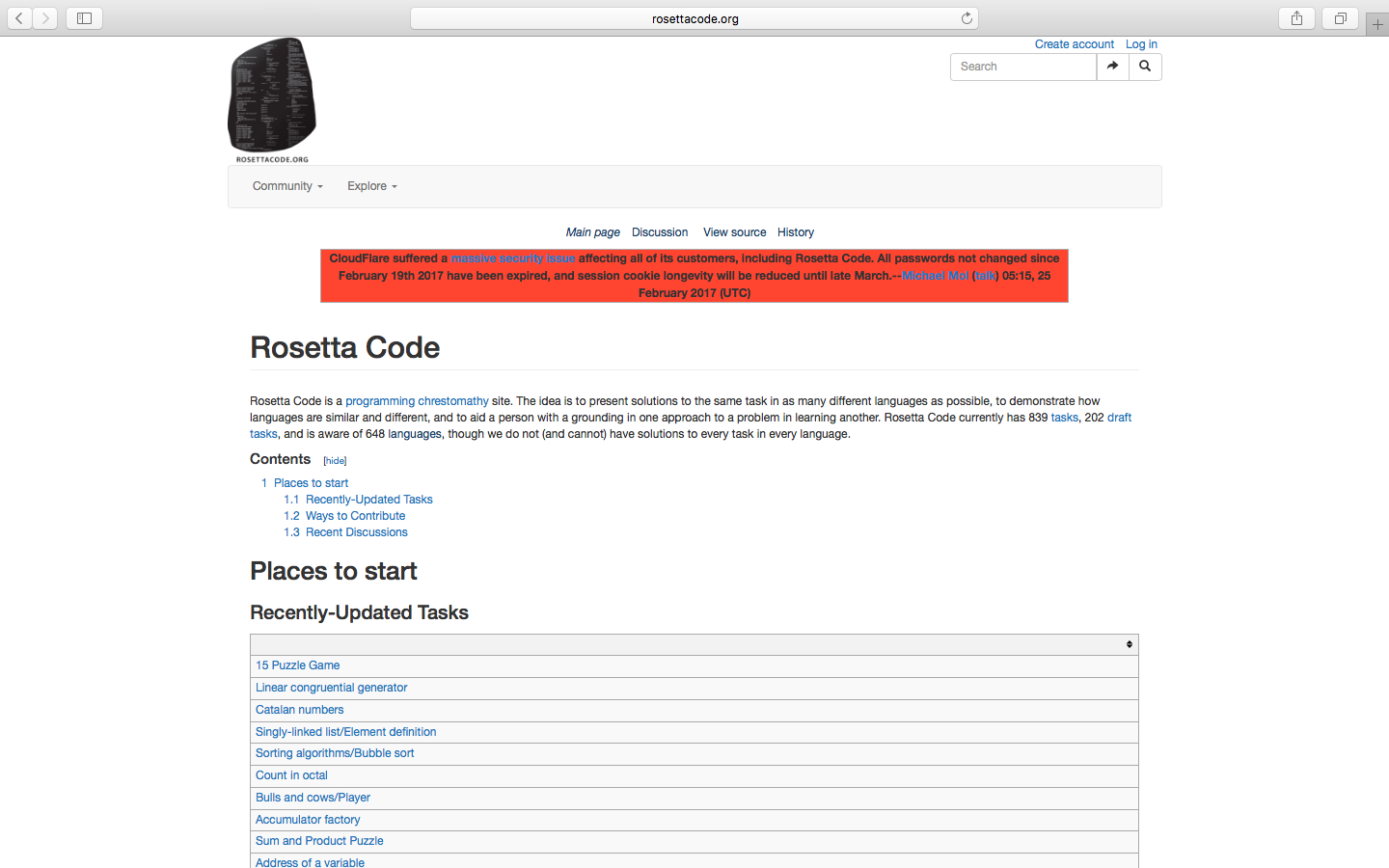

Note: I recently found out about this curious rosettacode.org project that presents brief solutions of the same task in "as many languages as possible" (rem.: programming languages in this case). Hence the name, Rosetta Code, pointing of course to the Rosetta Stone that was key to understanding hieroglyphs.

The project presents itself as a "programming chrestomathy" site and counts 648 programming languages so far! (839 tasks done... and counting). A Babelian (programming) task ... that could possibly help restore old coded pieces.

Via Rosetta Code

-----

(From the site:)

Rosetta Code

Rosetta Code is a programming chrestomathy site. The idea is to present solutions to the same task in as many different languages as possible, to demonstrate how languages are similar and different, and to aid a person with a grounding in one approach to a problem in learning another. Rosetta Code currently has 839 tasks, 202 draft tasks, and is aware of 648 languages, though we do not (and cannot) have solutions to every task in every language.

Monday, February 06. 2017

Note: following the two previous posts about algorithms and bots ("how do they ... ?"), here comes a third one.

Slightly different and not really dedicated to bots per se, but which could be considered as related to "machinic intelligence" nonetheless. This time it concerns techniques and algorithms developed to understand the brain (BRAIN initiative, or in Europe the competing Blue Brain Project).

In a funny reversal, scientists applied techniques and algorithms developed to track human intelligence patterns based on data sets to the computer itself. How does a simple chip "compute information"? And the results are surprising: the computer doesn't understand how the computer "thinks" (or rather works in this case)!

This goes to confirm that the brain is certainly not a computer (made out of flesh)...

Via MIT Technology Review

-----

Neuroscience Can’t Explain How an Atari Works

By Jamie Condliffe

When you apply tools used to analyze the human brain to a computer chip that plays Donkey Kong, can they reveal how the hardware works?

Many research schemes, such as the U.S. government’s BRAIN initiative, are seeking to build huge and detailed data sets that describe how cells and neural circuits are assembled. The hope is that using algorithms to analyze the data will help scientists understand how the brain works.

But those kinds of data sets don’t yet exist. So Eric Jonas of the University of California, Berkeley, and Konrad Kording from the Rehabilitation Institute of Chicago and Northwestern University wondered if they could use their analytical software to work out how a simpler system worked.

They settled on the iconic MOS 6502 microchip, which was found inside the Apple I, the Commodore 64, and the Atari Video Game System. Unlike the brain, this slab of silicon is built by humans and fully understood, down to the last transistor.

The researchers wanted to see how accurately their software could describe its activity. Their idea: have the chip run different games—including Donkey Kong, Space Invaders, and Pitfall, which have already been mastered by some AIs—and capture the behavior of every single transistor as it did so (creating about 1.5 GB per second of data in the process). Then they would turn their analytical tools loose on the data to see if they could explain how the microchip actually works.

For instance, they used algorithms that could probe the structure of the chip—essentially the electronic equivalent of a connectome of the brain—to establish the function of each area. While the analysis could determine that different transistors played different roles, the researchers write in PLOS Computational Biology, the results “still cannot get anywhere near an understanding of the way the processor really works.”

Elsewhere, Jonas and Kording removed a transistor from the microchip to find out what happened to the game it was running—analogous to so-called lesion studies where behavior is compared before and after the removal of part of the brain. While the removal of some transistors stopped the game from running, the analysis was unable to explain why that was the case.
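To make the "lesion" idea more concrete, here is a minimal toy sketch in Python. It is not the researchers' software and has nothing to do with the real MOS 6502 layout: the circuit is a hypothetical one-bit full adder made of six gates (one of them, `spare`, deliberately drives no output), and a "lesion" is assumed to mean forcing a gate's output to 0. The sketch counts, for each lesioned gate, how many input combinations end up with a wrong result.

```python
from itertools import product

OPS = {
    "AND": lambda x, y: x & y,
    "OR": lambda x, y: x | y,
    "XOR": lambda x, y: x ^ y,
}

# Hypothetical toy circuit: gate name -> (operation, input wire, input wire).
# Gates are listed in evaluation order (dicts keep insertion order).
CIRCUIT = {
    "x1": ("XOR", "a", "b"),
    "a1": ("AND", "a", "b"),
    "a2": ("AND", "x1", "cin"),
    "sum": ("XOR", "x1", "cin"),
    "cout": ("OR", "a1", "a2"),
    "spare": ("AND", "a", "cin"),  # drives no output on purpose
}
INPUTS = ("a", "b", "cin")
OUTPUTS = ("sum", "cout")


def run(inputs, lesioned=None):
    """Evaluate the circuit; a lesioned gate is stuck at 0."""
    wires = dict(inputs)
    for name, (op, left, right) in CIRCUIT.items():
        if name == lesioned:
            wires[name] = 0
        else:
            wires[name] = OPS[op](wires[left], wires[right])
    return tuple(wires[out] for out in OUTPUTS)


def lesion_study():
    """For each gate, count the input cases whose outputs change when it is removed."""
    cases = [dict(zip(INPUTS, bits)) for bits in product((0, 1), repeat=len(INPUTS))]
    baseline = [run(case) for case in cases]
    report = {}
    for gate in CIRCUIT:
        report[gate] = sum(
            run(case, lesioned=gate) != expected
            for case, expected in zip(cases, baseline)
        )
    return report


if __name__ == "__main__":
    for gate, broken_cases in lesion_study().items():
        print(f"{gate}: {broken_cases} of 8 input cases change")
```

The report says which gates matter for the behavior (and that `spare` does not), but nothing in it says that the circuit is an adder. On a toy scale, that is the gap the article describes.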

In these and other analyses, the approaches provided interesting results—but not enough detail to confidently describe how the microchip worked. “While some of the results give interesting hints as to what might be going on,” explains Jonas, “the gulf between what constitutes ‘real understanding’ of the processor and what we can discover with these techniques was surprising.”

It’s worth noting that chips and brains are rather different: synapses work differently from logic gates, for instance, and the brain doesn’t distinguish between software and hardware like a computer. Still, the results do, according to the researchers, highlight some considerations for establishing brain understanding from huge, detailed data sets.

First, simply amassing a handful of high-quality data sets of the brains may not be enough for us to make sense of neural processes. Second, without many detailed data sets to analyze just yet, neuroscientists ought to remain aware that their tools may provide results that don’t fully describe the brain’s function.

As for the question of whether neuroscience can explain how an Atari works? At the moment, not really.

(Read more: “Google's AI Masters Space Invaders (But It Still Stinks at Pac-Man),” “Government Seeks High-Fidelity ‘Brain-Computer’ Interface”)

Thursday, January 26. 2017

Note: I just read this piece of news the other day about Echo (Amazon's "robot assistant"), which accidentally attempted to buy a large amount of toys by (always) listening and misunderstanding a phrase said on TV by a presenter (and therefore captured by Echo in the living room and so on)... It is so "stupid" (I mean, we can see how the act of buying linked to these so-called "A.I."s is automated by default configuration), but revealing of the kind of feedback loops that can happen with automated decisions delegated to bots and machines.

Interesting word appearing in this context is, btw, "accidentally".

Via Engadget

-----

By Jon Fingas

Amazon's Echo attempted a TV-fueled shopping spree

It's nothing new for voice-activated devices to behave badly when they misinterpret dialogue -- just ask anyone watching a Microsoft gaming event with a Kinect-equipped Xbox One nearby. However, Amazon's Echo devices are causing more of that chaos than usual. It started when a 6-year-old Dallas girl inadvertently ordered cookies and a dollhouse from Amazon by saying what she wanted. It was a costly goof ($170), but nothing too special by itself. However, the response to that story sent things over the top. When San Diego's CW6 discussed the snafu on a morning TV show, one of the hosts made the mistake of saying that he liked when the girl said "Alexa ordered me a dollhouse." You can probably guess what happened next.

Sure enough, the channel received multiple reports from viewers whose Echo devices tried to order dollhouses when they heard the TV broadcast. It's not clear that any of the purchases went through, but it no doubt caused some panic among people who weren't planning to buy toys that day.

It's easy to avoid this if you're worried: you can require a PIN code to make purchases through the Echo or turn off ordering altogether. You can also change the wake word so that TV personalities won't set off your speaker in the first place. However, this comedy of errors also suggests that there's a lot of work to be done on smart speakers before they're truly trustworthy. They may need to disable purchases by default, for example, and learn to recognize individual voices so that they won't respond to everyone who says the magic words. Until then, you may see repeats in the future.
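The safeguards listed above (purchases off by default, a PIN, recognized voices only) can be read as a small decision rule. Here is a minimal Python sketch of it. Everything in it is hypothetical: `PurchasePolicy`, `authorize` and the speaker labels are illustrative names, not Amazon's settings or API, and the voice recognition itself is assumed to happen elsewhere and to hand over a simple label.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PurchasePolicy:
    """Hypothetical purchase settings for a voice assistant."""
    purchases_enabled: bool = False           # off by default, as the article suggests
    required_pin: Optional[str] = None        # None means no PIN is required
    known_speakers: frozenset = frozenset()   # labels from an assumed voice recognizer


def authorize(policy, speaker, spoken_pin=None):
    """Return (allowed, reason) for a purchase request heard by the device."""
    if not policy.purchases_enabled:
        return False, "purchases disabled"
    if speaker not in policy.known_speakers:
        return False, "unrecognized voice"
    if policy.required_pin is not None and spoken_pin != policy.required_pin:
        return False, "PIN missing or wrong"
    return True, "ok"


if __name__ == "__main__":
    household = PurchasePolicy(
        purchases_enabled=True,
        required_pin="4821",
        known_speakers=frozenset({"parent"}),
    )
    print(authorize(PurchasePolicy(), "tv_host"))     # default policy
    print(authorize(household, "tv_host", "4821"))    # voice from the TV
    print(authorize(household, "parent", "4821"))     # household member with PIN
```

With such a rule, a sentence heard from the TV fails at the first or second check, whatever the wake word is.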

Thursday, January 19. 2017

Note: let's "start" this new (delusional?) year with this short video about the ways "they" see things, and us. They? The "machines" of course, the bots, the algorithms...

An interesting reassembled trailer that was posted by Matthew Plummer-Fernandez on his Tumblr #algopop, which documents the "appearance of algorithms in popular culture". Matthew was with us back in 2014, to collaborate on a research project at ECAL that will soon end, btw, and that worked around this idea of bots in design.

Will this technological future become "delusional" as well, if we don't care enough? As essayist Eric Sadin points out in his recent book, "La silicolonisation du monde" (in French only at this time)?

Possibly... It is without doubt up to each of us (to act), also with regard to our everyday life in common with our fellow human beings!

Via #algopop

-----

An algorithm watching a movie trailer by Støj

Everything but the detected objects is removed from the trailer of The Wolf of Wall Street. The software is powered by Yolo object-detection, which has been used for similar experiments.
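The removal step described above can be sketched in a few lines of Python. This is not Støj's code and it does not call Yolo: the detector is assumed to have already returned bounding boxes, and the frame is a plain grid of pixel values. The function name `keep_detected` and the `(x0, y0, x1, y1)` box format are assumptions made for the example.

```python
def keep_detected(frame, boxes, fill=0):
    """Blank every pixel of a frame except those inside detected boxes.

    frame: list of rows, each a list of pixel values.
    boxes: iterable of (x0, y0, x1, y1), with x1 and y1 exclusive.
           Assumed to come from an object detector run beforehand.
    """
    height = len(frame)
    width = len(frame[0]) if frame else 0
    result = [[fill] * width for _ in range(height)]
    for x0, y0, x1, y1 in boxes:
        # Clamp each box to the frame so partial detections are still copied.
        for y in range(max(0, y0), min(height, y1)):
            for x in range(max(0, x0), min(width, x1)):
                result[y][x] = frame[y][x]
    return result


if __name__ == "__main__":
    # A 4x4 test frame with pixel values 1..16 and one hypothetical detection.
    frame = [[row * 4 + col + 1 for col in range(4)] for row in range(4)]
    for line in keep_detected(frame, [(1, 1, 3, 3)]):
        print(line)
```

Applied frame by frame with the boxes of each frame, this leaves only the objects the algorithm "saw" on an empty background.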
