Tuesday, September 03. 2013

We Need a Fixer (Not Just a Maker) Movement

Via Treehugger

-----

Wired has an excellent article, We Need a Fixer (Not Just a Maker) Movement, that focuses on how repairing electronics is more than simply good for the environment; it's good for our brains and good for our souls:

Ultimately, the real challenge here isn’t technological. It’s cultural. Can fixing be made sexy? Can we make it delightful to preserve things?

I think so. Recently my kids were hankering for a laptop. So we pulled a five-year-old Dell from a closet. After consulting some YouTube how-tos and the manual (Dell circulates those freely), we deduced that we needed a new heat sink, keyboard, and DVD drive. When the parts arrived, we opened the case and dove in.

And you know what? It was shockingly easy. In three hours we had the laptop running perfectly. We even removed the syphilitic mess of Windows Vista and installed Ubuntu. For $90 in parts, the kids have a laptop that runs like new. Best of all, we felt like we had unlocked superpowers. We demystified the machine. We became puzzle solvers and fought against the waves of trash. It was so much fun that now we’re taking in our neighbors’ busted laptops and fixing them too.

We started off upgrading a machine — and wound up upgrading ourselves.

Read the full article here -- it is sure to inspire you. And just in case you need more inspiration, here is what we at TreeHugger have had to say about the importance of repairing over replacing, and DIYing electronics:

Why Gadget Repairability Is So Damn Important

If you can't open it, you don't own it; and what's worse is that if you can't or won't open it, then you're not fully grasping the actual impact of and potential for that device. What is more, your creativity and inventiveness are tossed aside and you are told what you will want, when you will want it.

The DIY Ethic and Creating Technology Independence

The DIY trend equates to independence from manufacturers, equates to freedom of creativity, equates to environmental responsibility on a larger scale. Learning to build, repair, upgrade and modify gadgets is at the foundation of this independence.

How DIY Electronics Benefit The Environment

"DIYing both directly and indirectly benefits the planet; not only are resources saved by reusing parts and rebuilding gadgets, but also tasks accomplished with the newly created devices can be of use for everyone from scientists to the average home owner." Explore the benefits from citizen science to minimizing use of rare metals.

The DIY Ethic and Modern Technology: Why taking ownership of your electronics is essential

When it comes to fixing, building, hacking and modifying electronic devices and systems to our own advantage, we may have strayed; but a growing community of gadgeteers is helping to bring back not only the notion that we can indeed do our own thing but that we should.

How The DIY Electronics Trend Is Empowering People, Communities, Businesses

Many DIYers are breaking through those "warranty voided" stickers, digging through boxes of components, coming up with plans and prototypes for imaginative ideas for solving problems, and we're seeing amazing results in empowering individuals, communities and businesses to pursue their concepts.

Wednesday, August 07. 2013

Massive NSA data center will use 1.7 million gallons of water a day

Via Treehugger

-----

By Megan Treacy

Undeniably one of the biggest stories of the year has been the leak about the NSA PRISM program, which has been monitoring American citizens' communications. Many people have been appalled by this revelation, but it turns out there is an environmentally appalling part of this spying program too. More details have been released about NSA's new Intelligence Community Comprehensive National Cybersecurity Initiative Data Center, otherwise known as that massive data center being built by the agency in Bluffdale, Utah.

Turns out that collecting tons of information in the form of phone calls, emails and web searches is an energy- and water-hungry business. According to reports, the one-million-square-foot facility will house 100,000 square feet of data-storing servers and will use 1.7 million gallons of water per day to keep those servers cool.

The data center will account for one percent of all water use in the area and the city of Bluffdale is looking for additional water sources for when the facility is finished in September.

It won't be an energy-sipper either, but that was obvious from the size of the place. The facility will require 65 megawatts of power, about as much as 65,000 homes use. It will have its own power substation and back-up diesel power generators.
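The homes comparison implies an average draw of 1 kW per household. The arithmetic behind these figures fits in a few lines; this sketch assumes the 65 MW load runs around the clock, which the report does not actually say (it may be a peak figure).

```python
# Back-of-envelope arithmetic for the Bluffdale figures quoted above.
# Assumption: the 65 MW load is drawn continuously, 24 hours a day, all year.
LITERS_PER_US_GALLON = 3.785411784  # exact by definition
HOURS_PER_YEAR = 24 * 365

facility_power_mw = 65.0
homes_equivalent = 65_000
water_gallons_per_day = 1.7e6

# Average power per home implied by "65 MW is about what 65,000 homes use"
kw_per_home = facility_power_mw * 1000 / homes_equivalent

# Annual energy if the load really ran constantly
annual_energy_mwh = facility_power_mw * HOURS_PER_YEAR

# Daily cooling water converted to liters
water_liters_per_day = water_gallons_per_day * LITERS_PER_US_GALLON

print(f"Implied average draw per home: {kw_per_home:.1f} kW")
print(f"Annual energy at constant load: {annual_energy_mwh:,.0f} MWh")
print(f"Cooling water: {water_liters_per_day / 1e6:.2f} million liters/day")
```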

The crazy thing is that this gigantic data center isn't quite enough. The NSA is also building another data center in Fort Meade, Maryland that will be two-thirds the size of the mega center, but that's still pretty darn big.

Thursday, July 11. 2013

What Ant Colony Networks Can Tell Us About What's Next for Digital Networks

Via Next Nature

-----

Ever notice how ant colonies so successfully explore and exploit resources in the world … to find food at 4th of July picnics, for example? You may find it annoying. But as an ecologist who studies ants and collective behavior, I think it’s intriguing — especially the fact that it’s all done without any central control.

What’s especially remarkable: the close parallels between ant colonies’ networks and human-engineered ones. One example is “Anternet”, where we, a group of researchers at Stanford, found that the algorithm desert ants use to regulate foraging is like the Transmission Control Protocol (TCP) used to regulate data traffic on the internet. Both ant and human networks use positive feedback: either from acknowledgements that trigger the transmission of the next data packet, or from food-laden returning foragers that trigger the exit of another outgoing forager.
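The ant half of the analogy can be sketched as a toy simulation. This is a hypothetical model for illustration, not the Stanford group's: every trip lasts a fixed number of time steps, a forager finds food with probability `food_prob`, and only a food-laden return sends another forager out, the way an acknowledgement releases the next packet.

```python
import random


def simulate_foraging(food_prob, steps=200, initial_foragers=5, trip_time=3, seed=0):
    """Count forager departures when only food-laden returns trigger new trips.

    Hypothetical toy model: every trip lasts trip_time steps and succeeds with
    probability food_prob; an unsuccessful forager comes home and stays there.
    """
    rng = random.Random(seed)
    due_back = [0] * (steps + trip_time)  # due_back[t]: foragers returning at step t
    due_back[trip_time] = initial_foragers
    departures = initial_foragers
    for t in range(steps):
        successes = sum(rng.random() < food_prob for _ in range(due_back[t]))
        # Positive feedback: each successful return releases exactly one new
        # forager, much as each TCP acknowledgement releases the next packet.
        due_back[t + trip_time] += successes
        departures += successes
    return departures


for p in (1.0, 0.9, 0.5):
    print(f"food_prob={p}: {simulate_foraging(p)} departures in 200 steps")
```

With plentiful food the flow of foragers sustains itself; as food gets scarcer, fewer returns trigger fewer departures and foraging winds down, without any ant deciding anything for the colony.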

This research led some to marvel at the ingenuity of ants, able to invent systems familiar to us: wow, ants have been using internet algorithms for millions of years!

But insect behavior mimicking human networks (another example being the ant-like solutions to the traveling salesman problem provided by the ant colony optimization algorithm) is actually not what’s most interesting about ant networks. What’s far more interesting are the parallels in the other direction: What have the ants worked out that we humans haven’t thought of yet?
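For readers who haven't met it, ant colony optimization works roughly like this: simulated ants build tours city by city, preferring short edges and edges carrying more pheromone; shorter tours deposit more pheromone, and old trails evaporate. Below is a compact version of the classic Ant System; the parameter values are arbitrary choices for illustration, and it expects at least two distinct points.

```python
import math
import random


def tour_length(tour, dist):
    """Total length of a closed tour that returns to its starting city."""
    return sum(dist[tour[i]][tour[(i + 1) % len(tour)]] for i in range(len(tour)))


def ant_colony_tsp(points, n_ants=10, n_iters=50, alpha=1.0, beta=3.0,
                   evaporation=0.5, deposit=1.0, seed=0):
    """Classic Ant System for the traveling salesman problem."""
    rng = random.Random(seed)
    n = len(points)
    dist = [[math.dist(a, b) for b in points] for a in points]
    pheromone = [[1.0] * n for _ in range(n)]
    best_tour, best_len = None, math.inf

    for _ in range(n_iters):
        tours = []
        for _ in range(n_ants):
            tour = [rng.randrange(n)]
            unvisited = set(range(n)) - {tour[0]}
            while unvisited:
                here = tour[-1]
                choices = sorted(unvisited)
                # Attractiveness = pheromone^alpha * (1 / distance)^beta
                weights = [pheromone[here][j] ** alpha * (1.0 / dist[here][j]) ** beta
                           for j in choices]
                nxt = rng.choices(choices, weights=weights)[0]
                tour.append(nxt)
                unvisited.remove(nxt)
            tours.append(tour)

        # Evaporation: old trails fade so the colony can forget poor routes
        for row in pheromone:
            for j in range(n):
                row[j] *= 1.0 - evaporation

        # Deposit: shorter tours lay more pheromone on every edge they use
        for tour in tours:
            length = tour_length(tour, dist)
            if length < best_len:
                best_tour, best_len = tour, length
            for k in range(n):
                a, b = tour[k], tour[(k + 1) % n]
                pheromone[a][b] += deposit / length
                pheromone[b][a] += deposit / length

    return best_tour, best_len
```

On the four corners of a unit square, `ant_colony_tsp([(0, 0), (1, 0), (1, 1), (0, 1)])` should settle on the perimeter tour of length 4 rather than a crossing one.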

During the 130 million years or so that ants have been around, evolution has tuned ant colony algorithms to deal with the variability and constraints set by specific environments.

Ant colonies use dynamic networks of brief interactions to adjust to changing conditions. No individual ant knows what’s going on. Each ant just keeps track of its recent experience meeting other ants, either in one-on-one encounters when ants touch antennae, or when an ant encounters a chemical deposited by another.

Such networks have made possible the phenomenal diversity and abundance of more than 11,000 ant species in every conceivable habitat on Earth. So Anternet, and other ant networks, have a lot to teach us. Ant protocols may suggest ways to build our own information networks…

Dealing with High Operating Costs

Harvester ant colonies in the desert must spend water to get water. The ants lose water when foraging in the hot sun, and get their water by metabolizing it out of the seeds that they collect. Since colonies store seeds, their system of positive feedback doesn’t waste foraging effort when water costs are high — even if it means they leave some seeds “on the table” (or rather, ground) to be obtained on another, more humid day.

In this way, the Anternet allows the colony to deal with high operating costs. On the internet, TCP likewise keeps the system from sending data out when there’s no bandwidth available. Effort would be wasted if the message were lost, so it’s not worth sending it out unless it’s likely to reach its destination.
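TCP's restraint comes mainly from its congestion control. A sender grows its congestion window slowly while acknowledgements keep arriving and halves it when a loss signals congestion: additive increase, multiplicative decrease (AIMD). This stripped-down sketch ignores slow start, timeouts and everything else a real TCP stack does, and the loss pattern is made up.

```python
def aimd(loss_events, initial_window=1.0, increase=1.0, decrease_factor=0.5,
         min_window=1.0):
    """Return the congestion window after each round trip.

    loss_events holds one boolean per round trip; True means a packet loss
    was detected in that round (a hypothetical input for illustration).
    """
    window = initial_window
    history = []
    for lost in loss_events:
        if lost:
            # Multiplicative decrease: back off sharply when the network is congested
            window = max(min_window, window * decrease_factor)
        else:
            # Additive increase: probe gently for spare capacity
            window += increase
        history.append(window)
    return history


print(aimd([False, False, False, True, False, False]))  # → [2.0, 3.0, 4.0, 2.0, 3.0, 4.0]
```

Like the harvester ants on a hot day, the sender cuts back as soon as sending looks costly and only cautiously ramps up again.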

More recently, I’ve shown how natural selection is currently optimizing the Anternet algorithm. I’ve been following a population of 300 harvester ant colonies for more than 25 years, and by using genetic fingerprinting we figured out which colonies had more offspring colonies.

Colonies store food inside the nest as a survival tactic. On especially hot days, colonies that are likely to lie low instead of collecting more food are the ones that have more offspring colonies over their 25-year lifetimes. Restraint therefore emerges as the best strategy at the colony level. Long-lived colonies in the desert regulate their behavior not to maximize or optimize food intake, but instead to keep going without wasting resources.

In the face of scarcity, the algorithm that regulates the flow of ants is evolving toward minimizing operating costs rather than immediate accumulation. This is a sustainable strategy for any system, like a desert ant colony or the mobile internet, where it’s essential to achieve long-term reliability while avoiding wasted effort.

Scaling Up from Small to Large Systems

What happens when a system scales up? Like human-engineered systems, ant systems must be robust enough to scale up as the colony grows, and they have to be able to tolerate the failure of individual components.

Since large systems allow for some messiness, the ideal solutions utilize the contributions of each additional ant in such a way that the benefit of an extra worker outweighs the cost of producing and feeding one.

The tools that serve large colonies well, therefore, are redundancy and minimal information. Enormous ant colonies function using very simple interactions among nameless ants without any address.

In engineered systems we too are searching for ways to ensure reliable outcomes, as our networks scale, by using cheap operations that make use of randomness. Elegant top-down designs are appealing, but the robustness of ant algorithms shows that tolerating imperfection sometimes leads to better solutions.

Optimizing for First-Mover Advantage

The diversity of ant algorithms shows how evolution has responded to different environmental constraints. When operating costs are low and colonies seek an ephemeral delicacy — like flower nectar or watermelon rinds — searching speed is essential if the colony is to capture the prize before it dries up or is taken away.

Since ant colonies compete with each other and many are out looking for the same food, the first colony to arrive might have the best chance of holding on to the food and keeping the other ants away.

How does a colony achieve this first-mover advantage without any central control? The challenge in this situation is for the colony to manage the flow of ants so it has an ant almost everywhere almost all the time. The goal is to increase the likelihood that some ant will be close enough to encounter whatever happens to show up.

One strategy ants use (familiar from our own data networks) is to set up a circuit of permanent highways — like a network of cell phone towers — from which ants search locally. The invasive Argentine ants are experts at this; they’ll find any crumb that lands on your kitchen counter.

The Argentine ants also adjust their paths, shifting from a nearly random walk when there are lots of ants around, leading each ant to search thoroughly in a small area, to a straighter path when there are few ants around, thus allowing the whole group to cover more ground.
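This density-dependent search can be sketched as a correlated random walk whose turning noise grows with the local density of ants. The linear mapping from density to turning angle is a hypothetical choice; the article only says that paths straighten when few ants are around.

```python
import math
import random


def net_displacement(local_density, steps=500, seed=0):
    """Distance from the start after a correlated random walk of unit steps.

    local_density runs from 0 (almost no other ants) to 1 (crowded). The
    mapping to turning noise below is a hypothetical choice for illustration.
    """
    rng = random.Random(seed)
    turn_sd = 0.1 + 2.0 * local_density  # std. dev. of each turn, in radians
    heading = x = y = 0.0
    for _ in range(steps):
        # Large turn_sd gives a tortuous path; small turn_sd a nearly straight one
        heading += rng.gauss(0.0, turn_sd)
        x += math.cos(heading)
        y += math.sin(heading)
    return math.hypot(x, y)


def mean_displacement(local_density, trials=20):
    """Average net displacement over several independent walks."""
    return sum(net_displacement(local_density, seed=s) for s in range(trials)) / trials


print(f"sparse colony:  {mean_displacement(0.0):.0f} units from the start")
print(f"crowded colony: {mean_displacement(1.0):.0f} units from the start")
```

Averaged over a few runs, the walker in a sparse colony ends up many times farther from its start than the one in a crowd, which instead combs its own small patch thoroughly.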

Like a distributed demand-response network, the aggregated responses of individual ants to local conditions generate the outcome for the whole system, without any centralized direction or control.

Addressing Security Breaches and Disasters

In the tropics, where hundreds of ant species are packed close together and competing for resources, colonies must deal with security problems. This has led to the evolution of security protocols that use local information for intrusion detection and for response.

One colony might use (“borrow” or “steal”, as humans would say) information from another, such as chemical trails or the density of ants, to find and use resources.

Rather than attempting to prevent incursions completely, however, ants create loose, stochastic identity systems in which one species regulates its behavior in response to the level of incursion from another.

There are obvious parallels with computer security. It’s becoming clear (consider recent events!) that we too will need to implement local evaluation and repair of intrusions, tolerating some level of imperfection. The ants have found ways to let their systems respond to each other’s incursions, without attempting to set up a central authority that regulates hacks.

Some of our networks seem to be moving toward using methods deployed by the ants.

Take the disaster recovery protocols of ants that forage in trees where branches can break, so the threat of rupture is high. A ring network, with signals or ants flowing in both directions, allows for rapid recovery here; after a break in the flow in one direction, the flow in the other direction can re-establish a link.

Similarly, early fiber-optic cable networks were often disrupted by farm machinery and other digging: one break could bring down the system because it would isolate every load beyond it. Engineers soon discovered, as the ants already had, that ring topologies make networks easier to repair.
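The difference is easy to check with a graph search. This sketch uses an arbitrary eight-node network and a single hypothetical cut to compare a simple chain with a ring; it only tests connectivity, not how real rings switch traffic over to the other direction.

```python
from collections import deque


def reachable(n_nodes, links, source=0):
    """Return the set of nodes reachable from `source` over undirected links."""
    neighbors = {i: set() for i in range(n_nodes)}
    for a, b in links:
        neighbors[a].add(b)
        neighbors[b].add(a)
    seen = {source}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for nxt in neighbors[node]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def chain(n):
    """Nodes strung in a line: 0-1-2-...-(n-1)."""
    return [(i, i + 1) for i in range(n - 1)]


def ring(n):
    """The same line closed back on itself, so traffic can flow either way."""
    return chain(n) + [(n - 1, 0)]


n = 8
cut = (3, 4)  # hypothetical break, e.g. a plow severing one span
chain_after = [link for link in chain(n) if link != cut]
ring_after = [link for link in ring(n) if link != cut]
print(len(reachable(n, chain_after)))  # → 4 (nodes past the break are isolated)
print(len(reachable(n, ring_after)))   # → 8 (the other direction re-establishes the link)
```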

***

Our networks will continue to change and evolve. By examining and comparing the algorithms used by ants in the desert, in the tropical forest, and the invasive species that visit our kitchens, it’s already obvious that the ants have come up with new solutions that can teach us something about how we should engineer our systems.

Using simple interactions like the brief touch of antennae — not unlike our fleeting status updates in ephemeral social networks — colonies make networks that respond to a world that constantly changes, with resources that show up in patches and then disappear. These networks are easy to repair and can grow or shrink.

Ant colonies have been used throughout history as models of industry, obedience, and wisdom. Although the ants themselves can be indolent, inconsiderate of others, and downright stupid, we have much to learn from ant colony protocols. The ants have evolved ways of working together that we haven’t yet dreamed of.

-

Story via Wired. Image Shutterstock.

Personal comment:

Not only do ants build amazing architectures, they have also been using algorithms and networks for millennia to achieve quite sustainable results and behaviors. Should we learn from them, as the article suggests?

Thursday, July 04. 2013

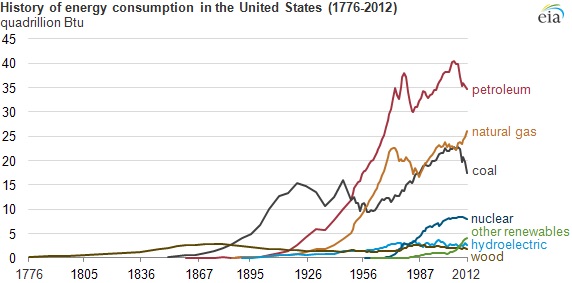

How Energy Consumption Has Changed Since 1776

-----

The U.S. Energy Information Administration reviews big changes in energy use since the Declaration of Independence.

By Kevin Bullis

Energy independence: Since the colonies parted from Britain there have been big changes in energy use.

It’s easy to forget just how recently we started using fossil fuels in large amounts. In honor of the July 4th holiday, the U.S. Energy Information Administration has produced a chart showing how rapidly the country shifted from using wood almost exclusively as an energy source to using first coal, then petroleum and natural gas.

Here are a couple of notable things about the chart. The first is the obvious staying power of coal (see “The Enduring Technology of Coal”). Coal wasn’t used in significant amounts until the mid-1800s, but then its use increases quickly (and with it, overall energy consumption increases about fivefold). When oil is introduced, it seems to displace coal, leading to a sharp drop in coal consumption. But coal use quickly recovers. A similar drop occurs when natural gas consumption starts to rise, but within a couple of decades coal use is growing again. Near the end of the chart coal use drops off again as natural gas production surges, a result of fracking technology. What the chart doesn’t show is that the EIA expects coal consumption to go up again this year. The stuff is cheap, and we seem to keep finding ways to use it.

President Obama recently praised the reduction in carbon dioxide emissions that the surge of natural gas production enabled (see “A Drop in U.S. CO2 Emissions” and “Obama Orders EPA to Regulate Power Plants in Wide-Ranging Climate Plan”). Given the resilience of coal, though, it’s hard to be optimistic that the decreased rate of emissions will persist, absent regulations that prevent it.

One other interesting bit: renewables such as wind and solar power now produce more energy than was consumed in the mid-1800s. So if we want a society that runs completely on these renewables, all we have to do is reduce the population to what it was then, only use as much energy as they did, stop flying airplanes (big ones require oil), stop industrial processes that require energy in forms other than electricity, and only drive electric vehicles or ride horses. I may have left something out.

The good news is renewables are increasing fast. But if history is a guide, the introduction of a new energy source doesn’t cause the other sources of energy to decrease, at least not in the long run. Even wood consumption is close to what it was in the 1800s, even though wood is less convenient in many ways than fossil fuels. Introducing new sources of energy seems to allow overall energy consumption to increase. Absent regulations or political crises that cause the cost of fossil fuels to rise, as technological advances make renewable energy cheaper we’ll use it more, but we’ll likely keep using more of the other sources of energy, too. Indeed, the EIA predicts that in 2040, 75% of U.S. energy will still come from oil, coal, and natural gas.

Thursday, May 02. 2013

With Florida Project, the Smart Grid Has Arrived

-----

Smart grid technology has been implemented in many places, but Florida’s new deployment is the first full-scale system.

By Kevin Bullis on May 2, 2013

Smart power: Andrew Brown, an engineer at Florida Power & Light, monitors equipment in one of the utility’s smart grid diagnostic centers.

The first comprehensive, large-scale smart grid is now operating. The $800 million project, built in Florida, has made power outages shorter and less frequent, and helped some customers save money, according to the utility that operates it.

Smart grids should be far more resilient than conventional grids, which is important for surviving storms, and make it easier to install more intermittent sources of energy like solar power (see “China Tests a Small Smart Electric Grid” and “On the Smart Grid, a Watt Saved Is a Watt Earned”). The Recovery Act of 2009 gave a vital boost to the development of smart grid technology, and the Florida grid was built with $200 million from the U.S. Department of Energy made available through the Recovery Act.

Dozens of utilities are building smart grids—or at least installing some smart grid components, but no one had put together all of the pieces at a large scale. Florida Power & Light’s project incorporates a wide variety of devices for monitoring and controlling every aspect of the grid, not just, say, smart meters in people’s homes.

“What is different is the breadth of what FPL’s done,” says Eric Dresselhuys, executive vice president of global development at Silver Spring Networks, a company that’s setting up smart grids around the world, and installed the network infrastructure for Florida Power & Light (see “Headed into an IPO, Smart Grid Company Struggles for Profit”).

Many utilities are installing smart meters—Pacific Gas & Electric in California has installed twice as many as FPL, for example. But while these are important, the flexibility and resilience that the smart grid promises depends on networking those together with thousands of sensors at key points in the grid— substations, transformers, local distribution lines, and high voltage transmission lines. (A project in Houston is similar in scope, but involves half as many customers, and covers somewhat less of the grid.)

In FPL’s system, devices at all of these places are networked—data jumps from device to device until it reaches a router that sends it back to the utility—and that makes it possible to sense problems before they cause an outage, and to limit the extent and duration of outages that still occur (see “The Challenges of Big Data on the Smart Grid”). The project involved 4.5 million smart meters and over 10,000 other devices on the grid.
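Hop-by-hop forwarding of this kind can be sketched as a breadth-first search outward from the routers: each device learns how many hops away the nearest router is and which neighbor to hand its data to. The topology and device names are hypothetical, and real mesh protocols typically also take link quality into account rather than counting hops alone.

```python
from collections import deque


def routes_to_routers(links, routers):
    """Multi-source breadth-first search from the routers outward.

    links maps each device to the devices it can reach directly (assumed symmetric).
    Returns {device: (hops, next_hop)}; routers map to (0, None). Devices with
    no path to any router are simply absent from the result.
    """
    routes = {r: (0, None) for r in routers}
    queue = deque(routers)
    while queue:
        node = queue.popleft()
        hops = routes[node][0]
        for neighbor in links.get(node, ()):
            if neighbor not in routes:
                # The neighbor reaches a router by forwarding its data to `node`
                routes[neighbor] = (hops + 1, node)
                queue.append(neighbor)
    return routes


def path_to_router(routes, device):
    """Follow next hops from a device until a router is reached."""
    path = [device]
    while routes[path[-1]][1] is not None:
        path.append(routes[path[-1]][1])
    return path


mesh = {  # hypothetical neighborhood: meters and sensors relay for one another
    "meter_a": ["meter_b"],
    "meter_b": ["meter_a", "meter_c", "transformer_1"],
    "meter_c": ["meter_b", "router_1"],
    "transformer_1": ["meter_b", "router_1"],
    "router_1": ["meter_c", "transformer_1"],
}
routes = routes_to_routers(mesh, ["router_1"])
print(path_to_router(routes, "meter_a"))  # → ['meter_a', 'meter_b', 'meter_c', 'router_1']
```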

The project was completed just last week, so data about the impact of the whole system isn’t available yet. But parts of the smart grid have been operating for a year or more, and there are examples of improved operation. Customers can track their energy usage by the hour using a website that organizes data from smart meters. This helped one customer identify a problem with his air conditioner, says Brian Olnick, vice president of smart grid solutions at Florida Power & Light, when he saw a jump in electricity consumption compared to the previous year in similar weather.

The meters have also cut the duration of power outages. Often power outages are caused by problems within a home, like a tripped circuit breaker. Instead of dispatching a crew to investigate, which could take hours, it is possible to resolve the issue remotely. That happened 42,000 times last year, reducing the duration of outages by about two hours in each case, Olnick says.

The utility also installed sensors that can continually monitor gases produced by transformers to “determine whether the transformer is healthy, is becoming sick, or is about to experience an outage,” says Mark Hura, global smart grid commercial leader at GE, which makes the sensor.

Ordinarily, utilities only check large transformers once every six months or less, he says. The process involves taking an oil sample and sending it to the lab. In one case this year, the new sensor system identified an ailing transformer in time to prevent a power outage that could have affected 45,000 people. Similar devices allowed the utility to identify 400 ailing neighborhood-level transformers before they failed.

Smart grid technology is having an impact elsewhere. After Hurricane Sandy, sensors helped utility workers in some areas restore power faster than in others. One problem smart grids address is nested power outages—when smaller problems are masked by an outage that hits a large area. In a conventional system, after utility workers fix the larger problem, it can take hours for them to realize that a downed line has cut off power to a small area. With the smart grid, utility workers can ping sensors at smart meters or power lines before they leave an area, identifying these smaller outages.
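The check described above (ping the sensors before the crew leaves) boils down to a filter over the meters downstream of the repair. In this hedged sketch the ping results, the meter-to-transformer mapping and all identifiers are hypothetical; a real utility system would query its metering infrastructure rather than a Python dict. Grouping the silent meters by transformer hints at where the small, masked fault sits.

```python
from collections import defaultdict


def nested_outages(ping_results, meter_to_transformer):
    """Group meters that still report no power by the transformer serving them.

    ping_results: {meter_id: True if the meter answered with power on}. A meter
    missing from ping_results (no answer at all) is treated as dark.
    meter_to_transformer: {meter_id: transformer_id} for the repaired area.
    """
    dark = defaultdict(list)
    for meter, transformer in meter_to_transformer.items():
        if not ping_results.get(meter, False):
            dark[transformer].append(meter)
    return {t: sorted(meters) for t, meters in dark.items()}


# Hypothetical ping sweep after the crew fixes the main fault
served_by = {"m1": "T1", "m2": "T1", "m3": "T2", "m4": "T2"}
pings = {"m1": True, "m2": True, "m3": False}  # m4 did not answer at all
print(nested_outages(pings, served_by))  # → {'T2': ['m3', 'm4']}
```

Both dark meters share transformer T2, which suggests the crew should look at that branch before leaving the area.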

And smart grid devices are helping utilities identify problems that could otherwise go misdiagnosed for years. In Chicago, for example, new voltage monitors indicated that a neighborhood was getting the wrong voltage, a problem that could wear out appliances. The fix took a few minutes.

As more renewable energy is installed, the smart grid will make it easier for utilities to keep the lights on. Without local sensors, it’s difficult for them to know how much power is coming from solar panels—or how much backup they need to have available in case clouds roll in and that power drops.

But whether the nearly $1 billion investment in smart grid infrastructure will pay for itself remains to be seen. The DOE is preparing reports on the impact of the technology to be published this year and next. Smart grid technology is also raising questions about security, since the networks could offer hackers new targets (see “Hacking the Smart Grid”).

Personal comment:

This is good news! As many countries are now looking to build smart grids, let's hope that the first outcomes of this implementation will be positive.

Smart grids try to do for energy what the internet did for information: potentially everybody could produce clean energy and "share it" through the grid (when they have a surplus, or at certain times). They also make it possible to monitor usage, for good and for bad. We'll certainly see some sort of Google of the energy sector very soon. This might have huge impacts, especially for renewable energy. The big problem with the "clean" approach remains how to efficiently store excess energy when it is not needed, so that it can be used later when it is.

In his latest book, "The Third Industrial Revolution", Jeremy Rifkin describes what a more horizontal society, based on both information networks and energy networks, could look like. Though it is certainly simplified in many respects and omits counterexamples, it is very exciting nonetheless!

Tuesday, April 30. 2013

A Smarter Algorithm Could Cut Energy Use in Data Centers by 35 Percent

-----

By David Talbot on April 16, 2013

Storing video and other files more intelligently reduces the demand on servers in a data center.

Worldwide, data centers consume huge and growing amounts of electricity.

New research suggests that data centers could significantly cut their electricity usage simply by storing fewer copies of files, especially videos.

For now the work is theoretical, but over the next year, researchers at Alcatel-Lucent’s Bell Labs and MIT plan to test the idea, with an eye to eventually commercializing the technology. It could be implemented as software within existing facilities. “This approach is a very promising way to improve the efficiency of data centers,” says Emina Soljanin, a researcher at Bell Labs who participated in the work. “It is not a panacea, but it is significant, and there is no particular reason that it couldn’t be commercialized fairly quickly.”

With the new technology, any individual data center could be expected to save 35 percent in capacity and electricity costs—about $2.8 million a year or $18 million over the lifetime of the center, says Muriel Médard, a professor at MIT’s Research Laboratory of Electronics, who led the work and recently conducted the cost analysis.

So-called storage area networks within data center servers rely on a tremendous amount of redundancy to make sure that downloading videos and other content is a smooth, unbroken experience for consumers. Portions of a given video are stored on different disk drives in a data center, with each sequential piece cued up and buffered on your computer shortly before it’s needed. In addition, copies of each portion are stored on different drives, to provide a backup in case any single drive is jammed up. A single data center often serves millions of video requests at the same time.

The new technology, called network coding, cuts way back on the redundancy without sacrificing the smooth experience. Algorithms transform the data that makes up a video into a series of mathematical functions that can, if needed, be solved not just for that piece of the video, but also for different parts. This provides a form of backup that doesn’t rely on keeping complete copies of the data. Software at the data center could simply encode the data as it is stored and decode it as consumers request it.
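Network coding proper mixes data with random linear combinations over a finite field, but the storage saving can already be seen with the simplest erasure code: a single XOR parity block. The sketch below is that simpler, swapped-in technique, not the Bell Labs/MIT scheme. With k data blocks plus one parity block, the loss of any one drive is survivable at an overhead of 1/k, whereas keeping a second full copy of everything costs 100% extra.

```python
from functools import reduce


def xor_blocks(blocks):
    """Byte-wise XOR of equal-length blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


def encode_with_parity(data_blocks):
    """Store the k data blocks plus one parity block (k + 1 drives in total)."""
    return list(data_blocks) + [xor_blocks(data_blocks)]


def recover_block(stored, lost_index):
    """Rebuild the block on drive `lost_index` by XORing all surviving drives."""
    survivors = [block for i, block in enumerate(stored) if i != lost_index]
    return xor_blocks(survivors)


video_chunks = [b"chunk-01", b"chunk-02", b"chunk-03", b"chunk-04"]  # hypothetical equal-size pieces
stored = encode_with_parity(video_chunks)
print(recover_block(stored, 2))  # → b'chunk-03'

k = len(video_chunks)
print(f"parity overhead: {1 / k:.0%}, mirrored-copy overhead: 100%")
```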

Médard’s group previously proposed a similar technique for boosting wireless bandwidth (see “A Bandwidth Breakthrough”). That technology deals with a different problem: wireless networks waste a lot of bandwidth on back-and-forth traffic to recover dropped portions of a signal, called packets. If mathematical functions describing those packets are sent in place of the packets themselves, it becomes unnecessary to re-send a dropped packet; a mobile device can solve for the missing packet with minimal processing. That technology, which improves capacity up to tenfold, is currently being licensed to wireless carriers, she says.
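Sending "mathematical functions describing those packets" can be sketched with random linear combinations over GF(2), where combining simply means XOR. Each transmitted packet carries a coefficient mask saying which originals it mixes, and the receiver decodes by Gaussian elimination once it holds enough independent combinations; which particular transmissions were dropped no longer matters. This is a toy version assuming tiny integer "packets" and the binary field for readability; practical schemes often work over larger fields such as GF(2^8).

```python
import random


def combine(packets, mask):
    """XOR together the source packets selected by the bits of `mask`."""
    payload = 0
    for i, packet in enumerate(packets):
        if (mask >> i) & 1:
            payload ^= packet
    return payload


def rlnc_encode(packets, n_coded, seed=0):
    """Emit n_coded random combinations as (coefficient_mask, payload) pairs."""
    rng = random.Random(seed)
    coded = []
    for _ in range(n_coded):
        mask = rng.getrandbits(len(packets)) or 1  # skip the useless all-zero mix
        coded.append((mask, combine(packets, mask)))
    return coded


def rlnc_decode(coded, k):
    """Gauss-Jordan elimination over GF(2); returns the k source packets, or
    None if the received combinations do not yet span all k unknowns."""
    rows = [[mask, payload] for mask, payload in coded]
    for col in range(k):
        pivot = next((r for r in range(col, len(rows)) if (rows[r][0] >> col) & 1), None)
        if pivot is None:
            return None  # need more independent combinations
        rows[col], rows[pivot] = rows[pivot], rows[col]
        # Clear this column from every other row
        for r in range(len(rows)):
            if r != col and (rows[r][0] >> col) & 1:
                rows[r][0] ^= rows[col][0]
                rows[r][1] ^= rows[col][1]
    return [rows[i][1] for i in range(k)]


packets = [0b1010, 0b0111, 0b1100, 0b0001]  # four tiny "packets"
sent = rlnc_encode(packets, 20, seed=1)
received = sent[4:]  # pretend the first four transmissions were dropped
print(rlnc_decode(received, len(packets)))
```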

Between the electricity needed to power computers and the air conditioning required to cool them, data centers worldwide consume so much energy that by 2020 they will cause more greenhouse-gas emissions than global air travel, according to the consulting firm McKinsey.

Smarter software to manage them has already proved to be a huge boon (see “A New Net”). Many companies are building data centers that use renewable energy and smarter energy management systems (see “The Little Secrets Behind Apple’s Green Data Centers”). And there are a number of ways to make chips and software operate more efficiently (see “Rethinking Energy Use in Data Centers”). But network coding could make a big contribution by cutting down on the extra disk drives—each needing energy and cooling—that cloud storage providers now rely on to ensure reliability.

This is not the first time that network coding has been proposed for data centers. But past work was geared toward recovering lost data. In this case, Médard says, “we have considered the use of coding to improve performance under normal operating conditions, with enhanced reliability a natural by-product.”

Personal comment:

Another link in the context of our workshop at Tsinghua University, related to data storage at large.

The link between energy, algorithms and data storage made obvious. To be read in parallel with the previous repost from Kazys Varnelis, Into the Cloud (with zombies).

-

In the same vein, another piece of code could cut flight delays and thereby save a midsized airline approximately $1.2 million a year in crew costs and $5 million a year in fuel...

Monday, April 22. 2013

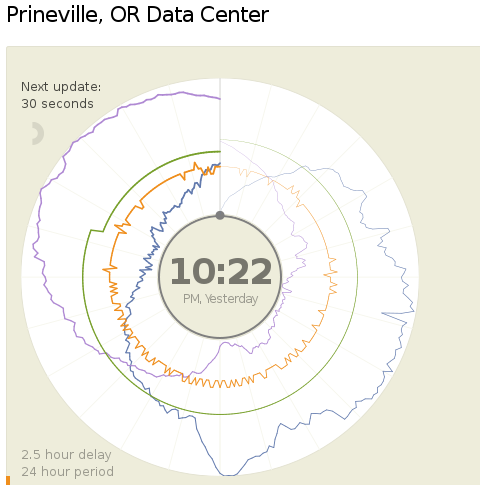

A new way to report data centers' Power and Water Usage Effectiveness (PUE and WUE)

Via Computed.Blg via Open Compute Project

-----

Today (18.04.2013) Facebook launched two public dashboards that report continuous, near-real-time data for key efficiency metrics – specifically, PUE and WUE – for our data centers in Prineville, OR and Forest City, NC. These dashboards include both a granular look at the past 24 hours of data and a historical view of the past year’s values. In the historical view, trends within each data set and correlations between different metrics become visible. Once our data center in Luleå, Sweden, comes online, we’ll begin publishing for that site as well.

We began sharing PUE for our Prineville data center at the end of Q2 2011 and released our first Prineville WUE in the summer of 2012. Now we’re pulling back the curtain to share some of the same information that our data center technicians view every day. We’ll continue updating our annualized averages as we have in the past, and you’ll be able to find them on the Prineville and Forest City dashboards, right below the real-time data.

Why are we doing this? Well, we’re proud of our data center efficiency, and we think it’s important to demystify data centers and share more about what our operations really look like. Through the Open Compute Project (OCP), we’ve shared the building and hardware designs for our data centers. These dashboards are the natural next step, since they answer the question, “What really happens when those servers are installed and the power’s turned on?”

Creating these dashboards wasn’t a straightforward task. Our data centers aren’t completed yet; we’re still in the process of building out suites and finalizing the parameters for our building management systems. All our data centers are literally still construction sites, with new data halls coming online at different points throughout the year. Since we’ve created dashboards that visualize an environment with so many shifting variables, you’ll probably see some weird numbers from time to time. That’s OK. These dashboards are about surfacing raw data – and sometimes, raw data looks messy. But we believe in iteration, in getting projects out the door and improving them over time. So we welcome you behind the curtain, wonky numbers and all. As our data centers near completion and our load evens out, we expect these inevitable fluctuations to correspondingly decrease.

We’re excited about sharing this data, and we encourage others to do the same. Working together with AREA 17, the company that designed these visualizations, we’ve decided to open-source the front-end code for these dashboards so that any organization interested in sharing PUE, WUE, temperature, and humidity at its data center sites can use these dashboards to get started. Sometime in the coming weeks we’ll publish the code on the Open Compute Project’s GitHub repository. All you have to do is connect your own CSV files to get started. And in the spirit of all other technologies shared via OCP, we encourage you to poke through the code and make updates to it. Do you have an idea to make these visuals even more compelling? Great! We encourage you to treat this as a starting point and use these dashboards to make everyone’s ability to share this data even more interesting and robust.
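As a rough illustration of what "connecting your own CSV files" might involve, here is a minimal sketch that computes PUE and WUE from hourly readings. The column names (`timestamp`, `facility_kwh`, `it_kwh`, `water_liters`) and the sample values are hypothetical and are not the format used by the OCP dashboard code. The formulas follow the commonly used definitions: PUE is total facility energy divided by IT equipment energy, and WUE is site water use in liters divided by IT equipment energy in kWh.

```python
import csv
import io
from dataclasses import dataclass


@dataclass
class Reading:
    """One hourly sample; the field names are hypothetical."""
    timestamp: str
    facility_kwh: float  # total energy drawn by the whole facility
    it_kwh: float        # energy delivered to IT equipment (servers, storage, network)
    water_liters: float  # site water consumed, e.g. for evaporative cooling


def load_readings(text: str) -> list[Reading]:
    """Parse CSV text (with a header row) into Reading objects."""
    rows = csv.DictReader(io.StringIO(text))
    return [
        Reading(
            timestamp=row["timestamp"],
            facility_kwh=float(row["facility_kwh"]),
            it_kwh=float(row["it_kwh"]),
            water_liters=float(row["water_liters"]),
        )
        for row in rows
    ]


def pue(readings: list[Reading]) -> float:
    """Power Usage Effectiveness over the period: total facility energy / IT energy."""
    return sum(r.facility_kwh for r in readings) / sum(r.it_kwh for r in readings)


def wue(readings: list[Reading]) -> float:
    """Water Usage Effectiveness over the period, in liters per kWh of IT energy."""
    return sum(r.water_liters for r in readings) / sum(r.it_kwh for r in readings)


# Made-up sample data, for illustration only.
sample = """timestamp,facility_kwh,it_kwh,water_liters
2013-04-18T00:00,1070,1000,200
2013-04-18T01:00,1090,1000,300
"""

readings = load_readings(sample)
print(f"PUE {pue(readings):.3f}, WUE {wue(readings):.3f} L/kWh")  # PUE 1.080, WUE 0.250 L/kWh
```

The period values are computed from summed totals rather than by averaging the hourly ratios, so hours with very little IT load do not distort the result.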

Lyrica McTiernan is a program manager for Facebook’s sustainability team.

Personal comment:

The Open Compute Project is definitely an interesting one, all the more so now that it comes with open data about the centers' consumption. However, PUE and WUE should be questioned further, to know whether they are the right measures of a data center's effectiveness.

I'm not a specialist here, but it seems to me that these values don't give an idea of the overall energy used for a given task (data and service hosting, remote computing), only of how efficient the center is (whether or not it makes good use of energy and water).

To sum up: I could consume a huge amount of energy and water, but if I do it efficiently, my PUE and WUE will be good, and it will look fine on paper and in brand communication.
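The point can be made concrete with a tiny calculation. The two facilities below use purely hypothetical annual figures: both report the same PUE, yet one consumes ten times as much energy in absolute terms, which the ratio alone cannot show.

```python
def pue(facility_kwh: float, it_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy divided by IT energy."""
    return facility_kwh / it_kwh


# Hypothetical annual figures, chosen only to illustrate the argument.
small = {"facility_kwh": 1.1e6, "it_kwh": 1.0e6}
large = {"facility_kwh": 11.0e6, "it_kwh": 10.0e6}

for name, site in (("small", small), ("large", large)):
    # 1e6 kWh = 1 GWh
    print(f"{name}: PUE={pue(**site):.2f}, total={site['facility_kwh'] / 1e6:.1f} GWh")
```

Both sites print a PUE of 1.10, while their totals are 1.1 GWh and 11.0 GWh: an efficiency ratio says nothing about how much is consumed overall, or for what service.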

That's certainly a good start (better to have a good PUE and WUE), and in fact all factories should publish such numbers, but it is probably not enough. How much energy for what type of service might, or should, become a crucial question in the near future, at least until we have an "abundance" of renewable energy!

Creating Energy from Noise Pollution

Via Architecture Source via Archinect

-----

soundscraper. Source: eVolo 2013 Skyscraper Competition

Soundscrapers could soon turn urban noise pollution into usable energy to power cities.

An honourable mention-winning entry in the 2013 eVolo Skyscraper Competition, dubbed Soundscraper, looked into ways to convert the ambient noise in urban centres into a renewable energy form.

Noise pollution is currently a negative element of urban life but it could soon be valued and put to good use.

Acoustic architecture, or design to minimise noise, has long been an important facet of the architecture industry, but design aimed at maximising and capturing noise for beneficial reasons is an untapped area with great potential.

The Soundscraper concept is based around constructing the buildings near major highways and railroad junctions to capture noise vibrations and turn them into energy. The intensity and direction of urban noise dictate the vibrations captured by the building’s facade.

Covering a wide array of frequencies, everyday noise from trains, cars, planes and pedestrians would be picked up by 84,000 electro-active lashes covering a Soundscraper’s light metallic frame. Parametric Frequency Increased Generators (sound sensors) on the lashes would then convert the vibrations into kinetic energy through an energy harvester.


The energy would be converted to electricity through transducer cells, at which point that power could be stored or sent to the grid for regular electricity usage.

The Soundscraper team of Julien Bourgeois, Savinien de Pizzol, Olivier Colliez, Romain Grouselle and Cédric Dounval estimate that one Soundscraper could produce 150 megawatts of power, meaning that a single tower could supply enough electricity to meet 10 percent of Los Angeles’ lighting needs.
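The team's figures can be unpacked with some back-of-the-envelope arithmetic. This sketch takes the 150 MW estimate and the 84,000 lashes at face value and assumes, purely for illustration, that a tower delivers that output continuously all year; real output would vary with traffic noise. It shows the average power each lash would have to supply, the Los Angeles lighting demand implied if 150 MW is 10 percent of it, and the difference between power (MW) and energy (GWh).

```python
TOWER_POWER_W = 150e6        # team's estimate: 150 MW per Soundscraper
LASHES = 84_000              # electro-active lashes per tower
SHARE_OF_LA_LIGHTING = 0.10  # "10 percent of Los Angeles' lighting needs"
HOURS_PER_YEAR = 24 * 365

# Average power each lash would have to deliver to reach the tower total.
watts_per_lash = TOWER_POWER_W / LASHES

# Total lighting demand implied by the 10 percent claim.
implied_la_lighting_w = TOWER_POWER_W / SHARE_OF_LA_LIGHTING

# Energy over a year IF (assumption) the tower ran at full output nonstop.
annual_energy_gwh = TOWER_POWER_W * HOURS_PER_YEAR / 1e9

print(f"{watts_per_lash:.0f} W per lash")
print(f"{implied_la_lighting_w / 1e9:.1f} GW implied lighting demand")
print(f"{annual_energy_gwh:.0f} GWh per year at constant output")
```

Under these assumptions, each lash would need to average roughly 1.8 kW, and a tower would yield about 1,314 GWh per year.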

Constructing several 100-metre high Soundscrapers throughout a city near major motorways could help offset the electrical needs of the urban population. This form of renewable energy would also help lower the city’s CO2 emissions.

The energy-producing towers could become city landmarks and give interstitial spaces an important function. The electricity needs of an entire city could be met solely by Soundscrapers if enough were constructed at appropriate locations, also helping to minimise the city’s carbon footprint.

Saturday, April 20. 2013

Why Computing Won't Be Limited By Moore's Law. Ever

In less than 20 years, experts predict, we will reach the physical limit of how much processing capability can be squeezed out of silicon-based processors in the heart of our computing devices. But a recent scientific finding that could completely change the way we build computing devices may simply allow engineers to sidestep any obstacles.

The breakthrough from materials scientists at IBM Research doesn't sound like a big deal. In a nutshell, they claim to have figured out how to convert metal oxide materials, which act as natural insulators, to a conductive metallic state. Even better, the process is reversible.

Shifting materials from insulator to conductor and back is not exactly new, according to Stuart Parkin, IBM Fellow at IBM Research. What is new is that these changes in state are stable even after you shut off the power flowing through the materials.

And that's huge.

Power On… And On And On And On…

When it comes to computing — mobile, desktop or server — all devices have one key problem: they're inefficient as hell with power.

As users, we experience this every day with phone batteries dipping into the red, hot notebook computers burning our laps or noisily whirring PC fans grating our ears. System administrators and hardware architects in data centers are even more acutely aware of power inefficiency, since they run huge collections of machines that mainline electricity while generating tremendous amounts of heat (which in turn eats more power for the requisite cooling systems).

Here's one basic reason for all the inefficiency: Silicon-based transistors must be powered all the time, and as current runs through these very tiny transistors inside a computer processor, some of it leaks. Both the active transistors and the leaking current generate heat — so much that without heat sinks, water lines or fans to cool them, processors would probably just melt.

Enter the IBM researchers. Computers process information by switching transistors on or off, generating binary 1s and 0s, and all of that happens while power is flowing. But suppose you could switch a transistor with just a microburst of electricity instead of supplying it constantly with current. The power savings would be enormous, and the heat generated far, far lower.
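A toy energy model helps show why holding a state without power matters. The parameters below are invented placeholders, not measurements of IBM's metal oxides or of any real chip. A conventional cell pays for leakage during the whole time it holds a value, plus a cost per switch. A hypothetical non-volatile cell pays only a short pulse per switch. Both are given the same switch energy so that the leakage term is the only difference.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    leakage_w: float        # power drawn continuously just to hold state
    switch_energy_j: float  # energy spent per state change (the "microburst")


def energy_joules(cell: Cell, seconds: float, switches: int) -> float:
    """Total energy = leakage over the interval + cost of every switch."""
    return cell.leakage_w * seconds + cell.switch_energy_j * switches


# Hypothetical parameters, chosen only to illustrate the trade-off.
volatile = Cell(leakage_w=1e-9, switch_energy_j=1e-15)
non_volatile = Cell(leakage_w=0.0, switch_energy_j=1e-15)

one_hour = 3600.0
for switches in (1, 1_000_000):
    v = energy_joules(volatile, one_hour, switches)
    n = energy_joules(non_volatile, one_hour, switches)
    print(f"{switches:>9} switches: volatile {v:.3e} J, non-volatile {n:.3e} J")
```

When a value changes rarely, leakage dominates the conventional cell's budget, which is exactly the waste a state that persists without power would remove.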

That's exactly what the IBM team says it can now accomplish with its state-changing metal oxides. This kind of ultra-low power use is similar to the way neurons in our own brains fire to make connections across synapses, Parkin explained. The human brain is more powerful than the processors we use today, he added, but "it uses a millionth of the power."

The implications are clear. Assuming this technology can be refined and actually manufactured for use in processors and memory, it could form the basis of an entirely new class of electronic devices that would barely sip at power. Imagine a smartphone with that kind of technology. The screen, speakers and radios would still need power, but the processor and memory hardware would barely touch the battery.

Moore's Law? What Moore's Law?

There's a lot more research ahead before this technology sees practical applications. Parkin explained that the fluid used to help achieve the steady state changes in these materials needs to be more efficiently delivered using nano-channels, which is what he and his fellow researchers will be focusing on next.

Ultimately, this breakthrough is one among many that we have seen and will see in computing technology. Put in that perspective, it's hard to get that impressed. But stepping back a bit, it's clear that the so-called end of the road for processors due to physical limits is probably not as big a deal as one would think. True, silicon-based processing may see its time pass, but there are other technologies on the horizon that should take its place.

Now all we have to do is think of a new name for Silicon Valley.

Personal comment:

Thanks, Christophe, for the link! Following my previous post, and still in the context of our workshop at Tsinghua, this is interesting news as well (even if it is entirely at the research phase for now, and it somewhat contradicts the previous post I made): it means that in the not-so-distant future, data centers might no longer consume so much energy, and might not produce much heat either.

There's still some work to be done, but within a 15-20 year timeframe, at the end of the silicon era, this could plausibly become reality. In any case, a huge change in computing is to be expected at that time (the end of the Moore's law period) or a bit before.

Friday, April 19. 2013

A start-up launches computer-based heating

Via Le Parisien via @chrstphggnrd

-----

A gentle warmth fills the offices of 4 MTec, in Montrouge. There is no central heating here, but around fifteen Q.rads, the "digital radiators" of the start-up Qarnot Computing, which is hosted on the premises of this engineering firm. Behind the invention is Paul Benoît, an École polytechnique engineer with a passing resemblance to Harry Potter, who firmly believes in his "economical and ecological" system.

Together with his three partners, including the high-profile lawyer Jérémie Assous, who is heavily involved in promoting the project, some twenty people are now working on putting it into practice.

The principle will be clear to anyone who has ever worked with a laptop on their knees: use the heat given off by computer processors installed inside the radiator. The computing power is sold to companies, individuals and research centers to process data or run intensive calculations, and that use more than covers the cost of the electricity. The advantage for the resident of a home heated this way: it's free! And for the customers of the computing servers, rates well below those of expensive data centers are guaranteed. But the benefit is also ecological: "our system wastes five times less energy for the same result," says Paul Benoît.

For its inventor, the Q.rad has no limits. Qarnot Computing has an answer for everything: "You set your heating however you like, with a thermostat. Depending on needs, we regulate the flow of computing customers across the different radiators." And what if those customers run short? No risk of a winter outage, according to Paul Benoît: "We will be able to offer researchers free use of the servers." And in summer? "A low-consumption mode keeps half of the computing power while producing very little heat." And if that is still too much, Qarnot Computing plans to redeploy the radiators to specific places, for example by equipping schools that are closed during the summer holidays with Q.rads. But the engineer admits that, for its rollout, the company has every interest in favoring the coldest areas…
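The article does not say how Qarnot Computing actually regulates "the flow of computing customers across the different radiators", so the following is only a guess at the idea. It is a hypothetical scheduler that splits a batch of compute jobs among radiators in proportion to each room's heat demand, taken here as the number of degrees below its thermostat setpoint. The class and function names, the demand formula and the proportional (largest-remainder) rule are all assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Radiator:
    name: str
    room_temp_c: float
    setpoint_c: float  # thermostat setting chosen by the occupant

    def heat_demand(self) -> float:
        """Degrees below setpoint; zero if the room is already warm enough."""
        return max(0.0, self.setpoint_c - self.room_temp_c)


def allocate_jobs(radiators: list[Radiator], jobs: int) -> dict[str, int]:
    """Split `jobs` among radiators in proportion to heat demand (largest remainder)."""
    demands = {r.name: r.heat_demand() for r in radiators}
    total = sum(demands.values())
    if total == 0:
        # No room wants heat; the article's low-consumption summer mode is not modeled here.
        return {name: 0 for name in demands}
    exact = {name: jobs * d / total for name, d in demands.items()}
    shares = {name: int(x) for name, x in exact.items()}
    # Hand out the leftover jobs to the radiators with the largest fractional parts.
    leftover = jobs - sum(shares.values())
    by_remainder = sorted(exact, key=lambda n: exact[n] - shares[n], reverse=True)
    for name in by_remainder[:leftover]:
        shares[name] += 1
    return shares


rooms = [
    Radiator("office", room_temp_c=17.0, setpoint_c=21.0),
    Radiator("meeting", room_temp_c=19.0, setpoint_c=21.0),
    Radiator("hall", room_temp_c=22.0, setpoint_c=20.0),
]
print(allocate_jobs(rooms, 10))  # {'office': 7, 'meeting': 3, 'hall': 0}
```

The colder office receives most of the work (and therefore most of the heat), while the already-warm hall receives none.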

300 Q.rads to be installed this summer in the hundred or so apartments of a social housing (HLM) building in Paris's 15th arrondissement

For computing customers, security is the main concern, and here again Paul Benoît is confident. "Our systems do not store data; it only passes through them, encrypted, and through computers scattered all over the place." And if someone tries to open the machine, "it shuts down," the inventor warns.

These arguments have already won people over: next month, 25 radiators will heat the engineering school Télécom Paris Tech. And from this summer, some 300 Q.rads will be installed in the hundred or so apartments of an HLM (social housing) building in Paris's 15th arrondissement, at Balard. "A first large-scale experiment," says Jean-Louis Missika, the Paris deputy mayor for innovation and research, welcoming "a project that could be revolutionary!"


Personal comment:

While we are still based in Beijing for the moment (and therefore not publishing much on the blog; sorry about that), and while we are working with some students at Tsinghua around the idea of "inhabiting the data center" (transposed to a much smaller context, which in this case becomes "inhabiting the servers' cabinet"), this news about servers becoming heaters is directly related to our project and could certainly be used.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, researches, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
