Tuesday, April 30. 2013

A Smarter Algorithm Could Cut Energy Use in Data Centers by 35 Percent

-----

By David Talbot on April 16, 2013

Storing video and other files more intelligently reduces the demand on servers in a data center.

Worldwide, data centers consume huge and growing amounts of electricity.

New research suggests that data centers could significantly cut their electricity usage simply by storing fewer copies of files, especially videos.

For now the work is theoretical, but over the next year, researchers at Alcatel-Lucent’s Bell Labs and MIT plan to test the idea, with an eye to eventually commercializing the technology. It could be implemented as software within existing facilities. “This approach is a very promising way to improve the efficiency of data centers,” says Emina Soljanin, a researcher at Bell Labs who participated in the work. “It is not a panacea, but it is significant, and there is no particular reason that it couldn’t be commercialized fairly quickly.”

With the new technology, any individual data center could be expected to save 35 percent in capacity and electricity costs—about $2.8 million a year or $18 million over the lifetime of the center, says Muriel Médard, a professor at MIT’s Research Laboratory of Electronics, who led the work and recently conducted the cost analysis.

So-called storage area networks within data center servers rely on a tremendous amount of redundancy to make sure that downloading videos and other content is a smooth, unbroken experience for consumers. Portions of a given video are stored on different disk drives in a data center, with each sequential piece cued up and buffered on your computer shortly before it’s needed. In addition, copies of each portion are stored on different drives, to provide a backup in case any single drive is jammed up. A single data center often serves millions of video requests at the same time.

The new technology, called network coding, cuts way back on the redundancy without sacrificing the smooth experience. Algorithms transform the data that makes up a video into a series of mathematical functions that can, if needed, be solved not just for that piece of the video, but also for different parts. This provides a form of backup that doesn’t rely on keeping complete copies of the data. Software at the data center could simply encode the data as it is stored and decode it as consumers request it.
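
As a rough illustration of the idea, here is a toy Python sketch. It is not the Bell Labs/MIT scheme, whose details the article does not give; it only shows how a coded chunk can stand in for full copies. Two chunks of a file are stored alongside one coded chunk (their bytewise XOR), each on a different hypothetical drive. If any single drive is busy, the missing chunk can be solved for from the other two, using 1.5 times the original storage instead of the 2 times needed to keep one full copy of everything. The function and drive names are invented for the example.

```python
def xor_bytes(a: bytes, b: bytes) -> bytes:
    """Bytewise XOR of two equal-length chunks."""
    if len(a) != len(b):
        raise ValueError("chunks must have the same length")
    return bytes(x ^ y for x, y in zip(a, b))


def encode(chunk_a: bytes, chunk_b: bytes) -> dict[str, bytes]:
    """Return what each of three hypothetical drives would store."""
    return {
        "drive1": chunk_a,
        "drive2": chunk_b,
        "drive3": xor_bytes(chunk_a, chunk_b),  # the coded chunk
    }


def decode(available: dict[str, bytes]) -> tuple[bytes, bytes]:
    """Recover (chunk_a, chunk_b) from any two of the three drives."""
    a = available.get("drive1")
    b = available.get("drive2")
    coded = available.get("drive3")
    if sum(x is not None for x in (a, b, coded)) < 2:
        raise ValueError("need at least two of the three drives")
    if a is None:
        a = xor_bytes(b, coded)   # a = b XOR (a XOR b)
    elif b is None:
        b = xor_bytes(a, coded)   # b = a XOR (a XOR b)
    return a, b


drives = encode(b"VIDEO-PT1", b"VIDEO-PT2")
del drives["drive1"]              # pretend drive1 is jammed up
print(decode(drives))
```

Real network coding uses richer linear combinations over larger finite fields, but the principle of solving for a missing piece rather than fetching a spare copy is the same.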

Médard’s group previously proposed a similar technique for boosting wireless bandwidth (see “A Bandwidth Breakthrough”). That technology deals with a different problem: wireless networks waste a lot of bandwidth on back-and-forth traffic to recover dropped portions of a signal, called packets. If mathematical functions describing those packets are sent in place of the packets themselves, it becomes unnecessary to re-send a dropped packet; a mobile device can solve for the missing packet with minimal processing. That technology, which improves capacity up to tenfold, is currently being licensed to wireless carriers, she says.

Between the electricity needed to power computers and the air conditioning required to cool them, data centers worldwide consume so much energy that by 2020 they will cause more greenhouse-gas emissions than global air travel, according to the consulting firm McKinsey.

Smarter software to manage them has already proved to be a huge boon (see “A New Net”). Many companies are building data centers that use renewable energy and smarter energy management systems (see “The Little Secrets Behind Apple’s Green Data Centers”). And there are a number of ways to make chips and software operate more efficiently (see “Rethinking Energy Use in Data Centers”). But network coding could make a big contribution by cutting down on the extra disk drives—each needing energy and cooling—that cloud storage providers now rely on to ensure reliability.

This is not the first time that network coding has been proposed for data centers. But past work was geared toward recovering lost data. In this case, Médard says, “we have considered the use of coding to improve performance under normal operating conditions, with enhanced reliability a natural by-product.”

Personal comment:

Another link in the context of our workshop at Tsinghua University, related to data storage at large.

It makes the link between energy, algorithms and data storage obvious. To be read in parallel with the previous repost from Kazys Varnelis, Into the Cloud (with zombies).

-

Along the same lines, another piece of code could cut flight delays and thereby save a midsized airline approximately $1.2 million in annual crew costs and $5 million in annual fuel costs...

Friday, April 26. 2013

Resonate Festival

Via DomusWeb

-----

By Roberto Arista

With its second edition, the Serbian festival – a meeting point for technology and art – establishes itself as a sounding board for a mature and growing scene.

Resonate Festival, Belgrade, 2013. Projection during the debate with Memo Akten, Rainer Kohlberger, Eno Henze and Shane Walter.

Resonate was founded in 2012 by Magnetic Field B and the Creative Applications network, in an attempt to provide the visual arts world with a new platform for discussion. The event focuses on the role of technology in art and culture, and especially on the connections between the disciplines that these areas involve. The 2013 edition took place from March 21 to 23 in the Dom Omladine cultural space, close to the city’s Republic Square. More than 1200 visitors attended the event, which was already sold out several days before the opening.

The first day was devoted to a rich and varied assortment of workshops – open to all selected participants – regarding the analysis of the available tools (hardware and software) for video mapping, data visualization on different media, the design of cross-platform applications, or even the choreography of (flying) drones.

Golan Levin during the “Computer vision in interactive arts” workshop. Photo courtesy of Resonate

The next two days were dedicated to a full program of 44 lectures and video projections. The general impression is that there is a panorama of versatile designers who can carefully hybridise different disciplines and tools – marrying electronic engineering with products, landscape with graphics, analog techniques with digital media. These designers are bolstered by the freedom to experiment that distinguishes those who are not pigeonholed within a specific category. The profession’s evolution and, more generally, a look at the recent past, were leitmotifs of some of the most interesting projects presented. Examples range from Memo Akten, Golan Levin and Joachim Sauter, who are now ready to offer an engaging retrospective of their projects, to Meet your creator, Free Universal Construction Kit and Kinetic Sculpture, all much admired by the public.

The audience in the main room at Dom Omladine during the festival. Photo courtesy of Resonate

Similarly, a lively debate followed the talk by artist and interaction designer Zach Gage. Is it possible that the "game" – understood within a broader realm than the videogame – has not yet found the right place to be preserved, celebrated and narrated?

A view of the Building Kluz, where many of the festival's performances took place. Photo courtesy of Resonate

Participants were moved by London-based architect, critic and curator Liam Young’s future scenarios and landscape mutations. Projects like Silent Spring dampened that blind faith in technological advancement that permeated the festival. The work by professors in Europe’s most popular Interaction Design courses was of great interest, in particular Anthony Dunne from the RCA in London, David Gauthier from CIID in Copenhagen and Alain Bellet from ECAL in Lausanne. These schools have overcome the unnecessary separation between the humanistic and scientific universes, while in Italy the legacy left behind by Benedetto Croce still paralyses many university courses.

Debate participants during the second day of the festival: Memo Akten, Rainer Kohlberger, Eno Henze and Shane Walter. Photo courtesy of Resonate

It is striking that there were no Italian presenters given the number of European speakers. This is probably due to the Italian design world’s reluctance to accept the digital sphere. However, some undisputed masters were mentioned: Luigi Serafini, whose Codex Seraphinianus has become an international case study, or Bruno Munari’s work in design teaching.

A view of Memo Akten's "How I learnt to stop worrying and love the drones" workshop. Photo courtesy of Resonate.

It became evident that childlike curiosity is fundamental in developing languages and tools. Many festival speakers dared to compare their more mature projects with images from their childhoods, so it is no coincidence that a statement by Carl Sagan was heard several times during the festival: "Every kid starts out as a natural-born scientist, and then we beat it out of them. A few trickle through the system with their wonder and enthusiasm for science intact." Roberto Arista

Personal comment:

A short report by Roberto Arista on Domusweb about the latest, and very good, Resonate conference, held in Belgrade last March. It included a talk by Alain Bellet, head of the very good bachelor program in Interaction Design at ECAL in Lausanne, Switzerland (and occasionally my "boss" too, as I teach there as well)!

Monday, April 22. 2013

A new way to report data centers' Power and Water Usage Effectiveness (PUE and WUE)

Via Computed.Blg via Open Compute Project

-----

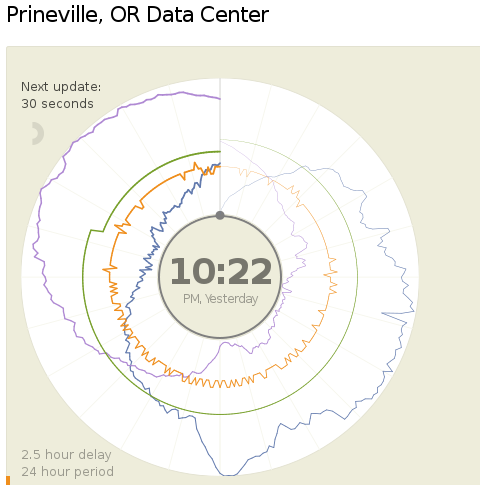

Today (18.04.2013) Facebook launched two public dashboards that report continuous, near-real-time data for key efficiency metrics – specifically, PUE and WUE – for our data centers in Prineville, OR and Forest City, NC. These dashboards include both a granular look at the past 24 hours of data and a historical view of the past year’s values. In the historical view, trends within each data set and correlations between different metrics become visible. Once our data center in Luleå, Sweden, comes online, we’ll begin publishing for that site as well.

We began sharing PUE for our Prineville data center at the end of Q2 2011 and released our first Prineville WUE in the summer of 2012. Now we’re pulling back the curtain to share some of the same information that our data center technicians view every day. We’ll continue updating our annualized averages as we have in the past, and you’ll be able to find them on the Prineville and Forest City dashboards, right below the real-time data.

Why are we doing this? Well, we’re proud of our data center efficiency, and we think it’s important to demystify data centers and share more about what our operations really look like. Through the Open Compute Project (OCP), we’ve shared the building and hardware designs for our data centers. These dashboards are the natural next step, since they answer the question, “What really happens when those servers are installed and the power’s turned on?”

Creating these dashboards wasn’t a straightforward task. Our data centers aren’t completed yet; we’re still in the process of building out suites and finalizing the parameters for our building management systems. All our data centers are literally still construction sites, with new data halls coming online at different points throughout the year. Since we’ve created dashboards that visualize an environment with so many shifting variables, you’ll probably see some weird numbers from time to time. That’s OK. These dashboards are about surfacing raw data – and sometimes, raw data looks messy. But we believe in iteration, in getting projects out the door and improving them over time. So we welcome you behind the curtain, wonky numbers and all. As our data centers near completion and our load evens out, we expect these inevitable fluctuations to correspondingly decrease.

We’re excited about sharing this data, and we encourage others to do the same. Working together with AREA 17, the company that designed these visualizations, we’ve decided to open-source the front-end code for these dashboards so that any organization interested in sharing PUE, WUE, temperature, and humidity at its data center sites can use these dashboards to get started. Sometime in the coming weeks we’ll publish the code on the Open Compute Project’s GitHub repository. All you have to do is connect your own CSV files to get started. And in the spirit of all other technologies shared via OCP, we encourage you to poke through the code and make updates to it. Do you have an idea to make these visuals even more compelling? Great! We encourage you to treat this as a starting point and use these dashboards to make everyone’s ability to share this data even more interesting and robust.

Lyrica McTiernan is a program manager for Facebook’s sustainability team.

Personal comment:

The Open Compute Project is definitely an interesting one, as is the fact that it comes with open data about data centers' consumption. Still, PUE and WUE should be questioned further, to establish whether they are the right measures of a data center's effectiveness.

I'm not a specialist here, but it seems to me that these values don't give an idea of the overall energy used for a given task (data and services hosting, remote computing), only of how efficient the center is (whether or not it makes good use of energy and water).

To sum up: I could spend a very large amount of energy and water, but if I do it in an efficient way, then my PUE and WUE will be good, and it will look fine on paper and for brand communication.
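
To make that point concrete, here is a minimal Python sketch. It uses the commonly cited definitions (PUE as total facility energy divided by IT equipment energy, WUE as site water use in liters divided by IT equipment energy in kWh). The two facilities and all their figures are invented for illustration and do not come from the Facebook dashboards.

```python
from dataclasses import dataclass


@dataclass
class Facility:
    name: str
    total_energy_kwh: float  # everything the site draws: IT, cooling, lighting, losses
    it_energy_kwh: float     # energy delivered to servers, storage and network gear
    water_liters: float      # water consumed on site, mostly for cooling

    def pue(self) -> float:
        """Power Usage Effectiveness: 1.0 would mean no overhead at all."""
        return self.total_energy_kwh / self.it_energy_kwh

    def wue(self) -> float:
        """Water Usage Effectiveness, in liters per kWh of IT energy."""
        return self.water_liters / self.it_energy_kwh


# Hypothetical annual figures, chosen only to illustrate the argument.
small = Facility("small but wasteful", 2_000_000, 1_000_000, 1_500_000)
large = Facility("huge but efficient", 110_000_000, 100_000_000, 30_000_000)

for f in (small, large):
    print(f"{f.name}: PUE={f.pue():.2f}, WUE={f.wue():.2f} L/kWh, "
          f"total energy={f.total_energy_kwh:,.0f} kWh")
```

In this made-up case the large facility reports the better ratios while drawing 55 times more energy in absolute terms, which is exactly what the two metrics alone do not show.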

That's certainly a good start (better to have a good PUE and WUE), and in fact all factories should publish such numbers, but it is probably not enough. How much energy for what type of service might, or should, become a crucial question in the near future, until we have an "abundance" of renewable energy!

Saturday, April 20. 2013

Why Computing Won't Be Limited By Moore's Law. Ever

In less than 20 years, experts predict, we will reach the physical limit of how much processing capability can be squeezed out of silicon-based processors in the heart of our computing devices. But a recent scientific finding that could completely change the way we build computing devices may simply allow engineers to sidestep any obstacles.

The breakthrough from materials scientists at IBM Research doesn't sound like a big deal. In a nutshell, they claim to have figured out how to convert metal oxide materials, which act as natural insulators, to a conductive metallic state. Even better, the process is reversible.

Shifting materials from insulator to conductor and back is not exactly new, according to Stuart Parkin, IBM Fellow at IBM Research. What is new is that these changes in state are stable even after you shut off the power flowing through the materials.

And that's huge.

Power On… And On And On And On…

When it comes to computing — mobile, desktop or server — all devices have one key problem: they're inefficient as hell with power.

As users, we experience this every day with phone batteries dipping into the red, hot notebook computers burning our laps or noisily whirring PC fans grating our ears. System administrators and hardware architects in data centers are even more acutely aware of power inefficiency, since they run huge collections of machines that mainline electricity while generating tremendous amounts of heat (which in turn eats more power for the requisite cooling systems).

Here's one basic reason for all the inefficiency: Silicon-based transistors must be powered all the time, and as current runs through these very tiny transistors inside a computer processor, some of it leaks. Both the active transistors and the leaking current generate heat — so much that without heat sinks, water lines or fans to cool them, processors would probably just melt.

Enter the IBM researchers. Computers process information by switching transistors between two states, off or on, to generate binary 0s and 1s, all while the power is flowing. But suppose you could switch a transistor with just a microburst of electricity instead of supplying it constantly with current. The power savings would be enormous, and the heat generated, far, far lower.

That's exactly what the IBM team says it can now accomplish with its state-changing metal oxides. This kind of ultra-low power use is similar to the way neurons in our own brains fire to make connections across synapses, Parkin explained. The human brain is more powerful than the processors we use today, he added, but "it uses a millionth of the power."

The implications are clear. Assuming this technology can be refined and actually manufactured for use in processors and memory, it could form the basis of an entirely new class of electronic devices that would barely sip at power. Imagine a smartphone with that kind of technology. The screen, speakers and radios would still need power, but the processor and memory hardware would barely touch the battery.

Moore's Law? What Moore's Law?

There's a lot more research ahead before this technology sees practical applications. Parkin explained that the fluid used to help achieve the steady state changes in these materials needs to be more efficiently delivered using nano-channels, which is what he and his fellow researchers will be focusing on next.

Ultimately, this breakthrough is one among many that we have seen and will see in computing technology. Put in that perspective, it's hard to get that impressed. But stepping back a bit, it's clear that the so-called end of the road for processors due to physical limits is probably not as big a deal as one would think. True, silicon-based processing may see its time pass, but there are other technologies on the horizon that should take its place.

Now all we have to do is think of a new name for Silicon Valley.

Personal comment:

Thanks Christophe for the link! Following my previous post, and still in the context of our workshop at Tsinghua, this is interesting news as well, even if it is entirely at the research phase for now and somewhat contradicts my previous post: it means that in the not-so-distant future, data centers might no longer consume so much energy, and might not produce heat either.

There's still some work to be done, but within a timeframe of 15 to 20 years, at the end of the silicon period, this could plausibly become reality. In any case, a huge change for computing is to be expected at that time (the end of the Moore's law period) or a bit before.

Friday, April 19. 2013

A start-up launches computer-powered heating

Via Le Parisien via @chrstphggnrd

-----

A gentle warmth fills the offices of 4 MTec, in Montrouge. There is no central heating here, but about fifteen Q.rad units, the “digital radiators” of the start-up Qarnot Computing, which is hosted on the premises of this engineering consultancy. The man behind the invention, Paul Benoît, an engineer and École Polytechnique graduate with a passing resemblance to Harry Potter, firmly believes in his “economical and ecological” system.

Together with his three partners, including the high-profile lawyer Jérémie Assous, who is heavily involved in promoting the project, some twenty people are now working on putting it into practice.

The principle will be familiar to anyone who has worked with a laptop on their knees: use the heat given off by computer processors installed in the radiator. The processors are sold as computing capacity to companies, individuals and research centers for data processing or intensive computation, and their use is more than enough to cover the electricity bill. The advantage for the occupant of a home heated this way: it is free! And for the customers of the servers, it guarantees rates well below those of costly data centers. But the benefit is also ecological: “our system wastes five times less energy for the same result,” says Paul Benoît.

For its inventor, the Q.rad knows no limits. Qarnot Computing has an answer to everything: “You set your heating as you wish, with a thermostat. Depending on demand, we regulate the flow of computing customers across the different radiators.” And if those customers run short? No risk of a winter outage, according to Paul Benoît: “We will be able to offer use of the servers to researchers for free.” And in summer? “A low-power mode keeps half of the computing power while producing very little heat.” And if that is still too much, Qarnot Computing plans to redeploy it to specific locations, for example by fitting Q.rad units in schools that are closed during the summer holidays. But the engineer admits it: for its rollout, the company has every interest in favoring the coldest areas…
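
The regulation Paul Benoît describes (thermostats set the heat demand, paying customers' jobs are routed to radiators that need to heat, and free research jobs fill the gap when paid work runs out) could be sketched as follows. This is purely a hypothetical illustration in Python, not Qarnot Computing's software: the `dispatch` function, the job names and the assumed 100 watts of heat per running job are all invented.

```python
from collections import deque


def dispatch(heat_demand_w: dict[str, int],
             paid_jobs: deque,
             filler_jobs: deque,
             watts_per_job: int = 100) -> dict[str, list[str]]:
    """Assign jobs to radiators until each thermostat's heat demand is met.

    Paid jobs are placed first; filler jobs (for example free research
    computations) are used once the paid queue is empty.
    watts_per_job is an assumed, made-up heat output per running job.
    """
    plan: dict[str, list[str]] = {name: [] for name in heat_demand_w}
    for name, demand in heat_demand_w.items():
        slots = demand // watts_per_job       # how many jobs this radiator should run
        for _ in range(slots):
            if paid_jobs:
                plan[name].append(paid_jobs.popleft())
            elif filler_jobs:
                plan[name].append(filler_jobs.popleft())
            else:
                break                          # no work left: radiator stays cooler
    return plan


demand = {"living room": 300, "bedroom": 100, "kitchen": 0}
paid = deque(["render-1", "render-2"])
filler = deque(["research-1", "research-2", "research-3"])
print(dispatch(demand, paid, filler))
```

A radiator whose thermostat asks for no heat simply receives no jobs, which is where something like the low-power summer mode mentioned above would have to take over.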

300 Q.rad units to be installed this summer in the hundred or so homes of an HLM social housing block in Paris's 15th arrondissement

For computing customers, security is the overriding question: here again, Paul Benoît is sure of the outcome. “Our systems do not store data; it merely passes through them in encrypted form, across computing units scattered more or less everywhere.” And if someone tries to open the machine, “it shuts down,” the inventor warns.

These arguments have already won people over: next month, 25 radiators will heat the engineering school Télécom Paris Tech. And starting this summer, some 300 Q.rad units will be installed in the hundred or so homes of an HLM social housing block in Paris's 15th arrondissement, at Balard. “A first large-scale experiment,” says an approving Jean-Louis Missika, the deputy mayor of Paris in charge of innovation and research, “for a project that could be revolutionary!”

Personal comment:

While we are still located in Beijing for the moment (and therefore not publishing much on the blog, sorry for that), and while we are working with some students at Tsinghua around the idea of "inhabiting the data center" (transposed to a much smaller context, which in this case becomes "inhabiting the servers' cabinet"), this news about servers becoming heaters is entirely related to our project and could certainly be used.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive and a source of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.