Monday, April 11. 2011

-----

by Cord Jefferson

This is going to sound sort of obvious, but here we go: A study from University College London published this week in Current Biology has discovered that there are actually differences in the brains of liberals and conservatives. Specifically, liberals' brains tend to be bigger in the area that deals with processing complex ideas and situations, while conservatives' brains are bigger in the area that processes fear.

According to the report: "We found that greater liberalism was associated with increased gray matter volume in the anterior cingulate cortex, whereas greater conservatism was associated with increased volume of the right amygdala."

People with larger amygdalae respond to perceived threats with more aggression and "are more sensitive to threatening facial expressions." The anterior cingulate cortex, however, "monitors uncertainty and conflict." "Thus," says the report, "it is conceivable that individuals with a larger ACC have a higher capacity to tolerate uncertainty and conflicts, allowing them to accept more liberal views."

The London researchers say they're unsure whether the brain's structure causes political views or is the effect of them. Regardless, this puts the "Obama's a Muslim socialist" fearmongering at Tea Party rallies in a whole new light.

photo (cc) via Flickr user Jon Olav

Friday, April 08. 2011

Via MIT Technology Review

-----

The social network breaks an unwritten rule by giving away plans to its new data center—an action it hopes will make the Web more efficient.

By Tom Simonite


The new data center, in Prineville, Oregon, covers 147,000 square feet and is one of the most energy-efficient computing warehouses ever built.

Credit: Jason Madera

Just weeks before switching on a massive, super-efficient data center in rural Oregon, Facebook is giving away the designs and specifications to the whole thing online. In doing so, the company is breaking a long-established unwritten rule for Web companies: don't share the secrets of your server-stuffed data warehouses.

Ironically, most of those secret servers rely heavily on open source or free software, such as the Linux operating system and the Apache web server. Facebook's move, dubbed the Open Compute Project, aims to kick-start a similar trend with hardware.

"Mark [Zuckerberg] was able to start Facebook in his dorm room because PHP and Apache and other free and open-source software existed," says David Recordon, who helps coordinate Facebook's use of, and contribution to, open-source software. "We wanted to encourage that for hardware, and release enough information about our data center and servers that someone else could go and actually build them."

The attitude of other large technology firms couldn't be more different, says Ricardo Bianchini, who researches energy-efficient computing infrastructure at Rutgers University. "Typically, companies like Google or Microsoft won't tell you anything about their designs," he says. A more open approach could help the Web as a whole become more efficient, he says. "Opening up the building like this will help researchers a lot, and also other industry players," he says. "It's opening up new opportunities to share and collaborate."

The open hardware designs are for a new data center in Prineville, Oregon, that will be switched on later this month. The 147,000-square-foot building will increase Facebook's overall computing capacity by around half; the social network already processes some 100 million new photos every day, and its user base of over 500 million is growing fast.

The material being made available on a new website includes detailed specifications of the building's electrical and cooling systems, as well as the custom designs of the servers inside. Facebook is dubbing the approach "open" rather than open-source because its designs won't be subject to a true open-source legal license, which requires anyone modifying them to share any changes they make.

The plans reveal the fruits of Facebook's efforts to create one of the most energy-efficient data centers ever built. Unlike almost every other data center, Facebook's new building doesn't use chillers to cool the air flowing past the servers. Instead, air from the outside flows over foam pads moistened by water sprays to cool by evaporation. The building is carefully oriented so that prevailing winds direct outside air into the building in both winter and summer.

Facebook's engineers also created a novel electrical design that cuts the number of times that the electricity from the grid is run through a transformer to reduce its voltage en route to the servers inside. Most data centers use transformers to reduce the 480 volts from the nearest substation down to 208 volts, but Facebook's design skips that step. "We run 480 volts right up to the server," says Jay Park, Facebook's director of data-center engineering. "That eliminates the need for a transformer that wastes energy."

To make this possible, Park and colleagues created a new type of server power supply that runs on 277 volts, which can be split off from the 480-volt supply without the need for a transformer. The 480 volts is delivered using a method known as "three-phase power": three wires carry three alternating currents with carefully staggered timings. Tapping one of those wires, measured against the neutral, yields a 277-volt supply.
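The 277-volt figure follows from the geometry of three-phase power. Assuming a balanced wye (star) supply with a neutral, which the article does not state explicitly, the voltage between two phase wires is √3 times the voltage between one phase wire and the neutral, so a 480-volt system gives 480/√3 ≈ 277 volts per phase. The minimal Python sketch below checks this with the formula and by sampling two sine waves offset by 120 degrees; the function names are illustrative only.

```python
import math

def line_to_neutral(v_line_to_line: float) -> float:
    """Phase (line-to-neutral) RMS voltage of a balanced wye supply."""
    return v_line_to_line / math.sqrt(3)

def sampled_line_to_line(v_phase_rms: float, samples: int = 3600) -> float:
    """RMS of the difference between two phases 120 degrees apart, over one cycle."""
    peak = v_phase_rms * math.sqrt(2)
    total = 0.0
    for k in range(samples):
        theta = 2 * math.pi * k / samples
        va = peak * math.sin(theta)
        vb = peak * math.sin(theta - 2 * math.pi / 3)  # second phase lags by 120 degrees
        total += (va - vb) ** 2
    return math.sqrt(total / samples)

phase = line_to_neutral(480)
print(round(phase))                        # 277
print(round(sampled_line_to_line(phase)))  # 480
```

The same relation gives the familiar 120 volts per phase on the 208-volt systems that conventional data centers step down to.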

Park and colleagues also came up with a new design for the backup batteries that keep servers running during power outages before backup generators kick in—a period of about 90 seconds. Instead of building one huge battery store in a dedicated room, many cabinet-sized battery packs are spread among the servers. This is more efficient because the batteries share electrical connections with the computers around them, eliminating the dedicated connections and transformers needed for one large store. Park calculates that his new electrical design wastes about 7 percent of the power fed into it, compared to around 23 percent for a more conventional design.
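To put Park's 7 percent and 23 percent loss figures side by side, here is a small Python sketch of how much power reaches the servers under each, and how much grid input each design needs for the same load. The 1,000 kW figure is an arbitrary round number chosen for illustration, not a Prineville measurement.

```python
def delivered_kw(input_kw: float, loss_fraction: float) -> float:
    """Power reaching the servers after electrical-distribution losses."""
    return input_kw * (1.0 - loss_fraction)

def input_needed_kw(load_kw: float, loss_fraction: float) -> float:
    """Grid input required to deliver a given server load."""
    return load_kw / (1.0 - loss_fraction)

for name, loss in [("Facebook design", 0.07), ("conventional design", 0.23)]:
    print(f"{name}: {delivered_kw(1000, loss):.0f} kW delivered from 1000 kW in, "
          f"{input_needed_kw(1000, loss):.0f} kW needed for a 1000 kW load")
```

With these inputs the new design delivers 930 kW against 770 kW for the conventional one.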

According to the standard measure of data-center efficiency, the power usage effectiveness (PUE) score, Facebook's tweaks have created one of the most efficient data centers ever. A PUE is calculated by dividing a building's total power use by the power used by its computing equipment; a perfect data center would score 1. "Our tests show that Prineville has a PUE of 1.07," says Park. Google, which invests heavily in data-center efficiency, reported an average PUE of 1.13 across all its locations for the last quarter of 2010 (when winter temperatures make data centers most efficient), with the most efficient scoring 1.1.
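The PUE calculation is a single division. The Python sketch below uses hypothetical meter readings chosen to reproduce the scores quoted in the article; everything above 1.0 is overhead spent on cooling, power conversion, lighting and the like.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power over IT equipment power."""
    if it_equipment_kw <= 0:
        raise ValueError("IT equipment power must be positive")
    return total_facility_kw / it_equipment_kw

def overhead_kw(score: float, it_equipment_kw: float) -> float:
    """Non-computing power implied by a PUE score for a given IT load."""
    return (score - 1.0) * it_equipment_kw

print(round(pue(1070, 1000), 2))       # 1.07, the Prineville figure
print(round(overhead_kw(1.07, 1000)))  # 70 kW of overhead per 1000 kW of servers
print(round(overhead_kw(1.13, 1000)))  # 130 kW at Google's reported average
```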

Google and others will now be able to cherry-pick elements from Facebook's designs, but that poses no threat to Facebook's real business, says Frank Frankovsky, the company's director of hardware design. "Facebook is successful because of the great social product, not [because] we can build low-cost infrastructure," he says. "There's no reason we shouldn't help others out with this."

Copyright Technology Review 2011.

Personal comment:

Will efficient and sustainable ways of managing architectural climate and energy use become a byproduct of data centers? They might.

Via MIT Technology Review blog

-----

By Steve Hsu

Brian Christian, author of The Most Human Human, tells interviewer Leonard Lopate what it's like to be a participant in the Loebner Prize competition, an annual version of the Turing Test. See also Christian's article, excerpted below.

Atlantic Monthly: ... The first Loebner Prize competition was held on November 8, 1991, at the Boston Computer Museum. In its first few years, the contest required each program and human confederate to choose a topic, as a means of limiting the conversation. One of the confederates in 1991 was the Shakespeare expert Cynthia Clay, who was, famously, deemed a computer by three different judges after a conversation about the playwright. The consensus seemed to be: “No one knows that much about Shakespeare.” (For this reason, Clay took her misclassifications as a compliment.)

... Philosophers, psychologists, and scientists have been puzzling over the essential definition of human uniqueness since the beginning of recorded history. The Harvard psychologist Daniel Gilbert says that every psychologist must, at some point in his or her career, write a version of what he calls “The Sentence.” Specifically, The Sentence reads like this:

The human being is the only animal that ____.

The story of humans’ sense of self is, you might say, the story of failed, debunked versions of The Sentence. Except now it’s not just the animals that we’re worried about.

We once thought humans were unique for using language, but this seems less certain each year; we once thought humans were unique for using tools, but this claim also erodes with ongoing animal-behavior research; we once thought humans were unique for being able to do mathematics, and now we can barely imagine being able to do what our calculators can.

We might ask ourselves: Is it appropriate to allow our definition of our own uniqueness to be, in some sense, reactive to the advancing front of technology? And why is it that we are so compelled to feel unique in the first place?

“Sometimes it seems,” says Douglas Hofstadter, a Pulitzer Prize–winning cognitive scientist, “as though each new step towards AI, rather than producing something which everyone agrees is real intelligence, merely reveals what real intelligence is not.” While at first this seems a consoling position—one that keeps our unique claim to thought intact—it does bear the uncomfortable appearance of a gradual retreat, like a medieval army withdrawing from the castle to the keep. But the retreat can’t continue indefinitely. Consider: if everything that we thought hinged on thinking turns out to not involve it, then … what is thinking? It would seem to reduce to either an epiphenomenon—a kind of “exhaust” thrown off by the brain—or, worse, an illusion.

Where is the keep of our selfhood?

The story of the 21st century will be, in part, the story of the drawing and redrawing of these battle lines, the story of Homo sapiens trying to stake a claim on shifting ground, flanked by beast and machine, pinned between meat and math.

... In May 1989, Mark Humphrys, a 21-year-old University College Dublin undergraduate, put online an Eliza-style program he’d written, called “MGonz,” and left the building for the day. A user (screen name “Someone”) at Drake University in Iowa tentatively sent the message “finger” to Humphrys’s account—an early-Internet command that acted as a request for basic information about a user. To Someone’s surprise, a response came back immediately: “cut this cryptic shit speak in full sentences.” This began an argument between Someone and MGonz that lasted almost an hour and a half. (The best part was undoubtedly when Someone said, “you sound like a goddamn robot that repeats everything.”)

Returning to the lab the next morning, Humphrys was stunned to find the log, and felt a strange, ambivalent emotion. His program might have just shown how to pass the Turing Test, he thought—but the evidence was so profane that he was afraid to publish it. ...

Via Creative Applications

-----

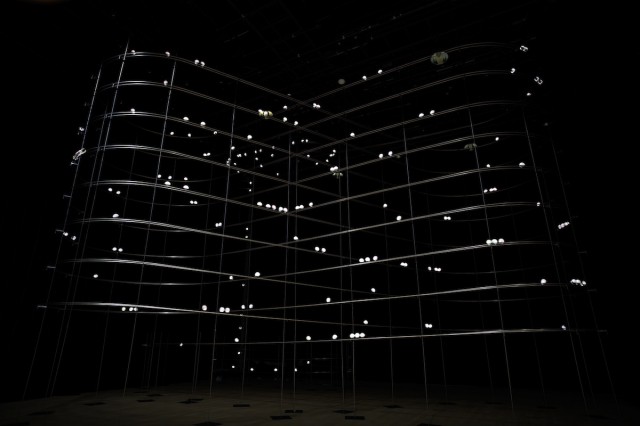

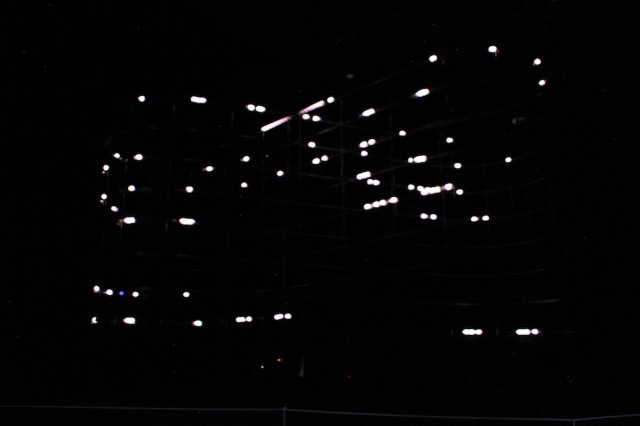

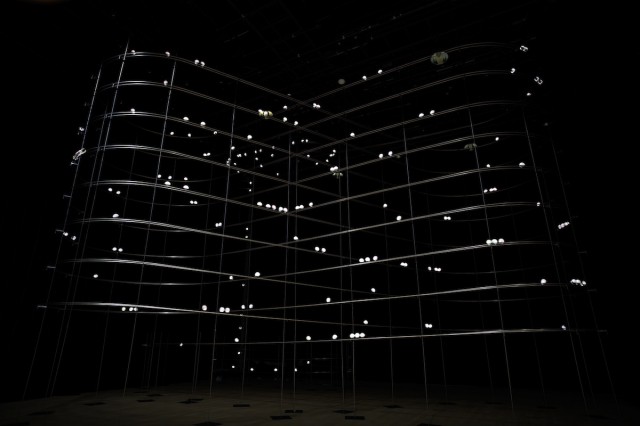

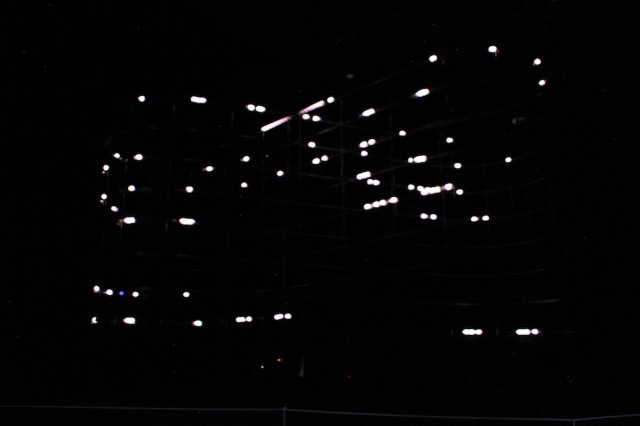

Particles is the latest installation by Daito Manabe and Motoi Ishibashi, currently on exhibit at the Yamaguchi Center for Arts and Media [YCAM]. The installation centers on a spiral-shaped rail construction along which a number of balls with built-in LEDs and XBee transmitters roll while blinking at different intervals, producing spatial drawings of light particles.

This art installation creates a visionary, beautiful pattern of countless blinking points of light that seem to float in all directions through the air. Balls with built-in LEDs pass one after another along a rail in an “8-spiral” shape. Because the balls light up at varying times, we perceive the effect as “light particles floating around.”

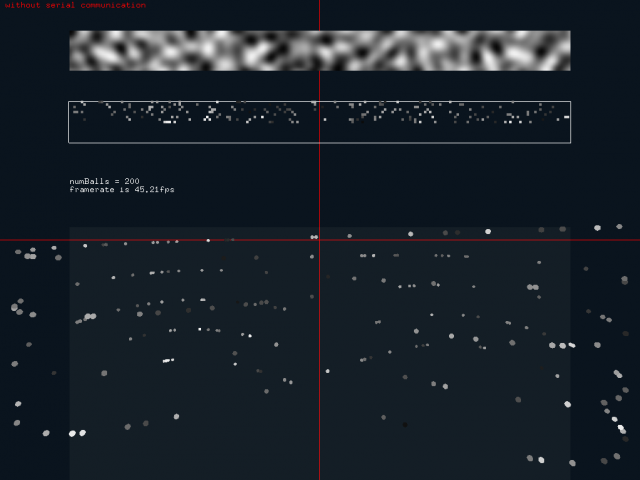

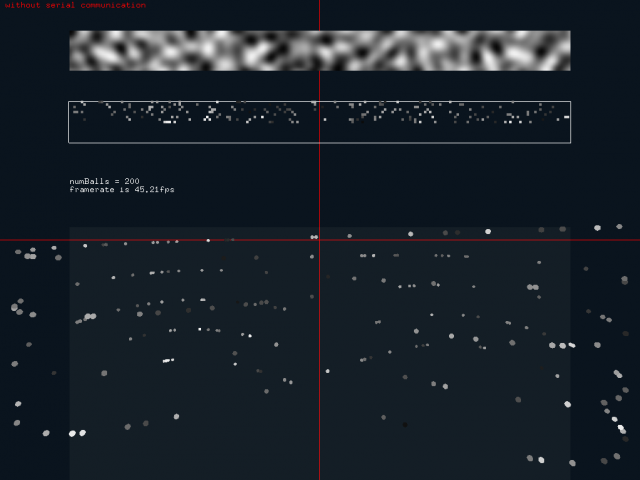

The openFrameworks application controls both the release of the “particles” and their glow, based on information read within the application. The image below shows Perlin noise being translated into particles, giving each one glow and position properties.
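The installation's openFrameworks code is not published here, so the following is only a Python sketch of the general idea: classic one-dimensional gradient (Perlin-style) noise, sampled along a row of particles, is mapped to a glow value between 0 and 1 and to a small positional offset. The noise implementation, the `spacing` and `drift` parameters, and the `particle_properties` helper are all hypothetical.

```python
import math
import random

def fade(t: float) -> float:
    """Perlin's smoothing curve 6t^5 - 15t^4 + 10t^3."""
    return t * t * t * (t * (t * 6 - 15) + 10)

class GradientNoise1D:
    """Classic 1D gradient noise: random slopes at integer lattice points."""
    def __init__(self, seed: int = 0, size: int = 256):
        rng = random.Random(seed)
        self.size = size
        self.grads = [rng.uniform(-1.0, 1.0) for _ in range(size)]

    def __call__(self, x: float) -> float:
        i0 = math.floor(x)
        t = x - i0
        g0 = self.grads[i0 % self.size]
        g1 = self.grads[(i0 + 1) % self.size]
        n0 = g0 * t                       # contribution of the left lattice point
        n1 = g1 * (t - 1.0)               # contribution of the right lattice point
        return n0 + fade(t) * (n1 - n0)   # always within [-0.5, 0.5]

def particle_properties(noise, count: int, time: float,
                        spacing: float = 0.37, drift: float = 0.5):
    """Hypothetical mapping from noise samples to per-particle glow and offset."""
    particles = []
    for i in range(count):
        x = i * spacing + time * drift
        glow = min(1.0, max(0.0, 0.5 + noise(x)))
        offset = noise(x + 100.5)  # a second sample, far away, for position jitter
        particles.append({"glow": glow, "offset": offset})
    return particles

noise = GradientNoise1D(seed=42)
for p in particle_properties(noise, count=5, time=1.0):
    print(f"glow={p['glow']:.2f} offset={p['offset']:+.2f}")
```

Advancing `time` shifts every sample smoothly, so the glow pattern appears to drift along the particles rather than flicker randomly.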

The position of each ball is determined using a total of 17 control points on the rail. Every time a ball passes one of them, that ball’s positional information is transmitted via a built-in infrared sensor. While the ball travels from one control point to the next, its position is calculated from its average speed. The data regulating the balls’ luminescence are divided into control-point segments and switched every time a ball passes a control point.
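The position estimate described above is a form of dead reckoning: each control point gives an exact fix, and between fixes the position is extrapolated from the average speed over the previous segment. A minimal Python sketch, assuming control points are stored as distances along the rail and that each sensor report carries a control-point index and a timestamp; the `BallTracker` class, its fields, and the 0.5 m spacing are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class BallTracker:
    """Dead-reckoning position estimate for one ball (hypothetical structure)."""
    cp_positions: list      # distance of each control point along the rail, in metres
    last_cp: int = 0        # index of the most recently passed control point
    last_time: float = 0.0  # time that control point was passed, in seconds
    avg_speed: float = 0.0  # average speed over the last completed segment

    def on_control_point(self, cp_index: int, t: float) -> None:
        """Exact fix reported when the ball passes a control point."""
        if cp_index > self.last_cp and t > self.last_time:
            distance = self.cp_positions[cp_index] - self.cp_positions[self.last_cp]
            self.avg_speed = distance / (t - self.last_time)
        self.last_cp, self.last_time = cp_index, t

    def position(self, now: float) -> float:
        """Extrapolate from the last fix, never past the next control point."""
        estimate = self.cp_positions[self.last_cp] + self.avg_speed * (now - self.last_time)
        if self.last_cp + 1 < len(self.cp_positions):
            estimate = min(estimate, self.cp_positions[self.last_cp + 1])
        return estimate

    def segment(self) -> int:
        """Current segment index, used to select that segment's LED data."""
        return self.last_cp

# 17 control points spaced 0.5 m apart (the spacing is an assumption).
tracker = BallTracker(cp_positions=[0.5 * i for i in range(17)])
tracker.on_control_point(0, 0.0)
tracker.on_control_point(1, 0.5)  # 0.5 m in 0.5 s, so 1.0 m/s
print(tracker.position(0.75))     # 0.75
print(tracker.segment())          # 1
```

Clamping at the next control point keeps the estimate from overshooting if the ball slows down; the next sensor report then corrects it. The sketch does not handle a ball returning to the start of the rail.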

Visitors can use an interface on the display to select one of several shapes to be drawn in the air. The activation of the virtual balls on the screen is determined by the time at which a ball moving on the rail passes a given checkpoint and by its speed, calculated from average speed values. The sound is generated from the ball positions and the LED flash-pattern data and is played through 8-channel speakers. The board inside each ball is an Arduino-compatible board based on the original Arduino design.

Exhibition page: particles.ycam.jp/en/

Date & Time: March 5 (Sat) – May 5 (Thu), 2011, 10:00–19:00

Venue: Yamaguchi Center for Arts and Media [YCAM] Studio B

Admission free

Images courtesy of Yamaguchi Center for Arts and Media [YCAM]. Photos: Ryuichi Maruo (YCAM).

Thursday, April 07. 2011

Via MIT Technology Review

-----

A novel approach to design and construction could save materials and energy, and create unusually beautiful structures.

By Kevin Bullis


Model maker: Neri Oxman works on “Cartesian Wax: Prototype for a Responsive Skin,” a model that is now part of the permanent collection at the Museum of Modern Art in New York.

Credit: Mikey Siegel

In conventional construction, workers piece together buildings from mass-produced, prefabricated bricks, I-beams, concrete columns, plates of glass and so on. Neri Oxman, an architect and a professor at MIT's Media Lab, intends to print them instead—essentially using concrete, polymers, and other materials in the place of ink. Oxman is developing a new way of designing buildings to take advantage of the flexibility that printing can provide. If she's successful, her approach could lead to designs that are impossible with today's construction methods.

Existing 3-D printers, also called rapid prototyping machines, build structures layer by layer. So far these machines have been used mainly to make detailed plastic models based on computer designs. But as such printers improve and become capable of using more durable materials, including metals, they are becoming a potentially interesting way to make working products.

Oxman is working to extend the capabilities of these machines—making it possible to change the elasticity of a polymer or the porosity of concrete as it's printed, for example—and mounting print heads on flexible robot arms that have greater freedom of movement than current printers.

She's also drawing inspiration from nature to develop new design strategies that take advantage of these capabilities. For example, the density of wood in a palm tree trunk varies, depending on the load it must support. The densest wood is on the outside, where bending stress is the greatest, while the center is porous and weighs less. Oxman estimates that making concrete columns this way—with low-density porous concrete in the center—could reduce the amount of concrete needed by more than 10 percent, a significant savings on the scale of a construction project.
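A rough way to see why grading density pays off: in a circular cross-section, each thin ring at radius r has area proportional to r, so the outer rings, where bending stress is greatest, make up most of the area, and lightening the core saves material without touching them. The Python sketch below integrates an assumed linear density profile rising from a core value to full density at the surface. The linear profile and the 0.7 core value are hypothetical choices for illustration, not Oxman's design or the basis of her estimate.

```python
def relative_material(core_density: float, rings: int = 10_000) -> float:
    """Material in a radially graded circular column, relative to a uniform solid one.

    Density is a fraction of full-strength concrete, rising linearly from
    core_density at the centre to 1.0 at the surface (an assumed profile).
    """
    total = 0.0
    dr = 1.0 / rings                     # radius normalised so that R = 1
    for k in range(rings):
        r = (k + 0.5) * dr               # midpoint radius of this thin ring
        density = core_density + (1.0 - core_density) * r
        total += density * 2.0 * r * dr  # ring area 2*pi*r*dr over full area pi*R^2
    return total

# For this profile the exact answer is 2/3 + core_density/3.
print(f"{relative_material(0.7):.3f}")  # 0.900
```

Under these assumptions a core at 70 percent density saves about 10 percent of the material; a different profile would give a different figure.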

Oxman is developing software to realize her design strategy. She inputs data about physical stresses on a structure, as well as design constraints such as size, overall shape, and the need to let in light into certain areas of a building. Based on this information, the software applies algorithms to specify how the material properties need to change throughout a structure. Then she prints out small models based on these specifications.
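The article does not describe Oxman's algorithms, so the following is only a toy Python sketch of the kind of mapping involved: given stresses sampled over a set of cells, each cell gets a material density that scales linearly with its stress, and cells flagged as needing daylight are made maximally porous where the stress is low enough. The linear rule, the density bounds, and the 0.2 threshold are all hypothetical.

```python
def assign_density(stresses, needs_light, rho_min=0.4, rho_max=1.0, light_threshold=0.2):
    """Map per-cell stress to a material density between rho_min and rho_max.

    stresses: non-negative stress values, one per cell.
    needs_light: booleans, True where the design constraints call for daylight.
    """
    peak = max(stresses, default=0.0)
    densities = []
    for stress, light in zip(stresses, needs_light):
        level = stress / peak if peak > 0 else 0.0  # normalised stress in [0, 1]
        if light and level < light_threshold:
            densities.append(rho_min)               # low-stress window cell: most porous
        else:
            densities.append(rho_min + (rho_max - rho_min) * level)
    return densities

cells = [0.0, 1.0, 5.0, 10.0]
window = [False, True, False, False]
print([round(d, 2) for d in assign_density(cells, window)])  # [0.4, 0.4, 0.7, 1.0]
```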

The early results of her work are so beautiful and intriguing that they've been featured at the Museum of Modern Art in New York and the Museum of Science in Boston. One example, which she calls Beast, is a chair whose design is based on the shape of a human body (her own) and the predicted distribution of pressure on the chair. The resulting 3-D model features a complex network of cells and branching structures that are soft where needed to relieve pressure and stiff where needed for support.

The work is at an early stage, but the new approach to construction and design suggests many new possibilities. A load-bearing wall could be printed in elaborate patterns that correspond to the stresses it will experience, for instance from the load it supports or from wind or earthquakes.

The pattern could also account for the need to allow light into a building. Some areas would have strong, dense concrete, but in areas of low stress, the concrete could be extremely porous and light, serving only as a barrier to the elements while saving material and reducing the weight of the structure. In these non-load-bearing areas, it could also be possible to print concrete that's so porous that light can penetrate, or to mix the concrete gradually with transparent materials. Such designs could save energy by increasing the amount of daylight inside a building and reducing the need for artificial lighting. Eventually, it may be possible to print efficient insulation and ventilation at the same time. The structure can be complex, since it costs no more to print elaborate patterns than simple ones.

Other researchers are developing technology to print walls and other large structures. Behrokh Khoshnevis, a professor of industrial and systems engineering and civil and environmental engineering at the University of Southern California, has built a system that can deposit concrete walls without the need for forms to contain the concrete. Oxman's work would take this another step, adding the ability to vary the properties of the concrete, and eventually work with multiple materials.

The first applications of Oxman's approach will likely be on a relatively small scale, in consumer products and medical devices. She's used her principles to design and print wrist braces for carpal tunnel syndrome. They're customized based on the pain that a particular patient experiences. The approach could also improve the performance of prosthetics.

Oxman, 35, is developing her techniques in partnership with a range of specialists, such as Craig Carter, a professor of materials science at MIT. While he says her approach faces challenges in controlling the properties of materials, he's impressed with her ideas: "There's no doubt that the results are strikingly beautiful."

Copyright Technology Review 2011.
