Wednesday, April 22. 2009

[Image: The Cepheid Variable RS Pup].

Apparently, all those stars out there might be something more than mere heavenly bodies.

Indeed, "the galactic equivalent of the internet," if there is such a thing, might just take the form of manipulated stars. What kind of stars? Cepheid variables, or "stars that vary regularly in brightness."

This regular dimming and brightening could be used as a way both to encode and broadcast information.

From the article:

Crucially, these "Cepheid variables" are so luminous they can be seen as far away as 60 million light years. Jolting the star with a kick of energy – possibly by shooting it with a beam of high-energy particles called neutrinos – could advance the pulsation by causing its core to heat up and expand, [some scientists] say. That could shorten its brightness cycle – just as an electric stimulus to a human heart at the right time can advance a heartbeat. The normal and shortened cycles could be used to encode binary "0"s and "1"s.
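The encoding idea in the quoted passage can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the two cycle lengths below are hypothetical numbers chosen for the example, not values from the article, and the decoder simply compares each observed cycle with the midpoint between them.

```python
# Hypothetical cycle lengths in days; neither value comes from the article.
NORMAL_PERIOD = 10.0     # undisturbed pulsation cycle, read as bit "0"
SHORTENED_PERIOD = 9.0   # cycle advanced by an energy kick, read as bit "1"


def encode(bits: str) -> list[float]:
    """Turn a bit string into the sequence of cycle lengths to broadcast."""
    periods = []
    for bit in bits:
        if bit not in ("0", "1"):
            raise ValueError(f"not a bit: {bit!r}")
        periods.append(SHORTENED_PERIOD if bit == "1" else NORMAL_PERIOD)
    return periods


def decode(periods: list[float]) -> str:
    """Recover the bit string by comparing each observed cycle to the midpoint."""
    threshold = (NORMAL_PERIOD + SHORTENED_PERIOD) / 2
    return "".join("1" if period < threshold else "0" for period in periods)
```

An observer would need to measure each cycle to better than half the difference between the two lengths for this kind of decoding to work, which is why the sketch uses a midpoint threshold rather than exact matching.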

The implication here is that hundreds of stars might already be "a galaxy-spanning internet" put into service by intelligent, nonhuman species.

The print version of the article differs a bit from the online, and I'm quoting the print version here: "There are over 500 cepheids in the Milky Way, and countless more in nearby galaxies, so data could be shuffled around as in a computer network."

Overlooking some of the more basic questions here – such as why on earth is this kind of cannabinoid speculation being printed in a science magazine? – the idea that information is being relayed back and forth, from star to star, as if inside some vast celestial hard drive, raised at least my eyebrows.

What messages, or fragments of messages, might we be witnessing every night?

And if there are no messages, yet we transcribe those flickering astral patterns nonetheless, what unexpected literatures of deep space might we think we've been translating?

-----

Via BLDBLOG

Personal comment:

An information network that is literally interplanetary? Using the light emitted by certain stars to carry information?

Thursday, April 09. 2009

The device harnesses both sunlight and mechanical energy.

By Katherine Bourzac


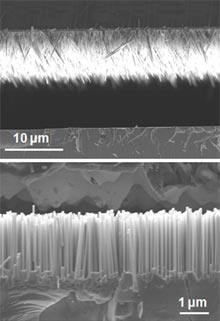

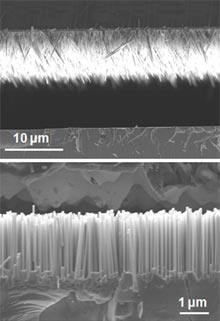

Nano hybrid: A dye-sensitized solar cell (top) and a nanogenerator (bottom) sit on the same substrate in the new device.

Credit: Xudong Wang

Nanoscale generators can turn ambient mechanical energy--vibrations, fluid flow, and even biological movement--into a power source. Now researchers have combined a nanogenerator with a solar cell to create an integrated mechanical- and solar-energy-harvesting device. This hybrid generator is the first of its kind and might be used, for instance, to power airplane sensors by capturing sunlight as well as engine vibrations.

Nanogenerators typically use piezoelectric nanowires--hairlike zinc oxide structures that generate an electrical potential when mechanically stressed--to produce small amounts of power. The first such devices were made by Zhong Lin Wang, a professor at Georgia Tech and director of the institute's Center for Nanostructure Characterization. Wang hopes that nanogenerators will one day eliminate the need for batteries in implantable medical sensors, and will eventually generate enough power to charge up larger personal electronics.

Compared with solar cells, nanogenerators are still a relatively inefficient way of harvesting energy, says Wang, but "sometimes solar energy isn't available." So he collaborated with Xudong Wang, an assistant professor of materials science and engineering at the University of Wisconsin-Madison, to make the new hybrid device.

It combines two previously developed technologies in a layered silicon substrate, both of which rely on zinc oxide nanowires. The top layer consists of a thin-film solar cell embedded with dye-coated zinc oxide nanowires. The large surface area of the nanowires boosts the device's light absorption, a design based on work by Peidong Yang, a professor of chemistry at the University of California, Berkeley. The bottom layer contains Wang's nanogenerator. On the underside of the silicon is a jagged array of polymer-coated zinc oxide nanowires in a toothlike arrangement. When the device is exposed to vibrations, these "teeth" scrape against an underlying array of vertically aligned zinc oxide nanowires, creating an electrical potential.

The solar cell and the nanogenerator are electrically connected by the silicon substrate itself, which acts as both the anode of the solar cell and the cathode of the nanogenerator. It is possible to string together large groups of solar cells and nanogenerators, but having them integrated in a single system takes up less space and is therefore energy efficient. The prototype device can generate 0.6 volts of solar power and 10 millivolts of piezoelectric power. While the prototype device had only one nanogenerator, Wang expects to increase the power output by creating devices with multiple layers of nanogenerators. He says that a likely first application of these devices might be in sensor-laden military aircraft. The U.S. Air Force recently issued a call for research funding proposals related to hybrid energy-scavenging devices.

Charles Lieber, a professor of chemistry at Harvard University, says that Wang's device is "creative" and is, to his knowledge, the first hybrid nanoscale device capable of harvesting two types of energy. "That is particularly important, given that one is light active, while the other can work in the dark," says Lieber. He expects Wang's work to inspire other researchers to focus on hybrid nanogenerator devices, as well as on devices that combine nanogenerators with "complementary nano-enabled power storage."

Copyright Technology Review 2009.

-----

Via MIT Technology Review

A Microsoft project lets a touch screen control other hardware.

By Kate Greene


Halo effect: This MIDI controller is surrounded by virtual controls. Four of the virtual buttons control discrete tasks, including playing or pausing a track. The physical knobs provide finer control of the same function than the four virtual sliders.

Credit: Microsoft Research


Large touch-screen tables have emerged as a useful way for several people to collaborate on projects like video editing or graphic design, but often these tasks require fine controls that can be difficult to simulate on a touch surface with limited resolution. When a person needs precision, it may be best to use a physical controller instead, says Dan Morris, a researcher at Microsoft.

Morris and his colleagues have developed software for touch-screen surfaces that allows physical controls to be added to them. In addition, the software lets people define the functions that each knob, button, and slider on a controller will perform.

The researchers' system, called Ensemble, was presented on Monday at the Computer-Human Interaction (CHI 2009) Conference in Boston. It consists of a custom-made touch table that is two meters long and one meter wide, and several portable sound-editing controllers that connect to the computer that controls the surface. The table is similar to Microsoft's Surface, but larger. As with Surface, cameras underneath the tabletop are used to sense when a user touches the surface or when an object is placed on top of it.

The idea of combining traditional input devices like mice or keyboards with a touch display is not new, but the Microsoft researchers show with Ensemble that it's possible to make hardware do more than a single specified task.

Cameras within the Ensemble table detect a special tag on the bottom of each audio control box to recognize each box and determine its position on the surface. The software then produces an "aura" around each device, including touch-surface controls like "play," "pause," and "stop," and virtual sliders that correspond to physical knobs on the box.

A person can then edit a music track, for example, using both the physical device and the touch-surface controls. The virtual sliders can be used to zoom in on the audio waveform of a track, or to go to a different location on the waveform by panning. The physical knobs on the box perform the same function but offer much finer control. The system also allows a person to change the function of the knobs to, say, control the volume of a trumpet track instead.
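The remapping described above can be pictured as a small lookup table from physical controls to editing functions. The sketch below is not Ensemble's actual software: the control names, function names, and the JSON serialization are all hypothetical, chosen only to show how a knob could be reassigned and how a mapping could be stored for reuse.

```python
import json

# Hypothetical default mapping from physical controls to editing functions.
DEFAULT_MAPPING = {
    "knob_1": "zoom",
    "knob_2": "pan",
    "button_1": "play_pause",
}


def remap(mapping: dict, control: str, function: str) -> dict:
    """Return a copy of the mapping with one control reassigned."""
    if control not in mapping:
        raise KeyError(f"unknown control: {control}")
    updated = dict(mapping)
    updated[control] = function
    return updated


def save_mapping(mapping: dict) -> str:
    """Serialize a mapping to JSON text so it can be stored or shared."""
    return json.dumps(mapping, sort_keys=True)


def load_mapping(text: str) -> dict:
    """Restore a mapping from JSON text."""
    return json.loads(text)
```

For example, `remap(DEFAULT_MAPPING, "knob_1", "trumpet_volume")` gives a new mapping in which the first knob controls a trumpet track's volume, while the default mapping is left unchanged.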

"It's a software mechanism for telling the hardware what to do," says Morris. He explains that once a person has mapped different functions onto the controller, she's able to save it for later or pass it along to someone else who has a similar role in the editing process.

The paper, presented at CHI 2009 by Rebecca Fiebrink, a graduate student at Princeton University, also describes a study examining how people used the interface. Most of the study participants used the physical controls, favoring the accuracy and responsiveness that they offer. However, these participants also made extensive use of surface controls, choosing them mainly for tasks in which a single touch produced a discrete result, such as playing or stopping a track.

Robert Jacob, a professor of electrical engineering at Tufts University, in Medford, MA, says that the researchers "did a nice job of investigating what users actually did when given both [physical controllers and a touch screen] and the opportunity to switch between them."

Jacob, who chaired the session in which the paper was presented, acknowledges that bridging the gap between physical and digital objects can be challenging. "It's a difficult problem with no general solutions, but rather individual interesting designs," he says. "Ideally, you want the benefits of the digital without giving up those of the physical."

While Ensemble was designed for sound editing, its underlying technology could find other applications in graphics, gaming, and visual design, says Morris. "It could be used in scenarios where you want people to collaborate on a surface as a group," he says, but where the resolution of touch surface limits the precision of the virtual controls.

Copyright Technology Review 2009.

-----

Via MIT Technology Review

Personal comment:

Not very convincing yet (see the video: the interaction is very slow... and I would like to see, for once, a genuine "working" collaboration in such a context), but there is something to the idea of this kind of augmented reality, shared among several people and projected around working or editing tools.

Tuesday, April 07. 2009

If you're like us, you're constantly looking for things in your neighborhood, whether it's [restaurants in zurich] or a new [dentist in houston]. If you specify your location in your query, we often show your results on a map. But we've noticed that much of the time users make simpler searches, like [restaurants] or [dentist].

We like to make search as easy as we can, so we've just finished the worldwide rollout of local search results on a map, which will now appear even when you don't type in a location. When you search on Google, we will guess where you are and show results near you.

How do we guess your location? In most cases, we match your IP address to a broad geographical location. You can also specify your likely location using the "Change location" link on the top right corner, above the map. We try to make our guesses as good as they can be so that whether you're shopping for [groceries], [sporting goods] or [flowers], or looking for your [bank], your [gym], or the [post office], you can just say what you want, and we'll try to find it right where you are. You can also search for specific stores or street addresses near you, like [cornelia st cafe] in New York, for example.

Or [111 8th ave] in New York.

We hope this new feature will make it just a little bit easier for you to get where you're going.

Posted by Jenn Taylor and Jim Muller, Software Engineers

-----

Via Official Google Blog

Personal comment:

An evolution of Google Maps that tends to turn the results of every search into something personalized and "localized". This is a tendency, literally a current "trend" in information design. In the same way, the results of a standard Google search are now personalized according to the history of our past searches, saved by Google.

So what we see on our screen is personalized, "individualized". Once again this is a current trend: targeting the individual and singularity (surveillance tends toward this as well). That is "good", but on the other hand we sometimes also need to share the same thing with a large number of people and to be able to compare with others (which is basically what the "mass media" have been until now).

In my opinion, not everything should be individualized (interfaces, search results, content, advertising, etc.), because that creates a feeling of isolation. We need to be able to choose, customize, or "tune" between an individualized version and a mass-media version.

Monday, April 06. 2009

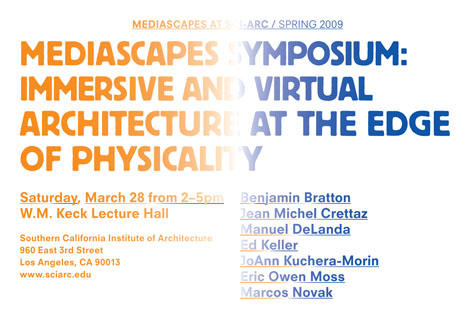

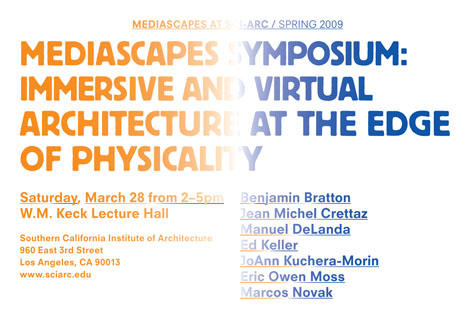

Starting just a few hours from now down at SCI-Arc, on a cloudless 73º day, "seven distinguished architects and theorists" whose designs straddle "the intersection of physical and virtual worlds" will be presenting their work at the Mediascapes Symposium, led by Ed Keller.

The bulk of the afternoon's discussion will encompass "the practice of immersive and virtual architecture, which spans animation and 3D technologies, digital environments, and questions of materiality... asking how these classifications will define our understanding of the relationships between tangible and intangible worlds."

One of today's speakers, Benjamin Bratton, who will also be presenting next week at Postopolis! LA, describes his talk: "Pervasive computing will make inanimate objects see, hear, and comment on our interactions with them. This experience will, in many cases, be indistinguishable from a psychotic break, or from the rituals of classical Animism." That, or it will feel like The Sorcerer's Apprentice.

If you're in LA, be sure to stop by.

Via BLDBLOG

Personal comment:

Some names... active in the LA area around themes that are not unfamiliar to us! Some "usual suspects" (such as Marcos Novak or Eric Owen Moss) and some new suspects (Ed Keller, Benjamin Bratton, etc.).
