Tuesday, March 18. 2014

Airships and atmosphere | #airship #satellite

-----

Airships can patrol the upper atmosphere, monitoring the ground or peering at the stars for a fraction of the cost of satellites, according to a new report. All that’s needed is a prize to kick-start innovation.

The Naval Air Engineering Station in Lakehurst, New Jersey, must be one of the most famous airfields in the world. If you’ve ever watched the extraordinary footage of the German passenger airship Hindenburg catching fire as it attempted to moor, you’ll have seen Lakehurst. That’s where the disaster took place.

Despite its notorious past, Lakehurst is still a center of airship engineering and technology. In 2012, it was home to the Long Endurance Multi-Intelligence Vehicle, an airship designed and built for the U.S. military to use for surveillance purposes over Afghanistan.

The vehicle is colossal: 91 meters long, 34 meters wide, and 26 meters high, about the size of a 30-story office block lying on its side. And it is designed to fly uncrewed at about 10 kilometers for up to three weeks at a time. (Last year, the program was canceled and the airship sold back to the British contractor that built it, which now intends to fly it commercially.)

This ambitious program, and a few others like it mostly funded by the U.S. military, have attracted some jealous glances from scientists. The ability to fly at 20 kilometers or more for extended periods of time could be hugely useful.

Fitted with cameras that scan the ground, sensors that monitor the atmosphere or telescopes that point to the stars, these observatories could revolutionize the kind of data researchers are able to gather about the universe.

Today, Sarah Miller and a few pals have prepared a report for the Keck Institute for Space Studies in Pasadena suggesting that scientists have unnecessarily ignored the advantages of airships and that the time is right for a new era of science based on this capability.

The problem, of course, is that airships capable of these missions have not yet been built. Most of the well-funded development has come from the military for long duration surveillance missions. But with the end of the wars in Iraq and Afghanistan and the downsizing of the U.S. military machine, this funding has dried up.

But Miller and co have a suggestion. They say that innovation in this area could be stimulated by setting up a prize for the development of a next-generation airship, just as the X-Prize stimulated interest in reusable rocket flights. The goal, they say, should be to build a maneuverable, station-keeping airship that can stay aloft at an altitude of more than 20 km for at least 20 hours while carrying a science payload of at least 20 kg.

That’s a significant challenge. One problem will be carrying or generating the power required to propel the airship. This increases with the cube of its airspeed and so will be the biggest drain on the vehicle’s resources.
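
To make the cube law concrete, here is a minimal sketch using the standard aerodynamic drag-power relation P = ½ · ρ · C_d · A · v³. The air density is an approximate standard-atmosphere value for 20 km; the drag coefficient and reference area are purely hypothetical placeholders, not figures from the report.

```python
# Rough illustration of why propulsion power grows with the cube of airspeed.
# All default values below are assumptions for illustration only.

def drag_power_watts(
    airspeed_m_s: float,
    air_density_kg_m3: float = 0.089,   # approx. standard atmosphere at 20 km
    drag_coefficient: float = 0.03,     # hypothetical hull drag coefficient
    reference_area_m2: float = 1000.0,  # hypothetical reference area
) -> float:
    """Power needed to overcome aerodynamic drag at a given airspeed."""
    return 0.5 * air_density_kg_m3 * drag_coefficient * reference_area_m2 * airspeed_m_s ** 3


def power_ratio(new_airspeed_m_s: float, old_airspeed_m_s: float) -> float:
    """How many times more power the new airspeed needs than the old one."""
    return drag_power_watts(new_airspeed_m_s) / drag_power_watts(old_airspeed_m_s)


if __name__ == "__main__":
    # Doubling the airspeed multiplies the required power by 2**3 = 8,
    # whatever the (assumed) hull parameters are.
    print(power_ratio(20.0, 10.0))
```

Whatever the real hull parameters turn out to be, the ratio depends only on the speeds, which is why holding station against stronger winds gets expensive so quickly.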

Another challenge is to handle the thermal loads at this altitude, where temperatures can vary by as much as 50 °C and where there is little air to carry heat away.

But none of these problems look like showstoppers. Given the right kind of incentives, it should be possible to put one of these things in the air in the very near future, perhaps based on the technology developed for vehicles like the Long Endurance Multi-Intelligence Vehicle.

All that’s needed is a sponsor willing to cough up a few million dollars for a prize. Anybody with a few bucks to spare?

Ref: arxiv.org/abs/1402.6706 : AIRSHIPS : A New Horizon for Science.

Personal comment:

Given the now pressing need to monitor our human-transformed atmosphere... (and not only to provide universal Internet access through private companies, a fully artificial need), the creation of such blimp-drones would be interesting. Yet I totally disagree with the idea that this should be a private initiative. It is crucial, to my understanding, that it remains public, in the hands of the public and driven by public technology (including the monitored data).

If this isn't the case, what I foresee is the commodification of the air and upper atmosphere, just as human interactions have been commodified through social networks (you know, the "data problem") or as many slightly modified genes are in the process of being. We can see the very problematic outcomes of this "neo-liberal only" attitude today, with data and our (digital) selves being sold and turned into products.

So, please, let's keep it a public infrastructure.

Friday, February 28. 2014

Big Data, Big Questions | #smart? #data #monitoring

It looks like managing a "smart" city is similar to a moon mission! IBM Intelligent Operations Center in Rio de Janeiro.

Via Metropolis

-----

IBM, INTELLIGENT OPERATIONS CENTER, RIO DE JANEIRO

At the Intelligent Operations Center in Rio, workers manage the city from behind a giant wall of screens, which beam them data on how the city is doing, from the level of water in a street following a rainstorm to a recent mugging or a developing traffic jam. As the home to both the 2014 World Cup and the 2016 Olympics, the city hopes to prove it can be in control of itself, even under pressure. And IBM hopes to prove the power of its new Smarter Cities software to a global audience.

And an interesting post, long and detailed (including on recent IBM, CISCO, and Siemens "solutions" and operations), about smart cities in the same article, by Alex Marshall:

"The smart-city movement spreading around the globe raises serious concerns about who controls the information, and for what purpose."

More about it HERE.

Wednesday, February 26. 2014

Picture Piece: Cybersyn, Chile 1971-73 | #cybernetic #history

Three years ago we published a post by Nicolas Nova about Salvador Allende's project Cybersyn: an attempt to build a cybernetic society (including feedback from the Chilean population) back in the early 70s.

Here is another article and picture piece about this amazing project on Frieze. You'll need to buy the magazine to see the pictures, though!

-----

Via Frieze

Photograph of Cybersyn, Salvador Allende's attempt to create a 'socialist internet, decades ahead of its time'

This is a tantalizing glimpse of a world that could have been our world. What we are looking at is the heart of the Cybersyn system, created for Salvador Allende’s socialist Chilean government by the British cybernetician Stafford Beer. Beer’s ambition was to ‘implant an electronic nervous system’ into Chile. With its network of telex machines and other communication devices, Cybersyn was to be – in the words of Andy Beckett, author of Pinochet in Piccadilly (2003) – a ‘socialist internet, decades ahead of its time’.

Capitalist propagandists claimed that this was a Big Brother-style surveillance system, but the aim was exactly the opposite: Beer and Allende wanted a network that would allow workers unprecedented levels of control over their own lives. Instead of commanding from on high, the government would be able to respond to up-to-the-minute information coming from factories. Yet Cybersyn was envisaged as much more than a system for relaying economic data: it was also hoped that it would eventually allow the population to instantaneously communicate its feelings about decisions the government had taken.

In 1973, General Pinochet’s CIA-backed military coup brutally overthrew Allende’s government. The stakes couldn’t have been higher. It wasn’t only that a new model of socialism was defeated in Chile; the defeat immediately cleared the ground for Chile to become the testing-ground for the neoliberal version of capitalism. The military takeover was swiftly followed by the widespread torture and terrorization of Allende’s supporters, alongside a massive programme of privatization and de-regulation. One world was destroyed before it could really be born; another world – the world in which there is no alternative to capitalism, our world, the world of capitalist realism – started to emerge.

There’s an aching poignancy in this image of Cybersyn now, when the pathological effects of communicative capitalism’s always-on cyberblitz are becoming increasingly apparent. Cloaked in a rhetoric of inclusion and participation, semio-capitalism keeps us in a state of permanent anxiety. But Cybersyn reminds us that this is not an inherent feature of communications technology. A whole other use of cybernetic systems is possible. Perhaps, rather than being some fragment of a lost world, Cybersyn is a glimpse of a future that can still happen.

Shall we dance Tango? (for Google) | #monitoring #data

Seen everywhere online these days and now on | rblg too... Yet another "trojan horse" by Google to turn you into a mobile and indoor sensor for their own sake (data collection, if I may say so). And soon we will be able to visit your flat or those of your friends through Google Maps/Earth, or through a constellation of other applications. After clicking at the door, of course.

But also, as is often the case with such devices, an interesting tool as well... On top of which disruptive apps will be built that will further mix material and immaterial experiences and that will further locate parts of your "home" into "clouds".

As it consists of an open call for ideas, before they give away 200 dev kits, don't hesitate to drop them a line if you have an unexpected one (this promises to be very competitive...)!

Link to the project and call HERE.

-----

*An Android unit with registration.

http://www.google.com/atap/projecttango/

(…)

“What is it?

“Our current prototype is a 5” phone containing customized hardware and software designed to track the full 3D motion of the device, while simultaneously creating a map of the environment. These sensors allow the phone to make over a quarter million 3D measurements every second, updating its position and orientation in real-time, combining that data into a single 3D model of the space around you.

“It runs Android and includes development APIs to provide position, orientation, and depth data to standard Android applications written in Java, C/C++, as well as the Unity Game Engine. These early prototypes, algorithms, and APIs are still in active development. So, these experimental devices are intended only for the adventurous and are not a final shipping product….”

Thursday, December 05. 2013

Why Is Google Buying So Many Robot Startups? | #robotics

-----

Forget robotic product delivery. As usual for Google, I suspect it’s all about the data.

Google-bot: The M1 Mobile Manipulator from Meka, one of several robot companies acquired recently by Google.

Google has quietly bought seven robotics companies, and has given Andy Rubin, the man who originally led the Android project, the job of developing Google’s first robot army. And so, the New York Times suggests it might only be a few years before a Google robot driving in a Google car is delivering products to your door.

I somehow doubt Google has anything quite so futuristic in mind. I think the effort is quite similar to both Google’s self-driving car endeavor and its Android project. In other words, it’s all about gaining a dominant position in markets where data is about to explode.

Take Google’s self-driving cars. Contrary to common perception, the company didn’t “invent” this technology; most carmakers were already working on autonomous systems when Google got involved, and academic researchers had made dramatic recent progress, propelled in large part by several DARPA challenges (see “Driverless Cars are Further Away than You Think”). Google just saw that this was where the automotive industry was headed, and realized that the advent of automation, telematics, and communication would mean a tsunami of data that it could both supply and profit from. Given that many of us spend several hours a day in automobiles, this data could help Google learn more about users and tailor its products accordingly.

Similarly, I suspect Google has recognized that a new generation of smarter, safer, industrial robots is rapidly emerging (see “This Robot Could Transform Manufacturing” and “Why This Might be the Model-T of Workplace Robots”), and it’s realized that these bots could have a huge impact both at work and at home. Whoever provides the software that controls and manages these robots not only stands to make a fortune by selling that software; they will have access to a vast new repository of data about how we live and work.

In this sense, I think Google is being true to its stated mission: “to organize the world’s information”—although it’s worth noting that in an increasingly connected and data-rich world that could mean seeking to organize just about every aspect of our lives. Luckily for Google, it may soon have a robot army to help it keep everything in order.

Thursday, October 03. 2013

Urban Sensor Hack as Maker sessions

Note: Makezine is currently running a useful online program (tutorials, explorations, projects, etc., Sept. 24 - Oct. 15) about the "make" approach that is typical of the magazine, this time linked to the "civic" use of urban sensors. Obviously, we should quickly multiply these kinds of initiatives to offer alternative approaches if we don't want to end up in big corporate, monetized, monitored cities...

Via Makezine

-----

- Join our Urban Sensor Hacks Google+ community and connect with makers from all over who are exploring the world around them using off-the-shelf tech and their own ingenuity.

- Discover how sensor-based applications help us understand the urban environment and how people interact within it.

- Learn how sensor platforms make it easy and affordable to build and deploy numerous sensors in urban areas.

- Get started creating sensor-based applications to experiment and learn about the world you live in.

Upcoming online sessions:

10/3 – Sean Montgomery, Kipp Bradford – Bio-Sensing: Feeling the Pulse of a City. At the heart of urban life are people — what they do and communicate, how they think and feel. Bio-sensing is opening a window into people’s behaviors and motivations in a way that will change nearly every aspect of our lives from health to education to retail experience. Learn how you can hack the bio-sensing revolution and change the way you look at yourself and people around you.

10/8 – Tim Dye, Michael Heimbinder, Iem Heng, Raymond Yap – Join the AirCasting crew as they guide you through a step by step process for building your own air quality monitor, discuss the challenges involved in achieving accurate measurements, and detail their work with grassroots groups and schools to conduct environmental monitoring and advance STEAM education.

10/10 – Tomas Diez – Smart Citizen: The largest crowdsourced sensor platform and community on earth. How can we use the information that is surrounding us to improve our cities? Can I become a sensor in my city? Can communities make their neighbourhoods better by sensing and acting in their environment? Smart Citizen tries to tackle these questions by developing an open source and easy-to-use sensor kit connected with an online platform and mobile app. The project starts with environmental sensors to capture data about air pollution, sound, temperature and humidity in the urban environment, but will grow to more applications in relation with energy, agriculture, health, and its use in the Internet of Things ecosystem. More about Smart Citizen.

About Maker Sessions:

Making and hacking: Live online events using a Google Plus community to bring together makers online and at physical locations for hacking and making. Maker Sessions are organized around a theme or a purpose – to look at technologies that enable new applications and to encourage people of all skill levels and interests to participate in the development of ideas and applications.

Hacking the hackathon: Bring makers together where they live and work – at home, at a university or at makerspaces. Explore opportunities to do something cool – something that perhaps nobody else is doing. Learn from master makers about an application area and discover cool maker projects.

Friday, September 20. 2013

How Mechanical Turkers Crowdsourced a Huge Lexicon of Links Between Words and Emotion

-----

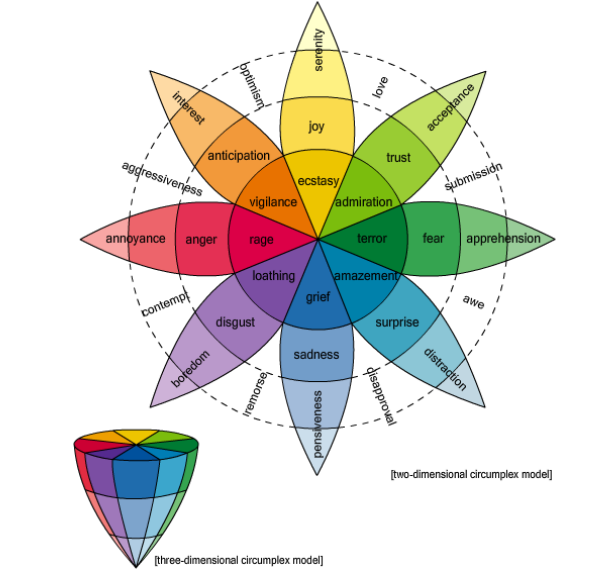

Sentiment analysis on the social web depends on how a person’s state of mind is expressed in words. Now a new database of the links between words and emotions could provide a better foundation for this kind of analysis.

One of the buzzphrases associated with the social web is sentiment analysis. This is the ability to determine a person’s opinion or state of mind by analysing the words they post on Twitter, Facebook or some other medium.

Much has been promised with this method: the ability to measure satisfaction with politicians, movies and products; the ability to better manage customer relations; the ability to create dialogue for emotion-aware games; the ability to measure the flow of emotion in novels; and so on.

The idea is to entirely automate this process, analysing the firehose of words produced by social websites using advanced data mining techniques to gauge sentiment on a vast scale.

But all this depends on how well we understand the emotion and polarity (whether negative or positive) that people associate with each word or combinations of words.

Today, Saif Mohammad and Peter Turney at the National Research Council Canada in Ottawa unveil a huge database of words and their associated emotions and polarity, which they have assembled quickly and inexpensively using Amazon’s crowdsourcing Mechanical Turk website. They say this crowdsourcing mechanism makes it possible to increase the size and quality of the database quickly and easily.

Most psychologists believe that there are essentially six basic emotions (joy, sadness, anger, fear, disgust, and surprise), or at most eight if you include trust and anticipation. So the task of any word-emotion lexicon is to determine how strongly a word is associated with each of these emotions.

One way to do this is to use a small group of experts to associate emotions with a set of words. One of the most famous databases, created in the 1960s and known as the General Inquirer database, has over 11,000 words labelled with 182 different tags, including some of the emotions that psychologists now think are the most basic.

A more modern database is the WordNet Affect Lexicon, which has a few hundred words tagged in this way. This used a small group of experts to manually tag a set of seed words with the basic emotions. The size of this database was then dramatically increased by automatically associating the same emotions with all the synonyms of these words.

One of the problems with these approaches is the sheer time it takes to compile a large database, so Mohammad and Turney tried a different approach.

These guys selected about 10,000 words from an existing thesaurus and the lexicons described above and then created a set of five questions to ask about each word that would reveal the emotions and polarity associated with it. That’s a total of over 50,000 questions.

They then asked these questions to over 2000 people, or Turkers, on Amazon’s Mechanical Turk website, paying 4 cents for each set of properly answered questions.

The result is a comprehensive word-emotion lexicon for over 10,000 words or two-word phrases which they call EmoLex.

One important factor in this research is the quality of the answers that crowdsourcing gives. For example, some Turkers might answer at random or even deliberately enter wrong answers.

Mohammad and Turney have tackled this by inserting test questions that they use to judge whether or not the Turker is answering well. If not, all the data from that person is ignored.

They tested the quality of their database by comparing it to earlier ones created by experts and say it compares well. “We compared a subset of our lexicon with existing gold standard data to show that the annotations obtained are indeed of high quality,” they say.

This approach has significant potential for the future. Mohammad and Turney say it should be straightforward to increase the size of the database and that the same technique can be easily adapted to create similar lexicons in other languages. And all this can be done very cheaply: they spent $2100 on Mechanical Turk in this work.

The bottom line is that sentiment analysis can only ever be as good as the database on which it relies. With EmoLex, analysts have a new tool for their box of tricks.

Ref: arxiv.org/abs/1308.6297: Crowdsourcing a Word-Emotion Association Lexicon.
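
The quality-control step described above (test questions, then discarding everything from a Turker who fails them) can be sketched roughly as follows. This is only an illustration: the data layout, the 0.8 accuracy threshold, the majority-vote aggregation and the function names are all assumptions of this sketch, not details taken from the paper.

```python
from collections import Counter, defaultdict

# answers: {worker_id: {question_id: answer}}
# gold:    {question_id: known_correct_answer}  (the inserted test questions)


def filter_workers(answers, gold, min_accuracy=0.8):
    """Keep only workers who answer the test questions well enough.

    The 0.8 threshold is a hypothetical value chosen for illustration.
    """
    kept = {}
    for worker, responses in answers.items():
        checked = [q for q in responses if q in gold]
        if not checked:
            # Assumption: a worker who saw no test question cannot be judged,
            # so their data is left out as well.
            continue
        correct = sum(responses[q] == gold[q] for q in checked)
        if correct / len(checked) >= min_accuracy:
            kept[worker] = responses
    return kept


def majority_labels(kept, gold):
    """Aggregate the surviving answers per question by simple majority vote."""
    votes = defaultdict(Counter)
    for responses in kept.values():
        for question, answer in responses.items():
            if question not in gold:  # test questions are not part of the lexicon
                votes[question][answer] += 1
    return {q: counts.most_common(1)[0][0] for q, counts in votes.items()}


if __name__ == "__main__":
    gold = {"test-1": "joy"}
    answers = {
        "turker-a": {"test-1": "joy", "word-1": "fear"},
        "turker-b": {"test-1": "anger", "word-1": "joy"},  # fails the test question
        "turker-c": {"test-1": "joy", "word-1": "fear"},
    }
    kept = filter_workers(answers, gold)
    print(sorted(kept))
    print(majority_labels(kept, gold))
```

Dropping a failing worker entirely, rather than only their wrong answers, mirrors the "all the data from that person is ignored" rule in the article.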

Monday, August 12. 2013

For a digital "habeas corpus", Eric Sadin

Via ericsadin.org via Le Monde

-----

Following all these recent posts about the NSA, algorithms, surveillance/monitoring, etc., I take the opportunity here to reblog what our very good friend Eric Sadin published in Le Monde last month on this question (the original article is in French; it followed the publication of Eric's new book: L'humanité augmentée - L'administration numérique du monde -, éd. L'échappée). It is also an occasion to recall that we worked together on this topic last year, on Globale surveillance, which will be on stage again later this year!

On June 5, Glenn Greenwald, a columnist for the British daily The Guardian, revealed on his blog that the U.S. National Security Agency (NSA) had unlimited access to data from Verizon, one of the main American telephone operators and Internet service providers. A copy of the confidential court order was published, attesting to the obligation imposed on the company to hand over the detailed call records of its subscribers. "This document shows for the first time that, under the Obama administration, the communication data of millions of citizens is being collected indiscriminately and in bulk, whether or not they are suspects," he commented on the same page.

The very next day, in an article co-written with a Guardian journalist, Glenn Greenwald reported other decisive facts: nine of the biggest American Internet players (Google, Facebook, Microsoft, Apple, Yahoo, AOL, YouTube, Skype and PalTalk) were said to give the FBI (the federal police) and the NSA direct access to their users' data, through a highly sophisticated system named "Prism", reportedly in regular use since 2007. This confidential information was originally supplied by Edward Snowden, a young computer specialist and former employee of the Central Intelligence Agency (CIA) who worked for various NSA subcontractors. The "whistleblower" asserts that such practices endanger privacy, and that he knowingly took on his duty as a citizen to disclose them, despite the risk of prosecution. The companies implicated immediately denied this version, while nonetheless suggesting that negotiations are under way to create a "viable" cooperative framework drawn up by mutual agreement.

These disclosures immediately prompted a flood of comments of all kinds, concerning the scale of the information intercepted by the intelligence agencies as much as the pressing need to preserve individual liberties.

While many grey areas and uncertainties remain as to the methods used and the involvement of each of the actors, these affairs plainly confirm, in the eyes of the world, that one of today's crucial issues is the thorny question of the collection and use of personal data. These events, if confirmed in their initial version, are eminently reprehensible; nevertheless, for my part, I want to see in them only a form of "banality of contemporary surveillance", which in practice unfolds at every moment, in every place and in various forms, most of the time facilitated by our own consent.

For what is at stake here is not a matter of isolated facts, mainly "localized" in the United States and carried out by government bodies alone, at which one should take offense as the successive revelations come out. They must be grasped in their symptomatic value, as examples of phenomena that are now globalized, made possible by the conjunction of three heterogeneous and concomitant factors. A kind of "culture broth" that formed around the beginning of the first decade of the 21st century, and that "authorized" the ever-growing extension of surveillance procedures, on a scale and to a depth without historical precedent.

First, it is the uninterrupted expansion of digital technology since the early 1980s, later combined with telecommunication networks, that made possible around the mid-1990s the generalization of the Internet, that is, globalized interconnection in real time.

Next, the intensification of economic competition encouraged the establishment of aggressive marketing strategies, seeking to capture and penetrate consumer behavior ever more deeply, an objective made easier by the growing dissemination of data through the tracking of browsing, or of purchases made with credit or loyalty cards.

Finally, the events of September 11, 2001 helped amplify an undifferentiated security profiling of the greatest possible number of individuals. What is now called "Big Data", that is, the profusion of data scattered by bodies and things, in a way replaces the single, omnipotent figure of Big Brother with a scattered fragmentation of servers and organizations, which together and separately contribute to refining knowledge about people, with a view to a multiplicity of functions exploited primarily for security or commercial purposes.

The carnal, tactile or quasi-umbilical bond that we maintain with our miniaturized digital prostheses, particularly emblematic in the smartphone, overturns the historical conditions of human experience. Geolocation built into devices transforms or "widens" our sensory apprehension of space; portability exposes a form of ubiquity, inducing a perception of duration at the pace of the electronic flows received and transmitted; applications process magmas of information at speeds infinitely greater than those of our cerebral capacities, and are endowed with miraculous cognitive and suggestive powers, which little by little bend the curve of our daily lives.

A new mode of intelligibility of the real has gradually taken shape, founded on generalized transparency, which reduces the share of emptiness separating beings from one another and beings from things. A "digital-cognitive turn" brought about by the growing intelligence acquired by technology, now capable of assessing situations, alerting, suggesting and henceforth making decisions in our place (as with the recent Google Car prototype, or high-frequency trading).

The generalized movement to digitize the world, of which Google is the main lever, aims to establish an "algorithmic rationalization of existence", to duplicate the real, housed within highly secured "server farms", in order to quantify it and steer it continuously for the purposes of security, commercial, therapeutic or relational "optimization". An anthropological rupture is currently taking shape through our condition of being interconnected on all sides and robotically assisted, a condition that has spread so quickly that it has prevented us from grasping its civilizational significance.

The recent revelations bring to light a crucial issue of our time, which our societies as a whole must actively confront, and which in my view must involve three categorical ethical imperatives. The first consists in drawing up viable laws at the national and international levels, aiming to set limits and to make as transparent as possible the processes at work, which are often hidden from our perception.

These are complex dimensions, first because technical developments and economic logics unfold at speeds that exceed that of democratic deliberation, and second because geopolitical power relations tend to slow down or curb any inclination toward transnational harmonization (see on this subject the recent American lobbying offensive seeking to thwart or prevent the implementation of a project aiming to improve the protection of Europeans' personal data: The Data Protection Regulation - DPR - ).

The second calls for a duty to teach and learn computer science disciplines at school and at university, aiming to convey "from the inside" an understanding of the complex workings of code, algorithms and systems. A measure likely to position every citizen as an active craftsman of his or her digital life, like certain hackers who call for understanding the processes at work, for reappropriating devices or inventing singular ones, with a view to "free" and shared uses in full knowledge of the matter.

The third mobilizes the crucial issue of maintaining a form of "pooled watchfulness" with regard to protocols and our practices, through citizen initiatives taking up these questions in multiple forms, in order to expose them in the public domain as successive developments and innovations occur. We must hope that the admirable courage of Edward Snowden or the remarkable tenacity of Glenn Greenwald herald a "globalized spring", destined to make the fields of our individual and collective consciousness flourish on all sides and for the better.

Let us quote the words spoken by Barack Obama at his press conference of June 7: "I think it's important to recognize that you can't have 100 percent security and also then have 100 percent privacy and zero inconvenience. You know, we're going to have to make some choices as a society." Duly noted, and without further delay.

In light of the announced incorporation of electronic chips inside our biological tissues, which would then bear witness, without spatio-temporal interruption, to the entirety of our gestures and the nature of our relationships, establishing a "digital habeas corpus" is without doubt a major civilizational issue of our time.

Eric Sadin

© Le Monde

Wednesday, August 07. 2013

Massive NSA data center will use 1.7 million gallons of water a day

Via Treehugger

-----

By Megan Treacy

Undeniably one of the biggest stories of the year has been the leak about the NSA PRISM program, which has been monitoring American citizens' communications. Many people have been appalled by this revelation, but it turns out there is an environmentally appalling part of this spying program too. More details have been released about NSA's new Intelligence Community Comprehensive National Cybersecurity Initiative Data Center, otherwise known as that massive data center being built by the agency in Bluffdale, Utah.

Turns out that collecting tons of information in the form of phone calls, emails and web searches is an energy and water-hungry business. According to reports, the one million square-foot facility will house 100,000 square feet of data-storing servers and will use 1.7 million gallons of water per day to keep those servers cool.

The data center will account for one percent of all water use in the area and the city of Bluffdale is looking for additional water sources for when the facility is finished in September.

It won't be an energy-sipper either, but that was obvious from the size of the place. The facility will require 65 megawatts of power, which is the equivalent of 65,000 homes. It will have its own power substation and back-up diesel power generators.
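
As a quick sanity check on these figures, the arithmetic can be spelled out as below. The numbers are the ones quoted in the article; the only outside value is the standard US gallon-to-liter conversion, and the function names are made up for this sketch.

```python
# Back-of-the-envelope check of the figures quoted in the article.

LITERS_PER_US_GALLON = 3.785411784  # exact definition of the US liquid gallon


def watts_per_home(facility_megawatts: float, homes: int) -> float:
    """Average power per home implied by the 'equivalent homes' comparison."""
    return facility_megawatts * 1_000_000 / homes


def gallons_to_liters(gallons: float) -> float:
    """Convert US gallons to liters."""
    return gallons * LITERS_PER_US_GALLON


if __name__ == "__main__":
    # 65 MW spread over 65,000 homes implies about 1 kW of average draw per home.
    print(watts_per_home(65, 65_000))
    # 1.7 million gallons per day is roughly 6.4 million liters per day.
    print(round(gallons_to_liters(1_700_000)))
```

So the "65,000 homes" comparison assumes an average household draw of about one kilowatt, and the daily cooling water comes to roughly 6.4 million liters.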

The crazy thing is that this gigantic data center isn't quite enough. The NSA is also building another data center in Fort Meade, Maryland that will be two-thirds the size of the mega center, but that's still pretty darn big.

Thursday, July 25. 2013

Using a Smartphone’s Eyes and Ears to Log Your Every Move

Via MIT Technology Review via @chrstphggnrd

-----

New tricks will enable a life-logging app called Saga to figure out not only where you are, but what you’re doing.

By Tom Simonite

Having mobile devices closely monitoring our behavior could make them more useful, and open up new business opportunities.

Many of us already record the places we go and things we do by using our smartphone to diligently snap photos and videos, and to update social media accounts. A company called ARO is building technology that collects a more comprehensive, automatic record of your life.

ARO is behind an app called Saga that automatically records every place that a person goes. Now ARO’s engineers are testing ways to use the barometer, cameras, and microphones in a device, along with a phone’s location sensors, to figure out where someone is and what they are up to. That approach should debut in the Saga app in late summer or early fall.

The current version of Saga, available for Apple and Android phones, automatically logs the places a person visits; it can also collect data on daily activity from other services, including the exercise-tracking apps FitBit and RunKeeper, and can pull in updates from social media accounts like Facebook, Instagram, and Twitter. Once the app has been running on a person’s phone for a little while, it produces infographics about his or her life; for example, charting the variation in times when they leave for work in the morning.

Software running on ARO’s servers creates and maintains a model of each user’s typical movements. Those models power Saga’s life-summarizing features, and help the app to track a person all day without requiring sensors to be always on, which would burn too much battery life.

“If I know that you’re going to be sitting at work for nine hours, we can power down our collection policy to draw as little power as possible,” says Andy Hickl, CEO of ARO. Saga will wake up and check a person’s location if, for example, a phone’s accelerometer suggests he or she is on the move; and there may be confirmation from other clues, such as the mix of Wi-Fi networks in range of the phone. Hickl says that Saga typically consumes around 1 percent of a device’s battery, significantly less than many popular apps for e-mail, mapping, or social networking.

That consumption is low enough, says Hickl, that Saga can afford to ramp up the information it collects by accessing additional phone sensors. He says that occasionally sampling data from a phone’s barometer, cameras, and microphones will enable logging of details like when a person walked into a conference room for a meeting, or when they visit Starbucks, either alone or with company.
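The wake-up policy Hickl describes (stay idle until the accelerometer suggests movement, with the mix of visible Wi-Fi networks as a confirming clue) can be sketched roughly as follows. This is only an illustration, not ARO's actual code: the function names, the thresholds, and the choice to wake on either signal are all assumptions made for the example.

```python
from statistics import pstdev

# Hypothetical thresholds, chosen only for illustration.
MOTION_STDDEV_THRESHOLD = 0.5    # m/s^2, spread of accelerometer magnitudes
WIFI_SIMILARITY_THRESHOLD = 0.5  # Jaccard similarity of visible networks


def jaccard(a, b):
    """Jaccard similarity of two collections; two empty ones count as identical."""
    a, b = set(a), set(b)
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def should_check_location(accel_magnitudes, wifi_before, wifi_now):
    """Return True when cheap signals suggest the user has moved.

    accel_magnitudes: recent accelerometer magnitudes in m/s^2 (non-empty).
    wifi_before / wifi_now: names of networks seen at the last check and now.
    """
    # A phone at rest reports an almost constant magnitude (gravity only).
    moving = pstdev(accel_magnitudes) > MOTION_STDDEV_THRESHOLD
    # A very different set of visible networks hints at a new place.
    wifi_changed = jaccard(wifi_before, wifi_now) < WIFI_SIMILARITY_THRESHOLD
    # Assumption: either signal is enough to wake the costly location sensors.
    return moving or wifi_changed
```

Only when `should_check_location` returns True would the app pay for a GPS or network location fix; the rest of the time it would rely on the server-side model of the user's typical movements.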

The Android version of Saga recently began using the barometer present in many smartphones to distinguish locations close to one another. “Pressure changes can be used to better distinguish similar places,” says Ian Clifton, who leads development of the Android version of ARO. “That might be first floor versus third floor in the same building, but also inside a vehicle versus outside it, even in the same physical space.”
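To give an idea of why a barometer can separate the first floor from the third, here is a minimal sketch that converts two pressure readings into a height difference using the standard international barometric formula. It is not Saga's method, which the article does not detail. The 3-meter floor height and the function names are assumptions made for the example.

```python
SEA_LEVEL_HPA = 1013.25   # standard atmosphere reference pressure
FLOOR_HEIGHT_M = 3.0      # assumed typical storey height, for illustration


def altitude_m(pressure_hpa, reference_hpa=SEA_LEVEL_HPA):
    """Approximate altitude in meters from pressure (international barometric formula)."""
    return 44330.0 * (1.0 - (pressure_hpa / reference_hpa) ** (1.0 / 5.255))


def floors_between(pressure_a_hpa, pressure_b_hpa):
    """Estimate how many floors reading B is above (+) or below (-) reading A."""
    height_diff = altitude_m(pressure_b_hpa) - altitude_m(pressure_a_hpa)
    return round(height_diff / FLOOR_HEIGHT_M)
```

Near sea level, pressure falls by roughly 0.12 hPa per meter climbed, so a difference of two floors shows up as a change of well under 1 hPa. Only the difference between two readings taken close together in time is meaningful here, since absolute pressure also drifts with the weather.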

ARO is internally testing versions of Saga that sample light and sound from a person’s environment. Clifton says that using a phone’s microphone to collect short acoustic fingerprints of different places can be a valuable additional signal of location, and allow inferences about what a person is doing. “Sometimes we’re not sure if you’re in Starbucks or the bar next door,” says Clifton. “With acoustic fingerprints, even if the [location] sensor readings are similar, we can distinguish that.”

Occasionally sampling the light around a phone using its camera provides another kind of extra signal of a person’s activity. “If you go from ambient light to natural light, that would say to us your context has changed,” says Hickl, and it should be possible for Saga to learn the difference between, say, the different areas of an office.

The end result of sampling light, sound, and pressure data will be Saga’s machine learning models being able to fill in more details of a user’s life, says Hickl. “[When] I go home today and spend 12 hours there, to Saga that looks like a wall of nothing,” he says, noting that Saga could use sound or light cues to infer when during that time at home he was, say, watching TV, playing with his kids, or eating dinner.

Andrew Campbell, who leads research into smartphone sensing at Dartmouth College, says that adding more detailed, automatic life-logging features is crucial for Saga or any similar app to have a widespread impact. “Automatic sensing relieves the user of the burden of inputting lots of data,” he says. “Automatic and continuous sensing apps that minimize user interaction are likely to win out.”

Campbell says that automatic logging coupled with machine learning should allow apps to learn more about users’ health and welfare, too. He recently started analyzing data from a trial in which 60 students used a life-logging app that Campbell developed called Biorhythm. It uses various data collection tricks, including listening for nearby voices to determine when a student is in a conversation. “We can see many interesting patterns related to class performance, personality, stress, sociability, and health,” says Campbell. “This could translate into any workplace performance situation, such as a startup, hospital, large company, or the home.”

Campbell’s project may shape how he runs his courses, but it doesn’t have to make money. ARO, funded by Microsoft cofounder Paul Allen, ultimately needs to make life-logging pay. Hickl says that he has already begun to rent out some of ARO’s technology to other companies that want to be able to identify their users’ location or activities. Aggregate data from Saga users should also be valuable, he says.

“Now we’re getting a critical mass of users in some areas and we’re able to do some trend-spotting,” he says. “The U.S. national soccer team was in Seattle, and we were able to see where activity was heating up around the city.” Hickl says the data from that event could help city authorities or businesses plan for future soccer events in Seattle or elsewhere. He adds that Saga could provide similar insights into many other otherwise invisible patterns of daily life.

Personal comment:

Or how to build up knowledge and minable data from low-end "sensors". In the end, how some trivial inputs from low-cost sensors can, combined with others, reveal deeper patterns in our everyday habits.

But who is ARO? Who founded it? Who are the "business angels" behind it and what are they up to? What exactly does the technology do? Where are its legal headquarters located (under which law)? Those are the first questions you should ask yourself before possibly giving your data to a private company... (I know, these are the usual suspects these days.) But the answers are pretty hard to find! The CEO is Mr Andy Hickl, based in Seattle, with 1096 followers on Twitter and a "Sorry, this page isn't available" on Facebook. You can start to dig from there and mine for him on Google...

-

We need some sort of effective Creative Commons equivalent for data, one that companies would actually respect. We also need open source equivalents of Facebook, Google, Dropbox, etc. (but also MS, Apple, etc.), located in countries that support these efforts through their laws and where these "Creative Commons" profiles and data would be implemented. Then, at least, we would have some choice.

In Switzerland, we have a term to describe how the landscape has been progressively used since the 1960s to build small individual or holiday houses: "mitage du territoire" ("urban sprawl" seems to be the equivalent in English, but "mitage" is related to "moths" to be precise, so rather a ""mothed" landscape" if I may say so, which says what it says). We recently had the opportunity to vote against it, with success. I believe that the same thing is now happening with the personal and/or public sphere, with our lives: it is sprawled or "mothed" by private interests.

So it is time for everybody to ask for the opportunity to "vote" (against it) and to have the choice between keeping ownership of your data, releasing it as public, or being paid for it (like a share in the company('s product))!

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.
