Monday, September 08. 2008

“Networked cities” session at LIFT Asia 2008

(Special fav session at LIFT Asia 2008 this morning since this topic is linked to my own research, my quick notes).

By Nicolas Nova

Adam Greenfield’s talk “The Long Here, the Big Now… and other tales of the networked city” was a follow-up to his “The City is Here for You to Use”. Adam’s approach here was “not technical talk but affective”: more about what it feels like to live in networked cities and less about the technologies that would support them. The central idea of ubicomp: a world in which all the objects and surfaces of everyday life are able to sense, process, receive, display, store, transmit and take physical action upon information. This is very common in Korea, where it’s called “ubiquitous” or just “u-”, as in u-Cheonggyecheon or New Songdo. However, this approach often starts from technology and not from human desire.

Adam’s more interested in what it really feels like to live your life in such a place, or how we can get a truer understanding of how people will experience the ubiquitous city. He claims that we can begin to get an idea by looking at the ways people use their mobile devices and other contemporary digital artifacts. Hence his job as Design Director at Nokia.

For example: a woman talking on a mobile phone while walking around a mall in Singapore, no longer responding to the architecture around her but having a sort of “schizogeographic” walk (as formulated by Mark Shepard). There is hence “no sovereignty of the physical”. Same with people in the Tokyo or Seoul metro: they’re physically there, but on the phone, and their commitment is in the virtual.

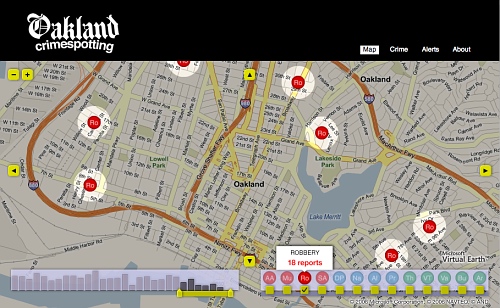

(Oakland Crimespotting by Stamen Design)

Adam thinks that what primarily conditions choice and action in the city is no longer physical but resides in the invisible and intangible overlay of networked information that enfolds it. The potentials of this are the following:

- The Long Here (named in conjunction with Brian Eno and Stewart Brand’s “Long Now”): layering a persistent and retrievable history of the things that are done and witnessed there over any place on Earth that can be specified with machine-readable coordinates. Examples of such layering are the Oakland Crimespotting map or the practice of geotagging pictures on Flickr.

- The Big Now: which is about making the total real-time option space of the city a present and tangible reality locally AND, globally, enhancing and deepening our sense of the world’s massive parallelism. For instance, with Twitter one can get a sense of what happens locally in parallel and also globally. You see the world as a parallel ongoing experiment. A more complex example is to use Twitter not only for people but also for objects; see for instance Tom Armitage’s Making bridges talk (Tower Bridge can twitter when it is opening and closing, captured through sensors and updated on Twitter). At the MIT SENSEable City Lab, there is also a project called “Talk Exchange” which depicts the connections between countries based on phone calls.

Of course, there are less happy consequences: these technologies can be used to exclude, which Adam calls “The Soft Wall”: networked mechanisms intended to actively deny, delay or degrade the free use of space. Defensible space is definitely part of it, and Adam points to Steven Flusty’s categories describing what such spaces become: “stealthy, slippery, crusty, prickly, jittery and foggy”. The result is simply differential permissioning without effective recourse: some people have the right to access certain places and others don’t. When a networked device does that, you have less recourse than when it’s a human with whom you can argue, talk, fight, etc. Effective recourse is something we take for granted that may disappear.

We’ll see profound new patterns of interactions in the city:

- Information about cities and patterns of their use, visualized in new ways. But this information can also be made available on mobile devices locally, on demand, and in a way that it can be acted upon.

- Transition from passive facades (such as huge urban displays) to addressable, scriptable and queryable surfaces. See, for example, the Galleria West by UNStudio and Arup Engineering or Pervasive Times Square (by Matt Worsnick and Evan Allen), which show what this may look like.

- A signature interaction style: information processing dissolving into behavior (simple behavior, no external token of transaction left)

The takeaway of this presentation is that networked cities will respond to the behavior of their residents and other users, in something like real time, underwriting the transition from browse urbanism to search urbanism. And Adam’s final word is that the future of networked cities is up to us, that is to say designers, consumers, and citizens.

Jef Huang’s “Interactive Cities” then built on Adam’s presentation by showing projects. To him, a fundamental design question is “How to fuse digital technologies into our cities to foster better communities?”. Jef wants to focus on how digital technology can augment physical architecture to do so. The premise is that the basic technology is mature, or has at least reached a certain stage of maturity: mobile technology, facade tech, LEDs, etc. What is lacking is the way these technologies have been applied in the city. For instance, if you take a walk in any major city, the most obvious appearances of ubiquitous tech are surveillance cameras and media facades (that bombard citizens with ads). You can compare them to physical spam, but there’s no spam filter: you can only go around it, close your eyes or wear sunglasses. You can compare the situation to the early days of the Web.

When designing networked cities, the point is to push the city along the same path the Web took: towards more empowering and more social platforms. Jef then showed some projects along that line: Listening Walls (Carpenter Center, Cambridge, USA), the now famous Swisshouse physical/virtual wall project, and Beijing Newscocoons (National Arts Museum of China, Beijing), which gives digital information such as news or blog posts a sense of physicality through inflatable cocoons. Jef also showed a project he did for Madrid’s bid for the 2012 Olympics: real-time, real-scale urban traffic nodes. Another intriguing project is “Seesaw connectivity”, which lets people learn a new language in an airport through a shared seesaw (one half in one airport and the other half in another).

The bottom line of Jef’s talk is that fusing digital technologies into our cities to foster better communities should go beyond media façades and surveillance cams, allow empowerment (from passive to co-creator), enable social, interactive, tactile dimensions. Of course, it leads to some issues such as the status of the architecture (public? private?) and sustainability questions.

The final presentation, by Soo-In Yang, called “Living City”, is about the fact that buildings have the capability to talk to one another. Sensors are now disappearing into the woodwork and all kinds of data are transferred instantly and wirelessly, so buildings will communicate information about their local conditions to a network of other buildings. His project is an ecology of facades where individual buildings collect data, share it with others in their “social network” and sometimes take “collective action”.

What he showed is a prototype facade that breathes in response to pollution, what he called “a full-scale building skin designed to open and close its gills in response to air quality”. The platform allows buildings to communicate with cities, with organizations, and with individuals about any topic related to data collected by sensors. He explained how this project enabled them to explore air as “public space and building facades as public space”.

Yang’s work is very interesting because they design proofs of concept: they don’t want to rely only on virtual renderings and abstract ideas, so they installed different sensors on buildings in NYC. They could then collect and share the data from each wireless sensor network, allowing any participating building (the Empire State Building and the Van Alen Institute building) to talk to others and take action in response. In a sense they use the “city as a research lab”.


Robot session @ LIFT Asia

Saturday morning at LIFT Asia 2008, quick notes.

By Nicolas Nova

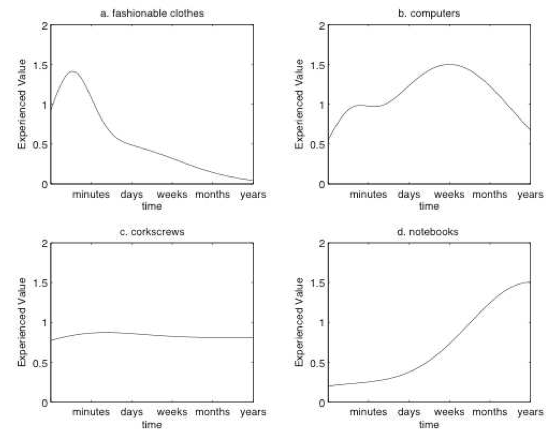

Frederic Kaplan began his talk by stating that the number of objects we have at home is huge (nearly 3500), and that each of them has a different “value profile”. He showed curves that capture the evolution of the experienced value of an object. See the curve below. A Roomba, for example, follows a curve such as a corkscrew (c), whereas an Aibo, an entertainment robot, follows more of a “notebook” curve, where value increases over time through the relationship with the owner(s).

Frederic stated that we know how to deal with the middle and end of the curve but not the beginning, namely how to create the first part of the robot-owner relationship, which is a crucial question in general for designers of robots and communicating objects. There are many reasons for that: in Western culture, it’s not easy to “raise” and talk to a robot; most people try but stop, and show it only when friends come to visit. So the robot ends up being a pretty expensive gadget.

After moving from Sony to the CRAFT laboratory, Frederic started moving from robots to interactive furniture and became interested in how objects can be “robotized” and in the fact that perhaps robots should not always look like robots. Since 1984, computers have not changed much (shapes and icons have been modified, but it’s still the same story). We changed the way we use computers (listening to music, looking at photos, getting the news: that was not what computers were intended for) but they did not change, so they thought it would be an idea to build a robotic computer as in the former Apple commercial. They therefore designed the Wizkid, an “expressive computer” which recognizes people and gestures, proposing a new sort of interactivity with people. To some extent, he showed how you can have expressivity without any anthropomorphic robot (unlike the demo we had of the Speecys robot).

Some use cases:

- in the living room, the Wizkid can act as a central interface to the media players: showing it a CD makes the robot play it; it can also take pictures autonomously and create a visual summary of the event that can be sent to guests afterwards. It’s like an automatic logging system that remembers and uses that information.

- in the kitchen: the Wizkid can help you cook and shop. When the owner prepares a recipe, the Wizkid helps them follow it step by step, tracking face and gestures (and also making some suggestions). It would be possible to show it an item and have the Wizkid add it to the shopping list.

- games are also an interesting field: you can play augmented reality games with the Wizkid, where you look at yourself on the screen and see yourself in imaginary worlds.

As a conclusion, Frederic said that most people think that robots will look like objects but he claims that everyday objects can become robots and the next generation of computer interfaces will be robotic. People used to go to the machine to interact but now interactivity comes to you. Computers used to live in their own world, now they live in yours.

Then Bruno Bonnell took the floor with “from robota to homo robotus: revisiting Asimov’s laws of robotic” and gave an insightful presentation about how robot designers should revisit the definition of “robots” (and therefore Asimov’s laws). To him, there is a vocabulary problem when it comes to robots.

In Czech, “robota” means “work”, and this has pervaded our representation of what a robot is, that is to say, a mechanical slave. Hence Asimov’s laws of robotics. These laws work well for military or industrial robots, but what about leisure robots such as the Aibo, the Roomba or iRobiQ? We had the same problem with the word “computer”. It’s only since World War II that the word “computer” (from Latin computare, “to reckon,” “sum up”) has been applied to machines. The Oxford English Dictionary still describes a computer as “a person employed to make calculations in an observatory, in surveying, etc.”. We moved that meaning onto machines, and computers successively took on new roles: systematic tasks, tools to support creation, an artistic medium and finally an amplifier of imagination. And it’s the same with animals: they used to be food, then a workforce, then companions and finally friends. In addition, we don’t talk just about “animals”: there are ponies, dogs, etc., within a classification: animals, mammals, equids, horses. It would be possible to classify computers according to the same scheme: order/family/genus/species.

So, what about robots? Are all these very different robots the same? Couldn’t we classify them too: a family of static robots, a family of moving robots, etc.? Then it’s no longer “robot, robot, robot” but “Robots, Mover, Humanoide, IrobiQ”. What is important here is that all the robots in the classification are recognized as having different features and characteristics. We start recognizing that they are not all the same species. By classifying (giving a name), you generate different applications and can improve the quality of the product that you are designing. Putting names on things helps create them. It allows us to go beyond the limits of the current vision of robots, and to reconcile the vision of an anthropomorphic robot (like the Speecys robot we saw first) with a different one (like Frederic’s Wizkid), since they are from two different “species”.
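To make the classification idea concrete, here is a minimal sketch of such a taxonomy as a small tree. The `Taxon` class and the “Static” family are purely illustrative assumptions (nothing of the sort was shown in the talk); only the “Robots, Mover, Humanoide, IrobiQ” chain comes from the presentation.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class Taxon:
    """One level of a hypothetical robot taxonomy (order, family, genus, species)."""
    name: str
    parent: Optional["Taxon"] = None

    def lineage(self) -> List[str]:
        """Return the names from the root of the taxonomy down to this taxon."""
        names = []
        node: Optional[Taxon] = self
        while node is not None:
            names.append(node.name)
            node = node.parent
        return list(reversed(names))


# The chain mentioned in the talk.
robots = Taxon("Robots")
mover = Taxon("Mover", robots)
humanoide = Taxon("Humanoide", mover)
irobiq = Taxon("IrobiQ", humanoide)

# An assumed sibling family, only to show that branches can diverge.
static = Taxon("Static", robots)

print(", ".join(irobiq.lineage()))
```

Two robots then belong to different “species” as soon as their lineages diverge, which is the point Bruno was making about the Speecys robot and the Wizkid.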

After this classification, we can go into the evolution, that is, how to branch out the future of robots. There could be the following path: the mechanical slave, the alternative to human actions, the substitute for human care, the companion and finally the amplifier of the human body and mind. Is it sci-fi or reality? Today or tomorrow? Is it technically possible? We don’t know, but what is important is to start today and look ahead.

An interesting path to do so is to move away from practical robots and investigate useless robots, as well as not being afraid of technical limitations (think about the guys who designed Pong at Atari). To the question “what does the robot do?”, the answer is simple: it creates an emotional bond with humans (that would be the recipe for a robot’s success). The important characteristics are therefore: fun, thrilling, etc. This is very close to what video games do: they create an emotional bond with the players because they are faithful to a reality, they are reliable, available, adaptable, and above all TRUSTFUL. In the same fashion, robots should be trustful. The bottom line is thus that we should forget Asimov’s laws and invent the Tao of robotics, where “gameplay” is the key to accepting robots as part of our reality.

Also, the funny part of the session was the first talk, Tomoaki Kasuga’s demonstration of his robot, whose “charm point” is the hip (or something else, as attested by the picture below), especially when dancing on stage. What Tomoaki showed is that expressivity (through dance, movement, the quality of the pieces) is very important for human-computer interaction.


Opening Search to Semantic Upstarts

Even if you have a great idea for a new search engine, it's far from easy to get it off the ground. For one thing, the best engineering talent resides at big-name companies. Even more significantly, according to some estimates, it costs hundreds of millions of dollars to buy and maintain the servers needed to index the Web in its entirety.

However, Yahoo recently released a resource that may offer hope to search innovators and entrepreneurs. Called Build Your Own Search Service (BOSS), it allows programmers to make use of Yahoo's index of the Web--billions of pages that are continually updated--thereby removing perhaps the biggest barrier to search innovation. By opening its index to thousands of independent programmers and entrepreneurs, Yahoo hopes that BOSS will kick-start projects that it lacks the time, money, and resources to invent itself. Prabhakar Raghavan, head of Yahoo Research and a consulting professor at Stanford University, says this might include better ways of searching videos or images, tools that use social networks to rank search results, or a semantic search engine that tries to understand the contents of Web pages, rather than just a collection of keywords and links.

"We're trying to break down the barriers to innovation," says Raghavan, although he admits that BOSS is far from an altruistic venture. If a new search-engine tool built using Yahoo's index becomes popular and potentially profitable, Yahoo reserves the right to place ads next to its results.

So far, no BOSS-powered site has become that successful. But a number of startups are beginning to build their services on top of BOSS, and Semantic Web companies, in particular, are benefiting from the platform. These companies are developing software to process concepts and meanings in order to better organize information on the Web.

For instance, Hakia, a company based in New York, began building a semantic search engine in 2004. Its algorithms use a database of concepts--people, places, objects, and more--to "understand" concepts in documents. Hakia also creates maps linking together different documents, such as Web pages, based on these concepts in order to understand their relevance to one another. Riza Berkan, CEO of the company, says that focusing on the meaning of pages, instead of simply on the links between them, could serve up more relevant search results and help people find content that they didn't even know they were looking for.

However, in order to do this well, Hakia needs to have access to as many Web pages as possible, and this is where BOSS fits in. For a given query, Hakia uses Yahoo's BOSS index to determine a set of relevant results. Hakia's software then determines whether these pages have already been analyzed by the company's semantic software. If they haven't, they will be processed, and the results will be stored on Hakia's servers. "We crawl the Web anyway," says Berkan. "But without Yahoo's index, we'd be behind on the sites that people are searching for today." And the more popular pages Hakia scans, the better its index will be.
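The flow described above (query an external index, then analyze only the pages not yet processed) can be sketched roughly as follows. This is an illustration under stated assumptions, not Hakia's code and not the BOSS API: `search_with_semantic_cache`, `fake_fetch`, `fake_analyze` and the example URLs are all hypothetical stand-ins.

```python
from typing import Callable, Dict, List


def search_with_semantic_cache(
    query: str,
    fetch_results: Callable[[str], List[str]],
    analyze_page: Callable[[str], dict],
    store: Dict[str, dict],
) -> List[dict]:
    """Look up a query in an external index and analyze only unseen pages."""
    analyses = []
    for url in fetch_results(query):
        if url not in store:
            # Page not yet processed: analyze it and keep the result locally.
            store[url] = analyze_page(url)
        analyses.append(store[url])
    return analyses


# Hypothetical stand-ins for the external index and the semantic analyzer.
FAKE_INDEX = {"lift asia": ["http://example.org/a", "http://example.org/b"]}
analyzed_urls: List[str] = []


def fake_fetch(query: str) -> List[str]:
    return FAKE_INDEX.get(query, [])


def fake_analyze(url: str) -> dict:
    analyzed_urls.append(url)  # record each analysis so repeats are visible
    return {"url": url, "concepts": []}


store: Dict[str, dict] = {}
first = search_with_semantic_cache("lift asia", fake_fetch, fake_analyze, store)
second = search_with_semantic_cache("lift asia", fake_fetch, fake_analyze, store)
```

In this sketch the second call reuses the stored analyses, which mirrors the idea that popular pages only need to be processed once.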

Another semantic startup, called Cluuz, from Ontario, Canada, is taking a slightly different approach. When a user searches with Cluuz, she will see Yahoo BOSS results, but they are reordered according to the startup's own semantic search technology. "When you do a query," says Alex Zivkovic, CTO of Cluuz, "we pass it on to Yahoo BOSS, and we get a list of results back . . . Then for each of those pages, the Cluuz engine analyzes the content, extracts entities--people, companies, phone numbers, and those sorts of things." These concepts, he explains, are then checked against the concepts found on other pages, and the concepts that arise most often are deemed most relevant.
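As a rough illustration of this kind of reordering, the sketch below scores each result by how often its entities also appear on the other result pages. It assumes entity extraction has already happened, and the function name, scoring rule and sample data are hypothetical, not Cluuz's actual algorithm.

```python
from collections import Counter
from typing import Dict, List, Set


def rerank_by_shared_entities(pages: Dict[str, Set[str]]) -> List[str]:
    """Order result URLs by how widely their entities recur across all results."""
    # Count in how many result pages each entity occurs.
    entity_counts = Counter(
        entity for entities in pages.values() for entity in entities
    )

    def score(url: str) -> int:
        # A page scores higher when its entities are common across the result set.
        return sum(entity_counts[entity] for entity in pages[url])

    # sorted() is stable, so ties keep the order given by the original index.
    return sorted(pages, key=score, reverse=True)


# Hypothetical results: URL -> entities extracted from that page.
results = {
    "http://example.org/a": {"Yahoo"},
    "http://example.org/b": {"Yahoo", "BOSS"},
    "http://example.org/c": {"Yahoo", "BOSS", "Ontario"},
}
reranked = rerank_by_shared_entities(results)
```

A real system would presumably weight entities by type or rarity; summing raw counts is only the simplest rule that matches the description.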

"Instead of looking at pages being linked based on the physical links, we're looking at them in terms of whether or not they are talking about the same concepts," says Zivkovic. This leads to a different user experience, he adds. For instance, terms relevant to a search query are pulled from the Web and highlighted on the right of the results page. A search for "Kate Greene" immediately pulls up my e-mail address at Technology Review, the university I attended, and a number of the people I've interviewed for past stories. Additionally, Cluuz provides other tools that allow the links and relationships between different semantic concepts to be visualized easily.

Even with the power of Yahoo's index behind a company, there's no guarantee that Hakia or Cluuz will be a success. But if they do take off, it could help Yahoo, which still lags way behind Google in terms of popularity, regain the edge. "The underlying philosophy [with BOSS] is, we're not going to be able to invent everything on our own," says Raghavan. "So we should facilitate innovation."

Copyright Technology Review 2008.


fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, research, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as an archive and as a set of references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.