Tuesday, August 12. 2008

Green plug prospects

By Glenn Fleishman

The unsightly plastic warts on our walls are sucking down terawatts of power globally each year. It’s time to put a stop to that needless energy drain by replacing dumb bricks with smart hubs -- putting a computerized stake through the hearts of our home electrical vampires.

Devices that are plugged in but not in use consume between 200 and 400 terawatt hours (TWh) per year, according to the International Energy Agency. Other research pegs the not-in-use drain from 5 to 25 percent of all residential energy used in the U.S., with numbers rising.

Research doesn’t divvy up between the consumption of DC-converting “wall warts” that provide juice to recharge batteries or convert power for various electronics, and the power sucked by the standby mode of televisions, microwaves and other appliances that are ostensibly “off.” But experts believe the adapters drain a significantly greater amount.

DC adapters waste power through excess heat in transforming AC to DC current, through continual charging (which shortens device lifespans), and through drawing power even when nothing is attached to their DC plugs, or when an attached device is powered down. Most DC converters are cheaply built, vary widely in efficiency even from the same maker, and have little of the prowess built into most other home electronics and computing peripherals.

With the rise in prices of oil and the volatility of electrical prices in the U.S., there are many different efforts underway to reduce standby power, as well as shift recharging power from daytime to off-peak hours when electrical demand is low.

One of the comprehensive solutions for some of the lowest-hanging culprits comes from California-based Green Plug. The company wants to give away a chunk of their technology to secure themselves a place in all power supplies sold. The tradeoff may be very worthwhile.

Building a DC Ecosystem

Green Plug’s goal is to kill off the adapters by creating a new standard for power recharging: a central and efficient DC conversion hub. They use standard USB connectors and intelligence about power supplies to reduce electrical usage while potentially extending the life of our hardware. Green Plug has developed their own high-power version of a USB cable that could recharge laptops and other more demanding hardware.

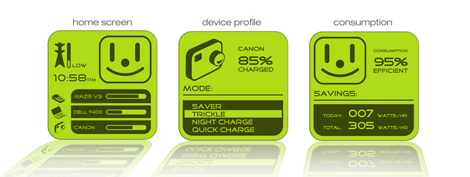

In Green Plug's model, a central hub with multiple USB ports handles anything that’s plugged into it. It checks for whether a given device has its smart technology built in, and whether the device is high- or low-power. Unless a higher charge is required, the hub uses only USB-compatible low power. In standby mode, it simply shuts off power, instead of allowing an unneeded trickle.
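The decision the hub makes for each port can be sketched in a few lines of Python. This is only an illustration of the behavior described above, not Green Plug's firmware: the `Device` fields and the `port_output_watts` function are hypothetical names, and the 2.5 W figure assumes the hub's "USB-compatible low power" means the USB 2.0 baseline of 5 V at 0.5 A.

```python
from dataclasses import dataclass

# Assumption: "USB-compatible low power" is the USB 2.0 baseline, 5 V x 0.5 A.
USB_LOW_POWER_WATTS = 2.5


@dataclass
class Device:
    """Hypothetical description of whatever is plugged into one hub port."""
    name: str
    is_smart: bool          # has the hub's smart technology built in
    requested_watts: float  # power a smart device asks for
    in_standby: bool        # device is powered down or fully charged


def port_output_watts(device: Device) -> float:
    """Decide how much power a hub port should deliver to one device."""
    if device.in_standby:
        # Shut power off entirely instead of allowing a trickle.
        return 0.0
    if device.is_smart and device.requested_watts > USB_LOW_POWER_WATTS:
        # Only a device that identifies itself gets the higher charge.
        return device.requested_watts
    # Everything else gets plain low-power USB.
    return USB_LOW_POWER_WATTS


laptop = Device("laptop", is_smart=True, requested_watts=60.0, in_standby=False)
old_phone = Device("old phone", is_smart=False, requested_watts=0.0, in_standby=False)
print(port_output_watts(laptop), port_output_watts(old_phone))
```

The point of the default branch is safety: a device that cannot say what it needs never receives more than ordinary USB power.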

According to company founder and CEO Frank P. Paniagua, Jr., the system works because it's a natural transition for consumers. “You don't have to change your behavior: you plug in, you save energy, you cut e-waste. Plus, it's safe: it detects what that client device is on the other side.”

A related benefit of Green Plug’s approach is that the charging system has smarts. Any hardware enabled with Green Plug’s chips can transmit information about its status—number of battery cycles, current charge, and other details.

Home users might use this information to see the power they’re consuming, to find out whether they can unplug a camera or phone and use it for the day, or to help with technical support when a device goes south. In offices, this information could ultimately be aggregated and used to control when power is used, as companies can often score off-peak prices from utilities, or spot faulty hardware.

It’s the enabled hardware that’s the limiting factor for adoption, however.

Will Manufacturers Buy In?

Green Plug has patented some of its technology, and opted to provide royalty-free licenses for its communications protocol (Greentalk) and its modification to USB connectors to allow high power. It makes its money by selling its own chips and licensing its technology to chipmakers to embed in their power-supply systems. Westinghouse is the first company to sign on to use Green Plug’s system in some new products.

Paniagua believes that they have a fighting chance because of regulatory and energy market changes. Manufacturers in some countries, including those in the European Union, must plan for a product’s lifecycle, and be able to accept and disassemble systems when they’ve expired. “If you manufacture it, you're going to have to take it back.” (Read more about producer take-back programs in the Worldchanging archives.)

Green Plug likes to emphasize the waste resulting from the adapters' short lifespans. The company estimates 3.2 billion external power supplies will be built worldwide in 2008 (with about a quarter coming to the U.S.), and that 434 million will be retired this year in just the U.S.—with only a small percentage heading into electronics recycling. Green Plug’s system—which, as their reference design demonstrates, uses water-soluble plastic and solder—easily comes apart at end-of-life, making it easy to harvest recyclable components.

But even without fees, Green Plug faces no easy task in challenging the industry to adopt a new norm. To begin the conversation, Green Plug founded a trade group, the Alliance for Universal Power Supplies, to bring together stakeholders like utilities, chip makers and manufacturers. California utility giant PG&E has hosted the first two alliance meetings.

The Future of Charging

Perhaps what’s most likely to help lead Green Plug’s ecosystem to success, however, is Paniagua’s focus on the broader charging market—including hybrid plug-in and electric cars. PG&E’s involvement with the power supply alliance stems from a broader goal: to combine smart-grid intelligence on a power system with smart-charging intelligence in devices like cars.

Utilities are looking for “a real-time secure protocol” that they can work with, he said, and Green Plug hopes theirs becomes the winner.

For instance, a plug-in hybrid or electric car with Greentalk inside could be scheduled through an owner’s computer to charge between 1 a.m. and 5 a.m., with the device figuring out the amount of current it needs to draw to charge within that period.
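The arithmetic behind that kind of scheduling is simple. As a rough sketch (the function name and the example numbers are made up for illustration, and charging losses are ignored), the current a charger must draw is the energy still needed divided by the supply voltage times the hours available:

```python
def required_current_amps(energy_needed_wh: float,
                          supply_volts: float,
                          window_hours: float) -> float:
    """Average current needed to deliver `energy_needed_wh` within the window.

    Idealized: assumes constant voltage and no charging losses.
    """
    if supply_volts <= 0 or window_hours <= 0:
        raise ValueError("voltage and window length must be positive")
    return energy_needed_wh / (supply_volts * window_hours)


# Hypothetical example: 10 kWh to replace, a 240 V outlet,
# and the four-hour window from 1 a.m. to 5 a.m.
amps = required_current_amps(10_000, 240, 4)
print(f"{amps:.1f} A")
```

A real charger would also have to respect the circuit's current limit and the battery's own charging profile, which this sketch leaves out.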

This could allow the kind of personally managed power shaving that utilities love: moving power usage off peak daytime hours into the night when power is cheap. Utilities that own plants also run their least-efficient, most-expensive, and most-polluting facilities last, so shaving the peak can keep those plants offline.

Ultimately, the inefficiency of almost every part of electronics power usage, from cords to adapters to power supply components, has to be addressed as the cost of raw materials increases, manufacturers are more obliged to use less and accept back more, and power prices climb.

Green Plug may not have the only answer, but they do have a viable one. Equipment using their technology should start appearing as soon as late this year.

Glenn Fleishman is a Seattle journalist who focuses on technology, and how to overcome it. Glenn writes regularly about wireless data at Wi-Fi Networking News, and Macs at TidBITS.

Image credit: Green Plug.

Maps, Information design & architecture

At the end of last month, I attended the International Symposium on Electronic Arts (ISEA), which was held in Singapore. Although the juried exhibition of art works didn’t involve that many works on the themes of The Mobile City, the ISEA seminar had quite a few sessions on media technology and the experience of place and space. Unfortunately, there were so many parallel sessions that I can’t pretend to come even close to an overall wrap-up of the conference. So I will just pinpoint some of the themes and works that I found interesting in a few posts in the next few days or so.

If there was one trend that struck me at ISEA, it was probably the idea of data visualization as a way of making abstract or invisible cultural processes more tangible. It wasn’t only the topic of some of the artist presentations, but also the core of Lev Manovich’s inspiring closing talk.

The idea is that now that we have more content and metadata than ever (Lev Manovich: this is not the era of ‘new media’ any longer, but rather the era of ‘more media’), interesting cultural forms are emerging from aggregating, analyzing and visualizing this data. Examples range from simple tag clouds that tell us what people are talking about on the web to visualization of traffic flows in the city and systems that monitor epidemic outbreaks. In business these mappings of aggregated data are called ‘dashboards’.

Lev Manovich pointed out that companies have had these dashboards for some time, but that the cultural sector is only now catching up. Right now, these mappings are becoming a new cultural form in themselves. Just look at websites like Visual Complexity, CultureVis, Infosthetics and Information Esthetics.

Of course locative and mobile media play an important role in this process. First of all, mobile phones are often used as tracking devices. Usage data from mobile phone operators can be used to analyze movement through a city, for instance. At the same time, these devices can function as dashboards to their users: mobile phone widgets can show them actual real-time analysis of certain social processes in the city. But it is not only about mobile devices: these visualizations can become a part of architecture as well, or be displayed on urban screens.

While all of this is interesting in itself, of course the more interesting question is how to go beyond mere ‘dashboarding’ and mapping of flows. How can we turn these displays of statistics into interesting pieces of public art? And how will these maps influence our experience of both the city and our social relationships?

Some examples of this trend that were shown or referred to at ISEA:

Arch-OS was presented by Mike Philips. It is a system that can collect all sorts of data from a building, ranging from movement in the building (derived by analyzing the images of CCTV cameras) and internet traffic on the LAN to climate data. These data streams can then be used to drive different architectural features, varying from visuals on LED screens to a giant wall-sized robot that moves through the open space of an atrium.

An ‘Operating System’ for contemporary architecture (Arch-OS, ’software for buildings’) has been developed to manifest the life of a building and provide artists, engineers and scientists with a unique environment for developing transdisciplinary work and new public art.

Paul Thomas showed his i-500 project, an art work that is part of Curtin University’s new Minerals and Chemistry Research and Education Buildings. It uses Arch-OS to analyze the work of the scientists in the building and translates their activity into a public art work that is an integral part of the building.

Working in close collaboration with Woods Bagot Architects, as part of the architects project team, the i-500 project team are creating a public artwork to be incorporated into the fabric of the complex with the intention to encourage building users to communicate and collaborate.

The i-500 will perform a vital and integral role in the development of scientific research in the fields of nanochemistry (atomic microscopy and computer modeling), applied chemistry, environmental science, hydrometallurgy, biotechnology, and forensic science. The artwork's potential is to represent the visualisation of quantitative scientific research as part of the architectural environment.

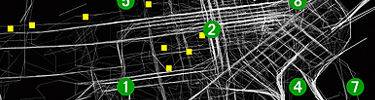

Chris Bowman and Teresa Leung are researching what looked like a ‘grammar of gps visualization systems’.

Cabspotting traces San Francisco’s taxi cabs as they travel throughout the Bay Area. The patterns traced by each cab create a living and always-changing map of city life. This map hints at economic, social, and cultural trends that are otherwise invisible. The Exploratorium has invited artists and researchers to use this information to reveal these “Invisible Dynamics.”

Cascade on Wheels is a visualization project that intends to express the quantity of cars we live with in big cities nowadays. The data set we worked on is the daily average of cars passing by streets, over a year: in this case, a section of the Madrid city center during 2006. The averages are grouped into four categories of vehicle: light vehicles, taxis, trucks, and buses.

The project aggregated data from cell phones (obtained using Telecom Italia’s innovative Lochness platform), buses and taxis in Rome to better understand urban dynamics in real time. By revealing the pulse of the city, the project aims to show how technology can help individuals make more informed decisions about their environment.

Thursday, August 07. 2008

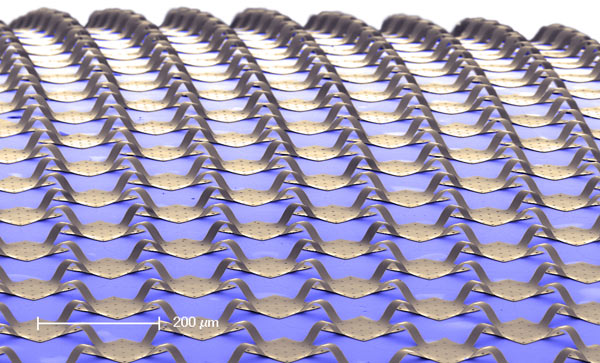

A Spherical Camera Sensor

A stretchable circuit allows researchers to make simple, high-quality camera sensors.

By Kate Greene

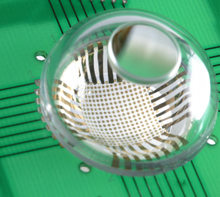

The eyes have it: This camera consists of a hemisphere-shaped array of photodetectors (white square with gold-colored dots) and a single lens atop a transparent globe. The curved shape of the photodetector array provides a wide field of view and high-quality images in a compact package. Credit: Beckman Institute, University of Illinois

Today's digital cameras are remarkable devices, but even the most advanced cameras lack the simplicity and quality of the human eye. Now, researchers at the University of Illinois at Urbana-Champaign have built a spherical camera that follows the form and function of a human eye by building a circuit onto a curved surface.

The curved sensor has properties that are found in eyes, such as a wide field of view, that can't be produced in digital cameras without a lot of complexity, says John Rogers, lead researcher on the project. "One of the most prominent [features of the human eye] is that the detector surface of the retina is not planar like the digital chip in a camera," he says. "The consequence of that is [that] the optics are well suited to forming high-quality images even with simple imaging elements, such as the single lens of the cornea."

Electronic devices have been, for the most part, built on rigid, flat chips. But over the past decade, engineers have moved beyond stiff chips and built circuits on bendable sheets. More recently, researchers have gone a step beyond simple bendable electronics and put high-quality silicon circuits on stretchable, rubberlike surfaces. The advantage of a stretchable circuit, says Rogers, is that it can conform over curvy surfaces, whereas bendable devices can't.

The key to the spherical camera is a sensor array that can withstand a curve of about 50 percent of its original shape without breaking, allowing it to be removed from the stiff wafer on which it was originally fabricated and transferred to a rubberlike surface. "Doing that requires more than just making the detector flexible," says Rogers. "You can't just wrap a sphere with a sheet of paper. You need stretchability in order to do a geometry transformation."

The array, which consists of a collection of tiny squares of silicon photodetectors connected by thin ribbons of polymer and metal, is initially fabricated on a silicon wafer. It is then removed from the wafer with a chemical process and transferred to a piece of rubberlike material that was previously formed into a hemisphere shape. At the time of transfer, the rubber hemisphere is stretched out flat. Once the array has been successfully adhered to the rubber, the hemisphere is relaxed into its natural curved shape.

Because the ribbons that connect the tiny islands of silicon are so thin, they are able to bend easily without breaking, Rogers says. If two of the silicon squares are pressed closer together, the ribbons buckle, forming a bridge. "They can accommodate strain without inducing any stretching in the silicon," he says.

To complete the camera, the sensor array is connected to a circuit board that connects to a computer that controls the camera. The array is capped with a globelike cover fitted with a lens. In this setup, the sensor array mimics the retina of a human eye while the lens mimics the cornea.

Stretchable mesh: The square silicon photodetectors, connected by thin ribbons of metal and polymer, are mounted on a hemisphere-shaped rubber surface. The entire device is able to conform to any curvilinear shape due to the flexibility of the ribbons that connect the silicon islands. Credit: Beckman Institute, University of Illinois

"This technology heralds the advent of a new class of imaging devices with wide-angle fields of view, low distortion, and compact size," says Takao Someya, a professor of engineering at the University of Tokyo, who was not involved in the research. "I believe this work is a real breakthrough in the field of stretchable electronics."

Rogers isn't the first to use the concept of a stretchable electronic mesh, but this work distinguishes itself in that it is not constrained to stretching in limited directions, like other stretchable electronic meshes. And importantly, his is the first stretchable mesh to be implemented in an artificial eye camera.

The camera's resolution is 256 pixels. At the moment, it's difficult to improve resolution due to the limitations of the fabrication facilities at the University of Illinois, says Rogers. "At some level, it's a little frustrating because you have this neat electronic eye and everything's pixelated," he says. But his team has sidestepped the problem by taking another cue from biology. The human eye dithers from side to side, constantly capturing snippets of images; the brain pieces the snippets together to form a complete picture. In the same way, Rogers's team runs a computer program that makes the images crisper by interpolating multiple images taken from different angles.
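To give a feel for how several coarse captures can add up to a sharper picture, here is a deliberately idealized sketch in Python. It is not the program Rogers's team runs, which the article does not describe in detail; it assumes each capture samples the scene at an exactly known offset, and the `capture` and `combine` functions are hypothetical names. Four low-resolution frames, each shifted by one fine-grid pixel, are interleaved back onto a grid with twice the resolution in each direction.

```python
def capture(scene, dy, dx, step=2):
    """Sample every `step`-th pixel of `scene`, starting at offset (dy, dx).

    Stands in for one coarse frame taken after a tiny shift of the sensor.
    """
    return [row[dx::step] for row in scene[dy::step]]


def combine(frames, step=2):
    """Interleave frames, keyed by their (dy, dx) offset, onto a fine grid."""
    coarse_rows = len(frames[(0, 0)])
    coarse_cols = len(frames[(0, 0)][0])
    fine = [[0] * (coarse_cols * step) for _ in range(coarse_rows * step)]
    for (dy, dx), frame in frames.items():
        for y, row in enumerate(frame):
            for x, value in enumerate(row):
                # Each coarse pixel lands at its true position on the fine grid.
                fine[y * step + dy][x * step + dx] = value
    return fine


# A 4x4 "scene" viewed through a 2x2 sensor at four different offsets.
scene = [[y * 4 + x for x in range(4)] for y in range(4)]
frames = {(dy, dx): capture(scene, dy, dx)
          for dy in range(2) for dx in range(2)}
restored = combine(frames)
print(restored == scene)
```

Real captures overlap imperfectly and carry noise, so practical methods estimate the shifts and interpolate rather than slotting pixels in exactly as this sketch does.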

The most immediate application for these eyeball cameras, says Rogers, is most likely with the military. The simple, compact design could be used in imaging technology in the field, he suggests. And while the concept of an electronic eye conjures up images of eye implants, Rogers says that at this time he is not collaborating with other researchers to make these devices biocompatible. However, he's not ruling out the possibility in the future.

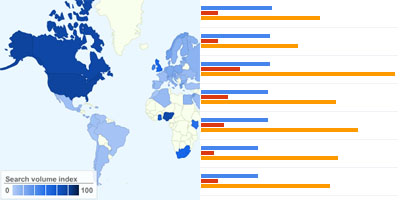

Google insights for search

A new online service by Google that allows users to compare search volume patterns across specific regions, categories and time frames.

[link: google.com]

Wednesday, August 06. 2008

Obscura Digital VisionAire 3D multitouch hologram

Obscura Digital, who specialise in interactive media, have released a video demonstrating their new VisionAire “multitouch” projection system. The company themselves admit that it’s not exactly true multitouch, since there’s actually no contact involved; in fact, you gesture around in mid-air to control different windows and other objects. Multiple people can use the system at the same time.

There isn’t much in the way of technical details; the Obscura blog describes it as “our standard multi-touch framework [integrated] with the Musion system we have in house”. Musion is a company that produces high-definition, freeform 3D holographic projections for live stages.

No word on whether this system will be made commercially available.

fabric | rblg

This blog is the survey website of fabric | ch - studio for architecture, interaction and research.

We curate and reblog articles, researches, writings, exhibitions and projects that we notice and find interesting during our everyday practice and readings.

Most articles concern the intertwined fields of architecture, territory, art, interaction design, thinking and science. From time to time, we also publish documentation about our own work and research, immersed among these related resources and inspirations.

This website is used by fabric | ch as archive, references and resources. It is shared with all those interested in the same topics as we are, in the hope that they will also find valuable references and content in it.